“Is this going to be a standup fight, sir, or another bug hunt?” – PFC Hudson, Aliens

Here’s the tl;dr: A pair of separate but related hardware bugs afflict almost all ARM and x86 processors made over the past ten years, and sometimes far longer. Both bugs have lain dormant for all that time, meaning nearly every PC, server, cell phone, and cloud service you can name is affected, and has been for quite a while. Because it’s a silicon problem, there’s no easy hardware patch, but software workarounds have already begun rolling out. The bugs are obscure – obviously – and are difficult to exploit, but in the worst-case scenario they could be used to expose sensitive data like passwords.

Called Meltdown and Spectre, the bugs affect MacOS, Windows, Linux… just about anything. They don’t exploit any software or operating system weakness, because they’re not software bugs. They’re both hardware issues that work across operating systems, brands, microarchitectures, vendors, and dates.

On the bright side, neither bug seems to make it any easier for an attacker to inject malware into your machines. Rather, they both provide a unique new communication channel for malicious code that’s already there. In other words, it’s not a new way in; it’s a new way to pass sensitive information out.

The Basics

The immediate fallout from yesterday’s disclosure of the processors’ hardware flaws was equal parts uninformed panic (“Every computer in the world is insecure!”), investor nervousness (Intel shares dipped on the news before mostly recovering the next day), and technical head-scratching (“How does this work again?”). Many details of the bugs aren’t yet publicly available, for the simple reason that they weren’t supposed to be revealed until after workarounds had been devised and deployed. But as a side-effect of the group effort to implement several concurrent patches, a bit of the discussion leaked out and made itself public.

What’s remarkable is that these bugs have apparently been around for a couple of decades, in some cases since the mid-1990s, undetected and undiscovered until the middle of last year. That’s when several separate research teams in Austria, California, and elsewhere discovered them almost simultaneously.

Craig Young, security researcher at Tripwire, says, “Meltdown could have devastating consequences for cloud providers, as Google researchers were able to demonstrate reading of host memory from a KVM guest OS. For a cloud service provider, this could enable attacks between customers.”

All chips have bugs, but unlike the more common and minor silicon errata, neither bug is simply a localized circuit-design defect. Instead, they’re both based on a fundamental architectural weakness of high-performance processors, which is why they appear in both x86 and ARM processors, why AMD’s chips are exposed (to Spectre, at least) along with Intel’s, and why the bugs afflict so many successive generations of CPUs designed by different engineering teams at different companies in different parts of the world over so many years. It would be difficult to find any other commonality among so many independent chip designs.

So, what’s the underlying problem at work here? How did so many good CPU designers, all working independently, unwittingly perpetuate this fault? How bad is the risk? And how do we fix, or at least mitigate, its effects? As you might expect, it’s a pretty obscure bug, and one that depends on out-of-order and speculative execution.

Theory of Operation

Let’s start at the beginning. All processors, from the lowliest MCUs to the most explosive multicore server processors, have a pipeline. The simplest pipelines are just three stages long: fetch, decode, execute. Faster processors have longer pipelines with perhaps a dozen or more stages, and superscalar processors have multiple pipelines running in parallel. (Those pipelines may or may not be identical.) The purpose of the pipeline is to overlap the work of one stage (fetching an instruction, for example) with the operation of the next stage (executing the previously fetched instruction). Pipelines are wonderful things, and the longer they get, the more overlap you have and the more operations you can perform concurrently. As a rule of thumb, the longer the pipeline, the less work each stage has to do, and so the faster the processor can be clocked.
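As a rough illustration of that overlap, the sketch below counts clock cycles for a run of straight-line instructions with and without pipelining. It assumes an idealized machine in which every stage takes exactly one clock and nothing ever stalls; the function names are made up for this example.

```python
def cycles_unpipelined(n_instructions: int, n_stages: int) -> int:
    """Every instruction walks through all stages before the next one starts."""
    return n_instructions * n_stages


def cycles_pipelined(n_instructions: int, n_stages: int) -> int:
    """The first instruction needs n_stages clocks to fill the pipe;
    after that, one instruction completes per clock (idealized, no stalls)."""
    if n_instructions == 0:
        return 0
    return n_stages + (n_instructions - 1)


if __name__ == "__main__":
    for stages in (3, 12):
        print(stages, cycles_unpipelined(1000, stages), cycles_pipelined(1000, stages))
```

Counted in clocks alone, the 3-stage and 12-stage pipes finish 1,000 instructions in nearly the same number of cycles (1,002 versus 1,011). The longer pipe comes out ahead only because its shorter stages allow a faster clock.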

But there’s also a downside. Pipelines work great as long as your code runs in a straight line, with no branches. The CPU simply keeps fetching the next instruction after the current one. But all programs have branches, and these stop the CPU in its tracks and prevent it from fetching the next sequential instruction until it knows which way to go next. Should it fetch and execute the next instruction after the branch (falling through the branch) or should it fetch from the target of the branch (the taken branch)? That hesitation introduces a “pipeline bubble” that wastes performance. The chip stalls awaiting the outcome of the branch when it could be doing useful work.

There are at least two ways to improve this situation. One is branch prediction and the other is speculative execution. The two work together to reduce pipeline bubbles and recover some of that lost performance. Branch prediction is a science unto itself and doesn’t affect us here. Spectre and Meltdown both rely on unintended side effects of speculative execution.

Speculative execution is just that: the chip guesses which way the branch will go and starts fetching and executing instructions from where it thinks the program will flow before it really knows for sure. If the chip is right, it’s saved a bunch of time by eliminating most or all of the pipeline bubble, almost as if the branch had never happened. (Indeed, correctly predicted branches often execute in zero clock cycles.) That’s all fine and dandy. But what if the chip guesses wrong?
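To put some hypothetical numbers on the trade-off, here is a minimal cost model. It assumes a baseline of one instruction per clock, a fixed bubble for every branch when the chip simply waits, and the same fixed penalty for every wrong guess when it speculates. The figures in the example are invented for illustration, not measurements of any real chip.

```python
def cycles_stalling(n_instructions: int, n_branches: int, stall_cycles: int) -> int:
    """No speculation: every branch holds up the pipe until it resolves."""
    return n_instructions + n_branches * stall_cycles


def cycles_speculating(n_instructions: int, n_mispredicted: int, flush_cycles: int) -> int:
    """Speculation: right guesses cost nothing extra; each wrong guess
    pays flush_cycles to throw away the bad work and refill the pipe."""
    return n_instructions + n_mispredicted * flush_cycles


if __name__ == "__main__":
    # 1,000 instructions, 200 of them branches, 10-clock bubble (all assumed).
    print(cycles_stalling(1000, 200, 10))
    # Same code, but only 10 of the 200 branches are guessed wrong.
    print(cycles_speculating(1000, 10, 10))
```

Under these assumptions the stalling machine needs 3,000 clocks and the speculating one needs 1,100, which is why designers accept the cost of occasionally guessing wrong.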

If the branch is predicted incorrectly, the CPU will barrel down the wrong execution path and start executing instructions it shouldn’t. Once it discovers its mistake, it needs to somehow undo all the effects of the incorrect path. It’s not enough to just abandon the wrong path; it needs to undo the collateral damage. If variables have been updated, they need to be put back to the way they were before the branch. If registers have been changed, they need to be reversed. If memory has been updated, those updates need to be rewound back in time, and so on. Speculative execution adds a lot of hardware to the CPU design in the form of reservation stations, register-renaming hardware, branch-prediction caches, and much more. It’s expensive in hardware terms, but vital to improve the performance of high-end processors. Complex as it is, speculative execution has been the norm for about 20 years. It’s not considered exotic anymore; it’s just the price of doing business in the GHz era.

Naturally, unwinding all that work is complicated, and it gets even more complicated the longer the CPU’s pipeline is. For a processor with a very long pipeline, the CPU might have gone pretty far down the wrong path before it realized its mistake. That’s a lot of registers and variables to undo. The key to all of this is to unwind the effects in a way that doesn’t screw up your software.

In processor-speak, undoing the effects of speculative execution must be “architecturally invisible.” That is, it needs to be invisible to software – any software. Neither applications nor operating systems should be able to tell what’s going on. Your code doesn’t know – and doesn’t want to know – whether the CPU briefly went down the wrong execution path, even though it’s probably doing so a million times per second. Every processor on the market, including those with the Spectre and Meltdown bugs, succeeds at this task. Speculative execution really is architecturally invisible. But it’s not completely invisible. And therein lies the problem. It’s Part One of the basis for both Spectre and Meltdown.

What Went Wrong

Any read or write operation to or from memory goes through the processor’s on-chip cache(s). If those memory transactions turn out to have been executed in error because of a mispredicted branch and the subsequent speculative execution of the wrong instruction stream, the effects of the memory updates need to be unwound, but the effects on the cache don’t. Normally, that’s a trivial issue. So what if my cache was tickled by a few stray instructions? It doesn’t hurt anything. Caches are probabilistic anyway. There’s never a guarantee of what’s going to be in the cache, so it doesn’t matter if a couple of cache lines get tweaked now and then by the ephemeral transit of unwanted data. Right?
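The asymmetry can be captured in a toy model. The class below is a deliberately simplified sketch, not a description of any real microarchitecture: registers are restored from a snapshot after a bad guess, while the set of cached addresses is left exactly as the stray loads left it. All names are hypothetical.

```python
class ToyCore:
    """Toy model: registers are rolled back after a bad guess, the cache is not."""

    def __init__(self, memory):
        self.memory = dict(memory)           # address -> value
        self.registers = {"r0": 0, "r1": 0}  # architectural state
        self.cached = set()                  # addresses currently in the cache

    def load(self, register, address):
        self.cached.add(address)             # side effect on the cache
        self.registers[register] = self.memory[address]

    def speculate(self, loads, guess_was_right):
        checkpoint = dict(self.registers)    # snapshot before guessing
        for register, address in loads:
            self.load(register, address)
        if not guess_was_right:
            self.registers = checkpoint      # undo the architectural effects...
            # ...but self.cached keeps whatever the stray loads pulled in


if __name__ == "__main__":
    core = ToyCore({0x100: 7})
    core.speculate([("r0", 0x100)], guess_was_right=False)
    print(core.registers, core.cached)
```

After the mispredicted run, `r0` is back to zero, yet address `0x100` is still marked as cached. That leftover footprint is the only trace of the wrong path.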

Caches, like speculative execution, are architecturally invisible. You can’t tell they’re working. But also like speculative execution, their operation isn’t completely invisible. And that lays the groundwork for Part Two of our bug hunt.

Even though the cache effects of speculative execution don’t alter performance in any meaningful way, they do open a teeny window into what the processor was just doing, including what other programs, tasks, or threads were doing. And this can be exploited to see data you shouldn’t see. And that, in turn, can be the lever that cracks open a much bigger window into your operating system’s privileged memory space.

There are now well-known hacks to tell if a given memory location is in the cache or not. Basically, it comes down to timing. Cached memory addresses respond faster than uncached addresses (that’s the whole point), so if you have an accurate timer, you can tell whether address 0x543FC042 is cached or not. You can even test it with JavaScript. That doesn’t sound like much of a security hole by itself, or even a very interesting party trick, but remarkably enough, there are proven ways to leverage that information into real data. The Flush+Reload attack, for example, is very good at revealing encryption keys using nothing but cache timing. This technique, combined with other tools, leads to vital information leaks.
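The timing test itself is simple enough to sketch. Real measurements are noisy and hardware-specific, so the example below uses a simulated cache with made-up latencies (4 clocks for a hit, 200 for a miss) and an assumed 64-byte line. Only the thresholding logic is the point; none of the names correspond to a real API.

```python
LINE_SIZE = 64       # bytes per cache line (assumed)
HIT_LATENCY = 4      # invented cycle counts, chosen only to be far apart
MISS_LATENCY = 200
THRESHOLD = 100      # anything faster than this is treated as a hit


class SimulatedCache:
    def __init__(self):
        self._lines = set()

    def flush(self, address: int) -> None:
        """Evict the line holding this address."""
        self._lines.discard(address // LINE_SIZE)

    def timed_access(self, address: int) -> int:
        """Return the simulated latency of touching this address."""
        line = address // LINE_SIZE
        latency = HIT_LATENCY if line in self._lines else MISS_LATENCY
        self._lines.add(line)  # any access, hit or miss, leaves the line cached
        return latency


def was_cached(cache: SimulatedCache, address: int) -> bool:
    """Classify an address as cached or not from its access time alone."""
    return cache.timed_access(address) < THRESHOLD
```

Note that the probe is destructive: checking an address pulls it into the cache, so a prober has to flush the line before the next round. That flush-then-reload rhythm is where the attack mentioned above gets its name.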

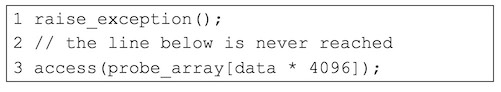

If we combine the (supposedly invisible) effects of speculative execution with the (supposedly invisible) effects of caching, we can trick the processor into revealing more than it should. The Austrian team demonstrated this with a two-line program that deliberately causes a fault, followed by an instruction that accesses external memory.

It doesn’t matter what the specific “exception()” is, as long as it causes the processor to stop executing and jump to system-level code, such as an interrupt handler. The point is that the processor will almost certainly start executing the line after it. Even though we know the second line will never be executed under normal circumstances, the processor doesn’t know that and starts executing it speculatively. And because that line references an array, the speculative execution will trigger an external memory access, along with its resulting cache update.

The value of 4096 in this example is not arbitrary. It corresponds with the processor’s MMU page mapping (most processors, and all of those affected, have 4KB pages). Incrementing the value of the variable data by 1 moves the external access by 4096 bytes, or one MMU page. If you repeat this two-line code fragment while walking through values of data, you can tell which pages of memory are cached and which are not. Again, not dangerous by itself, but it becomes half of the potential attack.
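The arithmetic behind the 4096 stride can be sketched as follows, assuming the fragment has the shape described above: an access into an array at index `data * 4096`. The helper names and the `is_cached` callback are hypothetical stand-ins; in a real attack the callback would be a timing probe.

```python
PAGE_SIZE = 4096  # 4KB MMU pages, as in the text


def probe_offset(data: int) -> int:
    """Byte offset touched for a given value of data: one page per value."""
    return data * PAGE_SIZE


def recover_data(is_cached, n_values: int = 256):
    """Walk the candidate values and report the first whose page is cached.

    is_cached is any callable taking a byte offset and returning True/False.
    Returns None if no page looks cached.
    """
    for candidate in range(n_values):
        if is_cached(probe_offset(candidate)):
            return candidate
    return None


if __name__ == "__main__":
    touched = {probe_offset(0x41)}           # pretend the access used data = 0x41
    print(recover_data(touched.__contains__))
```

Because each possible value lands on its own page, finding the one cached page among 256 is enough to recover a full byte.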

The Problem

What if the memory array is off-limits or inaccessible to the program? Specifically, what if it’s asking for privileged operating-system data? Naturally, the processor would prevent such an illegal access. This is handled by the processor’s MMU hardware, usually by checking a single user/kernel (or user/supervisor) bit in the MMU page tables. Privileged address range? Sorry, you can’t see that data. Access denied.
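In outline, the privilege test looks something like the sketch below. The page table here is a plain dictionary keyed by page number, and the flag positions borrow x86’s present bit (bit 0) and user/supervisor bit (bit 2). Everything else is a simplification for illustration.

```python
PAGE_SIZE = 4096
PTE_PRESENT = 1 << 0  # page is mapped
PTE_USER = 1 << 2     # page may be touched from user mode


def access_allowed(page_table: dict, address: int, kernel_mode: bool) -> bool:
    """Return True if the access passes the MMU's privilege check."""
    entry = page_table.get(address // PAGE_SIZE, 0)
    if not entry & PTE_PRESENT:
        return False                      # unmapped page: fault either way
    return kernel_mode or bool(entry & PTE_USER)


if __name__ == "__main__":
    table = {
        0: PTE_PRESENT | PTE_USER,  # ordinary user page
        1: PTE_PRESENT,             # kernel-only page
    }
    print(access_allowed(table, 0x0010, kernel_mode=False))  # user page
    print(access_allowed(table, 0x1000, kernel_mode=False))  # kernel page
```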

Except that the chip will access the data anyway – speculatively. It discards the result, but only after the actual, physical access to memory has already happened. Plus, that data is copied into the cache, because neither the execution unit nor the cache hardware waits for the outcome of the MMU’s privilege test. That would take too long. Better to perform the operation speculatively and assume it’s legal, and then discard the results afterwards.

If your processor has a long pipeline, it will likely execute even more instructions beyond just the initial (and illegal) memory access. Maybe it performs an add, subtract, shift, or multiply on the contents of that memory location. Maybe there’s even time to squeeze in a few more operations before the CPU starts processing the initial exception and discards all of its speculative work. That small time window gives you a chance to use your ill-gotten result as an address pointer to poke at a different external memory address. And this second access will affect the cache in ways that other programs can monitor. Are you starting to see the weakness?

Meltdown employs a two-part process, sort of like two gang members teaming up for the big bank heist. One part relies on speculative execution to attempt to access protected memory, and then quickly signals its accomplice by means of affecting a cache line. The other part uses cache-timing variations to read the signal. The short timing of a cached line signals a 1, while the longer timing of an uncached line is a 0. (Or vice versa; it doesn’t matter.) By walking through the system’s entire memory map, the leader of the team can determine which physical addresses are valid (i.e., present in memory), as well as what’s in each byte location. That information is then transferred, somewhat laboriously, to the awaiting coconspirator program.
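The signaling half of that partnership can be modeled without any speculation at all. In the simulation below, the sender is simply handed a byte (standing in for whatever the speculative read would have obtained) and touches one cache line per 1 bit. The receiver reconstructs the byte from hit and miss timings. The latencies, the shared-cache class, and the one-line-per-bit encoding are all assumptions made for the sketch, and unlike real hardware, reading a latency here does not itself fill the line.

```python
HIT_LATENCY, MISS_LATENCY, THRESHOLD = 4, 200, 100  # invented cycle counts


class SharedCache:
    """Simulated cache visible to both sender and receiver."""

    def __init__(self):
        self._lines = set()

    def flush_all(self) -> None:
        self._lines.clear()

    def touch(self, line: int) -> None:
        self._lines.add(line)

    def latency(self, line: int) -> int:
        return HIT_LATENCY if line in self._lines else MISS_LATENCY


def send_byte(cache: SharedCache, value: int) -> None:
    """Sender: start from an empty cache, then touch line i for each 1 bit."""
    cache.flush_all()
    for bit in range(8):
        if (value >> bit) & 1:
            cache.touch(bit)


def receive_byte(cache: SharedCache) -> int:
    """Receiver: a fast line reads as 1, a slow line as 0."""
    value = 0
    for bit in range(8):
        if cache.latency(bit) < THRESHOLD:
            value |= 1 << bit
    return value


def leak(secret: bytes) -> bytes:
    """Pass a byte string through the simulated cache, one byte at a time."""
    cache = SharedCache()
    received = bytearray()
    for byte in secret:
        send_byte(cache, byte)
        received.append(receive_byte(cache))
    return bytes(received)
```

Calling `leak(b"hunter2")` returns the same bytes, even though sender and receiver never exchange data directly; the only thing they share is which lines happen to be fast.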

Taken to extremes, you could dump the entire contents of the system’s physical memory, one bit at a time, or perhaps one byte at a time. It would be very slow and tedious, but it would also completely bypass the chip’s hardwired security. Eventually you’d uncover kernel code, driver code, and other off-limits data, possibly including encryption keys or passwords. Whatever’s stored in memory could potentially be exposed.

Note that Meltdown and Spectre don’t really provide a new way to inject malware into a system. They assume that you’ve somehow already got your malicious code in place. Both are really more like a new and unusual communications channel between privileged and nonprivileged memory spaces that can be used to sidestep hardware security. They’re subtle and tricky to exploit, but the vulnerability is also very widespread.

Spectre works similarly to Meltdown, except that it uses a deliberately mispredicted branch, rather than an exception, to trigger speculative execution of code that will eventually reveal secret data. It also has some subtleties that make it harder to patch than Meltdown. The Spectre bug has been proven to exist on Intel, AMD, and some ARM processors, while Meltdown affects Intel chips only (so far). Although they both rely on the same weakness of speculative execution combined with cache hacking, they’re just different enough to justify different names.

The Fix

The discoverers of Meltdown and Spectre do a good job of suggesting fixes and workarounds, some more workable than others. Until the affected CPUs can be redesigned, each operating system will need its own software patch. Many are already available. Because these flaws were discovered around the middle of last year, developers have secretly been working on fixes for several months. The idea was to have the various OS-specific patches in place and deployed before the existence of Meltdown and Spectre became well known to the public. This is standard procedure in the security community. Some details leaked prematurely, however, so here we are scrambling for answers and partial fixes.

Any software patch for a hardware flaw is likely to have some performance implications – software is slower than hardware! – but the practical effect seems to be minimal. Nobody wants to see their chip slowed down, but some initial reports of 20% to 30% speed decreases seem to be overblown. The actual slowdown will be very dependent on chip architecture, cache size, clock speed, memory latency, operating system, and chip-specific MMU implementation. So far, it looks like most CPUs running compute-intensive applications might see a hit of a few percent, nothing more. In short, you lose about as much performance as running an antivirus program. Or Internet Explorer.

There’s not going to be a hardware fix for this. It’s too late for that. The vulnerability is baked into almost every high-end microprocessor made in the last decade or so. Pulling out the afflicted chip and replacing it with a new one isn’t really an option, since today’s chips are every bit as exposed as last year’s. It will be two years before we see “fixed” processors from Intel, AMD, and ARM’s many licensees.

Speaking of antivirus programs, they probably won’t be much defense against this, at least not in the general sense. There’s no particular “signature” that gives these hacks away; no obvious (or even subtle) sign of malfeasance. It’s just normal-looking code, and not much of it. Over time, specific exploits might be blacklisted and blocked, but that’s post facto. Preventing new infections promises to be very challenging. Happy New Year.
