I love being me, which is a good thing when you come to think about it, because I don’t think the alternative would be much fun. In addition to being a trend-setter and leader of fashion, one of the things I’ve been blessed with is a good imagination, but—as we will see—a good imagination can be a double-edged sword.

My poor old noggin is currently coping with conflicting considerations concerning generative artificial intelligence, or generative AI, which refers to an AI capable of generating text, images, or other media in response to prompts. In this case, the AI models learn the patterns and structure of their input training data, after which they can generate new data that has similar characteristics.

In the not-so-distant future, we are going to talk about tools like ChatGPT (I can only imagine your surprise), but first, let’s tarry for a moment to remind ourselves just how fast things are moving in AI space (where no one can hear you scream… at least, not for long if M3GAN has anything to say about it).

As we’ve previously discussed, people have been dipping their toes into the AI waters since the Dartmouth workshop in 1956. One early form of AI came in the form of expert systems, which were formally introduced circa 1965 and which used knowledge- and rules-based approaches to provide problem-solving capabilities. There was a lot of excitement around these systems all the way through to the 1990s, but they never really delivered the “oomph” we were all looking for.

To be honest, although (circa 2010) I was vaguely aware that work on AI was advancing in academia, at that time I had little expectation that it would make its presence felt in real-world applications while I still maintained a corporeal presence on this plane of existence. All I can say is that I was young (well, younger) and more foolish in those days of yore (suffice it to say that 2010 seems like a lifetime away now).

Something else I’ve mentioned in previous columns is that there was no mention of artificial intelligence or machine learning (ML) whatsoever in the 2014 edition of the Gartner hype cycle report. Just one year later, the 2015 hype cycle showed machine learning as having already crested the “Peak of Inflated Expectations.”

Even in the early days (by which I mean no more than ten years ago at the time of this writing), people with far too much free time on their hands started to use AI to do things I would never have thought of doing in 1,000 years. In 2016, for example, researchers from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) recorded around 1,000 video snippets showing various objects being tapped, hit, prodded, and scraped with a drumstick (and I thought I needed to get a life). They then fed these videos with accompanying audio into an AI model that analyzed the images and deconstructed the sounds (pitch, loudness, echo, reverberation, etc.). Subsequently, when the AI was shown new video snippets (sans audio) of the drumstick interacting with things, it could generate corresponding sounds of such subtlety that a human observer could not tell if those sounds were real or not (see Artificial Intelligence Produces Realistic Sounds That Fool Humans).

Also in 2016, I attended the Embedded Vision Summit, where I was astounded by the level of sophistication exhibited by the AI-powered object detection and identification being performed. The exhibit hall was so jam-packed it prompted one person to say, “You couldn’t swing a cat in here.” Some wit (not me, I’m only a halfwit striving to attain full wit status) immediately retorted, “If you did, all these AI systems would start displaying, ‘Human swinging a cat (Probability 98%)’!”

The American artificial intelligence research laboratory called OpenAI has a declared intention of developing and promoting “friendly AIs.” I bet you are thinking, “Who in their right minds would develop any other kind?” If so, may I introduce you to the folks at MIT’s Media Lab who felt moved to create Norman, the world’s first psychopath AI.

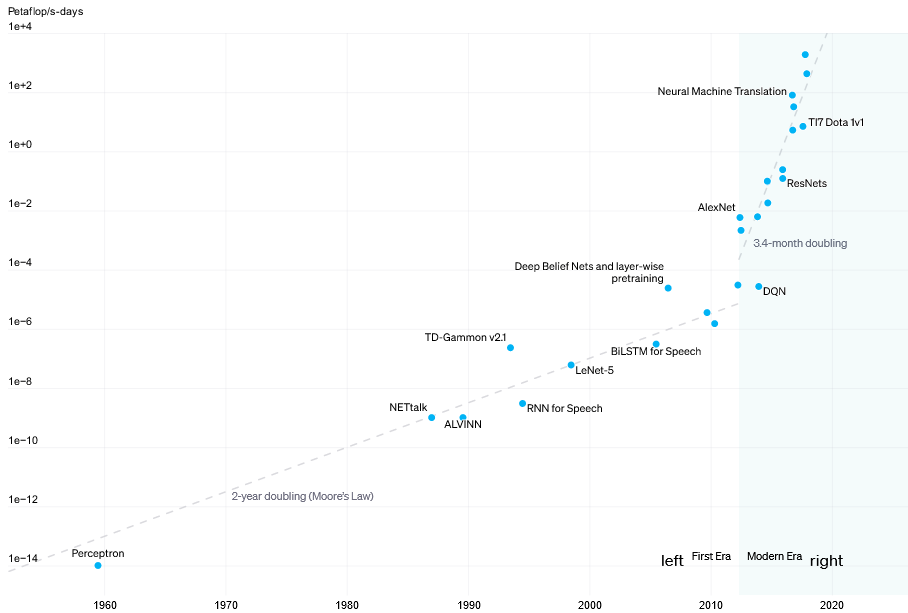

In 2018, the guys and gals at OpenAI published a paper titled AI and Compute. In this paper, they identified two distinct eras with respect to the amount of computational power required to train AI models. In the first era, from 1956 to 2012, these computational requirements doubled approximately every two years, generally corresponding to Moore’s law. However, there was an inflection point in 2012, which I assume was the result of a new generation of AI algorithms, architectures, and models. From the commencement of the second (modern) era, the required computational power has been doubling every 3.4 months! (Read that again, then take a deep breath.)

Compute usage in training AI systems can be split into two distinct eras (Source: OpenAI)
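
To get a feel for how different those two doubling rates really are, here is a minimal back-of-the-envelope sketch in Python. It assumes clean exponential growth, which real-world data only approximates, and the function and variable names (`annual_growth`, `first_era`, `modern_era`) are made up purely for illustration.

```python
def annual_growth(doubling_months: float) -> float:
    """Return the growth factor over 12 months for a given doubling time.

    Assumes idealized exponential growth: factor = 2 ** (12 / doubling_months).
    """
    return 2 ** (12 / doubling_months)


# First era: compute doubling roughly every two years (24 months).
first_era = annual_growth(24)

# Modern era: compute doubling roughly every 3.4 months.
modern_era = annual_growth(3.4)

print(f"First era:  about {first_era:.2f}x more compute per year")
print(f"Modern era: about {modern_era:.1f}x more compute per year")
```

Under these assumptions, the first era works out to roughly 1.4x more compute per year, while the modern era works out to roughly 11.5x per year, which is why that inflection point is worth a deep breath.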

Coming at this from another direction, as part of Intel’s Architecture Day presentations in November 2021, Raja Koduri, who was chief architect and Executive Vice President of Intel’s architecture, graphics, and software (IAGS) division at that time, made mention of the fact that Intel’s high-end customers were demanding a doubling of computer performance approximately every 3.5 months.

On the one hand, I’m a big fan of AI, especially when it’s used to perform tasks that I can easily wrap my brain around. Take photographs, for example. I’m all in favor of using AI to restore, enhance, and colorize old photographs, and I see no problem in using AI to replace a boring sky with a spectacular equivalent. Some of this stuff is really clever, like the tools from Cleanup Pictures that allow you to use AI to remove unwanted people or objects from a scene. There are also AI-based tools out there that can animate family photos.

These applications are progressing in leaps and bounds. Just a couple of days ago, I saw one such offering that blew me away. The example I saw was a picture of a cat, which was looking forward but to the side at, say, a 45-degree angle. The user employed the mouse cursor (how ironic) to identify a couple of key points on the cat’s head, then they rotated the head to be looking front-and-center, with the AI adding any missing details and addressing any artifacts it had introduced during the process. “Now, that is clever!” I thought to myself.

There’s so much more AI-related stuff I want to talk about, including some developments that will leave you lying awake at night, but I’m composing myself to commence the celebrations marking the 33rd anniversary of my 33rd birthday, so I’ll leave you with a happy nugget of knowledge (sad to relate, there aren’t as many of these little beauties lying around as there should be).

Recently, I was talking to a company that is in the process of integrating AI with the ability to monitor one’s brainwaves, all to be deployed in the earbuds used in hearing aids. “Say, what?” you say (which is, of course, what you would say if you needed a pair of these bodacious beauties). Well, that’s certainly what I said when I first heard this.

In fact, this is another take on the classic cocktail party problem, which involves lots of people talking at the same time. As we learn on the Wikipedia (I love the Wikipedia), the cocktail party effect is the phenomenon of the brain’s ability to focus one’s auditory attention on a particular stimulus while filtering out a range of other stimuli, such as when a partygoer can focus on a single conversation in a noisy room. Listeners have the subconscious ability to both segregate different stimuli (e.g., different voices) into different streams, and to subsequently decide which streams are most pertinent to them.

Well, AI also has the ability to segregate different stimuli. Furthermore, when there is constant chatter from multiple sources, our brainwaves change when we are focused on a particular source of interest to us. The AI can use all of this information to boost the voice the wearer wants to hear and fade down any other sounds and voices. All of which prompts me to say that, when it comes to AI, “You Ain’t Heard Nothin’ Yet” (I’m sorry, I simply couldn’t help myself).

In Part 2 of this two-part mini-mega-extravaganza, we’re going to look at some of the mind-blowingly exciting and mind-bogglingly scary tasks that are currently being performed by AIs in general and generative AIs in particular. Be afraid, be very afraid. In the meantime, do you have any thoughts you’d care to share on anything you’ve read here?
