Sit down, take a deep breath, and try to relax. In a few moments, I’m going to show you a video of people (well, dummies that look like people) walking in front of vehicles equipped with pedestrian detection and automatic emergency braking systems. The results will make the hairs stand up on the back of your neck. I’m still shaking my head in disbelief at what I just observed, but first…

Earlier this year, I posted a column describing what happens When Genetic Algorithms Meet Artificial Intelligence. In that column, I described how those clever chaps and chapesses at Algolux are using an evolutionary algorithm approach in their Atlas Camera Optimization Suite. The result is the industry’s first set of machine-learning tools and workflows that can automatically optimize camera architectures intended for computer vision applications.

In a nutshell, when someone creates a new camera system for things like object detection and recognition in robots or vehicles equipped with advanced driver-assistance systems (ADAS) or even — in the not so distant future — fully autonomous vehicles, then that camera system requires a lot of fine-tuning. Each of the components — lens assembly, sensor, and image signal processor (ISP) — has numerous associated parameters (variables), which means that the image quality for each camera configuration is subject to a massive and convoluted parameter space.
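To get a feel for how quickly this parameter space balloons, here is a back-of-the-envelope sketch in Python. The parameter counts and the number of settings per parameter are numbers I made up purely for illustration; they are not figures from Algolux or from any real camera.

```python
# Hypothetical numbers of tunable parameters per component (illustrative only).
PARAMS_PER_COMPONENT = {"lens": 5, "sensor": 10, "isp": 100}

# Assume each parameter is quantized to this many settings (also an assumption).
SETTINGS_PER_PARAM = 8


def configuration_count(params_per_component, settings_per_param):
    """Return the number of distinct camera configurations."""
    total_params = sum(params_per_component.values())
    return settings_per_param ** total_params


count = configuration_count(PARAMS_PER_COMPONENT, SETTINGS_PER_PARAM)
total = sum(PARAMS_PER_COMPONENT.values())
# The digit count of the result gives its order of magnitude.
print(f"{total} parameters -> roughly 10^{len(str(count)) - 1} configurations")
```

Even with these modest made-up numbers, trying every combination by hand is clearly off the table, which is why an automated search is so appealing.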

Today’s traditional human-based camera system tuning can involve weeks of initial lab tuning followed by months of field and subjective tuning. The sad part about all this expenditure of effort is that there’s no guarantee of results when it comes to computer vision applications. It turns out that the ways in which images and videos need to be manipulated and presented to artificial intelligence (AI) and machine learning (ML) systems may be very different to the manipulations required for images and videos intended for viewing by people.

Bummer!

Now, this requires a bit of effort to wrap one’s brain around. The purpose of Atlas is not to teach an AI/ML system to detect and identify objects and people. In this case, we can assume that the system has already been trained and locked down. What we are doing here is manipulating the variables in the camera system to process the images and video streams in such a way as to facilitate the operation of the downstream AI/ML system (read my Atlas-related column for more details).
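For those who like to see ideas in code, the following is a minimal sketch of the general concept: an evolutionary search that adjusts only the camera parameters while the downstream model stays frozen. This is not how Atlas is implemented. The three parameters, the `frozen_model_score` function, and its hidden "sweet spot" are all hypothetical stand-ins for "run the locked-down detector on images produced with these settings and measure how well it does."

```python
import random

# Hypothetical "sweet spot" for three ISP knobs (say gain, denoise, sharpen),
# each normalized to [0, 1]. A real system would not know this in advance.
_HIDDEN_OPTIMUM = (0.7, 0.2, 0.5)


def frozen_model_score(isp_params):
    """Stand-in for the accuracy of the locked-down detector on images
    produced with these camera settings. Higher is better (maximum is 0)."""
    return -sum((p - t) ** 2 for p, t in zip(isp_params, _HIDDEN_OPTIMUM))


def mutate(params, rng, sigma=0.1):
    """Nudge every parameter with Gaussian noise, clamped to [0, 1]."""
    return tuple(min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in params)


def evolve(generations=60, population_size=20, survivors=5, seed=0):
    """Simple elitist evolutionary search over the camera parameters only."""
    rng = random.Random(seed)
    population = [tuple(rng.random() for _ in _HIDDEN_OPTIMUM)
                  for _ in range(population_size)]
    for _ in range(generations):
        # Keep the best candidates; the detector itself is never modified.
        population.sort(key=frozen_model_score, reverse=True)
        parents = population[:survivors]
        children = [mutate(rng.choice(parents), rng)
                    for _ in range(population_size - survivors)]
        population = parents + children
    return max(population, key=frozen_model_score)


best = evolve()
print("best parameters:", [round(p, 2) for p in best])
print("score:", round(frozen_model_score(best), 4))
```

The point to take away is that the scoring function is treated as a black box. The search never touches the model; it only keeps the camera settings that make the model's life easier.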

OK, now it’s time to watch the video I mentioned at the start of this column. To be honest, if you’d asked me before I saw this Pedestrian-Detection ADAS Testing film, I would have said that this technology was reasonably advanced and we were doing pretty well in the scheme of things. However, after watching this video — which, let’s face it, was actually taken under optimum lighting conditions (I can only imagine how bad things could get in rain, snow, or fog) — I’m a sadder and wiser man.

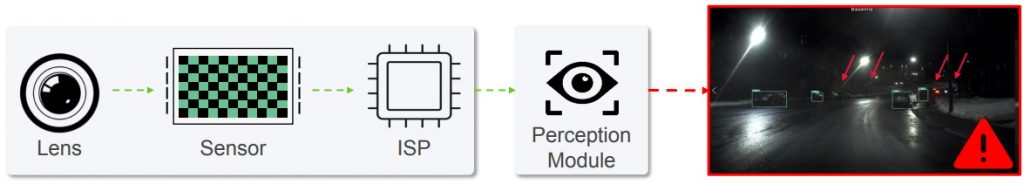

So, what can we do to make things better? Well, we can start by recognizing the fact that, although the Atlas Camera Optimization Suite takes a magnificent stab at things and offers up to 25% improvement over human-tuned camera systems, the poor little rascal is doomed to optimize the accuracy of existing vision systems, which — metaphorically speaking — is equivalent to making it work its magic while standing on one leg with one hand tied behind its back. In fact, we might go so far as to say that today’s vision architectures are designed to fail!

Traditional vision architectures are doomed to fail (red arrows indicate unidentified objects and people) (Image source: Algolux)

The problems, in a nutshell, are that ISPs are designed and tuned for human vision, cameras and vision models are not co-optimized, and the datasets used to train the AI/ML systems do not originate with the actual camera configuration for which everything is being tuned. As a result, object detection and recognition are not robust in difficult environments such as those involving low-light and low-contrast imagery.

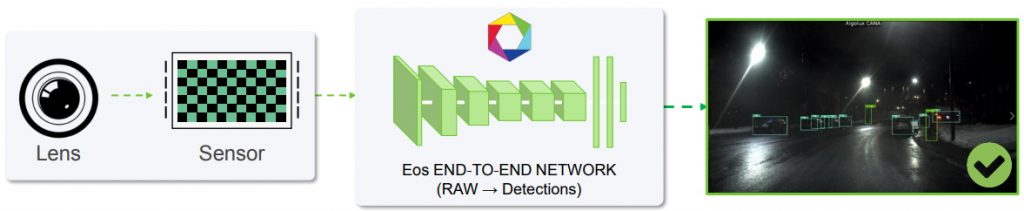

Suppose we were to start with a clean slate. Well, that’s just what the ingenious guys and gals at Algolux have done with regard to their new Eos Embedded Perception software, which is designed to optimize accuracy for new vision systems.

Quite apart from anything else, the Eos training framework employs unsupervised learning in RAW, leveraging vast amounts of unlabeled data by intensive contrastive learning in the RAW domain. This significantly improves robustness and saves hundreds of thousands of dollars of training data capture, curation, and annotation costs per project while quickly enabling the support of new camera configurations.
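If you haven't run across contrastive learning before, the gist is that the system learns from unlabeled data by pulling together the embeddings of two views of the same frame while pushing apart the embeddings of different frames. I don't know which contrastive objective Eos uses, so the sketch below uses the widely used InfoNCE loss as a stand-in, and the tiny three-element "embeddings" are made-up values rather than anything produced from real RAW data.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def info_nce_loss(anchor, positive, negatives, temperature=0.1):
    """InfoNCE loss for one anchor embedding.

    Low when the anchor is closer to its positive (another view of the same
    unlabeled frame) than to the negatives (views of other frames).
    """
    logits = [cosine_similarity(anchor, positive) / temperature]
    logits += [cosine_similarity(anchor, n) / temperature for n in negatives]
    # Numerically stable log-sum-exp over all candidates.
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(v - peak) for v in logits))
    return log_sum_exp - logits[0]


# Toy 3-D embeddings (made-up values; a real encoder would produce these).
view_a = [1.0, 0.1, 0.0]   # one augmented view of a frame
view_b = [0.9, 0.2, 0.1]   # a second view of the same frame
other_frames = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

matched = info_nce_loss(view_a, view_b, other_frames)
mismatched = info_nce_loss(view_a, other_frames[0], [view_b, other_frames[1]])
print("matched pair loss:   ", round(matched, 4))
print("mismatched pair loss:", round(mismatched, 4))
```

No labels appear anywhere in this calculation, which is the whole attraction: the only supervision is knowing which views came from the same frame.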

The Eos framework uniquely enables end-to-end learning and fine-tuning of computer vision systems. In fact, it effectively turns them into “computationally evolving” vision systems through the application of computational co-design of imaging and perception, which is an industry first.

Eos-designed vision architectures are destined for success (Image source: Algolux)

In this case, the image processing and object detection and recognition learn together to handle hard “edge cases.” Eos can be easily adapted to any camera lens or sensor, and the end-to-end learning optimizes robustness, all while massively reducing data capture and annotation time and cost. If you compare the image bordered in green in the figure above to the one bordered in red on the previous figure, I think you’ll find the difference to be a bit of an eye-opener (no pun intended).

Eos delivers a full set of highly robust perception components addressing individual NCAP requirements, L2+ ADAS, and higher levels of autonomy, from highway autopilot and autonomous valet (self-parking) to L4 autonomous vehicles, as well as Smart City applications such as video security and fleet management. Key vision features include object and vulnerable road user (VRU) detection and tracking, free space and lane detection, traffic light state and sign recognition, obstructed sensor detection, reflection removal, multi-sensor fusion, and more.

Of course, “the proof of the pudding is in the eating,” as they say, so let’s take a look at the following videos:

NVIDIA DriveWorks vs. Algolux Eos object detection comparison (Environment = Night, Snow).

Tesla OTA Model S Autopilot vs. Algolux Eos object detection comparison (Environment = Night, Highway).

Free space detection in harsh conditions.

Wide-angle pedestrian detection with a dirty lens.

As the folks at Algolux will tell you if you fail to get out of the way fast enough (try not to get trapped in an elevator with them): “The Eos end-to-end architecture combines image formation with vision tasks to address the inherent robustness limitations of today’s camera-based vision systems. Through joint design and training of the optics, image processing, and vision tasks, Eos delivers up to 3x improved accuracy across all conditions, especially in low light and harsh weather. This was benchmarked by leading OEM and Tier 1 customers against state-of-the-art public and commercial alternatives. In addition, Eos has been optimized for efficient real-time performance across common target processors, providing customers with their choice of compute platform.”

For myself, I’d like to share one final image showing a camera system designed/trained using Eos detecting people (purple boxes), vehicles (green boxes), and — what I assume to be — signs or traffic signals (blue boxes).

Eos-designed/trained camera system detecting like an Olympic champion (Image source: Algolux)

I’ve been out walking on nights like this myself and I know how hard it can be to determine “what’s what,” so the above image impresses the socks off me, which isn’t something you want to happen in cold weather.

Seeing this image also prompts me to reiterate one of my heartfelt beliefs that — sometime in the not-so-distant future — we will all be ambling around sporting mixed-reality (MR) AI-enhanced goggles that will change the way in which we interact with the world, our systems, and each other (see also What the FAQ are VR, MR, AR, DR, AV, and HR?). After seeing Eos in action, I’m envisaging my MR-AI goggles being equipped with cameras designed/trained using this technology. “The future’s closer than you think,” as they say. I can’t wait!