I’ve made no secret of the fact that I love science fiction. Thinking about it, you probably wish I’d stop waffling on about this topic. If so, I’m afraid that you’re out of luck (sorry). Ever since I first read I, Robot and The Rest of the Robots by Isaac Asimov when I was a young lad, I totally bought into the concept that we would one day create humanoid-shaped and other intelligent robots.

I’m not going to bore you with the three laws of robotics here. If you can’t already recite them by rote, then there’s a good chance you’re reading the wrong column.

If you haven’t already done so, then I strongly recommend you ensconce yourself in a comfy chair, put your feet up, and feast your orbs on I, Robot and The Rest of the Robots, followed by The Caves of Steel and The Naked Sun. In these latter two tomes, also by Asimov, we meet R. Daneel Olivaw, who is a robot so lifelike that the only way people know he’s not human is the “R.” portion of his appellation.

And let’s not forget Great Sky River by Gregory Benford. Set around 100,000 years in our future, this tells the tale of humans who have made their way close to the center of our galaxy where they run into less-than-friendly mechanoid civilizations. It’s safe to say that these mechanoids have no truck with Asimov’s three laws of robotics. I don’t wish to cast aspersions (my throwing arm is not what it used to be), but these are the sort of robots who give robots a bad name.

One thing I took for granted is that, in addition to the ability to see and hear, robots like R. Daneel Olivaw would also have the ability to smell, taste, and touch. There’s currently a lot of work going on with respect to developing olfactory and gustatory sensors to give machines the ability to smell and taste, but what about the ability to touch?

One very interesting technology is BeBop RoboSkin, which can endow robots with tactile awareness that exceeds the capabilities of human beings with respect to spatial resolution and sensitivity. A robot whose fingertips are thus equipped can even read Braille, for goodness sake (see also BeBop RoboSkin Provides Tactile Awareness for Robots)!

As interesting as BeBop RoboSkin is, however, I wouldn’t expect to see robots with it covering their whole bodies anytime soon. The same thing applies to cars and trucks. As awesome as KITT (Knight Industries Two Thousand) was in the Knight Rider TV series, which originally aired from 1982 to 1986, I don’t think anyone imagined it being covered in the equivalent of BeBop RoboSkin.

So, what’s the solution? How do we give machines like cars and robots the ability to feel things happening all over their bodies? Well, the folks at Taifang Technology think they have the answer.

Recently, I had the opportunity to chat with Dr. Charles Do (Founder and CEO), Bob Yang (Director of Business Development), and Mark Whitlock (VP of Global Sales) at Taifang. I quickly discovered that the company is on a mission to give everything a sense of touch, from wearables like earbuds, to smartphones, to tablet and laptop computers, to cars and robots (I have no idea what they plan to do in their spare time).

Let’s start with the fact that the guys and gals at Taifang are experts with respect to elastic waves. “What are elastic waves?” you ask. I’m glad you raised this question because I was puzzled also. According to the Encyclopedia Britannica, an elastic wave is: “Motion in a medium in which, when particles are displaced, a force proportional to the displacement acts on the particles to restore them to their original position.” The Britannica goes on to say, “If a material has the property of elasticity and the particles in a certain region are set in vibratory motion, an elastic wave will be propagated.”
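For those who like to see such things written down, here is a minimal sketch in standard textbook notation (the symbols are my own choice, not Britannica’s or Taifang’s). The “force proportional to the displacement” part of the definition is Hooke’s law, where $k$ is a stiffness constant and $x$ is the displacement from the rest position:

$$F = -kx$$

In the simplest one-dimensional case, a displacement $u(x, t)$ traveling at speed $c$ obeys the regular wave equation:

$$\frac{\partial^2 u}{\partial t^2} = c^2 \, \frac{\partial^2 u}{\partial x^2}$$

For a uniform, isotropic elastic solid with density $\rho$ and Lamé parameters $\lambda$ and $\mu$ (and ignoring body forces), the usual textbook form of the elastic wave equation for the displacement vector $\mathbf{u}$ is:

$$\rho \, \frac{\partial^2 \mathbf{u}}{\partial t^2} = (\lambda + 2\mu)\,\nabla(\nabla \cdot \mathbf{u}) - \mu\,\nabla \times (\nabla \times \mathbf{u})$$

The first term on the right describes compressional waves and the second describes shear waves, which is why a solid can carry more than one kind of wave at once.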

I also looked up “wave” on Wikipedia, which has a section on waves in an elastic medium. On the basis that I need to struggle for my art, I also tracked down a short video on The Elastic Wave Equation.

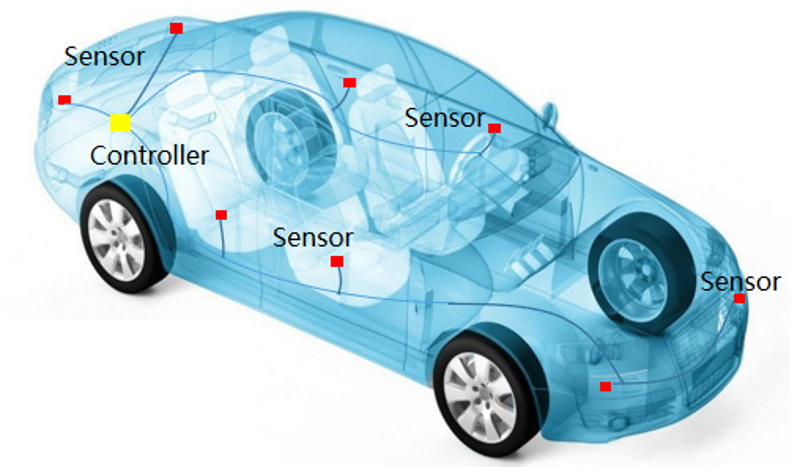

Now my head hurts. From what I can see, the elastic wave equation is reminiscent of the regular wave equation on steroids. The thing is that the chaps and chapesses at Taifang are doyens in the elastic wave domain, giving them the ability to tease out any information contained in such a wave, no matter how hidden that information is. As an example, consider the image below. This depicts TAIPS, which stands for Taifang Automobile Intelligent Perception System.

Taifang Automobile Intelligent Perception System (TAIPS) (Source: Taifang)

As we see, in this implementation, each main panel on the car has one of Taifang’s elastic wave sensors, where each sensor is described as being “smaller than a grain of rice” with a temperature range of -40°C to 125°C. There’s also a controller running Taifang’s proprietary algorithms.

“But why not use regular MEMS accelerometers?” I hear you cry. I’m glad you asked. Regular accelerometers can detect only overall changes in acceleration and, in the context of automotive applications, are of interest only for collisions ≥30 km/h, in which case all they can do is say “we just collided with something” and trigger the car’s airbags, for example. By comparison, in conjunction with the algorithms running on the controller, Taifang’s sensors can be used to determine the location of a collision ≥1 km/h, the force associated with the collision, and the material (soft = human, hard = not-human) of whatever was collided with.
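Taifang’s algorithms are proprietary, so I can’t show you how they actually pinpoint a collision. Purely to give a feel for how location can be teased out of a wave, here is a sketch of a different, textbook technique: time-difference-of-arrival localization. Everything in it is an assumption on my part, including the four sensors on a single flat panel (the TAIPS description above mentions one sensor per panel), the single constant wave speed, the panel dimensions, and the `locate_impact` function name.

```python
import math


def locate_impact(sensors, arrival_times, wave_speed, width, height, step=0.01):
    """Grid-search a flat panel for the point whose predicted arrival-time
    differences best match the measured ones (least squares).

    Illustrative only: this is generic time-difference-of-arrival
    localization, not Taifang's method.
    """
    best_point, best_error = None, math.inf
    nx = int(round(width / step)) + 1
    ny = int(round(height / step)) + 1
    for i in range(nx):
        for j in range(ny):
            x, y = i * step, j * step
            # Predicted travel time from the candidate point to each sensor
            travel = [math.hypot(x - sx, y - sy) / wave_speed for sx, sy in sensors]
            # Use differences relative to sensor 0 so the unknown
            # moment of impact cancels out
            error = sum(
                ((travel[k] - travel[0]) - (arrival_times[k] - arrival_times[0])) ** 2
                for k in range(1, len(sensors))
            )
            if error < best_error:
                best_point, best_error = (x, y), error
    return best_point


# Hypothetical setup: a 1.0 m x 0.5 m panel with a sensor in each corner
SENSORS = [(0.0, 0.0), (1.0, 0.0), (0.0, 0.5), (1.0, 0.5)]
WAVE_SPEED = 3000.0  # m/s, an assumed value for illustration only

# Simulate a tap at (0.30, 0.20) m that happens at some unknown time
true_point = (0.30, 0.20)
impact_time = 0.123  # seconds; the solver never sees this value
arrival_times = [
    impact_time + math.hypot(true_point[0] - sx, true_point[1] - sy) / WAVE_SPEED
    for sx, sy in SENSORS
]

estimate = locate_impact(SENSORS, arrival_times, WAVE_SPEED, width=1.0, height=0.5)
print(estimate)
```

A brute-force grid search is slow but easy to follow. Real elastic waves in a car panel are far messier than this (wave speed varies with frequency, and panels are neither flat nor uniform), which is presumably where the clever algorithms come in.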

One interesting point is that there are no blind spots. If you were slowly reversing in a parking lot, for example, and you bumped into a little kid, TAIPS could automatically slam on the brakes leaving the youngster with naught but a bruise (if that).

If some ne’er-do-well bumps into the car while it’s parked, or if some nefarious scoundrel scrapes the car with something like a key, the car could differentiate between the two and activate an appropriate security response (I would opt for a taser in the latter case, but that’s just me).

Apparently, there’s a move in China to impose some new mandatory standards targeted at protecting pedestrians. For example, the folks at Taifang are collaborating with OEMs on advanced research regarding active hoods and outside airbags.

Car with active hood and outside airbag (Source: Taifang)

With a response time of <5 ms, TAIPS offers a promising method for detecting a collision, triggering the active hood, and deploying the outside airbag before an unfortunate ex-pedestrian comes flying through the windscreen. Thinking about it, one of my friends here in the USA collided with a deer driving at night (my friend was the one who was driving). I bet he would have loved to have one of these active hood/outside airbag combos on his car.
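To put that <5 ms figure in perspective, here is a quick back-of-the-envelope calculation of how far a car travels in 5 ms. The 1 km/h and 30 km/h speeds come from the thresholds mentioned earlier; the 50 km/h value and the `distance_travelled_m` helper are my own illustrative choices.

```python
def distance_travelled_m(speed_kmh, time_ms):
    """Distance in meters covered at speed_kmh during time_ms milliseconds."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # km/h -> m/s
    return speed_m_per_s * (time_ms / 1000)  # ms -> s


# How far does the car move during a 5 ms sensing window?
for speed_kmh in (1, 30, 50):
    cm = distance_travelled_m(speed_kmh, 5) * 100
    print(f"{speed_kmh} km/h: {cm:.1f} cm in 5 ms")
```

At 30 km/h, the car covers only about 4 cm in those 5 ms. Bear in mind this covers the sensing side alone; the time taken to raise a hood or inflate an airbag would come on top.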

One more thing the folks at Taifang showed me was a video demonstration of a robot equipped with their touch sensing technology. All I can say is that it was a bit weird hearing the robot distinguish between pats and slaps on different parts of its body.

Robot equipped with Taifang’s touch sensing technology (Source: Taifang)

So, there we are. Are we heading for a future in which every surface has a sense of touch? If so, what will this mean? Am I going to have to apologize to every inanimate object I blunder into during the course of my day (and I tend to stumble and stagger into a lot of things)? And can you think of any other applications for this technology? As always, I await your comments in dread antici…