Robots have captured our imagination ever since Czech writer Karel Čapek coined the word in his play titled “R.U.R.” (Rossumovi Univerzální Roboti or Rossum’s Universal Robots), which he wrote in 1920. The play premiered the following year, and even in that first appearance, things didn’t go well for people. Eventually, Rossum’s robots revolted and wiped out the human race, which set an oft-repeated precedent for the next century’s worth of robot stories.

Robots quickly jumped onto the silver and small screens, with many memorable appearances including:

- The art deco Maschinenmensch in Fritz Lang’s “Metropolis” (1927)

- The Tin Man in “The Wizard of Oz” (1939)

- The galactic, planet-destroying cop named Gort from “The Day the Earth Stood Still” (1951)

- The durable and multitalented Robby the Robot, who first appeared in “Forbidden Planet” (1956)

- The robots of Muni-Mula on “Ruff and Reddy” (1957)

- Rosey/Rosie the robot maid in “The Jetsons” (1962)

- The B-9 “That does not compute” robot in “Lost in Space” (1965)

- The politically incorrect Fembots in “Dr. Goldfoot and the Bikini Machine” (1965) and again in “Austin Powers: International Man of Mystery” (1997)

- The robotic repair trio Huey, Dewey, and Louie who starred with Bruce Dern in “Silent Running” (1972)

- R2-D2 and C-3PO in “Star Wars” (1977)

- Number 5/Johnny Five in “Short Circuit” (1986)

- Commander Data in “Star Trek: The Next Generation” (1987)

And that’s just my personal list of a dozen favorites.

Over many decades, we’ve become quite adept at creating robots for entertainment purposes, as long as it’s a person clomping around in a robot suit (Gort, Robby, B-9, C-3PO, and of course, Huey, Dewey, and Louie) or an animation, whether hand-drawn or CGI. However, building real robots is considerably harder, no matter what the moviemakers might have you think.

We’ve been designing and building real robots for more than half a century. I’m not counting the early theatrical robot hoaxes like the chess-playing Mechanical Turk from the late 18th century or even Westinghouse’s Elektro, the smoking robot, which appeared at the 1939 World’s Fair in New York City. In my opinion, the era of real robotics started with the introduction of Unimation’s Unimate model 1900 in 1961. The Unimate was a robotic arm that could be programmed for tough, dangerous industrial jobs. Its first job was stacking hot metal parts at a General Motors plant in Ewing Township, New Jersey.

Since then, designing robots has been a bespoke sort of endeavor. Because robots can be assigned so many tasks, from the floor-vacuuming Roomba to Intuitive Surgical’s intricate, multi-armed da Vinci robotic surgeon, each robot needs to be custom designed for the range of tasks it’s expected to handle. Part of that customization is mechanical. A robot vacuum cleaner is going to look very different from a robot surgeon. The other part of the customization is electronic, and that is the part that AMD/Xilinx has decided to address with the introduction of the Kria KR260 Robotics Starter Kit.

The AMD/Xilinx Kria KR260 Robotics Starter Kit is based on the same K26 SOM used in the company’s KV260 Vision AI Starter Kit.

If you follow the FPGA world at all, you may already be familiar with the Kria brand of SOMs (systems on modules). The Kria K26 SOM allows you to incorporate an XCK26-SFVC784-2LV Zynq UltraScale+ MPSoC into your design with a board-level product. The XCK26-SFVC784-2LV MPSoC combines a quad-core Cortex-A53 Arm processor with 256,200 logic cells and 1,248 DSP slices in an UltraScale+ FPGA fabric and is available only on the K26 SOM, not as a separate device.

AMD/Xilinx previously announced a video-oriented starter kit based on the Kria K26 SOM. It’s the $199 Kria KV260 Vision AI Starter Kit. This starter kit contains the Kria K26 SOM; a carrier board for the SOM with key vision-oriented interfaces such as MIPI, HDMI, and DisplayPort broken out; and pre-written and debugged application software supporting several applications including retail analytics, security, smart cameras, and machine vision. The idea behind this starter kit is that you can get a head start with the predesigned hardware and software, and once you have successfully prototyped your design, you can develop the product in final form by designing a custom carrier card for the K26 SOM.

Part of the ongoing development of the Kria KV260 video starter kit series has been the creation of a Kria Embedded App Store. The idea here is that developers can download working apps that support the types of products they’re designing. These apps accelerate various tasks using the programmable-logic resources integrated into the Zynq UltraScale+ MPSoC. You might think of these apps as productized reference designs.

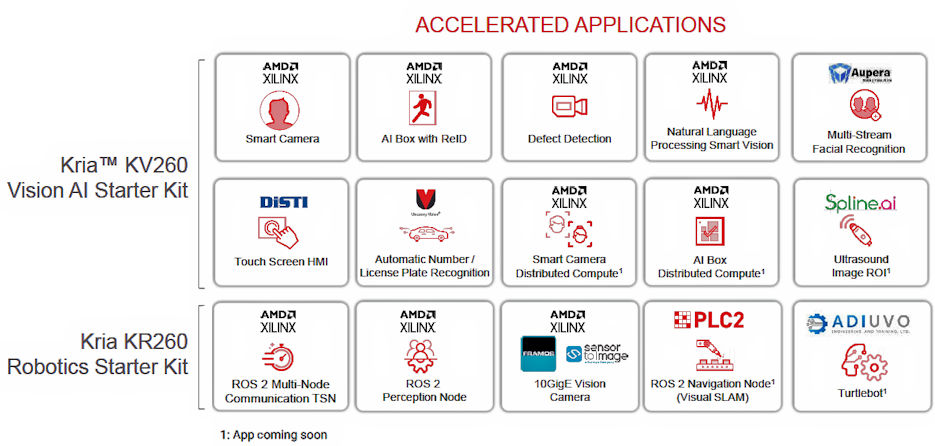

The apps now available for the Kria KV260 Vision AI Starter Kit include:

- Smart Camera

- AI Box with ReID

- Defect Detection

- Natural Language Processing for Smart Vision

- Multi-Stream Facial Recognition

- Touch Screen HMI

- Automatic Number/License Plate Recognition

- Smart Camera Distributed Compute (coming soon)

- AI Box Distributed Compute (coming soon)

- Ultrasound Image ROI (coming soon)

AMD/Xilinx has just announced another kit specifically aimed at robotics applications, the $349 Kria KR260 Robotics Starter Kit, which is based on the same K26 SOM but with a new carrier card and new software and apps to support robotics development.

Big components of that new software are pre-configured versions of Ubuntu Linux and ROS 2, the latest iteration of the “robot operating system.” ROS 2 is a set of open-source software libraries ranging from drivers to more complex algorithms that you’ll need to build robotics applications. The original ROS project was started in 2007, and much has changed in the robotics sphere since then including cameras, sensors, actuators, processors, and processing elements. The ROS 2 project is an ongoing open-source community effort to accommodate those changes.

AMD/Xilinx is augmenting the ROS 2 project with hardware acceleration based on the Zynq UltraScale+ MPSoC’s programmable-logic fabric, and the company has added robot-centric apps in the Kria Embedded App Store. Initially, there are three robotic apps in the store:

- ROS 2 Multi-Node TSN (Time-Sensitive Networking) Communications

- ROS 2 Perception Node

- 10 GigE Vision Camera

Two more robotic apps are “coming soon”:

- ROS 2 Navigation Node (Visual SLAM)

- Turtlebot

Here’s a graphic depicting the currently available and planned accelerated apps in the Kria Embedded App Store:

Note that the Turtlebot app, marked “coming soon,” is from Adiuvo Engineering. That’s Adam Taylor’s embedded and FPGA design atelier. Anyone who has followed FPGAs for the past decade or so knows Adam Taylor. He’s a prolific author, an accomplished engineer, a true FPGA expert, and the author of the MicroZed Chronicles. So the Turtlebot app will likely be a winner, because that’s just how Taylor rolls.

Again, the idea of the Kria Embedded App Store is to accelerate end-product development through these pre-written apps. In fact, the stated AMD/Xilinx goal for the Kria KR260 starter kit is to get you “up and running in less than one hour, no FPGA experience needed” through five simple steps:

- Connect peripherals and cables

- Insert the programmed microSD card

- Power on the board

- Load the accelerated application of your choice

- Run the accelerated application

Of course, these steps do not represent a complete path to a fully developed robot. They’re more of a fast way to familiarize yourself with the starter kit. However, that is an important first step and not to be discounted.

All of this leads to a very basic question: Is FPGA-based acceleration important for robotics? In the case of TSN, the advantages of FPGA-based hardware acceleration are well proven. If you’d like a two-minute tutorial on the topic, I shot a video of a TSN-enabled slot track at National Instruments’ NI Week in 2017 demonstrating FPGA-accelerated TSN. The slot track was controlled in real time by two NI CompactRIO cRIO-9035 controllers augmented with Xilinx Kintex-7 FPGAs, which implemented TSN. Sensors along the track helped the control system keep obstacles out of the way of the cars as they scooted along the track. Without TSN to ensure timely packet delivery, the slot cars crashed. With TSN operating, there were no crashes.
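
To make “timely packet delivery” a bit more concrete, here is a minimal Python sketch of the idea behind TSN’s time-aware scheduling (in the spirit of IEEE 802.1Qbv): a reserved transmission window repeats every cycle, so the wait for a control frame is bounded. The `GateSchedule` class, the function names, and every timing value are my own illustrative assumptions. This is not code from the NI demo or from the AMD/Xilinx TSN app, and a real TSN switch does this in hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateSchedule:
    """Hypothetical, simplified gate schedule: one reserved window per cycle."""
    cycle_us: int         # length of the repeating schedule, in microseconds
    window_start_us: int  # offset in the cycle where the control window opens
    window_len_us: int    # how long the control window stays open


def next_tx_time(schedule: GateSchedule, arrival_us: int, frame_us: int) -> int:
    """Earliest start time at which a control frame fits inside a reserved window."""
    if frame_us > schedule.window_len_us:
        raise ValueError("frame does not fit in the reserved window")
    cycle_index, offset = divmod(arrival_us, schedule.cycle_us)
    window_end = schedule.window_start_us + schedule.window_len_us
    start = max(offset, schedule.window_start_us)
    if start + frame_us <= window_end:
        # The frame fits in the current cycle's window.
        return cycle_index * schedule.cycle_us + start
    # Too late for this window: wait for the window in the next cycle.
    return (cycle_index + 1) * schedule.cycle_us + schedule.window_start_us


def worst_case_wait_us(schedule: GateSchedule, frame_us: int) -> int:
    """Largest wait over all arrival times in one cycle, checked in 1 us steps."""
    return max(
        next_tx_time(schedule, t, frame_us) - t
        for t in range(schedule.cycle_us)
    )


if __name__ == "__main__":
    # Illustrative numbers only: 1 ms cycle, 200 us reserved window, 50 us frame.
    schedule = GateSchedule(cycle_us=1000, window_start_us=0, window_len_us=200)
    print(worst_case_wait_us(schedule, frame_us=50))
```

The point of the sketch is that the worst-case wait never exceeds one cycle, no matter what other traffic is on the wire. That bound is what a control loop needs, and it is what ordinary best-effort Ethernet cannot promise.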

AMD/Xilinx presents some convincing numbers of its own to make the case for FPGA-based acceleration using the Kria KR260 starter kit and K26 SOM. According to the company, the Kria SOM delivers 6.25X better performance per watt than the Nvidia Jetson Nano Developer Kit and, for the ROS Perception Pipeline, 8.3X better performance per watt than the Nvidia Jetson AGX Xavier Developer Kit. Both Nvidia kits were programmed with the Nvidia Isaac open platform for intelligent robots. The Kria KR260 kit also exhibits lower latency than either of the Nvidia developer kits. Of course, your numbers may, and probably will, vary.
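
If you want to check a “performance per watt” claim against your own measurements, the arithmetic is simple: divide throughput by power for each board, then take the ratio. Here is a small Python sketch of that calculation. The function names and the sample figures are placeholders I made up for illustration; they are not AMD/Xilinx or Nvidia measurements.

```python
def perf_per_watt(throughput: float, power_w: float) -> float:
    """Throughput (frames/s, inferences/s, etc.) delivered per watt consumed."""
    if power_w <= 0:
        raise ValueError("power must be positive")
    return throughput / power_w


def perf_per_watt_advantage(
    a_throughput: float, a_power_w: float,
    b_throughput: float, b_power_w: float,
) -> float:
    """How many times better board A's performance per watt is than board B's."""
    return perf_per_watt(a_throughput, a_power_w) / perf_per_watt(b_throughput, b_power_w)


if __name__ == "__main__":
    # Placeholder numbers only: board A runs 100 frames/s at 8 W,
    # board B runs 50 frames/s at 10 W.
    print(perf_per_watt_advantage(100.0, 8.0, 50.0, 10.0))
```

Because the metric is a ratio of ratios, a board can win on performance per watt while losing on raw throughput, so it pays to measure both throughput and power on your own workload.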

So, if you’re looking for a more powerful way to control your next robot design, the AMD/Xilinx Kria KR260 Robotics Starter Kit is something to consider. Meanwhile, I am looking forward to seeing the Kria Embedded App Store grow. It’s a key element in the quest to make FPGAs more accessible to more designers.