I just heard some mega-exciting news from the folks at Cartesiam.ai, whose claim to fame is that they enable embedded developers to bring artificial intelligence (AI) and machine learning (ML) to edge devices easily and quickly.

How easily? How quickly? The answer to both of these questions is “Very!” So, what’s the exciting news? Well, I’ll tell you in a minute, but first…

It’s probably not escaped your notice that there’s a tremendous amount of discussion about the Internet of Things (IoT) these days. When we talk about the IoT, a lot of people think of things close to home, like smart thermostats and smart speakers. In reality, of course, this is just the tip of the IoT iceberg because connected devices are proliferating in automobiles, cities, factories… actually, it might be easier to write a list of places where the IoT doesn’t appear, but I can’t think of any such places off the top of my head.

How many IoT devices are there? I no longer have a clue. If you perform a Google search for “How many IoT devices are there in the world?” the responses vary from 7 to 50 billion, so let’s just say “a lot” and move on with our lives. Perhaps a more relevant question would be, “How many IoT devices do you think there are going to be in the world?” I can comfortably answer this one by saying “a lot more,” but I’ll be happy to refine this response if you are in possession of more accurate data.

Let’s focus on the Industrial IoT (IIoT), which refers to interconnected sensors, instruments, and other devices networked together with computers and industrial applications, including manufacturing and energy management. This connectivity allows for data collection, exchange, and analysis, potentially facilitating improvements in productivity and efficiency as well as providing other economic benefits.

If you take all of the factories and other industrial facilities around the world, it’s estimated they contain about 160 billion machines that, if they were asked and if they were sentient, would say that they just want to do a better job (if you doubt this number, you may feel free to go out there and count them; if you doubt their desire, you may feel free to ask them).

But how can these machines do a better job? The answer is to equip them with both IoT connectivity and AI/ML capabilities. The combination of AI/ML and the IoT — commonly referred to as the AIoT — is mutually beneficial. According to the IoT Agenda, AI adds value to the IoT through ML capabilities, while the IoT adds value to AI through connectivity, signaling, and data exchange. The result is to achieve more efficient operations, improve human-machine interactions, and enhance data management and analytics.

Let us further focus our attention on machine maintenance. As I noted in an earlier column — I Just Created my First AI/ML App! — there are various maintenance strategies available to the people in charge of industrial concerns, including reactive (run it until it fails and then fix it), pre-emptive (fix it before it even thinks about failing), and predictive (use AI/ML to monitor the machine’s health, looking for anomalies or trends, and guiding the maintenance team to address potential problems before they become real problems).

I think we are all agreed that adding AI/ML to embedded systems can be jolly efficacious, the only problem being that so few designers of embedded systems have an AI/ML clue. According to the International Data Corporation (IDC), there are currently around 22 million software developers in the world. Only about 1.2 million of these little scamps focus on embedded systems, and only about 0.2% of those little rascals (roughly 2,400 people) have even minimal AI/ML skills.

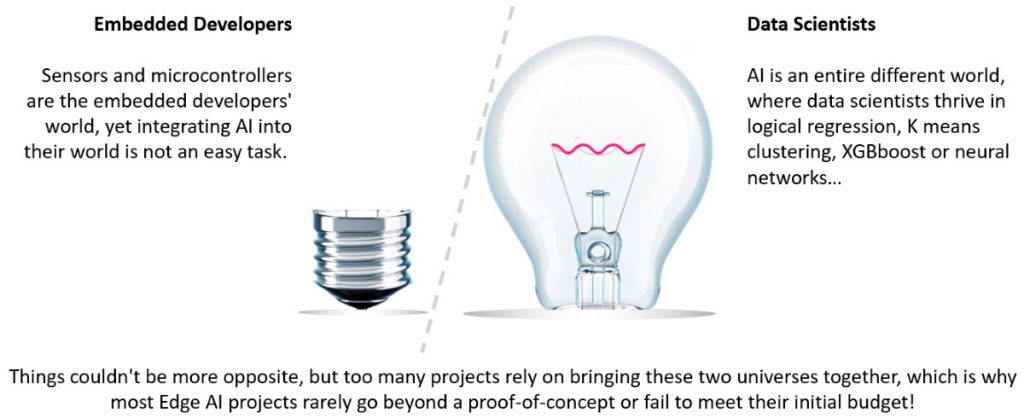

Embedded developers and data scientists live in different worlds (Image source: Cartesiam)

And so we return to the chaps and chapesses at Cartesiam, who are the proud creators of NanoEdge AI Studio. Just to refresh your memory, this is a program that runs on a PC. When you launch NanoEdge AI Studio, you can either open an existing project or create a new one. If you are creating a new project, you will be invited to answer a few simple questions, including the microcontroller you will be using (current options are Arm Cortex-M0, M0+, M3, M4, and M7), the number and types of sensors you wish to use (not specific part numbers, just general types like “3-axis accelerometer”), and the maximum amount of RAM you wish to devote to your AI/ML solution.

Preparing to create a new project in NanoEdge AI Studio (Image source: Cartesiam)

Next, you capture some sample data from the device in question, where this data includes both regular (good) and abnormal (bad) signals. Now, this is one of the clever parts because — using the information you’ve provided — NanoEdge AI Studio will generate the best AI/ML solution out of ~500 million possible combinations. The resulting solution, which is provided as a C library that is easily embeddable into your main microcontroller program, is subsequently compiled and downloaded into your embedded system.

Quite apart from anything else, the resulting libraries are almost unbelievably small. In the case of my own AI/ML app that I mentioned earlier, the library was only 2 KB, which boggled what little I have left of what I laughingly call my mind.

At this stage, what you have is a generic library. The next step is to train this library on a specific machine. One way to wrap your brain around this is as follows: suppose you purchase two essentially identical pumps for use in a factory. One pump might be mounted on a concrete floor in a warm, dry part of the factory, connected to whatever it’s pumping via short lengths of PVC pipe. The other might be mounted on a suspended floor in a cold, damp location, connected to whatever it’s pumping via long runs of metal tubing. In such a case, the two machines — although physically similar — may have very different operational characteristics in terms of noise and vibration, for example, which explains why each is best trained independently.

Of course, the really cool thing to note here is that you don’t have to create humongous training data sets yourself, because the library generated by NanoEdge AI Studio trains itself by monitoring the machine with which it is associated in action.
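To make the "learn on the machine, then watch for oddities" idea a little more concrete, here is a minimal sketch in Python. It is emphatically not Cartesiam's algorithm or API: the `ToyAnomalyDetector` class, the `train_on_pump` helper, and all of the numbers are hypothetical stand-ins I invented for illustration. The detector simply keeps a running mean and variance of "normal" readings and flags anything that strays too far from them.

```python
import math
import random


class ToyAnomalyDetector:
    """Toy stand-in for a self-training anomaly library (hypothetical, not NanoEdge AI)."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations (Welford's method)

    def learn(self, value):
        """Fold one normal-operation reading into the running statistics."""
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)

    def is_anomaly(self, value, threshold=4.0):
        """Flag readings more than `threshold` standard deviations from the learned mean."""
        if self.count < 2:
            raise ValueError("learn() needs at least two readings first")
        std = math.sqrt(self.m2 / (self.count - 1))
        if std == 0.0:
            return value != self.mean
        return abs(value - self.mean) / std > threshold


def train_on_pump(baseline, noise, seed):
    """Simulate a learning phase on one pump (invented vibration levels)."""
    rng = random.Random(seed)
    detector = ToyAnomalyDetector()
    for _ in range(500):
        detector.learn(rng.gauss(baseline, noise))
    return detector


# Two "essentially identical" pumps installed in very different surroundings.
pump_on_concrete = train_on_pump(baseline=1.0, noise=0.05, seed=1)
pump_on_suspended_floor = train_on_pump(baseline=3.0, noise=0.40, seed=2)

reading = 2.8  # the same vibration level observed on both pumps
print(pump_on_concrete.is_anomaly(reading))
print(pump_on_suspended_floor.is_anomaly(reading))
```

The same reading of 2.8 is wildly abnormal for the quiet pump on the concrete floor but perfectly ordinary for its noisier sibling on the suspended floor, which is the reason each instance is best trained on its own machine.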

The folks at Cartesiam are obviously doing something right. They first launched NanoEdge AI Studio on 25 February 2020. At that time, they had just one beta customer. Now, just nine months later as I pen these words, they have an enviable and ever-expanding portfolio of customers, including some very well-known names indeed.

It’s good to see an expanding portfolio of customers (Image source: Cartesiam)

I was particularly interested to see Bosch up there, because I recently used a 9DOF (nine degrees of freedom) Fusion breakout board (BOB) from Adafruit to control my 12×12 ping pong ball array (see this video). This BOB features a Bosch BNO055 MEMS device containing a 3-axis accelerometer, a 3-axis gyroscope, and a 3-axis magnetometer. Even better for a bear of little brain like yours truly, the BNO055 also contains a 32-bit Arm Cortex-M0+ that performs sensor fusion and presents results to you in a form you can use without your brains leaking out of your ears. But we digress…

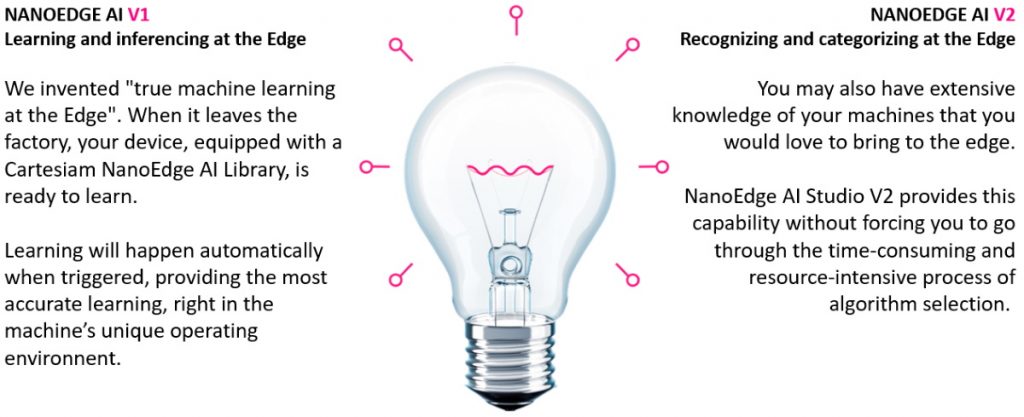

All of this brings us back to the point that, two days ago at the time of this writing, the folks at Cartesiam announced the latest release of NanoEdge AI Studio. In addition to being able to detect anomalies and spot trends, this new version allows you to create a classification library. In fact, you can now use multiple libraries in your solution.

NanoEdge AI Studio V2 allows your AI system to transition from “I’ve detected an anomaly” to “I’ve detected an anomaly and I know what is causing it” (Image source: Cartesiam)

What this means in the real world is that, instead of telling you things like “this dishwasher isn’t draining properly” or “this pump is about to stop working,” your AI system can now recognize and classify the problem and present you with actionable information like “this dishwasher isn’t draining properly because its main drive belt is showing signs of wear” or “this pump is about to stop working due to a failure of a ball bearing in the drive shaft assembly.”
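For anyone wondering what "classifying the problem" might look like in its simplest possible form, the following Python sketch uses a nearest-centroid classifier. This is a generic textbook technique that I am swapping in purely for illustration; I have no idea which algorithms NanoEdge AI Studio actually selects. The fault labels, the two features (vibration amplitude and motor temperature), and every number are invented.

```python
import math


def centroid(samples):
    """Average a list of equal-length feature vectors."""
    n = len(samples)
    return [sum(column) / n for column in zip(*samples)]


def classify(signature, centroids):
    """Return the label whose centroid is closest (Euclidean) to the signature."""
    return min(centroids, key=lambda label: math.dist(signature, centroids[label]))


# Invented (vibration amplitude, motor temperature) readings per fault class.
labelled_examples = {
    "worn drive belt": [[0.9, 40.0], [1.1, 42.0], [1.0, 41.0]],
    "failing ball bearing": [[2.9, 70.0], [3.1, 72.0], [3.0, 71.0]],
}
centroids = {label: centroid(samples) for label, samples in labelled_examples.items()}

print(classify([1.05, 43.0], centroids))
print(classify([2.8, 69.0], centroids))
```

An anomaly detector says only that something looks wrong. A classifier like this one, once it has been shown labelled examples of each failure mode, can also put a name to the most likely culprit.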

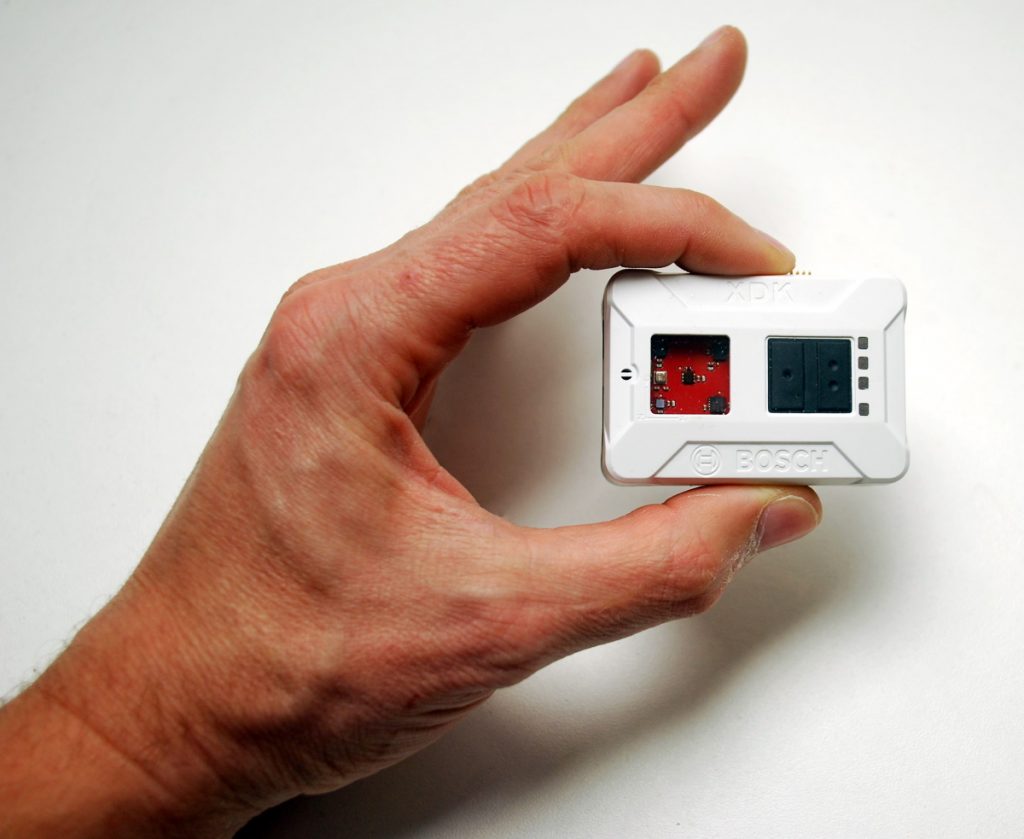

But wait, there’s more, because Cartesiam has also announced a new partnership with Bosch Connected Devices and Solutions, which plans to deploy Cartesiam’s platform in a wide range of internal and external projects. For example, the two companies are currently working closely on a NanoEdge AI Studio integration with the Bosch XDK, which might be described as “A programmable sensor device and prototyping platform for almost any IoT use case you can imagine.”

Meet the Bosch XDK (Image source: Cartesiam)

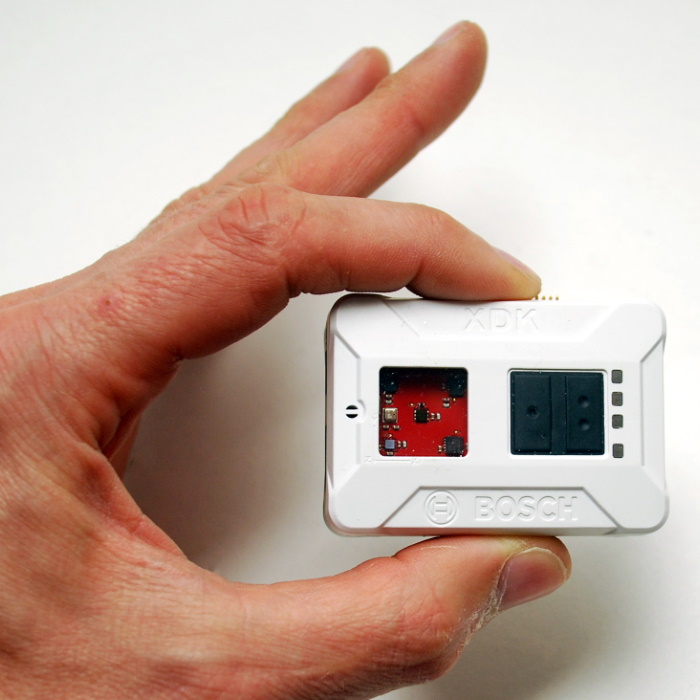

When I first saw the above image, I found it hard to determine a sense of scale. “Just how big is this little rascal?” I wondered. So, I asked one of the guys at Cartesiam to send me a picture of him holding one, and he responded with the image shown below.

Another view of a Bosch XDK (Image source: François de Rochebouët)

Wow! That’s a lot smaller than I first suspected. This is tiny enough to be useful in a tremendous variety of deployments, and it certainly has more than enough sensors to keep me busy for a while. I can see why Bosch and Cartesiam are combining forces on this — the combination of the XDK with NanoEdge AI Studio will open the floodgates to embedded systems developers, allowing them to quickly and easily prototype their own AI/ML-equipped edge devices for a wide variety of consumer, commercial, and industrial deployments. What say you? As always, I welcome your comments, questions, and suggestions.
