“Is this the party to whom I am speaking?” – Lily Tomlin

Amazon has been developing and using AI and IoT technology internally for years:

- Personalized recommendations and Daily Deals on Amazon.com

- Warehouse robot motion optimization in its fulfillment centers

- Drone flight control (Prime Air)

- Alexa’s natural language processing

- Amazon Go cashierless stores (“Let’s go shoplifting!”)

With years of AI and IoT experience under its belt, Amazon Web Services (AWS) now wants to be your preferred AI and IoT service provider. That was the underlying message in Satyen Yadav’s keynote presentation at the recent ARC Processor Summit held in San Jose, California. Yadav is General Manager for the IoT at AWS. His keynote presentation was titled, “Life on the Edge: AI + IoT (AIoT) driving intelligence to the Edge.” (Note the contraction of AI and IoT to AIoT. Just too cute, no?)

Yadav started with an overly simplistic description of why the industry is so interested in the IoT: “Connect everything to the Internet and good things will happen.”

A wing-and-a-prayer truism if there ever was one. But, “it hasn’t happened yet,” said Yadav.

However, the consulting firm McKinsey & Company predicts that the IoT will have an economic impact of $3.9 trillion to $11.1 trillion, whatever that means. This tremendously large number, somewhere in the low trillions, is the real reason that the electronics industry is IoT-happy. It’s nice that McKinsey has the resolution of that prediction down to the closest $100 billion, even though the range is about $7 trillion. Still, I think we’ll all agree that the IoT’s impact will be large, just as the economic impact of the Internet itself is large and growing.

Yadav focused this keynote on “the edge” so it’s important to understand what he meant by that. The edge of the network includes homes, cars, doctors’ offices, factories, farms, and even wind turbines. All of these locations are at the edges of their respective networks, and the evolution of the IoT in these locations will occur in three phases:

Phase 1: Connecting everything to the Internet. This is a hugely complicated task that will rely on many competing wireless technologies and communications protocol standards. Many of these connections will be accompanied by battery limitations and low-power considerations.

Phase 2: Programming the IoT-connected things to create easily updated IoT systems with enhanced functionality (i.e. adaptive behavior).

Phase 3: Automating these IoT systems so that they can improve their own operation by heuristic learning.

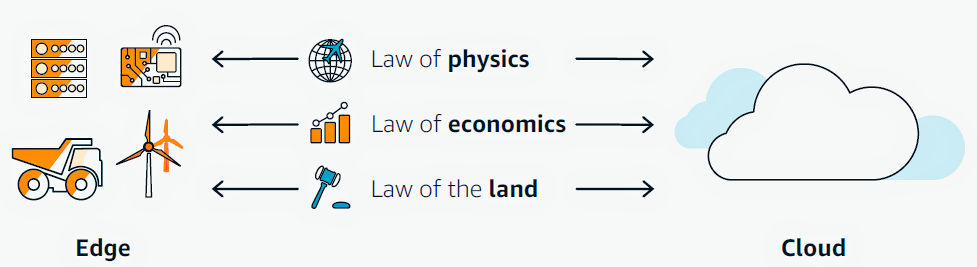

Three sets of laws drive edge computing, said Yadav:

The Laws of Physics: The speed of light is a constant, setting the minimum latency for IoT system response.

The Laws of Economics: Not all data has the same value. The data’s value determines what data stays local, what data goes to the Cloud, and what data is discarded.

The Laws of the Land: Many legal rules such as privacy laws, HIPAA, and inter-country boundaries determine where data can be sent and where it can be stored.

IoT system designs must follow all of these laws and, if you’re feeling Asimovian, you could call them the three laws of IoT or the three laws of edge computing.
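
The first of these laws lends itself to a quick back-of-the-envelope calculation. The Python sketch below is not from Yadav's talk; it simply computes the smallest possible round-trip time to a server at a given distance. The function name `min_round_trip_ms` and the `velocity_factor` parameter are illustrative, and the figure of roughly 0.67 for optical fiber is a commonly quoted approximation, not a measured value.

```python
# Speed of light in vacuum, in metres per second (exact by SI definition).
C_VACUUM_M_PER_S = 299_792_458


def min_round_trip_ms(distance_km: float, velocity_factor: float = 1.0) -> float:
    """Return the physical lower bound on round-trip time, in milliseconds.

    distance_km     -- one-way distance between the device and the server
    velocity_factor -- fraction of c at which the signal travels
                       (1.0 = vacuum; about 0.67 is an assumed, typical
                       figure for optical fiber)
    """
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    if not 0 < velocity_factor <= 1:
        raise ValueError("velocity_factor must be in (0, 1]")
    one_way_s = (distance_km * 1000) / (C_VACUUM_M_PER_S * velocity_factor)
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    for km in (1, 100, 1000, 5000):
        print(f"{km:>5} km: at least {min_round_trip_ms(km, 0.67):.2f} ms round trip in fiber")
```

Real networks add routing, queuing, and processing delays on top of this floor, which is why a control loop that needs a response within a few milliseconds has to make its decision at the edge rather than in a distant data center.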

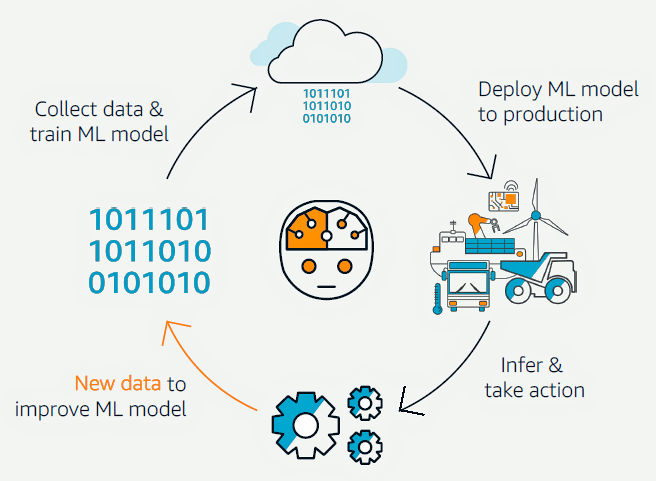

Combining AI or ML (machine learning) with the IoT involves a virtuous, constant-improvement cycle:

- Collect data and train the ML model.

- Deploy the trained model into the IoT/Edge system.

- Start making inferences based on the model and take actions based on those inferences.

- Take more data and feed the ML model to improve it.

- Lather, rinse, repeat.
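
The cycle above can be sketched in a few lines of plain Python. This is a toy illustration under loud assumptions, not AWS code: the "model" is nothing more than an alarm threshold (mean plus three standard deviations), and `train`, `EdgeDevice`, and `run_cycle` are hypothetical names invented for this example.

```python
from statistics import mean, pstdev


def train(readings):
    """'Cloud' step: fit a trivial model, an alarm threshold of mean + 3 sigma."""
    return mean(readings) + 3 * pstdev(readings)


class EdgeDevice:
    """Hypothetical edge node that infers locally and buffers raw data."""

    def __init__(self, threshold):
        self.threshold = threshold
        self.buffer = []

    def deploy(self, threshold):
        # Receive a freshly trained model from the cloud.
        self.threshold = threshold

    def infer(self, reading):
        # Local inference: no round trip to the cloud is needed to act.
        self.buffer.append(reading)
        return "alarm" if reading > self.threshold else "ok"

    def upload(self):
        # Hand the buffered readings back for the next training round.
        batch, self.buffer = self.buffer, []
        return batch


def run_cycle(history, batches):
    """Train, deploy, infer, collect more data, retrain; once per batch."""
    history = list(history)
    device = EdgeDevice(train(history))
    actions_per_batch = []
    for batch in batches:
        actions_per_batch.append([device.infer(r) for r in batch])
        history.extend(device.upload())
        device.deploy(train(history))
    return actions_per_batch


if __name__ == "__main__":
    print(run_cycle([20.0, 21.0, 19.0, 20.0, 20.0],
                    [[20.5, 19.5, 30.0], [22.0, 23.0, 22.5]]))
```

In a real deployment the training step would run on a cloud service and the inference step on the device, but the shape of the loop is the same: each pass through it leaves the edge device with a model trained on more data than the last.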

AI added to the IoT creates the AIoT, said Yadav. The need for such an intelligent system spans nearly all industries and applications including industrial, infrastructure, energy, retail, healthcare, manufacturing, transportation, mining, oil and gas exploration/development, smart homes and cities, and agriculture. These applications encompass a lot of “things,” embedded systems and larger, that can benefit from networking and edge computing. Yadav provided several explicit examples:

Agriculture (Grow food better, improve crop yield): control irrigation, provide environmental support (temperature and lighting control in greenhouses), use cameras in a greenhouse to determine the maturity of plant growth, track plant-growth cycles, measure soil health with networked sensors.

Example: Yanmar started as an engine manufacturer and now focuses on the challenges of food production and the use of energy for food production. One of Yanmar’s challenges is administering the right amount of water and environmental support, including fans and air conditioners, to produce optimum crop yield in greenhouses. The company plans to install as many as twelve cameras in each greenhouse to take pictures of the plants. It will then use ML algorithms with AWS Greengrass to recognize plant-growth stages and will analyze care factors (including watering patterns and frequency, moisture, and temperature) to heuristically develop optimum growth settings for each stage of plant development.

Energy (Use energy better): Reduce operating costs and improve customer satisfaction through effective energy use with less energy waste.

Example: Enel, an Italian multinational manufacturer and distributor of electricity and gas, serves 61 million customers. The company is planning to use AWS Greengrass in the network gateways to more than one hundred thousand nodes to collect both the electrical meter data and the data from secondary measurements and environmental sensors and to offer customers incentives for timed energy use. By using AWS Greengrass at the edge, Enel saves 21% on compute costs and 60% on storage costs, and it has reduced provisioning time from four weeks to two days.

Healthcare (Treat patients better): Reduce the bottlenecks and errors in the medical supply chain through connected systems.

Example: M3 Health uses AWS IoT 1-Click with Ping to connect healthcare providers to pharmaceutical and biotech companies. Requests for service, such as fulfillment of prescription drug samples, can be initiated at the touch of a button. (This service seems to have been derived from Amazon’s Dash buttons, originally designed to help consumers order staples like detergent and diapers by pressing one button on a WiFi-connected device.)

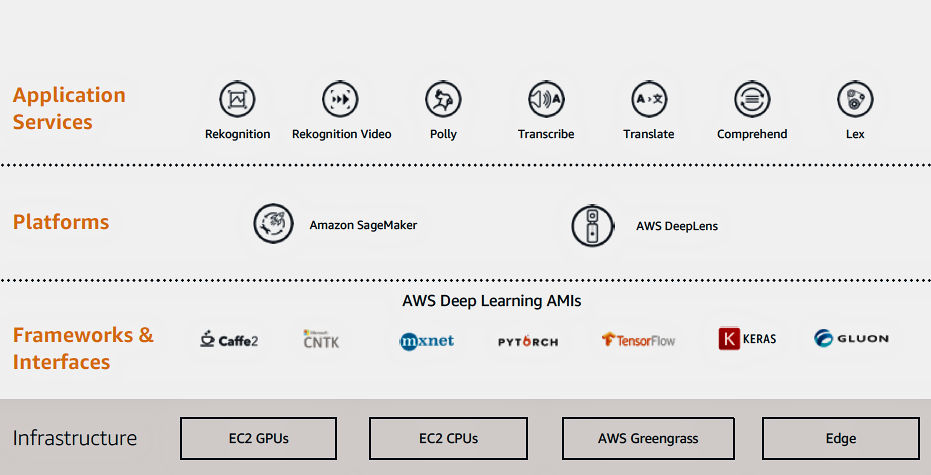

Amazon has taken a layered approach in creating its AI/ML-enhanced, edge-computing services, as shown below:

The foundation of this layer stack consists of AWS’ computing instances including AWS EC2 CPUs and GPUs, as well as AWS Greengrass, which provides local compute, messaging, data-caching, sync, and ML inference capabilities for connected devices. AWS Greengrass runs IoT applications “seamlessly” across the AWS cloud and on local devices using AWS Lambda and AWS IoT Core.

Atop this Amazon-designed infrastructure layer are the familiar AI/ML frameworks including Caffe2, MXNet, PyTorch, TensorFlow, and Gluon. These are the tools that AI/ML experts strongly prefer and use. However, AWS intends its services to be used by non-experts as well, so it has developed more layers to sit over the frameworks.

Two AWS “platforms” sit directly above the framework layer: Amazon SageMaker and DeepLens. SageMaker is based on Jupyter Notebooks and is designed to allow ML network training from captured data with a single click.

DeepLens is a $249 deep-learning development kit and video camera that you can order directly from Amazon.com. Amazon’s DeepLens description claims that this kit “allows developers of all skill levels to get started with deep learning in less than 10 minutes.” If so, that’s a low-cost, extremely quick way to get started with deep learning.

Amazon offers DeepLens with at least half a dozen sample projects to start with, including object detection, face detection and recognition, activity detection (think security cameras and baby monitors), cat versus dog, and “hot dog/no hot dog.” (You might be hard-pressed to come up with practical applications for those last two sample projects unless you have a “Der Wienerschnitzel” fast-food franchise.)

There’s yet one more layer to this AI/ML-enhanced, edge-computing stack: an application layer. Amazon has developed off-the-shelf AI/ML applications including:

Rekognition and Rekognition Video: Provide an image (Rekognition) or video (Rekognition Video) to the Rekognition API, and the service can identify the objects, people, text, scenes, and activities, as well as detect any inappropriate content.

Polly: Converts text into “lifelike speech,” allowing you to create applications that talk, and to build entirely new categories of speech-enabled products.

Transcribe: A speech-recognition service that makes it “easy” for developers to add speech-to-text capability to their applications.

Translate: A machine translation service that delivers “fast, high-quality, and affordable” language translation.

Comprehend: A natural language processing (NLP) service that uses machine learning to find insights and relationships in text.

Lex: A service for building conversational interfaces into any application using voice and text.

Unless you’ve been avoiding the use of the Internet for the last decade or so, you’ll recognize these AI/ML services as useful applications that Amazon originally developed for its online retail sales, including Alexa’s cloud-based guts. The company is now intent on retasking and monetizing these applications more directly by offering them as pay-as-you-go services to the broader IoT (or AIoT) development community.

And why not? Like AWS cloud computing, these services are likely to look like real bargains considering what it would cost for you to develop them to the level of refinement that Amazon needs to coddle its customers and turn them into reliable, frequent, repeat buyers. It seems like a win/win for both Amazon and the IoT development community.

According to Yadav, AWS already has a long and growing customer list for these AWS IoT services. The slide he projected during his keynote included more than 50 company logos. Many were company names you would not associate with IoT development, including Amway, ExxonMobil, MLB (Major League Baseball), MillerCoors, Nestle, Under Armour, and Warby Parker (eyeglasses).

It’s clear that Amazon and AWS have chosen a very big pond here. Perhaps a multi-trillion-dollar pond if we’re to believe McKinsey.
