I fear I am becoming befuddled, bewildered, and bemused. It seems like every day I get to chat with a new company (well, a company new to me) that’s involved with artificial intelligence (AI) and machine learning (ML) in some capacity or other. Some of these companies focus on the cloud, while others target the edge. Some devote themselves to the software side of things, like developing new generative AI tools, while others turn their attentions to creating hardware—chips, modules, and systems—that can execute AI/ML applications at lightning speed.

One thing that really takes my breath away is the speed with which things are moving in the AI/ML space (where no one can hear you scream). Things seemed to amble along at a much more leisurely pace in those days of yore when I wore a younger man’s clothes. Now, by comparison, innovative new startups seem to sprout like mushrooms, transitioning from “two people in a garage” to a fully-fledged company shipping fully developed products in the blink of a metaphorical eye.

As a prime example, may I offer Hailo, which started off in 2017. Only six years later, as I pen these words, the Hailo team is 220 persons strong (well, it was when I started writing this column—I have no idea how much the team has grown since then) and they are shipping edge AI processors and vision processing units (VPUs) with gusto and abandon.

Meet the Hailo team (Source: Hailo)

I was just chatting with Avi Baum, who is Hailo’s CTO. As Avi says, “the edge” is a bit of an elusive term these days but—as far as Hailo is concerned—the edge is anything that is not cloud based (I like a man who doesn’t let himself get pushed into a corner). Examples span from something as small as a camera to something as large as a car.

Right from the get-go, Hailo’s philosophy with respect to AI/ML applications was not to attempt to modify what runs on the cloud, but rather to enable applications that run on the cloud to be available for deployment on edge devices.

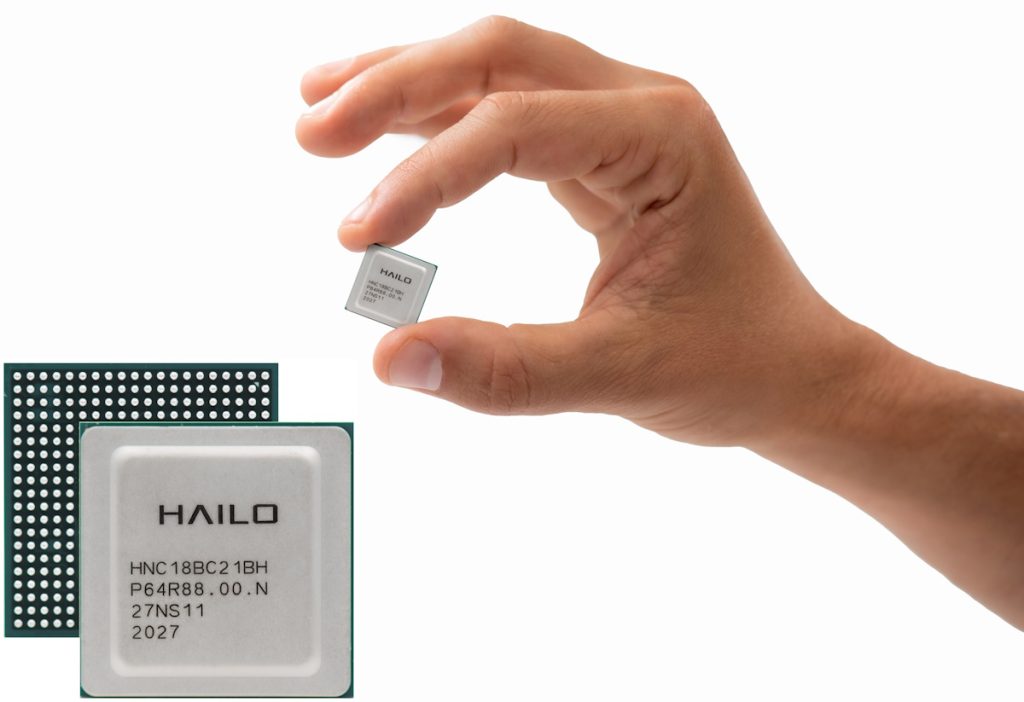

Artificial neural networks (ANNs) have their own way of being processed. Traditional processors can perform this processing, but they aren’t very good at it in terms of speed and power, so the guys and gals at Hailo decided to create their own ANN processing architecture from scratch. The result is the Hailo-8, which is implemented using the TSMC 16nm process node and acts as an AI/ML accelerator to a regular CPU. Just as a graphics processing unit (GPU) is a graphics add-on, so a Hailo-8 is an AI add-on. This means that, with Hailo-8, you still require a main processor, such as an Arm or x86 processor, that is running traditional code in the form of a rule-based application.

Meet the Hailo-8 AI/ML accelerator (Source: Hailo)

Avi is keen to note that having this form of AI/ML accelerator to perform the “heavy lifting” is only part of the picture. As he says, this comes hand-in-hand with the assumption that Hailo must build and supply the software tool chain, so their users don’t have to do it themselves and they don’t have to learn a new machine architecture. I must agree that this is where I’ve seen a lot of startups drop the ball—they created some mega-cool hardware that no one could use effectively due to lack of supporting software.

I’m afraid I was a little naughty at this point, because I pointed out to Avi that I’ve talked to quite a few companies dipping their toes in the edge AI/ML waters who have told me how wonderful they are. I asked how Hailo differentiates itself. Avi responded that “the proof is in the pudding,” which is an expression I often use myself.

First, Avi noted that Hailo is among the few companies in this arena that have made it full cycle—all the way from conceptualization to full productization. In his own words: “Many startups drop the ball along the way from concept through design to product to volume production.” By comparison, Hailo-8 is in volume production and deployed in multiple products from multiple customers.

Avi followed up by saying that, as far as he’s aware, Hailo-8 is the most efficient edge AI/ML processor on the market today, and that there’s no other processor at this operational point. The Hailo-8 offers 26 tera operations per second (TOPS), running at about 3 TOPS per watt (all the benchmarks comparing Hailo-8 to competitive offerings are available on Hailo’s website).
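For anyone who likes to see the arithmetic, here is a back-of-the-envelope sketch of what those two figures imply when taken together. The function name and the idea of simply dividing one number by the other are my own assumptions; this is not a Hailo specification, and real-world power will depend on the network being run and how hard the device is being pushed.

```python
# Figures quoted in the column; the division below is only a rough estimate.
PEAK_TOPS = 26.0       # peak throughput quoted for one Hailo-8
TOPS_PER_WATT = 3.0    # approximate efficiency quoted for the Hailo-8


def implied_power_watts(tops: float, tops_per_watt: float) -> float:
    """Return the power (in watts) implied by a throughput and an efficiency figure."""
    if tops_per_watt <= 0:
        raise ValueError("tops_per_watt must be positive")
    return tops / tops_per_watt


# Hypothetical helper applied to the quoted numbers.
print(f"Implied power at peak: {implied_power_watts(PEAK_TOPS, TOPS_PER_WATT):.1f} W")
```

In other words, if both figures applied at the same operating point, the device would be sipping somewhere under 9 watts at full tilt.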

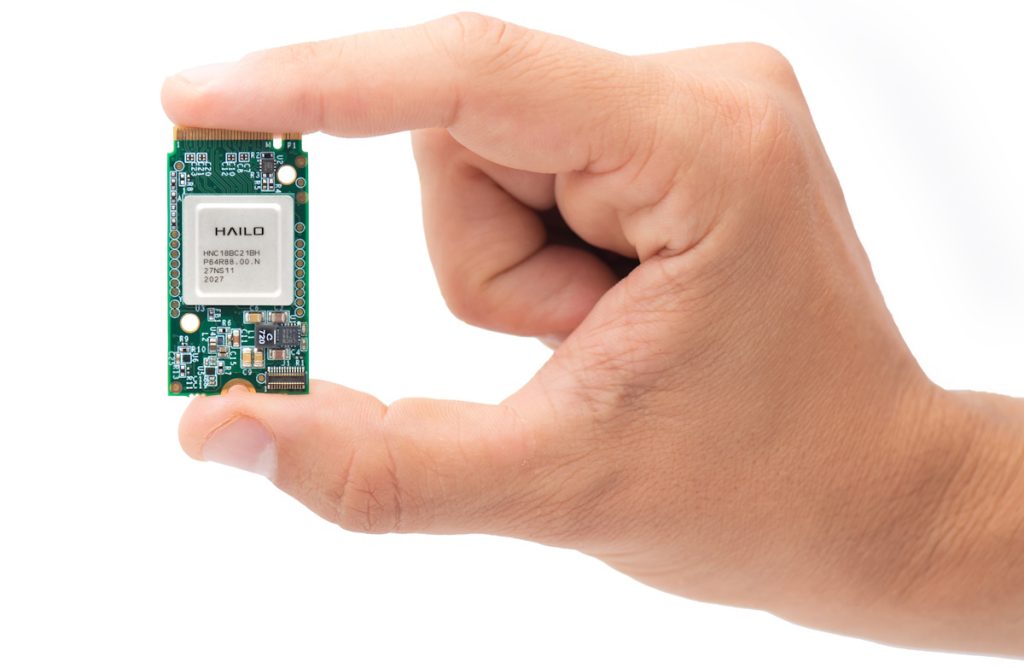

Hailo-8 AI/ML accelerators are available as standalone devices, on M.2 cards, and—recently announced—on Hailo’s Century PCIe card line. In this case, Century cards are available with 2, 4, 6, or 8 Hailo-8 devices, thereby offering anything from 52 to 208 TOPS.
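The 52 and 208 TOPS figures fall straight out of multiplying the number of devices on a card by the 26 TOPS quoted for a single Hailo-8. A minimal sketch follows, assuming peak throughput scales linearly with device count (the names used here are purely illustrative, and sustained performance in a real system may well differ):

```python
# Peak throughput quoted for a single Hailo-8 device.
TOPS_PER_DEVICE = 26

# Device counts offered on the Century PCIe cards, as listed above.
DEVICE_COUNTS = (2, 4, 6, 8)


def card_peak_tops(num_devices: int) -> int:
    """Aggregate peak TOPS for a card, assuming linear scaling per device."""
    return num_devices * TOPS_PER_DEVICE


# Map each card configuration to its aggregate peak throughput.
card_tops = {n: card_peak_tops(n) for n in DEVICE_COUNTS}

for n, tops in card_tops.items():
    print(f"{n} x Hailo-8 -> {tops} TOPS")
```

This gives 52, 104, 156, and 208 TOPS for the four configurations.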

A single Hailo-8 AI/ML accelerator presented on an M.2 card (Source: Hailo)

According to Hailo, the Century PCIe cards deliver best-in-class power efficiency at 400 FPS per watt on the ResNet50 benchmark model. They are also said to offer the highest cost efficiency, starting at $249 for the 52 TOPS card, which represents up to a 70% reduction in edge AI/ML deployment costs. Furthermore, they feature a robust design that supports industrial temperature ranges, making them suitable for a wide range of environments and applications.

But wait, there’s more, because the chaps and chapesses at Hailo have taken their Hailo-8 technology and combined it with a CPU, resulting in the Hailo-15 vision processing unit (VPU). The result is a device with the form factor of a regular CPU that combines the traditional functionality of an ARM-based processor with neural network offloading, all in a single chip.

Meet the Hailo-15 vision processing unit (Source: Hailo)

These little scamps (by which I mean Hailo-15s, not the folks at Hailo) are destined to address deep edge devices with the form factor of a camera, for example.

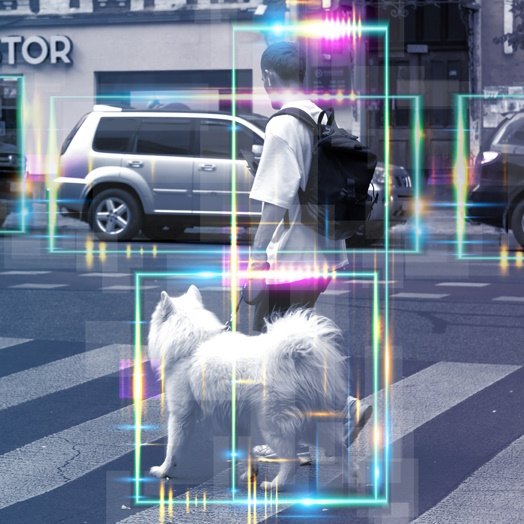

The breadth of markets that can be addressed by these devices and cards is mind-boggling. I’m thinking cars, robots, smart security cameras and surveillance systems, smart homes and smart cities, industrial applications, and… the list goes on.

I don’t know about you, but I must admit to being jolly impressed. For a startup company to be able to transition from concept to volume production of SoCs (both AI/ML accelerators and VPUs) and cards (both M.2 and PCIe form factors) is tremendously inspiring. I’m excited to see what they come up with next. How about you? What are your thoughts on all of this?