It’s midnight; do you know what your IoT device is doing right now?

You do all the right things when designing your gadget: you do extensive verification; you build in some communication security stuff for secure connections; you even disable the debug port when not in use by an authorized repairperson. But… after you ship it, how do you know that it’s not being compromised?

The answer may be that you don’t know; you just have faith, based on the safeguards you built in, that no one has been able to penetrate the fortress. But what if there was a chink in the wall that you didn’t think of? What if someone is in your system right now plotting world domination and/or ruination?

Without specific added safeguards, you can’t say for certain that this isn’t happening. (OK, fair enough, nothing is certain in this world… yes, it all comes down to the number of 9s: how many are you good for?)

If you have the system BOM budget for it, you can include some sort of hardware security ID facility. Computers do this with a Trusted Platform Module, or TPM. If you have a credit card or other small item that absolutely requires security, you may have a Secure Element, or SE. Yeah, kind of the same as a TPM, but, since TPMs are associated with bulky computers, small-gadget folks prefer to use a different name.

But these are extra pieces of hardware. They take space and energy, and they cost money. There are numerous smart (or getting-smart) widgets that have little money, power, or space budget to spare. So what can they do?

Software Device Identity

That’s where the Device Identifier Composition Engine, or DICE, comes in. (For once, the correct use of the plural of “die,” only not used as such… <le sigh…>) This is a methodology put forth by the Trusted Computing Group, from whom comes the TPM. The idea is that you don’t have to have dedicated hardware to know that your system hasn’t been compromised.

This topic hit my radar based on a conversation with Microchip at the IoT Security DevCon back in June. They had a microcontroller, the CEC1702, that had been Azure-approved, with secure boot capabilities using DICE, among other things. So I dug in a little to see what this is all about.

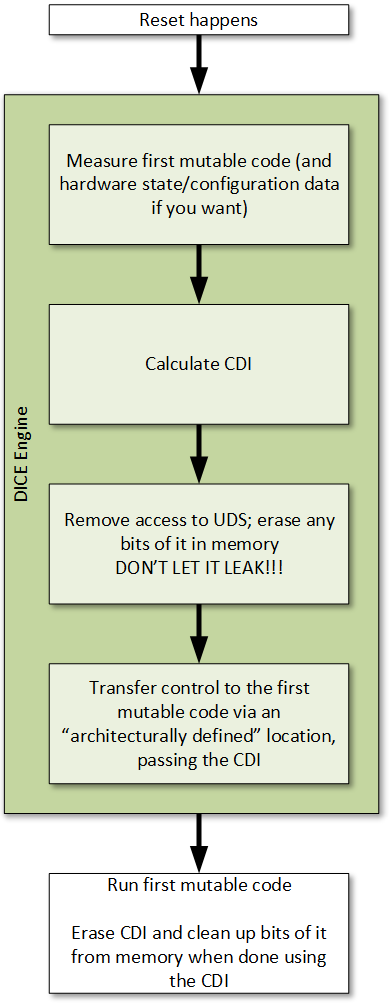

DICE applies to boot-up. It helps to prove – or at least to assure – that your system hasn’t been monkeyed with since you booted up last. It’s part of the Robust IoT (RIoT) architecture proposed by Microsoft. If malware is inserted after boot, then it won’t be detected until the next boot – assuming that the malware is persistent. If it’s not persistent, then DICE doesn’t appear to deal with it.

There are a few notions to sort out here. The first is that of a Unique Device Secret, or UDS. What is this? Well, it’s a secret, so we really can’t tell you. Oh well, OK, since you insist… we actually don’t know because DICE doesn’t specify how that secret is stored or retrieved. You might have a key stored in ROM – weak if it’s the same key for each device. Or you might use a PUF (which we’ll be talking more about shortly). The point is, you can do what makes most sense for your system and your price point.

Critical here is UDS accessibility. The UDS should be accessible only during boot and only by authorized code, and that code should then be able to shut down access to the UDS until the next boot. If the UDS leaks, the security it provides is gone. Yes, we’ll clarify this after the remaining notions.
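One way to picture that access rule is a read-until-locked latch. Here is a minimal Python sketch of the idea. The `UdsLatch` class and its method names are hypothetical, standing in for whatever hardware mechanism (a lockable register, fuses, a PUF) a real part might use. DICE itself doesn’t dictate any of this.

```python
import secrets


class UdsLatch:
    """Hypothetical model of a UDS that is readable only until it is locked."""

    def __init__(self, uds: bytes):
        self._uds = uds
        self._locked = False

    def read(self) -> bytes:
        if self._locked:
            raise PermissionError("UDS is locked until the next reset")
        return self._uds

    def lock(self) -> None:
        # In hardware this would be a sticky bit that only a reset clears.
        self._locked = True

    def reset(self) -> None:
        # Models a reboot: access is re-enabled for the boot code.
        self._locked = False


latch = UdsLatch(secrets.token_bytes(32))  # random stand-in secret
uds_copy = latch.read()  # allowed: we are still "in boot"
latch.lock()             # boot code closes the door behind itself
```

After `lock()`, any further `read()` raises `PermissionError` until `reset()` is called, which is the behavior the paragraph above asks for.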

The next notion is that of immutable vs. mutable code. As the terms suggest, immutable code can’t be changed. But what does that mean specifically? The DICE spec says that either the code cannot be changed ever after manufacturing, or, alternatively, it can be changed or updated only by some manufacturer in the supply chain.

Most code is not immutable. In fact, in our context, we’re talking about boot code. The first code executed must be immutable, after which we start executing regular, or mutable, code that’s still part of the reset sequence. And DICE makes reference to the first such program to be executed: the first mutable code.

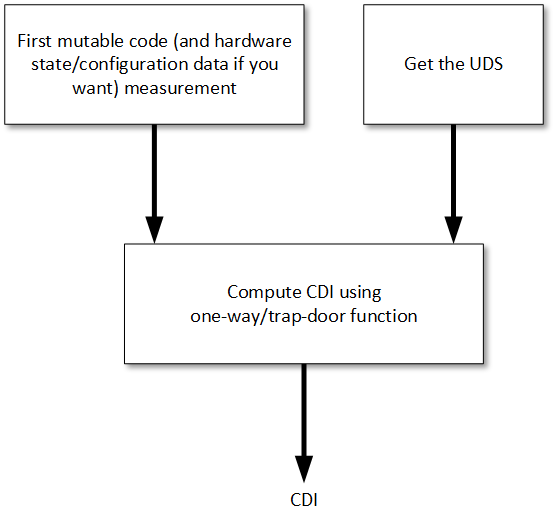

Our third notion is the Compound Device Identifier, or CDI. This is a combination of the UDS and a hash of the first mutable code. What combination might that be? Again, not specified, because it really doesn’t matter – as long as there’s no way to retrieve the UDS from the CDI. Two examples are shown below (where H() indicates a hash, HMAC(K, m) is a keyed hash of a message m with a key K, and || indicates concatenation):

H(UDS||H(first mutable code))

HMAC(UDS, H(first mutable code))

But, if you have a better way to do it, great. You just have to use a so-called one-way function: one that is easy to compute in one direction but computationally infeasible to reverse. As an option, you can include the hardware state and any configuration data in the first-mutable-code hash.
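To make the two example formulas concrete, here is a small Python sketch using only the standard library. It assumes SHA-256 as H and HMAC-SHA-256 as the HMAC, which is my choice for illustration rather than anything the article mandates. The UDS and code bytes are placeholders.

```python
import hashlib
import hmac


def cdi_hash_concat(uds: bytes, first_mutable_code: bytes) -> bytes:
    """CDI = H(UDS || H(first mutable code)), with SHA-256 as H."""
    code_digest = hashlib.sha256(first_mutable_code).digest()
    return hashlib.sha256(uds + code_digest).digest()


def cdi_hmac(uds: bytes, first_mutable_code: bytes) -> bytes:
    """CDI = HMAC(UDS, H(first mutable code)), with HMAC-SHA-256."""
    code_digest = hashlib.sha256(first_mutable_code).digest()
    return hmac.new(uds, code_digest, hashlib.sha256).digest()


uds = bytes(range(32))                 # placeholder secret, illustration only
code = b"first mutable code image v1"  # placeholder firmware bytes

cdi_a = cdi_hash_concat(uds, code)
cdi_b = cdi_hmac(uds, code)
```

The two constructions give different CDIs for the same inputs, which is fine: a device just has to pick one and stick with it. Either way, a single changed byte in the code yields an unrelated CDI.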

Booting Securely

The idea is that this CDI can then be handed to the first mutable code for it to use. How will that code use the CDI? Again, that’s not specified; you’re free to do with the CDI what you will. But there are some important considerations.

The first mutable code is still part of the boot process. So it’s where you can do things like decrypting code or checking the authenticity of other code. But it has to do something to ensure that the system can’t operate correctly if the first mutable code has been monkeyed with.

One obvious way to attempt some protection would easily be thwarted. Let’s say that, upon installation, you compute and store the CDI. Now, with each boot, you compare the newly calculated CDI against the stored one, and, if it passes, you simply start the next boot steps. Well, all an attacker would have to do would be to replace the entire first mutable code with something that doesn’t check the CDI, and now you have no protection.

If, on the other hand, the first mutable code then decrypts the following code using the CDI, then the attacker can’t replace the first mutable code outright – because it won’t be able to decrypt the next code, and the boot-up can’t continue.
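Here is a rough Python sketch of why that works. Everything in it is an assumption made for illustration: the helper names are hypothetical, and the “cipher” is a toy SHA-256 keystream with an HMAC tag, standing in for a vetted authenticated cipher (AES-GCM or similar) that a real design would use.

```python
import hashlib
import hmac


def derive_key(cdi: bytes, label: bytes) -> bytes:
    # Derive purpose-specific keys so the raw CDI is never used directly.
    return hmac.new(cdi, label, hashlib.sha256).digest()


def xor_keystream(key: bytes, data: bytes) -> bytes:
    # Toy counter-mode keystream built from SHA-256; NOT a vetted cipher.
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))


def seal(cdi: bytes, plaintext: bytes) -> bytes:
    ciphertext = xor_keystream(derive_key(cdi, b"enc"), plaintext)
    tag = hmac.new(derive_key(cdi, b"mac"), ciphertext, hashlib.sha256).digest()
    return tag + ciphertext


def unseal(cdi: bytes, blob: bytes) -> bytes:
    tag, ciphertext = blob[:32], blob[32:]
    expected = hmac.new(derive_key(cdi, b"mac"), ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong CDI: next stage cannot be decrypted")
    return xor_keystream(derive_key(cdi, b"enc"), ciphertext)


def cdi_for(uds: bytes, code: bytes) -> bytes:
    return hmac.new(uds, hashlib.sha256(code).digest(), hashlib.sha256).digest()


uds = bytes(range(32))  # placeholder secret
good_cdi = cdi_for(uds, b"genuine first mutable code")
evil_cdi = cdi_for(uds, b"attacker's replacement code")

blob = seal(good_cdi, b"next-stage firmware")  # done once, at provisioning
```

With the genuine code in place, `unseal(good_cdi, blob)` returns the next stage. Swap in the attacker’s code and the engine hands over `evil_cdi` instead, so `unseal` raises and the boot stops there.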

But what if other code is compromised? Well, the first mutable code could run checks on hashes of other applications as well. But this is all part of the “you’re on your own” nature of this spec. The first mutable code – as long as it catches changes to itself – can handle all other attestations. That’s why it’s so important to make sure that it’s protected – the role of the CDI.
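As one possible shape for those checks, here is a hedged Python sketch in which the first mutable code compares each later image against a table of expected SHA-256 hashes. The manifest format and the function names are hypothetical. The sketch also assumes the manifest is embedded in the first mutable code itself, so that tampering with the manifest would change the CDI.

```python
import hashlib
import hmac


def measure(image: bytes) -> bytes:
    return hashlib.sha256(image).digest()


def verify_images(images: dict, manifest: dict) -> list:
    """Return the names of images whose hash is missing or doesn't match."""
    failures = []
    for name, image in images.items():
        expected = manifest.get(name)
        if expected is None or not hmac.compare_digest(measure(image), expected):
            failures.append(name)
    return failures


# Expected hashes, recorded when the firmware was built (placeholder data).
manifest = {"app": measure(b"app v1"), "net": measure(b"net v1")}

# What is actually found in flash at boot; "net" has been tampered with.
images = {"app": b"app v1", "net": b"net v1 + implant"}

bad = verify_images(images, manifest)
```

Here `bad` lists only the tampered `net` image. What to do about a failure (halt, fall back to a recovery image, report it) is, again, up to you.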

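The process, then, for boot-up looks something like this: the immutable DICE engine reads the UDS, hashes the first mutable code, combines the two into the CDI, shuts off access to the UDS, and hands the CDI to the first mutable code.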
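As a rough Python sketch of that sequence, pieced together from the notions above: the immutable engine reads the UDS, measures the first mutable code, derives the CDI, and shuts off the UDS before handing over control. The `dice_boot` function and the dictionary-based “hardware” are hypothetical stand-ins, and HMAC-SHA-256 is just one of the allowed combinations.

```python
import hashlib
import hmac


def dice_boot(read_uds, lock_uds, first_mutable_code: bytes) -> bytes:
    """Immutable DICE step: measure, derive the CDI, then close off the UDS."""
    uds = read_uds()
    measurement = hashlib.sha256(first_mutable_code).digest()
    cdi = hmac.new(uds, measurement, hashlib.sha256).digest()
    lock_uds()  # no later code can read the UDS until the next reset
    del uds     # drop our reference (real firmware would also scrub memory)
    return cdi  # this is all the first mutable code ever gets to see


# Tiny stand-ins for the hardware, for illustration only.
state = {"uds": bytes(range(32)), "locked": False}


def read_uds() -> bytes:
    if state["locked"]:
        raise PermissionError("UDS locked")
    return state["uds"]


def lock_uds() -> None:
    state["locked"] = True


cdi = dice_boot(read_uds, lock_uds, b"first mutable code image")
```

From here on, the first mutable code holds only the CDI. Any attempt to call `read_uds()` again fails until the next reset.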

Mutability and Updates

The DICE engine is supposed to be immutable; the first mutable code is, well, <duh!>, mutable. What are the practical implications? There are two key things:

- Making the DICE engine updatable is a vendor option. But if you do change the engine, it can’t result in a new CDI. The CDI calculated by the old engine must be the same as that calculated by the updated engine.

- It’s the exact opposite with updates to the first mutable code. Since that code figures in the CDI, changes to the code will – indeed, must – result in a new CDI.

For this reason, if the CDI accidentally gets leaked, you can fix it by updating the code (changing it in some minor way that results in a new hash, even if nothing functionally changes). That’s a pain for the manufacturer to have to do, so there’s still plenty of motivation to protect the CDI. But it marks the key difference from the UDS: if the UDS is leaked, there’s no way to unleak it; if the CDI is leaked, you can unleak it via the update.
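A quick Python illustration of that “unleak,” assuming the HMAC-style CDI from earlier and placeholder bytes for the secret and the code images:

```python
import hashlib
import hmac


def cdi(uds: bytes, code: bytes) -> bytes:
    return hmac.new(uds, hashlib.sha256(code).digest(), hashlib.sha256).digest()


uds = bytes(range(32))                 # placeholder secret; never changes
code_v1 = b"first mutable code image v1"
code_v1_rebuilt = code_v1 + b"\x00"    # same behavior, one padding byte added

leaked_cdi = cdi(uds, code_v1)
fresh_cdi = cdi(uds, code_v1_rebuilt)  # what the device derives after the update
```

The leaked value tells an attacker nothing about `fresh_cdi`, because the UDS stays secret. The flip side is that anything you tied to the old CDI (encrypted code, derived keys) has to be redone against the new one.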

Hopefully, then, this provides a more cost-effective way to trust your cost-and-security-sensitive IoT device.
