It’s almost obvious that this would be a problem. Well… part of it is obvious; part not so much.

What do accelerometers do? They measure acceleration – including periodic acceleration, more commonly known as vibration. Any vibration within the designed frequency range is subject to detection.

And what is the most prevalent kind of vibration? Sound. And what’s one really popular way to enjoy sound? Music. Music is sound is vibration. Which can be measured by an accelerometer. (By that measure, you can think of a microphone as a specialized accelerometer…).

So really, then, it should come as no surprise that, if you take an accelerometer that’s placidly going about its business measuring some mundane form of acceleration and then blast it with Led Zeppelin at lots of dBs, you’re going to get a response from the accelerometer.

Most likely, you’d expect just a bunch o’ noise in that accelerometer’s response. (It’s almost like it was designed by those grandparents that kept telling you that rock and roll was “just a bunch o’ noise.”) So – loud noise as a jamming signal? Yeah, it doesn’t take too much imagination to accept that that might happen. Another study a couple of years ago caused a drone to crash thanks to blaring speakers confusing gyroscopes. It’s not necessarily good if it happens, but the specific result – and its severity – depends on what application you’re jamming.

But here’s what’s not so obvious: embedding a malicious audio signal in music such that you literally control the accelerometer response and make it do something. No way that’s possible, right?

Wrong. It’s been done.

A team from the University of Michigan and the University of South Carolina acquired a bunch of accelerometers and tested them – and many were vulnerable. That’s not to say that any part they didn’t test is safe; the list of vulnerable parts simply reflects what they happened to test.

They didn’t look at the accelerometers only in isolation; they also tested complete systems that used accelerometers.

- They acquired a remote-control (RC) toy car that uses a phone app as a remote control. On that phone, they played music with embedded “instructions” to change the direction of the car. It worked. (Note that, technically, this “crosstalk” between the music app and the RC car app apparently violates Android’s app separation rules. Who knew!)

- Fitbit provides rewards for crossing step-count thresholds. The team played the resonant frequency of the accelerometer for about 40 minutes and earned 2100 steps – without lifting a foot. (That qualified them for a reward, but they decided that collecting the reward would be unethical, so they didn’t.)

This isn’t, “Wow, here’s a vulnerability, and, if the moon and stars align properly and you hold your mouth just right, something could happen!” This is, “Wow… that just happened.”

“Insecure” Hardware

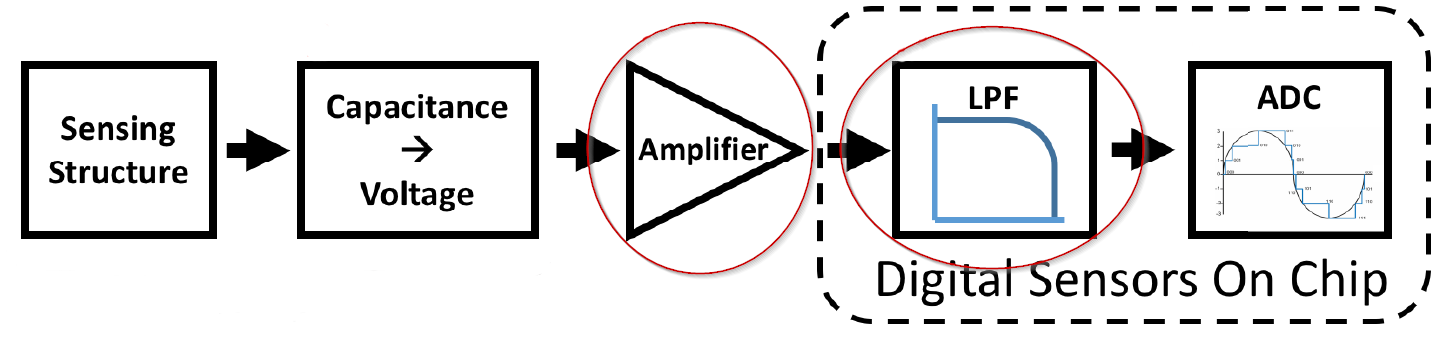

So what’s going on here? Their unusually readable report breaks the issues down with great detail, which I’ll summarize here. There are actually two culprits in two different styles of attack, in accordance with the typical sensor architecture as shown in the following image. The amplifier and low-pass filter (LPF) are where we’re going to focus (highlighting ellipses are mine).

Image courtesy the research team (full citation at bottom)

Summarizing the setup, the sensor mechanism (typically MEMS) converts the motion into voltage signals by means of capacitors. That signal is amplified and then run through an LPF to get rid of signals outside the frequency range of interest; thence it is converted to digital for output.

Which brings up a critical point: this whole discussion is particular to digital accelerometers, not ones with analog outputs. (That’s not to say that there might not also be games to be played with the analog ones, but that’s not what this is about.)

Amplifier: If the signal sent to the amp is too strong – that is, it exceeds the dynamic range of the amp – then it will clip. And it may clip asymmetrically, which shifts the average value of the signal. The LPF strips out the high-frequency content but passes that average, so the result is a DC offset out of the LPF. The amount of clipping sets the offset voltage.

They refer to a “secure” amp as one that can handle the full signal; one that can’t is considered “insecure.” Creating an amp that can detect delicate signals while still having the headroom to handle a signal at resonance isn’t necessarily easy…
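To make the clipping effect concrete, here is a minimal Python sketch (mine, not the research team’s). It clips one cycle of a sine wave between two made-up limits and takes the average, which stands in for what an ideal LPF would pass. The clip levels and amplitudes are illustrative assumptions, not values from any real part.

```python
import math

def clipped_mean(amplitude, clip_low=-1.0, clip_high=1.5, samples=10_000):
    """Average of one full sine cycle after asymmetric clipping.

    clip_low and clip_high are hypothetical amplifier limits; the average
    stands in for the DC level an ideal low-pass filter would let through.
    """
    total = 0.0
    for i in range(samples):
        x = amplitude * math.sin(2 * math.pi * i / samples)
        total += min(max(x, clip_low), clip_high)  # clip to the amp's range
    return total / samples

# Below the clip limits the average stays at zero; once the signal clips,
# the offset appears and grows with the input amplitude.
for amp in (0.5, 1.0, 2.0, 4.0):
    print(f"amplitude {amp:>3}: DC offset ~ {clipped_mean(amp):+.3f}")
```

The point of the sketch is the last comment: because the offset tracks the input amplitude, varying the loudness of the tone varies the offset, which is what makes control (rather than mere jamming) plausible.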

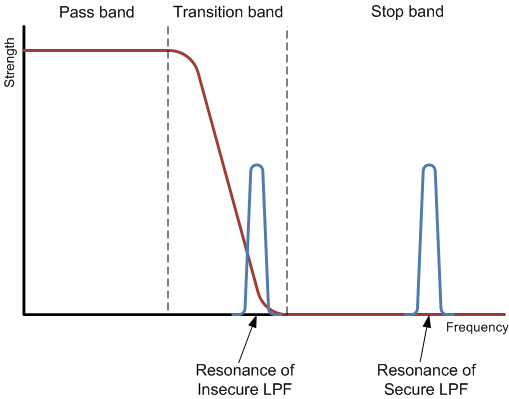

Low-pass filter: The second problem stems from the LPF cutoff frequency – that is, the frequency above which it filters out the signal. Of course, that cutoff isn’t perfectly abrupt; there’s a transition band as you go from the pass band (low frequencies) to the stop band, where no signal gets through.

And the problem plays out when this cutoff frequency (or the transition band) is near the resonant frequency of the accelerometer. That resonance is often not particularly sharp, so there’s a narrow band of frequencies that can cause some level of resonance. If that resonance band overlaps the LPF transition band, then the LPF isn’t going to completely filter that signal, leaving an oscillating false LPF output.

So a “secure” LPF will have a cutoff frequency far below resonance. One without that characteristic is an “insecure” LPF.
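As a rough feel for how much the cutoff placement matters, the following Python sketch computes how much of a resonant tone survives a filter at a few cutoff settings. It assumes an ideal first-order low-pass response and a made-up 5 kHz resonant frequency; real sensors may use different filter orders and resonances, so treat the numbers as illustrative only.

```python
import math

def lpf_gain(freq_hz, cutoff_hz):
    """Magnitude response of an ideal first-order low-pass filter (assumed model)."""
    return 1.0 / math.sqrt(1.0 + (freq_hz / cutoff_hz) ** 2)

F_RES = 5_000.0  # hypothetical resonant frequency in Hz

# The closer the cutoff sits to resonance, the more of the tone leaks through.
for cutoff in (4_000.0, 2_500.0, 500.0, 50.0):
    g = lpf_gain(F_RES, cutoff)
    print(f"cutoff {cutoff:>6.0f} Hz: {g:.3f} of the resonant tone passes "
          f"({20 * math.log10(g):.1f} dB)")
```

With a gentle first-order roll-off like this one, even a cutoff at half the resonant frequency still passes a sizable fraction of the tone, which is why the steepness of the transition band matters as much as where the cutoff sits.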

This is Nuts!

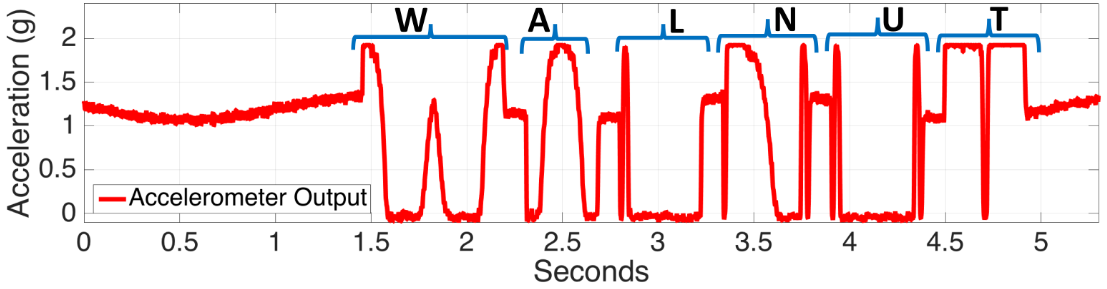

In their most dramatic demonstration, they leveraged these weaknesses to spell the word, “walnut.” I have to admit to initially being unsure of what that meant: did they create a signal that was the ASCII version of “walnut”? No. It all became clear when I looked at the waveform at the output of the accelerometer. Back up and squint your eyes if that helps: it traces out “WALNUT.” This was done using the clipping effect (which they refer to as an “output bias attack”).

Image courtesy the research team (full citation at bottom)

Ironically, sensors with a less accurate ADC didn’t give such a clean signal; the one shown above is from a device with a more accurate ADC.

To be clear, it doesn’t appear that a random hacker could pick up any old phone and immediately start an attack like this. It takes a fair bit of work to figure out, for a given brand of system and accelerometer, what the clipping response is and what the sampling and resonant frequencies are. Only with that knowledge can one start to figure out how to do more than just jamming – to exercise actual control. And then there’s figuring out how to embed sounds into music and then get that onto a target system and then get someone to play it. It’s a lot of effort. But then again, so is a side-channel attack for figuring out security keys – and that’s been done.

Solutions?

Is this unavoidable? Or are there defenses that can be built to block these attacks? The team puts forth some hardware and software solutions, keeping in mind, however, that only the software ones can be applied retroactively to an already-deployed system (assuming it can be updated).

Hardware: Depending on the vulnerability of a particular device, you may need to address the amplifier, the LPF, or the package (or combinations).

- If clipping occurs at the amp:

    - Design an amp that can handle a stronger input. (Easy, right?)

    - Alternatively, you can add an LPF before the amp to filter out the high-frequency components, assuming that both LPFs have cutoffs well below (ideally, less than half of) the resonant frequency.

- If resonance overlaps the LPF transition band, then you have a few choices:

    - Lower the LPF’s cutoff frequency to make it farther from resonance. In general, the cutoff should be less than half the (lowest) resonant frequency. This will lower the bandwidth of the sensor, however.

    - Make the transition band narrower – which they say means more components for no benefit other than protecting against this attack.

    - Raise the resonant frequency: this means changing the proof-mass/spring assembly, which may change the overall response of the accelerometer, making it less sensitive.

- You can add damping material to the package. But this tends to make the package bigger. And, it occurs to me that, even if there is damping between the outside of the package and the MEMS elements, the package is still soldered down to the board. If the board vibrates with the sound, that can travel in via the leads, bypassing the insulation.

Software: They came up with a couple of clever ways to change the sampling so that resonance won’t be a problem.

- One way is to randomize the sampling. It’s unlikely that the sampling frequency is going to correlate with the resonant frequency, but because the resonance band is slightly wide, an attacker could find a multiple of the sampling frequency within that range and use that frequency in the attack.

By randomizing the sampling period to be different for each measurement – somewhere between 0 and 1/f_res, where f_res is the resonant frequency – you’re no longer consistently sampling the resonance at the same point in its cycle. If there are multiple problem resonant frequencies, then you find their lowest common multiple, f_lcm, and place the samples in the range from 0 to 1/f_lcm.

Of course, this mucks with the results you get from sampling, since you’re not always sampling at the same rate, so you need to take multiple readings (each with a different sample period) and then average the results.

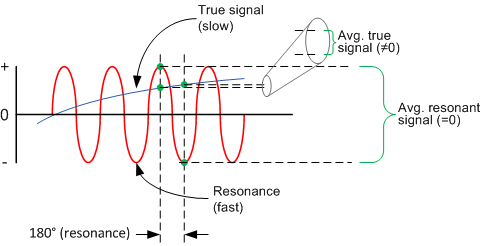

- 180° out-of-phase sampling is another approach. The idea is that you sample at a given time and then again 1/(2·f_res) later (that is, half a resonant period later) – and then average the results. Any resonant component is going to be cancelled because you’re averaging samples from opposite “sides” of its waveform.

If the true signal you’re trying to measure has a frequency far below the resonance (which is desirable), then these samples will appear at high frequency on a relatively slow-moving signal (as compared to resonance). By averaging the two measurements, you’ll get something very close to the true sample of the desired signal while the resonant components will cancel. You end up with an effect akin to a notch filter around the resonant frequency.
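Both sampling tricks are easy to try out numerically. The Python sketch below is my own illustration, not the team’s code: it models a slow “true” motion with a full-strength tone at resonance added on top, then compares a single sample against the out-of-phase pair and the randomized average. The frequencies, amplitudes, and number of readings are all hypothetical values chosen for the demo.

```python
import math
import random

F_RES = 5_000.0   # hypothetical resonant frequency (Hz), chosen for illustration
F_TRUE = 5.0      # hypothetical slow "real" motion (Hz), far below resonance

def true_signal(t):
    """The motion we actually want to measure."""
    return 0.2 * math.sin(2 * math.pi * F_TRUE * t)

def sensor(t):
    """What the sensor reports: true motion plus an injected resonant tone."""
    return true_signal(t) + math.sin(2 * math.pi * F_RES * t)

def out_of_phase_sample(t):
    """Average two readings taken half a resonant period, 1/(2*F_RES), apart."""
    return 0.5 * (sensor(t) + sensor(t + 1.0 / (2.0 * F_RES)))

def randomized_sample(t, readings=200, rng=random):
    """Average readings taken at random delays between 0 and 1/F_RES."""
    period = 1.0 / F_RES
    total = sum(sensor(t + rng.uniform(0.0, period)) for _ in range(readings))
    return total / readings

# Pick an instant where the injected tone is at its peak (worst case).
t = 60.25 / F_RES
rng = random.Random(0)  # seeded only so the demo is repeatable
for name, value in (
    ("single sample", sensor(t)),
    ("out-of-phase pair", out_of_phase_sample(t)),
    ("randomized average", randomized_sample(t, rng=rng)),
):
    print(f"{name:>18}: error {value - true_signal(t):+.4f}")
```

In this idealized model the out-of-phase pair cancels the tone almost exactly, because the two samples land on opposite sides of the waveform, while the randomized average only shrinks it, with the residue falling as more readings are averaged. The exact cancellation depends on knowing the resonant frequency precisely, which a real part won’t oblige you with.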

The accelerometers tested in this study came from (in alphabetical order) Analog Devices, Bosch, InvenSense, Murata, and STMicroelectronics. I contacted them all to see if they had a response or any statement to make. I also contacted Kionix – not on the list. The responses were:

- Bosch, STMicroelectronics: they’re aware of the situation and are working on a solution – which they declined to disclose.

- ADI: Analog Devices provided a specific statement: “Analog Devices is aware of the recent research findings published by the University of Michigan and University of South Carolina. While the findings are based on a theoretical vulnerability that would be extremely difficult to exploit, cybersecurity is an important issue for the entire electronics industry and one we take extremely seriously. Analog Devices recently published a detailed advisory that explains to our customers how they can mitigate unwanted vibrations in MEMS accelerometers and avoid the security risks described in the study. The technical recommendations are derived from over two decades of working with customers deploying MEMS sensors in mission-critical applications such as automotive safety and healthcare applications. The advisory can be found at: http://www.analog.com/media/en/Other/Support/product-security-response/ADI_Response-ICS_Alert-17-073-01.pdf”

I reviewed the technical response, and, interestingly, they say that the primary conduit for the acoustic energy comes not from direct impingement of the sound on the chip, but from the board itself. The mitigations focus on ways to reduce the transmission of the sound from the board to the accelerometer. (This late news validates my suspicion (above) that package damping might not be the whole story…)

- Kionix: they’re relieved not to be on the list, but they’re checking things out anyway. Not being on the list doesn’t mean you’re scot-free; it simply means your parts weren’t tested.

- InvenSense, Murata: no response by publication time.

You can well imagine that this unusual – and unexpected – attack vector has a lot of people with their heads down to make sure their accelerometers don’t show up on the next list.

Select images courtesy of the authors as noted above. Full citation: T. Trippel et al., “WALNUT: Waging Doubt on the Integrity of MEMS Accelerometers with Acoustic Injection Attacks,” IEEE European Symposium on Security & Privacy, Paris, France, April 2017.

More info:

WALNUT: Waging Doubt on the Integrity of MEMS Accelerometers with Acoustic Injection Attacks (pdf)

Do you see the Walnut attack as a threat or a curiosity?