“It’s a long way to the top if you wanna rock ‘n’ roll.” – AC/DC

You’ll never lose your job for buying IBM. That was the conventional wisdom in the days of large mainframe computers, the heavy metal that ran your uncle’s downtown business. IBM was considered the safe choice, the blue-chip, reliable, businesslike (“It’s in their name!”) supplier of computers, typewriters, and assorted office technology. Big Blue.

Now we have Big Weird.

But weird is good. More to the point, weird is where we’re heading, ready or not. Someday our great-grandchildren will look back on today’s digital computers as quaint curiosities, right after they post Snapchat updates on their quantum cellphones.

IBM put a proverbial stake in the ground this week, announcing that it was officially entering the race to supply “the first universal quantum computer for business and science.” After commercializing mainframe computers in the 1960s and revolutionizing personal computers in the 1980s, IBM is now setting its sights on the next big thing. And it’s plenty weird.

If you’ve never used, seen, or heard of a quantum computer, you’re not alone. I imagine most folks back in, say, 1954 would’ve said the same thing about computers of any sort (apart from the human “computers,” often women, who tallied sums, of course). Quantum computers are like today’s normal digital computers, inasmuch as they’re boxes with blinking lights that calculate difficult math problems… but that’s about where the similarities end.

Quantum computers are deeply strange because quantum physics is strange. They rely on the profoundly counterintuitive properties of certain superconducting materials operating at extraordinarily low temperatures. In that realm, where Schrödinger’s cat, Heisenberg’s Uncertainty Principle, and Pauli operators rule, data bits are not what they seem.

A quantum bit is called a qubit, and it can retain one of two states, which we arbitrarily call 1 or 0. No problem, right? Except that qubits can be both a 1 and a 0 simultaneously, a condition known as superposition. That sounds like a contradiction, but it isn’t; that’s just how quantum physics works. Moreover, qubit states are more probabilistic than actual (the Born Rule), so you can never really know what your qubit is doing until you measure it – which would seem to make it completely pointless as a data-storage element.
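For the curious, the Born Rule can be sketched in a few lines of ordinary Python. This is a toy model, not IBM's software: the function names are made up for illustration, and it assumes a single qubit described by two amplitudes, `alpha` for 0 and `beta` for 1. The chance of reading each value is the squared magnitude of its amplitude.

```python
import math
import random


def measurement_probabilities(alpha, beta):
    """Born rule: each outcome's probability is its amplitude's squared magnitude."""
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    if not math.isclose(p0 + p1, 1.0, rel_tol=1e-9):
        raise ValueError("amplitudes must be normalized so probabilities sum to 1")
    return p0, p1


def measure(alpha, beta, rng):
    """Simulate one measurement; returns 0 or 1."""
    p0, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1


# An equal superposition: "both 1 and 0" until somebody looks.
alpha = beta = 1 / math.sqrt(2)
p0, p1 = measurement_probabilities(alpha, beta)

rng = random.Random(2017)  # fixed seed so the run is repeatable
counts = [0, 0]
for _ in range(10_000):
    counts[measure(alpha, beta, rng)] += 1

print(f"P(0)={p0:.2f}  P(1)={p1:.2f}  observed counts={counts}")
```

Any single measurement gives a plain 0 or 1; the superposition only shows up in the statistics over many runs.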

Except that an eerie characteristic known as quantum entanglement means that the state of one qubit is (somehow) related to the state of another, across any distance and regardless of time (we think). So you can monitor the state of one qubit by observing a different qubit.
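A rough way to picture that correlation is to sample measurement outcomes from a two-qubit "Bell state," in which only the outcomes 00 and 11 have nonzero amplitude. The sketch below is a classical toy with hypothetical names (`BELL`, `sample_pair`); it reproduces the measurement statistics in one basis only and says nothing about how real hardware prepares or reads such a state.

```python
import random

# Amplitudes for the four possible two-qubit readouts.
# Assumed state: (|00> + |11>) / sqrt(2), a standard entangled pair.
BELL = {"00": 2 ** -0.5, "01": 0.0, "10": 0.0, "11": 2 ** -0.5}


def sample_pair(state, rng):
    """Pick one joint readout, weighted by squared amplitude (Born rule)."""
    outcomes = list(state)
    weights = [abs(state[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


rng = random.Random(42)  # fixed seed for repeatability
samples = [sample_pair(BELL, rng) for _ in range(1000)]

# Count how often the two qubits agree with each other.
agree = sum(1 for s in samples if s[0] == s[1])
print(f"qubits agreed in {agree} of {len(samples)} measurements")
```

Each qubit on its own looks like a coin flip, yet reading one tells you what the other will show.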

It goes farther down the rabbit hole from there, but the point is, making real working quantum computers is hard. So far, IBM has a 5-qubit machine working reliably, and the company has produced systems with 15 or more qubits that it rents out to researchers. The goal is to have a 50-qubit machine, at which point the computers probably won’t need us anymore.

Paradoxically, quantum computers are huge machines. IBM’s current models are vast, steampunk-esque calliopes of brass tubes and mechanical connectors. You almost expect to find steam hissing from fittings among the clanking gears. Presumably, the IBM inventors with top hats and goggles are pulling on huge levers just out of the picture.

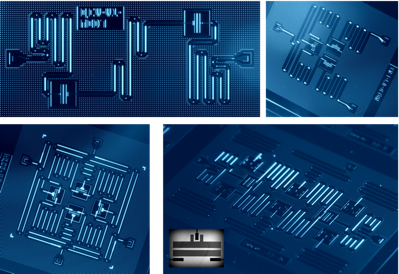

The Victorian-era plumbing is there to keep the little qubits cool. IBM’s qubits are fabricated in silicon, just like “normal” computer hardware, but with lashings of niobium, aluminum, and other superconducting metals. These are all cooled (“frozen” would be more accurate) to just 15 millikelvins, or 0.015 kelvin above absolute zero, the temperature at which thermal motion bottoms out. That’s more than 100 times colder than outer space, which sits at roughly 2.7 kelvin.

But it’s only at these unbelievably low temperatures that quantum materials perform their party tricks. Quantum machines aren’t just remarkably fast, they’re fundamentally different. A few companies have built conventional computers using quantum elements, but IBM dismisses these as half-hearted “science fair experiments.” To truly reap the benefits of quantum computing, you need to embrace the weird.

Quantum machines don’t run serially, like conventional computers do. Complex problems like factoring integers don’t become exponentially harder for a quantum computer, as they do for today’s digital machines. Instead, quantum computers can automatically, almost naturally, parallelize complex problems that would be unfeasible with a conventional machine.

As an example, IBM points to Shor’s Algorithm, the current state of the art in finding the prime factors of large integers. For large factors, such as those commonly used in cryptography, a conventional computer would take an unreasonably long time, which is the whole basis of the crypto algorithm’s security. It’s not that the numbers can’t be factored; it would just take hundreds of years using current technology. A quantum machine, however, could crack the code in a matter of seconds. Big difference.
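To see where the quantum part fits, here is a minimal classical sketch of the number theory behind Shor's Algorithm. The function names are illustrative, and `find_order` is deliberately brute force: finding that "order" is the step a quantum computer is expected to do quickly, while everything else is ordinary arithmetic. It only works here because the numbers are tiny.

```python
from math import gcd


def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1 (brute force; the quantum step replaces this)."""
    if gcd(a, n) != 1:
        raise ValueError("a must share no factors with n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a, n):
    """Try to split n using the order of a; returns a factor pair or None."""
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: this choice of a is no help, pick another
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # a**(r/2) is -1 mod n: also no help
    p = gcd(half - 1, n)  # guaranteed to be a nontrivial factor at this point
    return tuple(sorted((p, n // p)))


print(factor_from_order(7, 15))   # order of 7 mod 15 is 4
print(factor_from_order(14, 15))  # an unlucky choice of a
```

With `a = 7` and `n = 15`, the order is 4, so `half` is 49 mod 15 = 4, and `gcd(3, 15)` yields the factor 3. For cryptographic-size numbers, the loop in `find_order` is what would take a conventional machine practically forever.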

Quantum machines also lend themselves to real-world problems in weather prediction, pharmaceutical research, and physics experiments – all areas where simulating multiple simultaneous agents is paramount. These are all variations of the “knapsack problem,” and are essentially unsolvable using current methods.

IBM believes that the proverbial knee on the curve comes at around 50 qubits. Once you have a 50-qubit quantum computer running reliably, you’ve passed the point of no return. At that level of performance, you can no longer simulate or duplicate the results of a quantum machine using a conventional machine. That means, for instance, that you can’t design a 50-qubit computer using conventional EDA tools – it simply can’t be simulated in any reasonable amount of time. Nor can you verify quantum results with a digital machine. You’ve passed beyond the capabilities of today’s architectures and you’re in uncharted territory.
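Some back-of-the-envelope arithmetic suggests why 50 is a plausible tipping point. A brute-force simulation has to track one complex amplitude for every possible combination of qubit values, which is 2^n of them for n qubits. The sketch below assumes 16 bytes per amplitude (two 64-bit floats) and uses made-up helper names; cleverer simulation techniques exist, so treat this as an illustration of the scaling rather than a hard limit.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: one complex number stored as two 64-bit floats


def statevector_bytes(n_qubits):
    """Memory needed to hold every amplitude of an n-qubit state."""
    return (2 ** n_qubits) * BYTES_PER_AMPLITUDE


def human_readable(n_bytes):
    """Format a byte count using binary units."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(n_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024


for n in (5, 15, 30, 50):
    print(f"{n:2d} qubits -> {human_readable(statevector_bytes(n))}")
```

Five qubits fit in 512 bytes and 30 qubits need 16 GiB, about what a well-equipped workstation carries. Fifty qubits need 16 PiB, and every additional qubit doubles the bill.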

Want a taste of quantum computing for yourself? IBM will let you play with one of their machines, for free. The company launched its Quantum Experience website last year as a browser-based portal into a real 5-qubit quantum computer. Like any microcontroller company, IBM allows users to upload programs and watch the results, a kind of “try before you buy” sampler of quantum computing.

Given that IBM is out in left field, are they alone in left field? Is this the only company building quantum computers? In a word, no. There are others, most notably D-Wave, which is arguably out in front of IBM in terms of commercialization. The D-Wave 2000Q machine is a real, working quantum computer, and the company has paying customers, mostly in government and aerospace, as you might expect.

For its part, IBM feels that its approach will be more general-purpose, more mainstream (if that word can be applied to quantum computing). The company acknowledges D-Wave’s lead in commercialization but also dismisses that company’s quantum architecture as simply a form of “annealing” that’s not appropriate for “business and scientific” applications like IBM’s.

With multiple companies spending lavish amounts to develop quantum computing, it’s fair to ask, why do it at all? Sure, it’s cool and high-tech, but will it ever become… normal? Can it be commercially successful, or will quantum computing always be a specialized niche, like carbon-fiber bicycles or molecular gastronomy?

At least publicly, IBM believes that conventional computing and Moore’s Law scaling are both nearing the end of the road. “There’s no such thing as a zero-nanometer transistor,” says Dave Turek, IBM’s VP of High-Performance Computing. Zeno’s Paradox notwithstanding, we have to stop shrinking transistors eventually. It’s time for a complete rethink of how we design computers.

Personally, I’m always skeptical of claims that the sky is falling and that Technology X is moribund. “Moore’s Law is dead; email is dead; retail is dead; x86 is dead; etc.” We show a remarkable tendency to cling to our old technologies (QWERTY keyboard or ASCII encoding, anyone?) in spite of all the “better” alternatives. I don’t see digital von Neumann architectures disappearing anytime soon. We’ll all have jetpacks and flying cars before that happens.

Still… I can’t help thinking that IBM is onto something. There are some truly intractable problems that conventional computer architectures can’t solve. Crypto technology depends on those problems being unsolvable. Others, we don’t even attempt to model, like complex molecular interactions in physics or medicine. Quantum technology appears to be ideally suited to this class of problems. Conveniently, quantum mechanics is very good at modeling the effects of quantum mechanics.

IBM changed our idea of personal computers in 1981 with the Model 5150. Maybe the company can do it again in a few decades. I wonder if it will still run Windows.
