Economics is never far from a semiconductor discussion. Even Moore’s Law, often articulated in physical terms (die size, feature size, etc.), is really an economic law that deals with cost. Yeah, it’s a bit more drab than bits and bytes, but, heck, it pays a lot of bit-and-byte salaries (at least for now, until our AI overlords take over that industry), so it’s not to be given short shrift.

And yet, as much as we think we’re turning out amazing stuff at lower-than-ever cost (probably true), the IoT industry still pushes back on anything perceived as adding to cost. It’s one of the reasons that security is such an issue: the seemingly small additional cost of adding extreme vetting of devices that attempt to attach to the network is more than many manufacturers want to bear.

Which means that any IoT technologies targeting, in particular, the home market (where consumers complain about high device prices and are still not quite sure what’s in it for them) have to prioritize cost at all costs. Fortunately, thanks to Moore’s Law (or what’s left of it), the electronics on chips have a pathway to getting cheaper and cheaper, assuming that volumes get to the level where aggressive nodes can be leveraged to give lower cost. (And that’s not a given, once you cross the FinFET barrier…)

There’s one part of the circuit, however, that this scaling doesn’t necessarily apply to: the radio. Yeah, that’s an analog thing. And, while analog can, in theory, follow Moore into the sub-10-nm realm, there’s not so much of that going on way down there. There seems to be a fair bit of, “If it ain’t broke, don’t fix it” with analog, which means that a transmitter or receiver built on a modest node will tend to stay there for as long as it does the job.

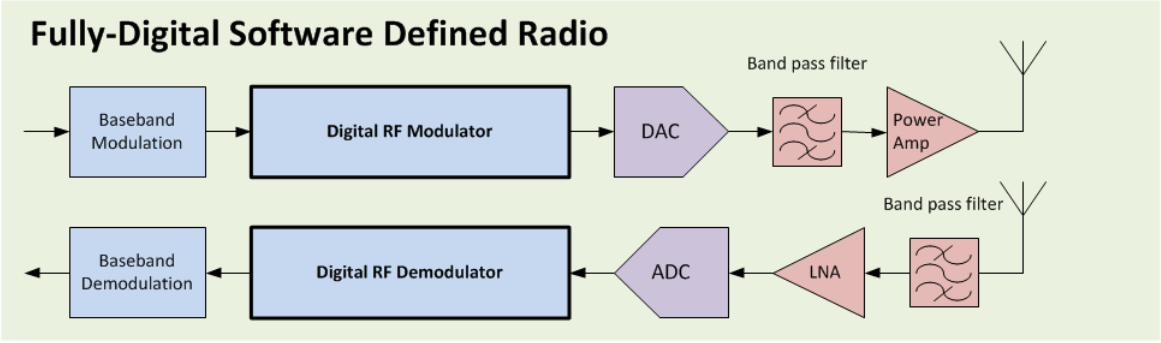

Most of a radio these days is digital; the question really is, at what point in the system do you have to abandon the digital domain and convert to analog? Cambridge Consultants explain that, for the most part, the baseband signal gets processed digitally and is then converted to analog for boosting into the stratospheric frequencies that will launch themselves into the air.

(Image courtesy Cambridge Consultants)

The way they describe it, high-frequency DACs and ADCs are extremely expensive, so simply trying to digitize sooner by moving the DAC/ADC boundaries doesn’t make cost sense. Delta-sigma (ΔΣ) modulators, which represent a signal as a very-high-rate stream of just a few bits (often one), could step in, but, they say, the onerous calculations involved in messing with the resulting digital stream have made this impractical.
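For anyone who hasn’t played with one, here’s a minimal sketch of the simplest (first-order) ΔΣ modulator, in Python. It’s illustrative only and is not Cambridge Consultants’ design: the function name and the ±1 output convention are my own assumptions, and real RF modulators use higher-order loops at vastly higher rates. The point is that a multi-bit input becomes a 1-bit stream whose running average tracks the input. Note also that each output depends on the previous one through the feedback loop, which is a big part of why these computations don’t parallelize trivially.

```python
def delta_sigma_1st_order(samples):
    """Hypothetical first-order delta-sigma modulator (illustration only).

    Takes input samples in [-1, 1] and returns a stream of +1.0/-1.0
    values whose local average follows the input.
    """
    integrator = 0.0
    feedback = 0.0  # previous 1-bit output, fed back to the input
    out = []
    for x in samples:
        # Accumulate the error between the input and the last output.
        integrator += x - feedback
        # 1-bit quantizer: just the sign of the integrator.
        y = 1.0 if integrator >= 0.0 else -1.0
        out.append(y)
        # Serial dependency: the next step needs this output.
        feedback = y
    return out


bits = delta_sigma_1st_order([0.5] * 1000)
print(sum(bits) / len(bits))  # approximately 0.5
```

Because the integrator stays bounded, the average of the bit stream converges on the average of the input; the price is that the bit rate has to be much higher than the signal frequency.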

And so we keep doing things the way we’ve been doing them. Unless… you could find a way to handle those high-speed ΔΣ computations better.

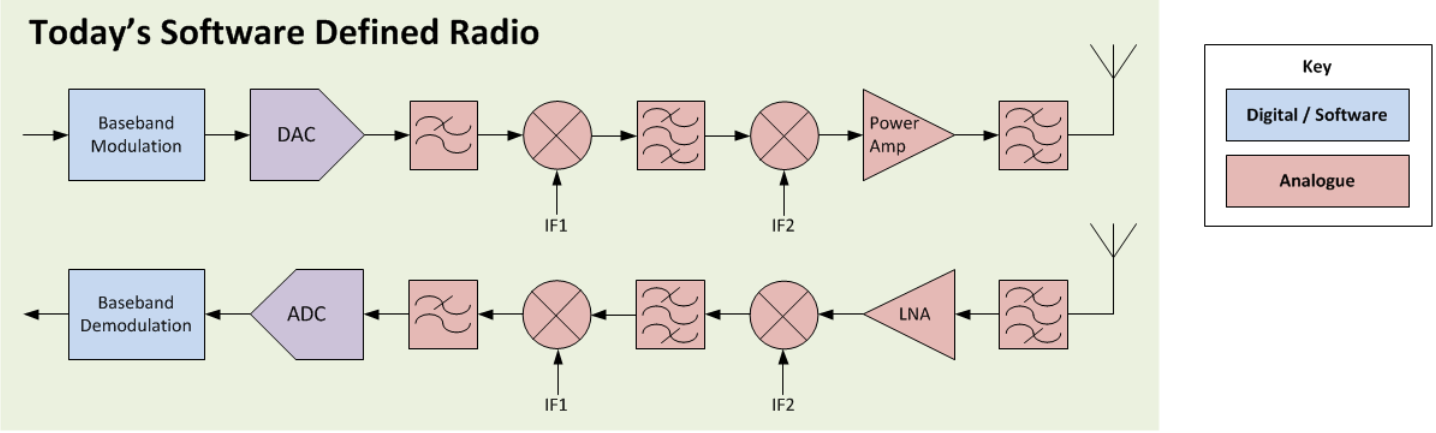

And, in fact, that’s exactly what Cambridge Consultants say they’ve done with the Pizzicato RF circuit. They first worked out the transmitter side of things in 2015 and then followed up with the receiver in 2016.

What they’ve done is to parallelize the ΔΣ computations across 64 concurrent channels, each running at hundreds of MHz. They then combine the results into an outgoing 28-GHz SERDES stream. That runs directly into a passive filter and thence to the antenna as a 14-GHz signal. A power amp can still help if you need to shout more loudly.
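The numbers hang together if you do the division. Here’s a back-of-the-envelope sketch, assuming the 28-GHz figure is a serial bit rate (28 Gb/s) and that the 64 lanes are simply interleaved, one bit each per parallel clock tick. The article doesn’t say how Pizzicato actually assigns bits to lanes, so the `serialize` helper below is hypothetical.

```python
LANES = 64               # concurrent ΔΣ computations, per the article
SERIAL_RATE_GBPS = 28.0  # outgoing SERDES stream, per the article

# Each lane only has to produce 1/64th of the serial bits.
per_lane_mbps = SERIAL_RATE_GBPS * 1000 / LANES
# A serial stream can represent frequencies up to half its rate (Nyquist).
max_signal_ghz = SERIAL_RATE_GBPS / 2


def serialize(lanes):
    """Hypothetical round-robin serializer: at each parallel clock tick,
    emit one bit from lane 0, then lane 1, ..., then lane N-1."""
    ticks = len(lanes[0])
    if any(len(lane) != ticks for lane in lanes):
        raise ValueError("all lanes must be the same length")
    return [lane[t] for t in range(ticks) for lane in lanes]


print(per_lane_mbps)   # 437.5
print(max_signal_ghz)  # 14.0
```

At 437.5 Mb/s per lane, each computation sits squarely in “hundreds of MHz” territory, and the 14-GHz output is exactly half the serial rate.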

At the receiver end of things, they passively filter out extraneous bands and then run the signal through a low-noise amp before digitizing it. They then split the resulting incoming SERDES stream into parallel, slower streams that can be processed at reasonable rates.
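The receive-side split is essentially the mirror image of the transmit side. Here’s a sketch, again assuming simple round-robin interleaving (the name `deserialize` and its default of 64 lanes are my own choices, not a documented Pizzicato interface): every 64th bit goes to the same lane, so each lane runs at 1/64th of the incoming rate.

```python
def deserialize(stream, lanes=64):
    """Hypothetical round-robin deserializer: bit k of the serial stream
    goes to lane k % lanes, so each lane sees 1/lanes of the bit rate."""
    if len(stream) % lanes != 0:
        raise ValueError("stream length must be a multiple of the lane count")
    # stream[k::lanes] picks bits k, k + lanes, k + 2*lanes, ...
    return [list(stream[k::lanes]) for k in range(lanes)]


print(deserialize([0, 1, 2, 3, 4, 5, 6, 7], lanes=4))
# [[0, 4], [1, 5], [2, 6], [3, 7]]
```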

(Image courtesy Cambridge Consultants)

As to the power amp on the transmitter, they’d also like to move that to the digital side, but for that, they need to come up with a high-efficiency digital amplifier. I didn’t detect any concern as to whether this could be done; it’s a matter of getting it done.

This is more than just an idea; they’ve done it successfully using FPGAs, leveraging their built-in SERDES blocks. They haven’t done a dedicated chip yet, which could come with some help from co-development and/or licensing partners.

So the big move here is that the frequency boost is now done on the digital side of things, leaving only passive filters and (maybe) amps as analog components. Any level of frequency boost can be managed, making this yet another parameter that’s easily controlled by some digital signal (“easily” being a relative concept).
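To make “controlled by some digital signal” a bit more concrete, here’s a generic digital upconversion sketch: baseband I/Q samples get multiplied by a numerically generated carrier, so the output frequency is just a number passed to a function. This is the textbook technique, not necessarily what Pizzicato does; the function name and parameters are my own, and in a design like this one the result would presumably feed the ΔΣ/SERDES stage rather than a conventional DAC.

```python
import math


def upconvert(i_samples, q_samples, carrier_hz, sample_rate_hz):
    """Hypothetical digital upconverter (illustration only).

    Produces the real passband signal I*cos(2*pi*f*t) - Q*sin(2*pi*f*t).
    The carrier frequency is simply a parameter.
    """
    if len(i_samples) != len(q_samples):
        raise ValueError("I and Q must have the same length")
    if carrier_hz >= sample_rate_hz / 2:
        raise ValueError("carrier must be below Nyquist (sample_rate / 2)")
    out = []
    for n, (i, q) in enumerate(zip(i_samples, q_samples)):
        phase = 2 * math.pi * carrier_hz * n / sample_rate_hz
        out.append(i * math.cos(phase) - q * math.sin(phase))
    return out


# A 7-GHz carrier at a 28-GS/s rate is a quarter of the sample rate, so a
# constant I input traces out roughly [1, 0, -1, 0] (plus float residue).
print(upconvert([1.0] * 4, [0.0] * 4, carrier_hz=7e9, sample_rate_hz=28e9))
```

Retuning is just a matter of passing a different `carrier_hz`, which is the kind of flexibility you don’t get from a fixed analog mixer and oscillator.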

Assuming that passive analog circuits are a lot easier to manage than active ones, a circuit like this can now follow the process-node roadmap downward if advances in process make cost savings available. Of course, this also assumes that doing the processing with 64 digital channels on an aggressive node is still cheaper than doing it in analog on an older node. An FPGA implementation isn’t necessarily going to prove out that part of the equation.

Part of me thinks this looks pretty interesting. But I also haven’t seen the world jumping up and down, trying to break the doors down for access to this solution. The transmitter, at the very least, has been out there for a couple of years. So it’s hard to tell whether this is going to have an impact on how things are done.

I checked in with them on this, and Bryan Donaghue, Digital Systems Group Leader at Cambridge Consultants, noted one sort of obvious thing: transmitters aren’t particularly useful by themselves (unless you happen to be implementing an early version of Sigfox). Having a receiver to accompany them makes things more attractive. And, of course, the receiver has had much less time to get traction.

So I’m not sure whether to predict that, on some future date, we’ll look at our radios and wonder why we ever messed with analog. If any of you have thoughts as to what’s great or limiting about this, please post them below.

[Edited to change a couple “MHz” references to “GHz” – pointed out by an alert reader]


What do you think about this almost-all-digital approach to radio?