Everybody’s trying to break into your… whatever it is you make. No matter what you make or how secure you try to make it, someone will still try to break it. For profit or for bragging rights.

And if the electronics governing the actual communications to and from your device – the main “channel” – are well protected, good job! But that means someone may look elsewhere for a way to break your secrets, using so-called “side-channels.” These typically consist of power or EMI analysis: patterns in both can lead to clues about keys and such, and they have been successfully employed to crack security.

The real goal, of course, is to crack the communications. But if those are encrypted, then you need to crack the key. So this sort of attack really involves two phases: extracting the key by whatever means possible and then using that extracted key to decrypt the messages. And, obviously, if you want to protect against this, you focus on making sure the key is well protected.

At the recent Linley Processor Conference, Synopsys presented a discussion of side-channel attacks and ways to thwart them, including some discussion of ARC processor techniques. One of those was “power randomization.”

The idea of power randomization is pretty straightforward: if a direct read of the power signature gives clues as to the nature of the key, then, by injecting random power variations, you can thwart this particular attack. The big question I was left with, then, is, “What exactly does ‘power randomization’ mean?”

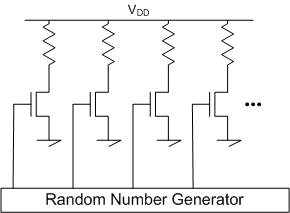

After all, I can think of a really easy way to randomize power: just have some transistors connecting power and ground (OK, if you insist and have room, you can put a resistor in there too), and randomly turn them on. This would certainly work, but in a world of batteries and finite energy, it’s probably not worth pursuing further. (To be clear, this is NOT what Synopsys was proposing…)

(Not such a good idea.)

So what other ideas might work, without browning out the local town? After the conference, I had a call with Synopsys’s Angela Raucher to dig in a layer. Part of the premise is the fact that smaller IoT devices aren’t likely to have dedicated hardware security infrastructure – which means that cryptography has to be executed in software.

What we’re talking about, then, is one of the trinity of protection modes: protecting data at rest (stored), in motion (messages), and in use (being computed or executed) – with the last being our focus. You may store the code securely, but once it’s pulled into the processor for execution, it’s now in the clear. If an attacker can manage debug access, that’s particularly worrisome, but our scenario assumes no such direct access.

Synopsys’s approach was to find where the tell-tale power variations originate, and the cryptography code itself turns out to be the source. There are a couple of ways of quelling those power signatures. One of them relates to how long various instructions take to execute; giving everything uniform timing is a start. It’s apparently not easy to do, but they did it – and it’s good, but insufficient.

So they decided to mess with the instruction stream itself – by randomly injecting instructions into the pipeline. Why does that not upset the whole algorithm? Because they’re using NOOP and branch-to-self instructions, so all it does is add some random delays.

“Whoa, whoa, whoa, did you say, ‘branch-to-self’?”

Indeed… and I asked about this. NOOP is really simple: you just have an instruction that does nothing but consume cycles. But branch-to-self? (Can I call it BTS please?) That’s exactly what its name says: you “branch,” landing right back at the same place. There’s no escape: it’s an infinite loop.

Ordinarily, what gets you out of this would be some kind of interrupt. That would be a high-cost solution here, since, once in the loop, you’d have to fake an interrupt somewhere and wait for a dummy interrupt handler to jump in and pull the processor out of its navel-gazing session. That’s a lot of overhead – especially when repeated randomly.

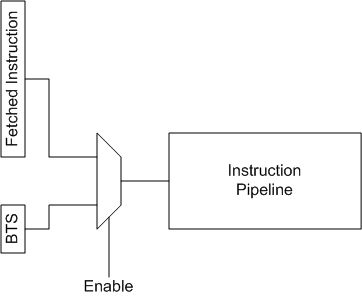

It turns out there’s a perfectly logical explanation, and it gets to how these instructions are “injected.” They have an enable signal at the start of the instruction pipeline, and, ordinarily, it’s off, with the pipeline being fed by fetched instructions. But when an injection event happens, then the pipeline instead sees the BTS. It never gets stuck because, when the enable is removed, the system goes back to the fetched-instruction stream.

This is as much as they told me, so what follows on this topic is my inference (much of which has since been confirmed by Synopsys), including the figure (OK, I didn’t have that yet when I asked for confirmation). You could imagine that the logic controlling the enable could randomly decide how long to keep the injected instruction in place. You could also imagine ways to swap BTS for NOOP, mixing it up just because, well, mixing it up is what this is all about. I can’t be sure that they do this, but it’s definitely possible.

I’ve been vague in the drawing about exactly what’s multiplexed into what, and, as shown, it’s unclear exactly how this avoids the infinite loop. A branch instruction works by changing the program counter (PC), so it would seem that this muxing thing really has to be putting addresses into the PC rather than actually muxing instructions. But then again, by definition, a BTS instruction needs to fetch no new instruction; it can simply re-use the old one. So the exact details of where this is injected are still not quite clear – we’d need a closer look at where this is in the micro-architecture. And their security processors have a purpose-built pipeline, so we can’t assume this is just an add-on. But… hopefully you get the basic point.
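To make the behavior concrete, here is a minimal Python sketch of the idea as I've described it: an enable that, while asserted, feeds filler into the pipeline instead of the fetched instruction. Everything in it is my own illustration, not Synopsys's design. The names `issue_stream`, `inject_prob`, and `max_hold` are hypothetical, the instruction mnemonics are made up, and `random.SystemRandom` (operating-system entropy) merely stands in for the hardware TRNG. It models the instruction stream only and says nothing about where the PC fits in.

```python
import random

NOOP = "NOOP"
BTS = "BTS"  # branch-to-self


def issue_stream(program, inject_prob, max_hold, rng):
    """Yield what the pipeline sees, one entry per cycle.

    program     : list of fetched instructions (made-up mnemonics)
    inject_prob : chance that an injection event precedes a fetched instruction
    max_hold    : longest run of filler per injection event
    """
    for instr in program:
        if rng.random() < inject_prob:
            hold = rng.randint(1, max_hold)      # how long the enable stays asserted
            filler = rng.choice([NOOP, BTS])     # mix it up between the two fillers
            for _ in range(hold):
                yield filler                     # enable on: mux selects the filler
        yield instr                              # enable off: fetched stream resumes


program = ["LD", "XOR", "ROT", "ADD", "ST"]
rng = random.SystemRandom()                      # stand-in for the hardware TRNG
seen = list(issue_stream(program, inject_prob=0.5, max_hold=3, rng=rng))
executed = [i for i in seen if i not in (NOOP, BTS)]

print(seen)       # fetched instructions with random filler in between
print(executed)   # the original program, in order, untouched
```

The point of the sketch is the last line: strip out the filler and the program is exactly what it was, which is why the algorithm's result isn't disturbed. Only the timing changes.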

The enabling logic incorporates a true random number generator (TRNG). If you use an easier pseudo-random number generator (PRNG), there will be a pattern to the numbers. Yeah, you’d think it would be ridiculously hard to find that pattern, but it’s doable. And, after all, the whole side-channel attack thing is ridiculously hard, so what’s yet another pattern to recognize? So if you go pseudo-random, then you’ve given your attackers one more step to execute first: decode the random pattern, then use that knowledge to filter out the randomness and get back to the true signal.
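Here's a toy illustration of that extra step, in Python. It assumes a deliberately weak generator, a 16-bit linear congruential generator whose top bit decides "inject or not" each cycle. The generator, its constants, and the names `inject_bits` and `consistent_with` are all hypothetical, chosen so that a brute-force search finishes in well under a second. A real PRNG would be far harder to invert, but the principle is the same.

```python
def inject_bits(seed, n):
    """Toy 16-bit LCG; the top bit of each state is the 'inject?' decision."""
    state = seed & 0xFFFF
    bits = []
    for _ in range(n):
        state = (state * 25173 + 13849) & 0xFFFF
        bits.append(state >> 15)
    return bits


def consistent_with(seed, observed):
    """True if this seed reproduces every observed decision (exits early on a miss)."""
    state = seed & 0xFFFF
    for bit in observed:
        state = (state * 25173 + 13849) & 0xFFFF
        if state >> 15 != bit:
            return False
    return True


SECRET_SEED = 0xBEEF                         # known only to the device
observed = inject_bits(SECRET_SEED, 64)      # what the attacker infers from 64 cycles

# Attacker: try every possible seed and keep those that match the observation.
candidates = [s for s in range(1 << 16) if consistent_with(s, observed)]

print(len(candidates), "candidate seed(s) remain")
print(SECRET_SEED in candidates)             # the real seed always survives the filter
```

Each additional observed cycle can only shrink the candidate list, never grow it. Once it's short enough, the attacker can replay the generator forward, predict which cycles are filler, and subtract them out. With a TRNG there's no seed to search for.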

A TRNG will be based on some physical variation that can’t be replicated in the future or on some other device. Some TRNGs have (or are supplemented by) means of ensuring that once a particular random number has been used, it will never be repeated – even if it happens to come up again. This is useful for key generation, ensuring that a used session key will never be reused, but it’s not necessary for our particular power randomization focus.

This means that, at any given random time during code execution, you’ll get an extra instruction. Or maybe several.

This is great for hiding the goings-on while computing something secure, but it could be a drag if you did this all the time. So Synopsys provides a couple of knobs. First, you can turn this feature on and off in real time. So, for standard harmless calculations, you don’t need to suffer that burden. Then, when you’re about to do something more private, you can turn on this jamming apparatus just for that session.

In addition, you can select how frequently these instructions are injected. The specific times are random, but you have control over the average injection rate. What rate you choose would be determined by the level of threat (risk and consequences). In addition, while this might be obvious, it’s good to reinforce that, when you enter a sleep mode, none of this is going on.
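The two knobs, and what they cost, can be sketched in Python as follows. This is a rough software model under my own assumptions: the class `InjectionControl`, its `enabled` and `rate` fields, and the one-cycle-per-instruction accounting are all hypothetical, and `random.SystemRandom` again stands in for the TRNG. Here `rate` is the probability that one filler instruction precedes any given fetched instruction.

```python
import random


class InjectionControl:
    """Hypothetical model of the two knobs: an on/off switch and an average rate."""

    def __init__(self, rate, rng):
        self.enabled = False   # knob 1: off by default
        self.rate = rate       # knob 2: average filler instructions per fetched one
        self.rng = rng

    def filler_before_next(self):
        """Return 1 if a filler instruction is injected before the next fetch, else 0."""
        if self.enabled and self.rng.random() < self.rate:
            return 1
        return 0


def run(n_instructions, ctl):
    """Total cycles spent, assuming one cycle per instruction (fetched or filler)."""
    return sum(1 + ctl.filler_before_next() for _ in range(n_instructions))


ctl = InjectionControl(rate=0.25, rng=random.SystemRandom())

normal_cycles = run(10_000, ctl)    # harmless work: feature off, no overhead

ctl.enabled = True                  # about to do something private
secure_cycles = run(10_000, ctl)    # same work, now with random filler
ctl.enabled = False                 # back to normal

print(normal_cycles)                # exactly 10000
print(secure_cycles)                # about 25% more; the exact count varies per run
```

In this model the average overhead is simply the rate, so choosing the rate is a direct trade between slowdown and how thoroughly the timing is scrambled.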

This effectively masks the computation’s actual power signature by overlaying additional power variations that have nothing to do with anything. To be sure, Synopsys is clear that this is but one tool in the chest – necessary, but far from sufficient.
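One way to see why random delays help: power attacks commonly average many traces so that noise cancels and the key-dependent blip stands out, and that only works if the blip lands at the same time in every trace. The Python sketch below is a toy model under loud assumptions: traces are noiseless, the "leak" is a single spike of height 1.0, and the names `capture` and `peak_of_average` are mine. It isn't a model of any real attack or of Synopsys's hardware.

```python
import random

TRACE_LEN = 40
LEAK_AT = 10        # cycle where the key-dependent operation draws extra power
N_TRACES = 1000


def capture(max_delay, rng):
    """One toy power trace: flat at 0.0 except for a 1.0 spike at the leak."""
    delay = rng.randint(0, max_delay)    # filler cycles injected before the leak
    trace = [0.0] * TRACE_LEN
    trace[LEAK_AT + delay] = 1.0
    return trace


def peak_of_average(max_delay, rng):
    """Average many traces cycle by cycle and return the tallest point."""
    traces = [capture(max_delay, rng) for _ in range(N_TRACES)]
    mean = [sum(column) / N_TRACES for column in zip(*traces)]
    return max(mean)


rng = random.SystemRandom()                  # stand-in for the hardware TRNG
peak_plain = peak_of_average(0, rng)         # no injection: every spike lines up
peak_jittered = peak_of_average(9, rng)      # spike lands in one of 10 cycles

print(peak_plain)       # 1.0
print(peak_jittered)    # far smaller; the spike is smeared across ten cycles
```

With no delay the averaged spike keeps its full height. With up to nine cycles of random delay it's spread over ten positions, so the averaged peak shrinks to roughly a tenth. A determined attacker can try to realign the traces, which is part of why this is one tool among several.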

More info:

Synopsys ARC Security Processors

What do you think about Synopsys’s approach to randomizing power?

“The reason the American Army does so well in wartime, is that war is chaos, and the American Army practices it on a daily basis.” — German Tactician, WWII

Glad they avoided the obvious flaw of using a PRNG.

I am reminded of the quote by John von Neumann:

“Anyone who considers arithmetical methods of producing random digits is, of course, in a state of sin.”

[Of course, if executing a NOP op-code consumes a different amount of power than the other op-codes, then this scheme is trivial to break, as you can then filter them all out of the sequence]