Let me first make one thing perfectly clear: I’m proud to be a software engineer. For more than twenty years after I graduated from engineering school, I worked as a software engineer and managed teams of software engineers. We did good work, and I took pride in our accomplishments. I worked with some of the brightest, most innovative, hardest-working engineers I have ever met, and we built some amazing technology.

During the time I was in software, the art of software engineering evolved and matured dramatically. The languages, methods, paradigms and best practices underwent spectacular change. Since then, software engineering has evolved even more. This change is a good thing, because, as an engineering discipline, software engineering – what’s the technical term? Oh, yeah. “Sucks.”

Why?

Because software engineering is the newest and least mature of the established engineering disciplines. Sure, some of the best minds in the world have been working diligently on the problem of software engineering for several decades now, and considerable progress has been made. But software is the most complex thing ever created by humans. And the amount and complexity of software the world needs far outstrips the output of all of the world’s software engineers combined. We have an enormous gap between the quantity and quality of software that is possible and useful, and the quantity and quality of software that software engineering can deliver. And the problem is only going to get worse.

Check any team you can find developing systems that include both hardware and software. In just about every case, the software engineers outnumber the hardware engineers by a large factor. In just about every case, it will end up being the development of the software that drives the schedule and the eventual release of the product. And, in just about every case, a difficult judgment call will have to be made about whether the software is yet “good enough” to ship.

One of the biggest forces driving this gap is Moore’s Law, which has produced a steady, five-decade, exponential increase in computing power. On top of that, the explosion of the IoT has created an even bigger vacuum in the software space. We now have a global hardware infrastructure with the computing, sensing, actuating, and storage capacity to transform life on Earth in wonderful and terrifying ways, and we barely have any idea how to program it correctly.

At the beginning of my career, I worked at a company that developed and sold place-and-route software for ASIC design. We had a goal – to be able to successfully place-and-route gate arrays with 10,000 gates (which was enormous at the time). In order to do that, we had written something like (if memory serves) one million lines of FORTRAN. We had what we considered to be a rigorous software development process – particularly for a company with 50-60 engineers and no computer.

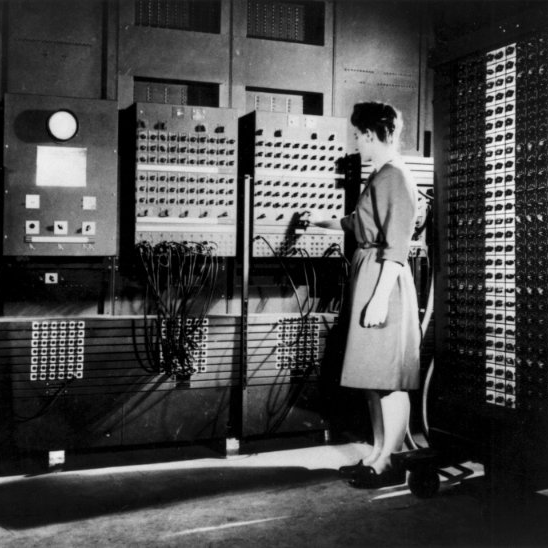

Oops, did I just say we did not own a computer? It’s true. We leased time on a computer that was approximately a thousand miles away. Our engineers would write FORTRAN code on special forms (one character per box, like filling out your tax return). Then, another engineer would “desk check” the code on the forms, manually reviewing it for potential errors. They had to sign a little “desk checked by” line on the coding form, thereby acknowledging that they were now to blame if problems turned up in the code they had reviewed. Then, a keyboard operator manually typed in the new code using a remote terminal with a phone modem. A thousand miles away, the new code was checked into our code base on giant washing-machine-sized disk drives connected to a VAX. Overnight, the new version of the software system would be compiled and run against a bevy of regression tests. The next day, the results and listings from those batch runs were printed out and FedExed back to our offices.

I may be glamorizing that early-1980s development process a bit in my memory. But the truth was, our software worked. It was the best in the industry. It enabled chips to be designed that would have otherwise been impossible. It moved electronics technology forward in a meaningful way.

But, when I say it “worked,” I am being a bit generous. It never, ever worked on the first try, or the second. We’d get a new netlist from a new customer and we’d build the model of the base array and models for all of the components used in the customer’s design. We’d then run the whole thing through our system and it would crash. Badly. Every Single Time. Then, we’d pick up the pieces, sort through the printouts, and spend days or weeks finding and fixing all the places it broke. Eventually, we’d get that one design to work for that one customer. We cheered. It was a modern engineering miracle, a triumph of our team spirit.

Fast forward ten years and the software engineering universe had changed. I was working in a new company on a team where every single software engineer had their own high-powered engineering workstation. All of those workstations were networked (via a problematic “token ring” network, it turns out, but networked nonetheless). We programmed in Pascal. We had dynamic memory allocation, compilers, interactive debuggers, and more. We were creating software for schematic capture, simulation, and analysis of ASIC and PCB designs, and engineering teams all over the world were depending on our software for their design work.

Bug reports flowed in by the thousands. There were so many bugs, we barely had the capacity to classify, count, and track them all – let alone make any meaningful dent in the backlog by actually fixing them. Software update releases became grueling multi-year projects, bogged down by bug backlogs. There had to be a better way – a way to make more robust, maintainable, upgradable software. We were determined to find it.

Our solution was to become one of the earliest adopters of object-oriented design in our industry. We decided to rewrite our entire code base – over eleven million lines, it turns out – in object-oriented C++. At the time, there was not even a real C++ compiler in existence. Instead, we used a translator called Cfront, which converted our object-oriented C++ code into “mangled” C code, which was then compiled with a normal C compiler. When it came time to debug, however, we were stuck trying to debug the auto-generated C code, with its hundred-character function and variable names, and then somehow map the problems we saw there back to the original source code we had written. It was … sub-optimal.

We continued to evolve. A decade later, I was working on high-level synthesis software, and we had sophisticated compilers, debuggers, version control (with check-in, check-out, and merge), configuration management, and robust automated regression testing. We had disciplined vetting and prioritization of bug and enhancement requests. We traced deliverables back to marketing requirements. We even had the beginnings of what would now be called Agile software development.

We were worlds more sophisticated than my team of twenty years earlier. And now, when a new customer sent us a new design to run through our system… It crashed. Just about every time.

Software development has progressed significantly since my time in software engineering. And yet, today, in just about every technology product I buy, software is still the weak link. There will always be problems and bugs and clunky things with a new gadget, and those problems and bugs and clunky things are almost always fixed via a subsequent software patch. To be fair, though, in just about every product I buy, software is also delivering most of the value. The “hardware” portion is often just some kind of generic embedded computing system connected to some collection of sensors, actuators, and human interface devices. All of the behavior that makes it useful comes from software.

The thing is, software is hard – and it just keeps getting harder. Being an expert in software almost always means that you have to be an expert in at least two things – software development, and the discipline in which your application is working. In my case, we had to be expert at both software engineering and chip design. If you’re working on medical software, you have to be expert at both software and medicine. And this is a gross oversimplification. Each of these disciplines has sub-disciplines that are critical to software development – mathematics, signal processing, physics, chemistry, probability – the list goes on and on.

In reality, software engineers are constantly trying to create systems that can outperform their human counterparts. We made place-and-route software because no human engineer could complete the task in any reasonable amount of time. It’s the same with most areas of software development.

As software permeates every aspect of our lives, the need for skilled software engineers grows exponentially. The need for better software development processes, languages, and tools grows as well. There is almost no profession that doesn’t need experts fluent in software development – as well as the other aspects of that profession. Our educational system needs to consider this. In many professional fields – engineering, medicine, and many more – there may well be a need for more programmer-experts than for actual practitioners.

As the proverb goes – give a man a fish, and you feed him for a day. But write him a program that can do it…

@Kevin – and why is it that you defend Dick for claiming “that software, unlike real engineering, never learns from its mistakes” in the Whack-a-Mole piece?

And he proceeded to assert, incorrectly, that software engineers and computer engineers are not even professionals because there is no licensure, when IEEE does in fact provide professional licensure in these fields.

And he asserted, incorrectly, that anybody can claim to be a software engineer, even as you say: “Any twelve year old with a laptop can declare themselves a ‘software engineer’.”

Sure, a 12-year-old can also claim to be a doctor, a lawyer, or even an aerospace engineer … but what an impostor claims is just a claim, without verified formal education and experience to back it up.

Or do you apply the following assertion to ALL of engineering … including electrical, mechanical, aero, chemical, bio, industrial, etc.?

I disagree with your assertion of:

“Adding fuel to the fire, unlike many other professions, there is no community enforcement of standards to practice software engineering. Doctors and lawyers maintain rigorous professional standards for education, certification, and peer review. If you don’t meet those standards, you can’t practice the profession. Any twelve year old with a laptop can declare themselves a ‘software engineer’.”

@TotallyLost, my goal wasn’t to defend Dick. He’s perfectly welcome to defend himself – it’s not my job. I was just disagreeing with your position on these points:

First, I disagree with your repeated insistence that bashing software engineers is “bigotry”. By your oft-cited dictionary definition, rooting for your favorite sports team (and against their rivals) would also be bigotry. Real bigotry is a serious social problem with terrible consequences for the victims. Co-opting that term because you feel your highly privileged professional sub-group is systematically demeaned by other highly privileged professional sub-groups seriously disrespects the victims of actual bigotry. Saying that “Real programmers use assembly, and all those who use high-level languages are just lazy.” doesn’t make me a bigot, and doesn’t make those who use high-level languages marginalized victims. People choose to be software engineers, and are generally well-rewarded for that career choice. People do not get to choose their gender or their race, and the challenges of belonging to a non-privileged group can be severe.

Second, as a software engineer, I just didn’t see the article as attacking software engineers. You and I apparently are wired with different levels of personal sensitivity to that. You’re inferring a lot of intention and motivation behind what appears to me to be simply lamenting the current state of the art of software development. Hey, I lament it too. We all probably think software development needs to improve. I just don’t take that personally.

Third, on this issue of certification – there is a huge difference between professions like medicine and law, and the professional certification of engineers of any type. If you practice law or medicine without a license, you can be jailed. However, the majority of people who practice engineering professionally are not certified professional engineers. Many do not even hold engineering degrees. And only a tiny fraction of those who write commercial software for a living are actually certified professional engineers. In fact, an NSPE track for software has existed for only a few years.

@Kevin – In typical engineering environments, choosing a local sports team to support doesn’t affect one’s paycheck or career … and clearly isn’t bigotry.

However, irrationally asserting that software engineers are not REAL engineers, fail to learn from mistakes, and always produce flawed products does set a tone in the workplace that justifies lower paychecks and fewer career advancement choices, and in real life “is a serious social problem with terrible consequences for the victims.” And that is bigotry.

It significantly adds to the stigma faced by women software engineers. And in my experience, the conflict with men in the workplace drives them to move to other jobs for career advancement, especially to gain access to a management career path.

And it leads to retention problems for good software engineers who are done with being the butt of the joke at the hands of those claiming to be REAL engineers.

So when you proofread and edit an article with such bigotry, be aware that it may draw attention again. This is the second time I’ve done so in this forum; the last time was about a year ago, for the same reasons.

As for never learning from mistakes, consider that nearly all commercial wireless routers lock up during brownouts and fail to reset properly on their own. Power events that make incandescent lights blink lock the routers up frequently … anywhere from several times a day to several times a month. When placed on a UPS, they are stable for a year or more. In the city, where power is stable, this is almost never a problem … does that qualify as a poor hardware design that is nearly industry-wide?

As for never learning from mistakes, consider that a nearby lightning strike on a power pole will transfer the surge into nearby homes’ electrical wiring. Small surge suppressors can absorb only a small number of joules; the rest finds its way through non-isolated switching wall supplies directly onto the electronics’ power rails when the pass MOSFET is turned on … Russian roulette, where the manufacturer doesn’t cover lightning damage. This isn’t a problem for most city people, but it is a problem for most rural people. An isolated power supply design, with overcurrent shutdown of the pass MOSFET and local surge suppression (and managed stray capacitance), would protect the product entirely. Does that qualify as a poor hardware design that is nearly industry-wide?

As for never learning from mistakes, consider that many consumer electronics devices contain components and circuits that are not stable above 95°F, and so require air-conditioned environments where ambient temps are higher. Operation above 85°F will significantly shorten the life span of many of these devices, yet few of them have clear environmental specifications on the box or in the manual (if there even is one). In most cases, small, relatively low-cost design changes for better thermal management would extend the device’s operating range by another 10-20°F and greatly extend its life in non-air-conditioned environments. Does that qualify as a poor hardware design that is nearly industry-wide?

As for never learning from mistakes, consider that single-event upsets (SEUs) are not a significant problem for devices at sea level and rarely disrupt electronics. Above 6,000 ft they become a significant problem, especially during solar storms, for consumer devices with static memory cell designs. Again, nearly every major city is below 6,000 ft, and the vast majority of consumers never see SEU failures in their products … and then there are my customers, who are nearly all above 6,000 ft. Does that qualify as a poor hardware design that is nearly industry-wide?

As for never learning from mistakes, consider that thermal noise limits in the radios of many older 802.11 devices, and some newer ones as well, impair SNR above 95°F … and yet these devices are manufactured for outdoor use where ambient temps frequently exceed 100°F, and case temps run significantly higher due to direct solar radiation on the device. I’ve seen similar failures with other devices, where sun coming through a window heats the device above its thermal limits. Does that qualify as a poor hardware design that is nearly industry-wide?

As for never learning from mistakes, consider that thermal cycling of small SMT parts and BGA parts causes known lifetime problems with solder and bond attachments. Every integrated radio for WISP operations has both of these components in high numbers … and they reliably fail with thermal cycling. Does that qualify as a poor hardware design that is nearly industry-wide?

I also own and operate a rural WISP that serves about 200 families in the mountains of Colorado. The poor electrical and packaging design problems above affect them, as do many others. The software supporting them and the WISP operations is stable. Hardware failures, especially brownout lockups, are frequent.

YMMV

It’s the retention problem in the last paragraph that is of high concern; it lowers overall participation numbers. This mirrors my own experience mentoring women in engineering organizations with glass-ceiling issues, as well as trying to hire and retain software teams in biased organizations where the primary management comes from old-school engineering, sales, and marketing.

June 30, 2013 National Center for Women & Information Technology (NCWIT)

https://www.ncwit.org/blog/did-you-know-demographics-technical-women

Women currently hold more than 51% of all professional occupations in the U.S., and approximately 26% of the 3,816,000 computing-related occupations. (Department of Labor Current Population Survey, 2012)

In 1991, women held 37% of all computing-related occupations. (NCWIT, 2010)

Women comprise 34% of web developers; 23% of programmers; 37% of database administrators; 20% of software developers; and 15% of information security analysts. (Department of Labor Current Population Survey, 2012)

Successful startups have twice as many women in senior roles as unsuccessful companies. (Dow Jones VentureSource, 2012)

Startups led by women are more capital-efficient than the norm, with 12% higher revenues using 33% less capital. (Illuminate Ventures, 2010)

More than half (56%) of women in technology leave their employers at the mid-level point in their careers (10-20 years). Of the women who leave, 24% take a non-technical job in a different company; 22% become self-employed in a technical field; 20% take time out of the workforce; 17% take a government or non-profit technical job; 10% go to a startup company; and 7% take a non-technical job within the same company. (The Athena Factor via The Facts, 2010)

@Kevin – I’ve spent most of my career working on software and hardware with significantly better reliability than the products you describe above: in banking/finance on IBM mainframes, desktop mid-level servers, medical systems, and UNIX operating system development and cross-architecture porting (V5, V6, V7, System III, and System V).

Functional errors to the degree you describe were NEVER allowed in ANY of the products I was a part of. Because of that, there was significantly more rigor in specification, design, coding, and test PRIOR to shipment.

In banking/finance, our code under development typically ran in parallel with production for weeks or months before being released to production. This was ALWAYS true at the banks and larger corporate clients.

In one case, at a very large client in Los Angeles, we ran in parallel for nearly a full year, fully verifying quarterly and annual reports for AR, AP, GL, orders, forecasting, inventory, and multiple union payrolls, before retiring the old system in April. On that project, 90% of the report discrepancies turned out to be bugs in the old system, which we fixed in parallel with the new development system.

We never allowed significant errors that would compromise stability into any of the UNIX ports, and we never released without passing significant synthetic load testing. We also worked with the broader UNIX community to clear out bugs in the AT&T UNIX source product, to the point that it wasn’t uncommon for servers to accumulate a year or more of uptime.

I had similar experiences working on medical devices and MRI machines … rigorous specification, design, coding, test, and extensive in-house regression testing PRIOR to any customer release. VERY few software bugs of any significance, and NONE that compromised patient safety or diagnostic accuracy.

I’ve worked with engineers from aerospace, with equally strong rigor from specification to release, where the pressure was absolute … make a single mistake that caused a flight system to fail, and it was the end of your career in that industry. Banking was nearly as absolute, in that failing to test fully would get you removed from work on the core banking systems.

In nearly every shop I have ever worked in, management has decided when to release and which minor bugs/errata were acceptable to release with. I have never worked on a product that we released with known critical problems, or even known moderate problems … and significant problems have rarely been found in the field after release. In every case, development hit the mark before internal release to QA, and it was relatively rare for QA to find significant problems, or for significant problems to come back from the field.

I have done consulting in poor shops where development doesn’t even test … they toss garbage at QA to meet deadlines that were never really met with verified, fully tested code. I’ve also done contracts in shops with exceptionally poor QA teams. Both of these are management and staffing problems. Most of these companies failed within a few years, sometimes within a few months. With such poor management, that was inevitable.

Good hardware engineers MUST embrace design for test (DFT) and built-in self-test (BIST) to support manufacturing test, in partnership with test engineering early in the design process. This is always necessary so that test fixtures are available early in the testing process and mature by manufacturing release.

Good software engineers MUST also embrace developing regression tests and synthetic workloads during the development process, which become part of the full hand-off to QA. A good QA team also has its own test engineers to expand on the development team’s regression tests and test coverage.

In good hardware and software development shops there is ALWAYS a partnership between development and test/mfg/QA from the beginning of the project, all the way to ship. This is necessary to manage both time to market and quality.

Any time this is violated, quality suffers for both hardware and software products. Hopefully, managers who make this mistake lose their jobs quickly. If you are an engineer in one of these poor shops, it’s best to advocate strongly for best practice, and to get out when management argues that it’s not necessary.