Privacy is a gnarly problem. It’s a big concern for consumers – part of the reason why the consumer Internet of Things (CIoT) isn’t yet the big deal that proponents were hoping it would be by now. There are no purely technical solutions – or, perhaps better said, any technical efforts to boost privacy ultimately rely on people and the policies they create. Policies are great, but does reality match the policy?

At this point, it has fallen to non-profit organizations to monitor privacy enforcement, naming and shaming if necessary as a way to ensure both friendly policy and adherence to that policy. One such Canadian group is called Open Effect, and they did a recent study on the privacy of data collected by wearable fitness devices. I’ll link to the study below, where you can get the full story, but the thing that got me, and what I’ll focus on here, is the technical side of how they tested the systems. That makes this more of a security story than a privacy story.

At the highest level, they had three questions for this study:

- What are the privacy policies for a given company?

- What data do they define as “personal” (since only personal data is typically considered private)?

- If data is requested from one of these companies, does the reality of that data fit the definitions of personal data, and are the stated policies followed?

It’s one thing simply to read documents and then submit requests, but these guys went further, looking for technical proof of what data is collected by watching the wireless data stream, where possible. It’s probably not surprising that they found issues, and, as is typical with responsible organizations, they first presented their findings to the affected companies so that those companies could fix any problems before the report was published (which a couple of them did).

The main criterion they used for picking companies to study was market share as measured by popularity on Google Play in mid-2015. But they also added two companies for two specific reasons: they wanted to include a Canadian company, and they wanted to include one company in particular that tracks women’s health issues in more depth.

So they landed on the following wearables:

- Apple Watch

- Basis Peak

- Bellabeat LEAF (higher focus on women’s issues)

- Fitbit Charge HR

- Garmin Vivosmart

- Jawbone Up 2

- Mio Fuse (Canadian)

- Withings Pulse O2

- Xiaomi Mi Band

The first job was to learn what data was sent from the device to the Cloud. They did this by capturing packets from the various mobile apps as they radioed the data via WiFi.

“But,” you might object, “they can’t do that because… security!” And you’d hope that you’d be right. In some cases you would be, sort of, although there were workarounds for some of those, too. (Apple fans will be glad to know that what follows didn’t work on the Watch.)

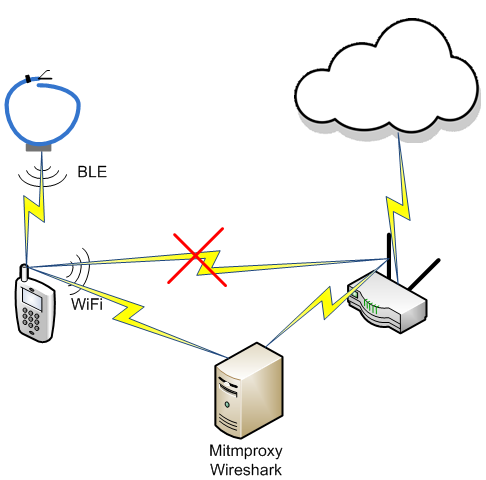

Remember that the gadgets communicate only with the phone, via Bluetooth Low Energy (BLE); the phone acts as a relay to the Cloud via WiFi. So hacking the Cloud connection means messing with the WiFi path from the phone to the router. The first thing they did was to create a custom certificate for use on the phone. They then put together a local server running a program called “Mitmproxy.” And with that, you can probably see where they’re heading.

When the phone tried to make a connection to the Cloud server, the Cloud server would send a certificate as part of the TLS handshake. But the local server would intercept that certificate and replace it with a new certificate signed with the key behind the custom certificate installed on the phone. So, while a phone outside the lab wouldn’t trust that new certificate, the systems in the test lab did, and that let them capture the traffic.

“Mitm,” of course, stands for “man in the middle,” and that’s what they constructed: a way to read and even to modify the traffic that was heading to the Cloud.
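To make the trust question concrete, here is a toy Python model of the decision the phone makes during the handshake. It is only a sketch: real TLS validation checks signatures, validity dates, and full certificate chains, and every name here (`Cert`, `is_trusted`, the host, and the CA names) is made up for illustration rather than taken from the study or from Mitmproxy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cert:
    subject: str  # host name the certificate claims to represent
    issuer: str   # name of the authority that signed it


def is_trusted(cert, hostname, trust_store):
    """Toy check: the cert must name the host and be signed by a trusted CA."""
    return cert.subject == hostname and cert.issuer in trust_store


# All names below are hypothetical.
HOST = "api.fitness.example"
real_cert = Cert(subject=HOST, issuer="Public CA")
forged_cert = Cert(subject=HOST, issuer="Lab CA")  # minted by the proxy

stock_phone = {"Public CA"}
lab_phone = {"Public CA", "Lab CA"}  # custom root installed by the testers

print(is_trusted(forged_cert, HOST, stock_phone))  # False
print(is_trusted(forged_cert, HOST, lab_phone))    # True
```

The only difference between the two phones is one extra entry in the trust store, which is why installing the custom certificate was the first step.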

They used a program called “Wireshark” for analyzing the traffic. It would reassemble the packets into the original messages, identify the IP addresses of the other ends of the communication stream, and tell them about what security was in place. And they took the private key from the custom certificate and gave it to Wireshark so that it could decrypt payloads heading to the Cloud (with the help of session keys that it intercepted). The drawing above is a rough illustration of the setup.

Once they had this set up, they performed various tasks on the devices or on the mobile apps and then watched the resulting HTTP or HTTPS traffic to the Cloud. The tasks performed were:

- Signing up for the app;

- Logging into the app;

- Logging out of the app;

- Editing the user profile;

- Editing the privacy settings;

- Editing other settings;

- Sharing and/or adding friends;

- Pairing the fitness device with the phone;

- Syncing with the Cloud;

- Syncing with the device; and

- Logging activities manually.

When watching the traffic, they looked for various artifacts: keywords, key/value pairs or other structured data, and specific strings such as the MAC address, the IMSI number (SIM card ID) or the IMEI number (phone ID).
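A search like that can be sketched in a few lines of Python. This is not Open Effect’s tooling; it assumes the decrypted request body is form-encoded, and the field names and values in the sample are invented. It flags values that look like a MAC address, and it splits 15-digit numbers into those that pass the Luhn check (as an IMEI does) and those that don’t (which might be an IMSI or something else entirely).

```python
import re
from urllib.parse import parse_qsl

MAC_RE = re.compile(r"(?:[0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}")
FIFTEEN_DIGITS_RE = re.compile(r"\d{15}")


def luhn_ok(number):
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for position, char in enumerate(reversed(number)):
        digit = int(char)
        if position % 2 == 1:  # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def find_artifacts(body):
    """Sort the key/value pairs of a form-encoded body into identifier buckets."""
    found = {"mac": [], "imei_candidate": [], "other_15_digit": []}
    for key, value in parse_qsl(body):
        if MAC_RE.fullmatch(value):
            found["mac"].append((key, value))
        elif FIFTEEN_DIGITS_RE.fullmatch(value):
            kind = "imei_candidate" if luhn_ok(value) else "other_15_digit"
            found[kind].append((key, value))
    return found


# Hypothetical captured body; none of these fields come from a real app.
sample = ("user=alice&mac=00%3A1A%3A7D%3ADA%3A71%3A13"
          "&imei=490154203237518&sub=310150123456789")
print(find_artifacts(sample))
```

A pattern match is only a hint, of course. A 15-digit number that passes the Luhn check still has to be compared against the phone’s actual IMEI before anyone can claim the app is sending it.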

In some Android cases, if they couldn’t quite figure out what data they were seeing, or if they couldn’t decrypt the data, they actually went in and looked at the app code and even made modifications. App source code is compiled into bytecode and then assembled into a package for download. They used a program called “apktool” to pull apart the package and get to the bytecode. They then used “jadx” to turn that back into Java code, where they could make more sense of what was going on.

In one case, their certificate hack didn’t work: the Basis Peak uses what they call “certificate pinning.” Effectively, the certificate that the app expects from the server is hard-coded, so the cert swap wouldn’t work. Sounds like good security (and it’s one of Open Effect’s ultimate recommendations), but they were able to get around it. They reconstructed the Java source and eliminated the code that enforced the cert pinning. They recompiled the new code, and – voilà – now they could monitor the traffic.
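For a feel of what a pinning check does, here is a minimal sketch. It is written in Python for consistency with the other examples here, not in the Java the app would actually use, and it is not the Basis code. The certificate bytes are stand-ins, and the names `fingerprint` and `pin_matches` are hypothetical.

```python
import hashlib
import hmac


def fingerprint(cert_der):
    """SHA-256 fingerprint of a certificate's raw bytes, as a hex string."""
    return hashlib.sha256(cert_der).hexdigest()


# Stand-in bytes. A real app would ship the fingerprint of its server's
# actual DER-encoded certificate (or of its public key).
GENUINE_CERT = b"genuine-server-certificate"
PINNED_FINGERPRINT = fingerprint(GENUINE_CERT)


def pin_matches(presented_cert, pinned=PINNED_FINGERPRINT):
    """Accept the connection only if the presented cert matches the pin."""
    return hmac.compare_digest(fingerprint(presented_cert), pinned)


print(pin_matches(GENUINE_CERT))                 # True
print(pin_matches(b"proxy-minted-certificate"))  # False
```

Note where the check lives: inside the app. That is why deleting it from the decompiled source was enough to make the proxy’s certificate acceptable again.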

They also looked at the BLE advertising to see how the MAC address was handled. BLE devices advertise their presence when not paired, and part of that advertising packet is the MAC address. Concerns have been raised about this: if you walk through a mall full of stores full of beacons, someone could monitor the advertising packets with your MAC address and track where you are.

The solution to that is to use randomized MAC addresses. While advertising, a fake MAC address is used, and it changes every so often. That way no one can track the device by the MAC address. Once paired, it uses its real MAC address. There are two ways to randomize the MAC address: purely random, which is called “unresolvable,” and “resolvable,” where a key-like mechanism allows the true MAC address to be derived (or resolved) from the fake address.
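In BLE, the kind of random address is encoded in the two most significant bits of the address itself, so a sniffer can tell the flavors apart. The sketch below assumes the address is written most-significant byte first and that the advertising packet has already marked it as random rather than public (that flag travels separately, not in the address). The function name and sample addresses are made up.

```python
def random_address_type(address):
    """Classify a BLE random address by the top two bits of its first byte."""
    top_byte = int(address.split(":")[0], 16)
    top_bits = top_byte >> 6
    kinds = {
        0b00: "non-resolvable private",
        0b01: "resolvable private",
        0b11: "static random",
    }
    return kinds.get(top_bits, "reserved")


# Hypothetical addresses, chosen only to exercise each bit pattern.
for addr in ("2B:10:20:30:40:50", "5A:01:02:03:04:05", "C4:11:22:33:44:55"):
    print(addr, "->", random_address_type(addr))
```

A static random address doesn’t rotate while the device is powered, so from a tracking point of view it behaves much like a fixed MAC address.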

They monitored MAC addresses over several months to see if they ever changed. Turns out that the Apple Watch was the only device using randomization, changing the MAC address at reboot and approximately every 10 minutes. They used a phone app called RamBLE on Android phones to track locations; it demonstrated that it’s possible to create a map of where you’ve been from the MAC address.
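The tracking risk is easy to demonstrate with a toy example. This is not how RamBLE works internally; it just groups made-up sightings (time, address, place) by address to show why a fixed address produces a trail and a rotating one doesn’t.

```python
from collections import defaultdict


def trails_by_address(sightings):
    """Group (time, address, place) sightings into one ordered trail per address."""
    trails = defaultdict(list)
    for when, address, place in sorted(sightings):
        trails[address].append(place)
    return dict(trails)


# Hypothetical sightings from scanners around a mall.
fixed = [
    (1, "C4:11:22:33:44:55", "entrance"),
    (2, "C4:11:22:33:44:55", "shoe store"),
    (3, "C4:11:22:33:44:55", "food court"),
]
rotating = [
    (1, "5A:01:02:03:04:05", "entrance"),
    (2, "6B:0A:0B:0C:0D:0E", "shoe store"),
    (3, "7C:10:20:30:40:50", "food court"),
]

print(trails_by_address(fixed))     # one address, three places
print(trails_by_address(rotating))  # three addresses, one place each
```

With the fixed address, the observer gets a single trail through all three locations. With rotation, the same walk looks like three unrelated devices.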

Examples of other security bypasses were found for Garmin and Withings (who have since fixed the following issues, so it’s too late for you to use them). Remember that HTTPS uses TLS; HTTP doesn’t, meaning that everything is in the clear if you capture the packets. Garmin and Withings didn’t use HTTPS except in certain cases. Garmin used it only for creating an account, logging in, and logging out. The rest of the data was sent using HTTP.
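Spotting that kind of mixed usage in a capture comes down to sorting requests by scheme. Here is a small sketch; the URLs are invented placeholders, not Garmin’s real endpoints.

```python
from urllib.parse import urlsplit


def cleartext_requests(urls):
    """Return the URLs that would travel unencrypted (plain HTTP)."""
    return [url for url in urls if urlsplit(url).scheme.lower() == "http"]


# Hypothetical capture: login and logout protected, everything else exposed.
captured = [
    "https://connect.example/login",
    "http://connect.example/activity/upload",
    "https://connect.example/logout",
    "http://connect.example/profile",
]
for url in cleartext_requests(captured):
    print("cleartext:", url)
```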

Meanwhile, Withings mostly used HTTPS, except when adding a social media email address for sharing. And that was all Open Effect needed: they looked at that HTTP packet to get the user ID and session ID (which changed roughly every 15 minutes). Someone familiar with the Withings API would have been able to use those identifiers to download data – at least until the session ID changed.

The Bellabeat, for its part, uses HTTPS for everything within the app, but when you do a password reset, it sends a link in an email, and that link takes you to a page via HTTP, not HTTPS. Once you’re there and enter your data, that data is sent via HTTPS, but someone could modify the code on the HTTP page to send a clear-text copy of the data somewhere else in addition to sending it where it’s supposed to go. Yeah, this has also been fixed.

In this process, they were also able to send fake data to the Bellabeat, Jawbone, and Withings Cloud servers. For example, they told the Jawbone server that they took 10,000,000,000 steps in one day; the server was perfectly content to record that rather busy day. “So what,” you say? This data could potentially be used in court to prove you were or were not where you said you were on the night of the 28th. (Voting? Oops…) If someone can fake the right data, then either they can cover their own fake alibi, or someone else can disprove their real alibi, just to take one example of how manipulated data might be used.

Whether or not the law considers this data reliable enough to be admissible in court doesn’t seem to be quite worked out yet, but you know that lawyers for both sides are going to fight the good (or bad) fight until told that it’s settled one way or the other.

That aside, it seems that allowing someone to upload fake data is, on its face, not a great policy.
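Even a crude sanity check on the server would have caught the ten-billion-step day. The sketch below is an assumption-laden illustration, not anything from the report: the 200,000-step ceiling is an arbitrary number picked for the example, and the function name is hypothetical.

```python
# Assumed ceiling for illustration only; a real service would pick its own.
MAX_PLAUSIBLE_STEPS_PER_DAY = 200_000


def plausible_daily_steps(steps):
    """Accept only whole, non-negative step counts below the assumed ceiling."""
    if isinstance(steps, bool) or not isinstance(steps, int):
        return False
    return 0 <= steps <= MAX_PLAUSIBLE_STEPS_PER_DAY


print(plausible_daily_steps(12_000))          # True
print(plausible_daily_steps(10_000_000_000))  # False
```

A range check like this only filters out the absurd. It does nothing to stop someone from uploading a believable fake, which is the case that would actually matter for an alibi.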

There are lots and lots of other things they learned – it’s a pretty substantial report, and I’ll let you read it yourself. We’ve been talking security here, while the main point of the report is privacy. But I was really struck by how handily the Open Effect team was able to bypass what security was in place. Even when you think you’ve done a thorough job, there may be one minor thing – like the password reset email – that undermines all of that effort.

I sometimes wonder, when I’m in a naïve state of mind, why, with all the ironclad security technology available, doesn’t everyone simply put it in place and keep out the intruders? This shows just a few examples of how hard that can be.

More info:

Open Effect study (PDF)

What do you think about Open Effect’s efforts to test the security (and privacy) of fitness devices?