One of the notable skills repeatedly demonstrated by director Robert Altman was the ability to take a number of smaller stories and knit them together into a larger story. You know, one narrative that pulls the distinct, seemingly unrelated bits together into a larger truth.

Today, we’ve got a few – 3, to be specific – little stories. How to weave them together? What’s the common theme? Well, test, for one. But that’s a blah theme – on reserve in case there’s nothing else.

How about… 10X – as in, many of the bits have 10X improvements to this or that? But… nah, already called that out in the past. It’s been done. Never mind that.

Yeah, kinda not coming up with much here, so I guess we’re left with a pot-pourri of test-related bits from some concurrent announcements by Synopsys. Then again, I’m no Robert Altman, and we’re dealing with business and marketing as well as technology, so “larger truths” tend not to be the order of the day. So let’s just take them one at a time.

Faster Patterns Faster

The first has to do with the creation of test vectors. It’s obvious that test time – the actual execution of test vectors – has a critical impact on chip and system cost. Test equipment is expensive, and each extra second a device-under-test (DUT) sits atop the jig makes the DUT more expensive.

But that’s only part of the story. Someone – or some tool – has to come up with the vectors being rammed through the device. It’s automatic test pattern generation (ATPG). A generic term, to be sure, and it’s been around for a long time. In fact, in my first few years of electronic employ, I was working on ad hoc ATPG algorithms for getting the innards of my PAL devices properly tested.
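For anyone who hasn't seen ATPG up close, here's a toy sketch of the idea. Everything in it is an assumption made for illustration: the three-input circuit, the net names, the single stuck-at fault model, and the brute-force search. A production tool uses far smarter algorithms on vastly bigger designs.

```python
from itertools import product

# Hypothetical 3-input circuit: out = (a AND b) OR (NOT c).
# Internal nets are named so that faults can be injected on them.
NETS = ["a", "b", "c", "n1", "n2", "out"]

def simulate(a, b, c, fault=None):
    """Evaluate the circuit; fault is (net, stuck_value) or None."""
    def drive(net, value):
        # A stuck-at fault overrides whatever the logic would drive.
        if fault is not None and fault[0] == net:
            return fault[1]
        return value
    a = drive("a", a)
    b = drive("b", b)
    c = drive("c", c)
    n1 = drive("n1", a & b)
    n2 = drive("n2", 1 - c)
    return drive("out", n1 | n2)

def generate_patterns():
    """Greedy brute force: keep a vector only if it detects a new fault."""
    faults = {(net, v) for net in NETS for v in (0, 1)}
    patterns = []
    for vec in product((0, 1), repeat=3):
        good = simulate(*vec)
        detected = {f for f in faults if simulate(*vec, fault=f) != good}
        if detected:
            patterns.append(vec)
            faults -= detected
    return patterns, faults  # any faults left over went undetected

patterns, undetected = generate_patterns()
print(len(patterns), "patterns;", len(undetected), "faults undetected")
```

On this tiny circuit, five of the eight possible input vectors are enough to catch all twelve stuck-at faults. The exhaustive loop is exactly what doesn't scale, which is why real ATPG is a hard problem.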

My modest software would generate a pattern in a few seconds. And other contemporaneous, more sophisticated software (the professional kind) tackling harder problems might take a few minutes or so.

With that as background, I don’t instinctively think of ATPG as something that takes particularly long. Especially since (in theory) it’s a one-and-done kind of thing: you generate the vectors once and then run them during test. You might be forgiven for focusing entirely on the execution time of those vectors, since that’s what’s going to happen over and over again – each time adding cost to the DUT.

But learning that ATPG for a modern SoC can take, according to Synopsys, as much as 800 hours – that’s 33 days – kinda changes that calculus. Especially if you find you need to rerun it more than once. That ATPG time is getting in the way of chip shipments, since other tasks for readying silicon have been sped up, making ATPG a longer pole in the tent. Synopsys says that they’ve improved their long pole by 10X.

One thing to keep in mind about ATPG is that it is tied to the test hardware on an SoC. This is something that we looked at quite some years ago. The idea is that compression logic is added to the SoC, and compressed vectors are fed in; they’re expanded on-chip for executing tests. The tests are pass/fail (finding the cause of a failure is “diagnosis” – more on that in a moment).

So the complete test solution is a combination of the hardware on a chip and the vectors generated by software off-chip. If you change the hardware, then the vectors have to change. On the flip side of that, you can change vector generation only so much before you need to change the hardware as well.
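To make that hardware/vector coupling a little more concrete, here's a minimal sketch of on-chip expansion. The XOR wiring below is purely my assumption for illustration (three tester channels feeding six scan chains); it is not Synopsys's compression scheme, which is considerably more elaborate.

```python
# Hypothetical wiring: each of 6 internal scan chains is fed by the XOR
# of a fixed subset of the 3 tester channels.
CHAIN_TAPS = [(0,), (1,), (2,), (0, 1), (1, 2), (0, 2)]

def expand(compressed_slice):
    """Expand one shift cycle of tester bits into one bit per scan chain."""
    out = []
    for taps in CHAIN_TAPS:
        bit = 0
        for t in taps:
            bit ^= compressed_slice[t]
        out.append(bit)
    return out

def expand_pattern(compressed):
    """Expand a whole compressed pattern, one shift cycle at a time."""
    return [expand(s) for s in compressed]

compressed = [(1, 0, 0), (0, 1, 1), (1, 1, 0)]   # 9 bits from the tester
expanded = expand_pattern(compressed)            # 18 bits inside the chip
for row in expanded:
    print(row)
```

The vector generator has to know `CHAIN_TAPS` in order to pick tester bits that expand into the values a test needs. Change the wiring, and the same compressed bits land somewhere else entirely.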

So the first qualification I should make here is that Synopsys’s ATPG improvements involve only the vectors – there’s no change to the underlying test implementation hardware on the chip.

They point to three different ways they improved vector generation time. One is on the obvious side: better algorithms. But it’s also about better implementations of algorithms in some cases as well. Regardless, the result is more algorithmic goodness – but that also points to the fact that there are multiple algorithms from which the ATPG tool can pick and choose.

So the second improvement was making that selection process more efficient.

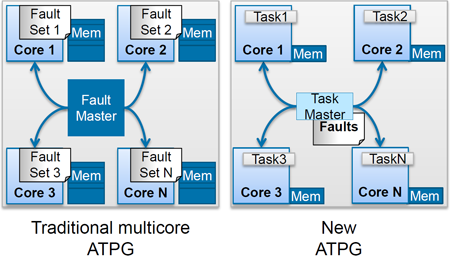

Finally, they turned the multi-threading strategy on its head. In the past, different fault sets would be assigned to different cores, but Synopsys found this to require lots of memory while using cores only to about 25% of capacity. Not efficient.

So instead, they redid implementations in a way that could be parallelized to a finer level of granularity. Now they’re dispatching lower-level tasks and getting much better utilization.

(Image courtesy Synopsys)
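Here's a back-of-the-envelope sketch of why granularity matters for core utilization. The workloads are numbers I made up, the two scheduling functions are hypothetical, and nothing here models memory use or dispatch overhead; it only illustrates the load-balancing effect, not Synopsys's actual threading.

```python
import heapq

def static_partition_utilization(fault_set_costs, cores):
    """One big fault set per core; a core idles once its own set is done."""
    loads = [0] * cores
    for i, cost in enumerate(fault_set_costs):
        loads[i % cores] += cost
    makespan = max(loads)
    return sum(loads) / (cores * makespan)

def fine_grained_utilization(task_costs, cores):
    """Small tasks handed to whichever core frees up first."""
    finish_times = [0] * cores
    heapq.heapify(finish_times)
    for cost in task_costs:
        earliest = heapq.heappop(finish_times)
        heapq.heappush(finish_times, earliest + cost)
    makespan = max(finish_times)
    return sum(task_costs) / (cores * makespan)

coarse = [40, 4, 3, 1]   # four lopsided fault sets, 48 work units total
fine = [1] * 48          # the same 48 units cut into unit-sized tasks

print(static_partition_utilization(coarse, cores=4))  # one slow set dominates
print(fine_grained_utilization(fine, cores=4))        # work spreads evenly
```

With these invented numbers, the coarse split keeps the four cores busy only 30% of the time, because three of them finish early and wait on the fourth. The fine-grained version keeps all of them fully loaded.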

Those three efforts were predominantly what gave them the better generation time. But, as it turns out, vector execution time didn’t get completely left out. At the same time, they claim to generate 25% fewer vectors. (I’m going to go out on a limb here and assume that’s for equivalent coverage.) So test time gets a break as well.

They also improved diagnosis time by 10X. This is the extra work the tool has to do to take a specific failure and trace it back through the logic to see which specific faults might have contributed to it.
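As a rough illustration of diagnosis, here's a sketch that swaps in the simplest approach I can think of: simulate every candidate fault and keep the ones whose predicted failures match what the tester saw. The circuit, pattern set, and function names are all hypothetical, and a real tool works very differently at scale.

```python
# Hypothetical circuit: out = (a AND b) OR (NOT c)
NETS = ["a", "b", "c", "n1", "n2", "out"]

def simulate(vec, fault=None):
    """Return the output for input vec; fault is (net, stuck_value) or None."""
    values = dict(zip("abc", vec))
    def settle(net, value):
        # A stuck-at fault overrides the value the logic would produce.
        if fault is not None and fault[0] == net:
            value = fault[1]
        values[net] = value
    for net in "abc":
        settle(net, values[net])
    settle("n1", values["a"] & values["b"])
    settle("n2", 1 - values["c"])
    settle("out", values["n1"] | values["n2"])
    return values["out"]

def diagnose(patterns, failing):
    """Keep faults whose predicted failing patterns match the observed ones."""
    candidates = []
    for net in NETS:
        for stuck in (0, 1):
            fault = (net, stuck)
            predicted = {p for p in patterns
                         if simulate(p, fault) != simulate(p)}
            if predicted == failing:
                candidates.append(fault)
    return candidates

PATTERNS = [(0, 0, 0), (0, 0, 1), (0, 1, 1), (1, 0, 1), (1, 1, 1)]
candidates = diagnose(PATTERNS, failing={(1, 1, 1)})
print(candidates)
```

A device that fails only the all-ones pattern points at three suspects here: `a` stuck at 0, `b` stuck at 0, or the AND output `n1` stuck at 0. They're indistinguishable from the output pin, which hints at why diagnosis takes real effort.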

So that’s announcement 1: faster ATPG by 10X; faster diagnosis by 10X; fewer vectors by 25%.
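For a sense of scale, here's the arithmetic on those claims. Two assumptions of mine are baked in: that the 10X applies cleanly to the 800-hour worst case Synopsys cites, and that test time scales linearly with vector count. Neither is something the announcement spells out.

```python
HOURS_PER_DAY = 24

atpg_hours_before = 800                    # worst case cited by Synopsys
atpg_hours_after = atpg_hours_before / 10  # assumes the full 10X applies

days_before = atpg_hours_before / HOURS_PER_DAY
days_after = atpg_hours_after / HOURS_PER_DAY

# Assumes tester time is proportional to the number of vectors.
relative_test_time = 1 - 0.25

print(f"ATPG: {days_before:.1f} days -> {days_after:.1f} days")
print(f"Test time: {relative_test_time:.0%} of what it was")
```

Under those assumptions, a month-long ATPG run drops to a bit over three days, and each DUT spends about three quarters of the time on the tester that it used to.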

Stay in Your Lane

Announcement 2 has to do with validation of automotive designs per ISO 26262. If you’re not familiar with how it and other similar standards work, you’re more or less tasked with proving why a given design is safe for keeping our airplanes in the air or (in the specific case of 26262) our cars on the road, playing nicely with other cars on the road.

There’s no one rule on how you do this; you work with a consulting team to build a case based not only on the specifics of the design, but also on the process by which the design came about – creation, verification, validation, and all that. You prove that all requirements were met and that, conversely, there are no design bits – software or hardware – that don’t serve specific requirements. No Easter eggs. No flight simulator. (OK, unless you’re specifically building a flight simulator.)

And this can involve an endless chain of, “How do you know that works?” “Because this tool says so.” “Well, how do you know that tool is right?” To that last one, answering, “Because I paid an arm and a leg in licensing fees and it bloody well better be right!” isn’t considered good form.

But it’s also silly to require multiple teams to prove commonly-used tools multiple times. So it’s possible to take specific tools and create a project that proves out the tool. That proof then can be reused by numerous design projects, saving oodles of time.

And that’s what Synopsys has just announced with respect to their test tools. Specifically, TetraMAX ATPG, their DesignWare STAR Memory System, and their STAR Hierarchical System have been certified by SGS-TUV for even the most safety-critical designs (Automotive Safety Integrity Level, or ASIL, D).

That certification can now be used to cut out a bunch of time that might otherwise be required to prove that these tools aren’t committing anything nefarious or negligent when they run.

Are You Aware of Your Cell?

Finally, you may recall last year’s Synopsys announcement of cell-aware testing (right about this time of year… it’s that ITC thing) as well as our more detailed coverage of the technology a few years ago. Well, Synopsys has made some more improvements on an important step that cell-aware testing brings to the process.

Apparently, despite the better coverage that cell-aware testing can provide, there’s still some resistance to it because the process requires extra steps. The major new step is cell-aware characterization: going through the design to model intra-cellular faults. They’ve sped this step up by – you guessed it – 10X. And by so doing, they’re hoping they’ve also reduced the barrier to cell-aware testing.

So there you have them: three separate test-related mini-stories. I’m sure Mr. Altman could have woven them into some bigger theme, no matter how improbable. But you’re stuck with me, so I present them as is.

Will Synopsys’s test improvements improve your life?