PCs have a rudimentary form of power management. Under a limited set of circumstances, a PC can reduce its own power consumption without you having to put it to sleep manually. In my experience, the events that can trigger a power-down are inactivity and lid closure, and the power savings come from turning off the display and entering a sleep or hibernate state. This is pretty much the extent of what’s possible using the top level of the Power Options utility.

But let’s say you want to be a good, safe computer user and back up your system. With many backup programs, it’s easier to do this while you’re not using the computer. I’ve found one program, for instance, that can back up email files to the cloud. But each time you get an email, the email storage file changes, causing a backup restart that can block the backup of numerous other files.

So you schedule a backup at, say, 2 AM. No one is sending you emails then, and your work isn’t competing with the backup for CPU time. Perfect, right?

Well, not if you last touched the computer at 10 PM and set it to go to sleep after an hour of inactivity. When 2 AM rolls around, the computer is asleep and doesn’t notice it, and no backup happens.

This is a gross example that illustrates two challenges with this – and most – systems. First off, in order for the computer to be able to wake from a sleep at 2 AM, run your job, and then go back to sleep, there has to be some piece of hardware that stays awake, monitoring the time and any scheduled tasks so that it can wake the main computer when the time comes. That’s not part of the standard PC architecture (as far as I’m aware).

But even if that hardware were available, Windows (as currently configured, and I consider Windows 7 to be the latest usable version of Windows) wouldn’t be able to provide access to that hardware feature. More intelligent power-down options require both hardware that can handle it and an OS that can make the choices available.

Of course, the real challenge is automating these power-down options. There are some high-level decisions that users can make, but asking Uncle Morty whether a particular cache or core should be shut down isn’t going to go down well.

So this leaves the question: how can we power down as much of a circuit as possible and then let the OS take advantage of that? And, while I’ve used the PC as a familiar example, there are two main drivers here, according to Sonics: power-sensitive edge nodes on the Internet of Things (IoT) and power-sucking high-performance computers. Energy Star ratings will also make power management attractive as the electronic content in consumer items increases.

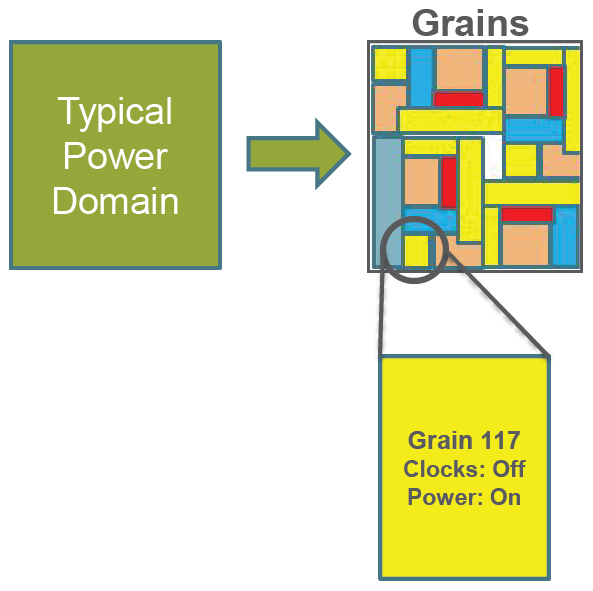

Let’s start with hardware. Sonics, better known for network-on-chip (NoC) technology, has announced ICE-Grain, an architecture for reducing power in SoCs and doing so with a fine level of granularity. And granularity really is the key in this whole discussion. Part of the problem with the PC example is that you’re powering the entire machine on or off – not bits and pieces of it.

If granularity is too coarse, then you’ve got an all-or-nothing approach. You might want to turn off a large block, but if a tiny portion of that block needed to remain awake, then the whole block would have to remain awake, wasting most of the energy used to power the block. By allowing power control of blocks at a much lower level, you have a better chance of leaving awake only those blocks that have to be awake. Exactly where to draw those block boundaries is part of the design process.
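To put rough numbers on the all-or-nothing problem, here is a minimal sketch. The unit names, the domain layout, and the 10 mW figure are invented for illustration and do not come from Sonics.

```python
def awake_power_mw(domains, needed, mw_per_unit=10):
    """Power drawn when every domain containing a needed unit stays on.

    domains: list of sets of unit names, one set per power domain.
    needed:  set of unit names that must stay awake.
    mw_per_unit is a made-up, uniform per-unit power draw.
    """
    # A domain is all-or-nothing: if any unit in it is needed,
    # every unit in that domain keeps drawing power.
    return sum(len(d) * mw_per_unit for d in domains if d & needed)


units = [f"u{i}" for i in range(8)]
coarse = [set(units)]            # one big power domain
fine = [{u} for u in units]      # one power domain per unit
needed = {"u3"}                  # only one unit has to stay awake

coarse_mw = awake_power_mw(coarse, needed)   # 80: the whole block stays on
fine_mw = awake_power_mw(fine, needed)       # 10: only u3 stays on
```

With the coarse layout, seven of the eight units burn power only because they share a domain with the one that is needed.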

(Image courtesy Sonics)

The thing is, deciding when to power off a particular block can be tricky. One resource may be finished with a block and declare that the block can sleep, but another resource may still be counting on it, so it can’t be shut off. In reality, this is a hierarchical set of power control nodes with potentially complex dependencies.
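One simple way to picture the "someone else is still counting on it" rule is to have each block track who is using it. The sketch below is a hypothetical illustration of that idea only; it ignores the hierarchy mentioned above and is not a description of how any real controller works.

```python
class PowerDomain:
    """A block that may sleep only when no resource still depends on it."""

    def __init__(self, name):
        self.name = name
        self.users = set()    # resources currently counting on this block
        self.awake = False

    def acquire(self, user):
        """A resource declares that it needs the block; wake it if asleep."""
        self.users.add(user)
        self.awake = True

    def release(self, user):
        """A resource is finished; sleep only if nobody else remains."""
        self.users.discard(user)
        if not self.users:
            self.awake = False


sram = PowerDomain("sram")
sram.acquire("cpu")
sram.acquire("dma")
sram.release("cpu")
still_awake = sram.awake     # True: the DMA engine still needs the block
sram.release("dma")
now_asleep = not sram.awake  # True: the last user has let go
```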

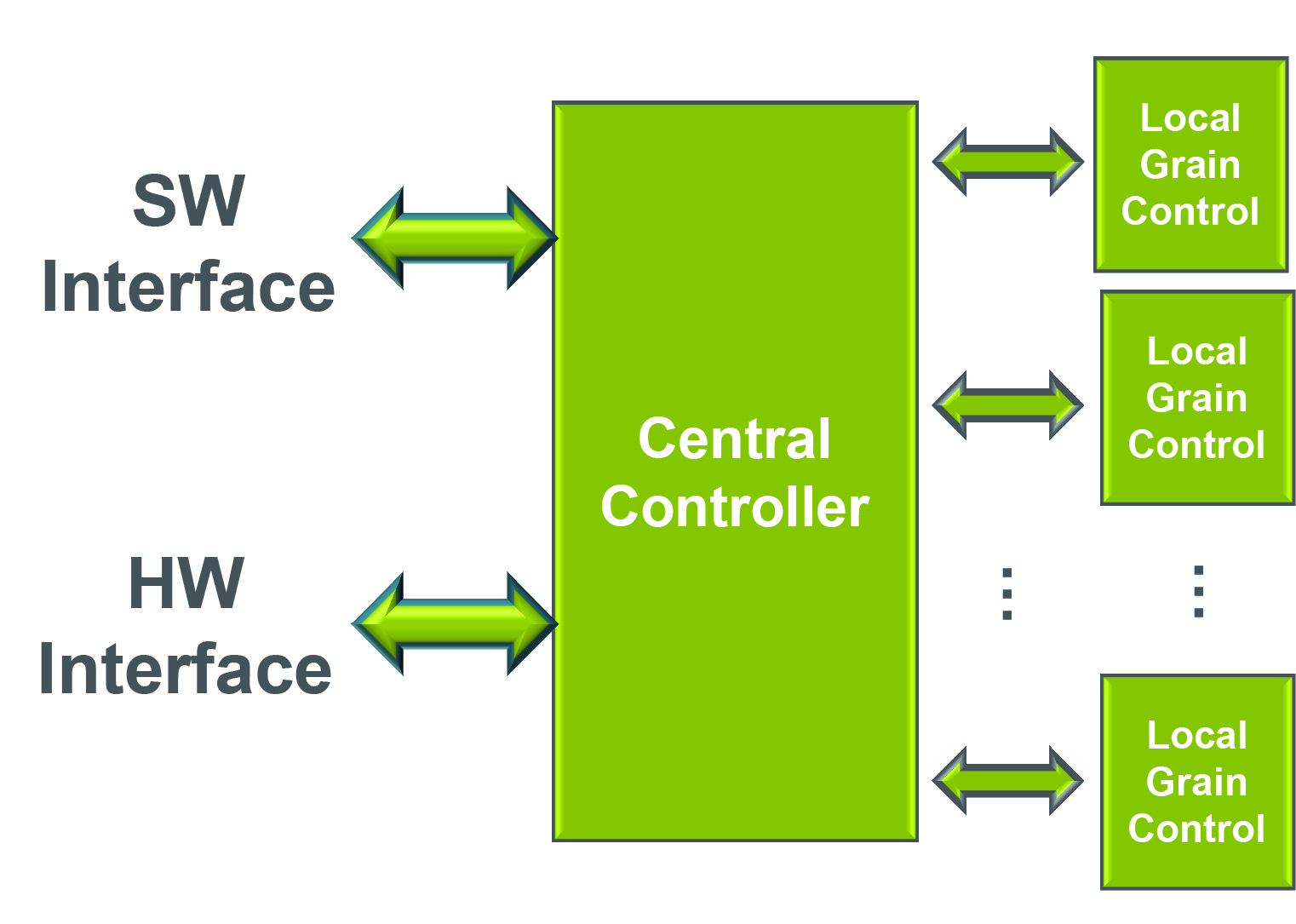

So what Sonics is planning is a partitioning of the circuit into small blocks that they call “grains,” each of which has a local controller. The grain controllers are managed by a central controller. The central controller has access to various hardware and operating-system (OS) events that it can use to direct the power states of various grains.

(Image courtesy Sonics)

There are several benefits to having this control in hardware. The most obvious is the fact that you don’t need to wake the processor to make the necessary power decisions. You can also manage smaller domains than would be practical if a CPU had to track it all. From a software standpoint, it’s an autonomous system.

Software-based power management commands also tend to be queued up. If a particular event meant that 20 blocks could be powered down, then the CPU would have to queue up 20 power-down commands that would execute in sequence. With hardware, they can all shut down in parallel. If there are concerns that certain blocks need to be powered down before others, that ordering can be handled by the power management block.
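The "parallel, but with some ordering" idea can be sketched as grouping blocks into waves: everything in a wave can shut down at once, and a block joins a later wave only if something must go down before it. The block names and constraints below are hypothetical, and the sketch uses Python's standard `graphlib` module (Python 3.9 or later) purely as a convenient way to express the ordering.

```python
from graphlib import TopologicalSorter


def power_down_waves(must_precede):
    """Group blocks into waves; blocks in one wave can shut down together.

    must_precede maps each block to the set of blocks that have to be
    powered down before it.
    """
    sorter = TopologicalSorter(must_precede)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        # Every block whose prerequisites are already down is ready now.
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


constraints = {
    "dsp": set(),
    "codec": set(),
    "dsp_sram": {"dsp"},              # memory goes down after its user
    "audio_pll": {"dsp", "codec"},    # clock source goes down last
}
waves = power_down_waves(constraints)
# waves == [["codec", "dsp"], ["audio_pll", "dsp_sram"]]
```

Four sequential commands collapse into two steps here; with 20 unconstrained blocks there would be a single wave.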

There’s yet one more reason why hardware can help: it’s much faster. With any power on/off scheme, you have to figure out whether it makes sense to power something down, since both power-down and power-up take time. Done in software, they take much longer, meaning that it simply wouldn’t make sense to worry about shorter-term low-level power-down opportunities. But done in hardware, many of these opportunities become fruitful, allowing yet more incremental savings.
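To see why transition speed matters, consider a block with idle gaps of various lengths. A gap is worth exploiting only if it is longer than the time it takes to power down and back up. The gap lengths and the two round-trip times below are assumptions chosen for illustration, not measurements, and the sketch ignores the energy cost of the transitions themselves.

```python
def usable_idle_time_us(idle_gaps_us, round_trip_us):
    """Total time a block can spend powered down across the given gaps.

    A gap counts only if it exceeds the power-down plus power-up time;
    whatever is left of the gap is spent powered down.
    """
    return sum(gap - round_trip_us
               for gap in idle_gaps_us
               if gap > round_trip_us)


idle_gaps_us = [50, 200, 800, 5_000, 20_000]   # hypothetical idle periods
software_us = 1_000   # assumed round trip when software manages power
hardware_us = 10      # assumed round trip when hardware manages power

sw = usable_idle_time_us(idle_gaps_us, software_us)   # 23000
hw = usable_idle_time_us(idle_gaps_us, hardware_us)   # 26000
```

With the slower round trip, the three shortest gaps are skipped entirely; with the faster one, all five become usable.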

The other thing to keep in mind is that there are various ways to save power. For one block, it might involve stopping the clock. For another, it might involve lowering the voltage enough to retain state but not switch at speed. Yet another may power completely off. Or it could be a combination of these and other techniques, depending on events. Done at a low level, there’s far more flexibility – probably enough to get you in trouble if you’re not careful with your power management design.
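Choosing among these techniques can be framed as picking the state that costs the least energy for an expected idle period. The states and all of the power and energy figures below are made up for illustration, and the sketch ignores wake-up latency limits that a real design would have to respect.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LowPowerState:
    name: str
    idle_power_mw: float   # draw while sitting in the state
    transition_uj: float   # energy to enter and leave the state


# Hypothetical figures; deeper states draw less but cost more to enter.
STATES = [
    LowPowerState("run", 100.0, 0.0),
    LowPowerState("clock_gated", 20.0, 1.0),
    LowPowerState("retention", 2.0, 50.0),
    LowPowerState("off", 0.0, 500.0),
]


def cheapest_state(idle_ms):
    """Return the name of the state with the lowest total energy."""
    def energy_uj(state):
        # mW * ms = microjoules
        return state.idle_power_mw * idle_ms + state.transition_uj
    return min(STATES, key=energy_uj).name


choices = [cheapest_state(t) for t in (0.01, 1, 10, 1000)]
# choices == ["run", "clock_gated", "retention", "off"]
```

The longer the expected idle period, the deeper the state that pays off, which is why the right answer depends on events rather than on a fixed policy.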

Designing this right will mean the difference between brilliance and disaster. There are two big challenges to making this work. One is in specifying the power switching rules for each block in a manner that ensures that dependencies and timing are considered. Sonics is releasing a tool that helps to manage this. It accepts various block UPF files, allows the designer to define the power rules for the entire system, keeps track of the block dependencies, and then issues a top-level UPF that translates the high-level rules into hardware power-intent rules.

The other big challenge lies in figuring out which blocks are good targets for power management and where the right level of granularity is. That requires detailed power analysis, which can build on what existing power analysis tools already do but needs the results presented differently from what’s done today. Sonics doesn’t want to write such a tool, since that technology problem has mostly been solved, so they’re working with EDA folks to try to connect this piece of the flow.

Sonics has so far announced only an architecture; product announcements are likely to follow towards the end of this year. They also noted that this capability works well with, but is completely independent of, its NoC technology. So you don’t have to be a NoC customer to do this.

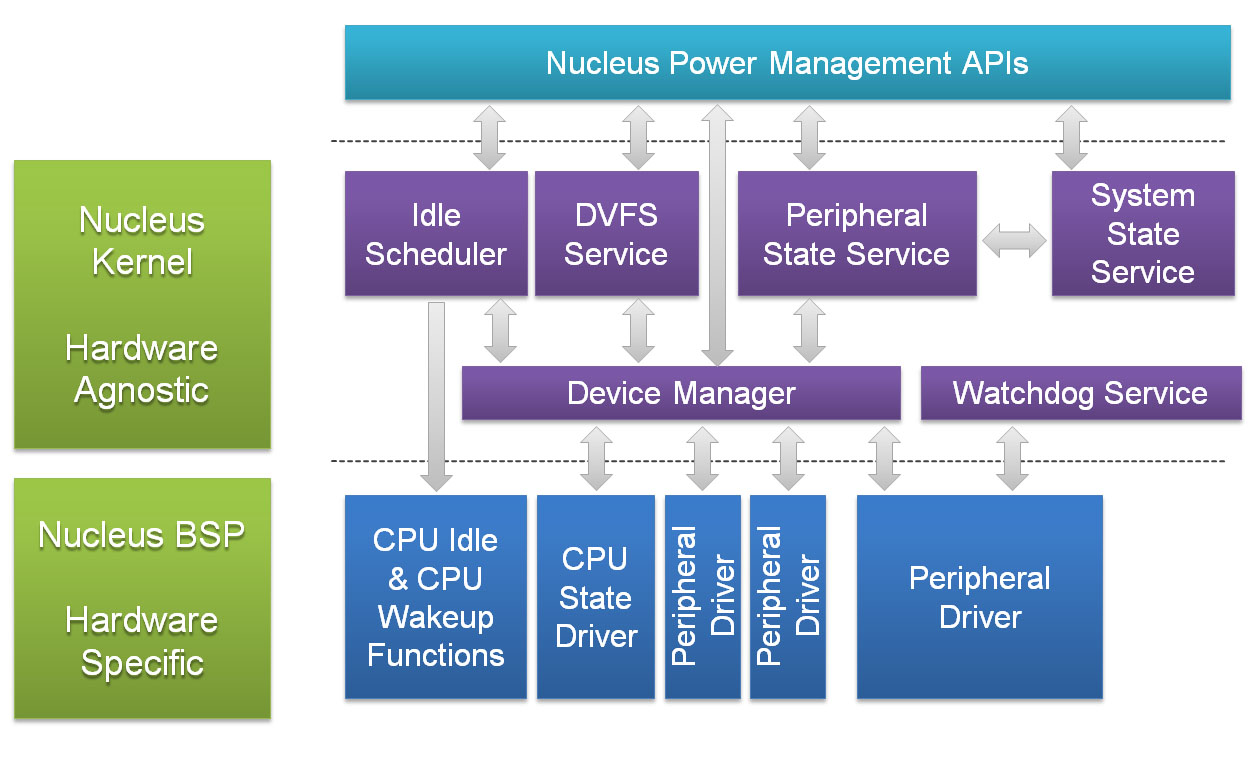

Meanwhile, on the software side of things, and announced completely independently of the Sonics news, Mentor noted that their Nucleus embedded real-time OS (RTOS), traditionally finding success in radio baseband applications, is now finding traction in wearables as well – the earliest wave of consumer IoT gadgets.

And their point is that SoCs can provide all manner of power saving options, but they can be leveraged only if the OS can connect to them. This model, of course, puts the OS at the center of power management, a role that it would share in the Sonics scenario. But the Sonics system still needs to work with the OS (Sonics provides the drivers), so if both OS and hardware can share the duties, then the designer has maximal flexibility as to where each power-saving decision should be made. Presumably, the OS focus would be on events generated by the user, while the hardware focus could be on low-level events that the user might never be aware of.

According to Mentor, Nucleus allows more granular power control than other OSes do, again tracking dependencies. Processor cores can be loaded or unloaded individually, for example. The OS would present a consistent API to application programmers, but, underneath, it would accommodate any hardware power-saving capabilities in a custom board-support package (BSP) for that system.
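The split between a consistent API on top and board-specific details underneath is a familiar layering pattern. The sketch below illustrates the pattern only; every class and method name in it is hypothetical, and none of it is the Nucleus API or a real BSP.

```python
from abc import ABC, abstractmethod


class PowerBackend(ABC):
    """Board-specific layer, standing in for a BSP (hypothetical)."""

    @abstractmethod
    def set_core_enabled(self, core, enabled):
        """Turn one processor core on or off."""


class TwoCoreBoard(PowerBackend):
    """Made-up board with two cores that can be switched individually."""

    def __init__(self):
        self.cores = {0: True, 1: True}

    def set_core_enabled(self, core, enabled):
        if core not in self.cores:
            raise ValueError(f"no such core: {core}")
        self.cores[core] = enabled


class PowerApi:
    """What application code calls; identical on every board."""

    def __init__(self, backend):
        self._backend = backend

    def unload_core(self, core):
        self._backend.set_core_enabled(core, False)

    def load_core(self, core):
        self._backend.set_core_enabled(core, True)


board = TwoCoreBoard()
power = PowerApi(board)
power.unload_core(1)   # application code never touches board details
```

Swapping in a different board means writing a new backend class; the application code that calls `PowerApi` stays the same.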

(Image courtesy Mentor Graphics)

Implemented in a way that designers and users can leverage, these techniques can have a dramatic impact on how much power systems draw. While the motivation comes from both ends of the power spectrum (power misers and power gluttons), incremental benefits could accrue to all systems.

What do you think of Sonics’ and Mentor’s proposals for increasing the granularity of power control?