We’ve talked before about the challenges of navigating indoors. It was a hard problem then; it remains a hard problem today, with numerous technological contributions coming here and there to help out. For the most part, there’s still no blockbuster new technology to render all that has come before obsolete, but what follows is a look at a couple of recently announced real-time location service (RTLS) approaches that continue to build on this pile of solutions.

Be the Spoon

We’ll start with a self-contained proprietary system. In fact, it’s so proprietary that it has its own phone, although, in reality, the phone is more of a development kit than a product. Three such kits were announced a couple of months ago.

We’re talking about BeSpoon and their SpoonPhone. No, don’t try to eat your soup with it; you’ll void the warranty (I assume…). The SpoonPhone comes equipped with matching Tags.

Figure 1. BeSpoon Tags (Image courtesy BeSpoon)

Tags are small transponders that listen for messages that the SpoonPhone sends out; upon hearing the message, they send a response. The SpoonPhone can calculate the distance to the tag based on the time of flight of the message exchange.
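
To make the time-of-flight idea concrete, here is a minimal Python sketch of two-way ranging. This is not BeSpoon’s implementation: the function name is made up for illustration, and it assumes the master knows how long the tag takes to turn a message around.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def two_way_range_m(t_round_s: float, t_reply_s: float) -> float:
    """Estimate master-to-tag distance from one call-and-response exchange.

    t_round_s: time from the master sending its message to hearing the reply.
    t_reply_s: the tag's turnaround delay (assumed known; hypothetical parameter).
    """
    # The signal travels out and back, so half of what remains after
    # removing the tag's turnaround delay is the one-way time of flight.
    time_of_flight_s = (t_round_s - t_reply_s) / 2.0
    if time_of_flight_s < 0:
        raise ValueError("reply delay exceeds round-trip time")
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight_s


# Example: a tag 3 m away that takes 100 microseconds to respond.
one_way_s = 3.0 / SPEED_OF_LIGHT_M_PER_S
distance_m = two_way_range_m(2 * one_way_s + 100e-6, 100e-6)
```

A radio signal covers about 30 cm per nanosecond, so a single nanosecond of error in the round-trip measurement shifts the computed distance by roughly 15 cm. That hints at how tight the timing has to be before anyone can talk about centimeters.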

Yup, these are essentially beacons. And the key is this call-and-response bit. The phone parts of the SpoonPhone – well, they’re just so that developers have a platform with the usual capabilities and sensors – probably more than they’ll need in their final system – to figure out how they want things to work. BeSpoon does not intend to compete with Apple or Samsung; it’s likely that a production system would use some simplified module, not the SpoonPhone itself.

So why go through all this? Because they say that they can be accurate to within a few centimeters in all three dimensions. So this isn’t the kind of system you might find in a retail store to see if someone is near a shelf; it’s more precise – intended more, for example, for industrial or logistics use. Tags can be put on equipment or other assets for measurement and tracking.

And they have an operating mode that sounds, based on its name, like a mind-bender: “inverted 3D.” In reality, you need plumb no new dimensions to make sense out of what this is. It has to do with the relationship between the master and the tags in the system.

By “master,” I’m referring to the role that the SpoonPhone plays – which, in production, might be played by something other than a SpoonPhone. I’d call it a “base station” except that, in other beaconing systems that use, say, WiFi, base stations are fixed, not mobile like the SpoonPhone, so that could be confusing.

So you’ve got this master communicating with a set of tags. A typical arrangement is for a master to be fixed in location, keeping track of assets as they move around. This is a normal “3D” arrangement, according to BeSpoon.

But you can also fix the tags in known locations; now you’re not measuring their position anymore (you know that by design); you’re measuring the location of the master as it moves around. The relationship between master and tag has been inverted, and so this is the “inverted 3D” mode.
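
The inverted arrangement amounts to classic trilateration: given ranges to tags at known positions, solve for the master. The sketch below is one textbook way to do that, not BeSpoon’s published method. It assumes exactly four tags that are not all in one plane, and `locate_master` and `det3` are hypothetical names.

```python
import math


def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def locate_master(tags, ranges):
    """Solve for the master's (x, y, z) from four fixed tags.

    tags:   four known (x, y, z) tag positions, not coplanar (assumption).
    ranges: measured distance from the master to each tag, same order.
    """
    p0, d0 = tags[0], ranges[0]
    n0 = sum(c * c for c in p0)
    a, b = [], []
    # Subtracting the first sphere equation from the other three removes
    # the squared unknowns and leaves a 3x3 linear system a * x = b.
    for p, d in zip(tags[1:4], ranges[1:4]):
        a.append([2.0 * (p[k] - p0[k]) for k in range(3)])
        b.append(sum(c * c for c in p) - n0 - d * d + d0 * d0)
    det = det3(a)
    if abs(det) < 1e-12:
        raise ValueError("tags are coplanar; position is ambiguous")
    solution = []
    for col in range(3):  # Cramer's rule, one coordinate at a time
        m = [row[:] for row in a]
        for r in range(3):
            m[r][col] = b[r]
        solution.append(det3(m) / det)
    return tuple(solution)


# Example: tags at three floor corners and one on a shelf, master at (2, 3, 1).
tags = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (0.0, 10.0, 0.0), (0.0, 0.0, 3.0)]
ranges = [math.dist(t, (2.0, 3.0, 1.0)) for t in tags]
master_xyz = locate_master(tags, ranges)
```

With noisy real-world ranges, you would likely use more than four tags and a least-squares fit rather than an exact solve; the exact version just keeps the geometry visible.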

With all the focus on systems featuring open technology, will this survive? Well, it’s somewhat academic until competing open approaches achieve this level of accuracy. Once that happens? Might be a reasonable question… (Of course, BeSpoon could always migrate to an open approach…)

Crowd Computing

From close precision we move in the opposite direction: broad positioning for applications that are more… indirect than the more obvious indoor apps such as would be used by a retailer. Not talking gross inaccuracy here, but from centimeters we’re moving to, say, 5-meter-or-better accuracy. Developers that are trying to incorporate a general sense of location into some other app may not be trained in the intricacies of indoor navigation, and so CSR believes that they would benefit from a dead-simple way to approach the problem.

So… how simple does this sound? Just use a pull-down menu (or something) in your dev kit to specify your accuracy requirements. The system then goes off and pulls in the appropriate algorithms, and – boom – you’re done. (OK, not done done, but you don’t need to futz with algorithms any more than that.)

This is how CSR’s latest SiRFusion works. Unlike the BeSpoon approach, which uses one basic technology (albeit proprietary), SiRFusion relies on CSR’s own sensor fusion algorithms, which bring together all of the normal dead reckoning and trace GPS technologies typically associated with pedestrian navigation. But there’s a twist.

It all started when they were trying to implement pedestrian navigation in Japan. Tokyo, for instance, has vast underground networks of passageways. I remember discovering them as an alternative to a guaranteed torrential soaking in the Shinjuku district many years ago – my Japanese host, who also had his office in the area, wasn’t even aware of the scale of the tunnels.

So if you’re trying to track someone’s location in Tokyo, it has to work underground. And GPS won’t be any use there. Which leaves dead reckoning. In an environment that turns out to have horrendous magnetic anomalies that make correcting gyroscope drift really difficult. So they needed something else to help out.
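
To see why uncorrected heading error matters so much, here is a bare-bones pedestrian dead reckoning sketch. It is a simplification of my own, not CSR’s algorithm: each step moves you a fixed length along the current heading, and the heading error is modeled as a constant offset rather than a slowly growing drift.

```python
import math


def dead_reckon(start_xy, steps):
    """Accumulate position from a sequence of (step_length_m, heading_rad).

    Heading is measured counterclockwise from the +x axis (a convention
    chosen for this sketch only).
    """
    x, y = start_xy
    for length_m, heading_rad in steps:
        x += length_m * math.cos(heading_rad)
        y += length_m * math.sin(heading_rad)
    return x, y


# 100 steps of 0.7 m straight along the x axis...
true_xy = dead_reckon((0.0, 0.0), [(0.7, 0.0)] * 100)
# ...versus the same walk with a constant 5-degree heading error.
biased_xy = dead_reckon((0.0, 0.0), [(0.7, math.radians(5.0))] * 100)
error_m = math.dist(true_xy, biased_xy)
```

After those 70 meters, the biased track ends up roughly 6 m from the true one, and the gap keeps growing with every step. Nothing in dead reckoning by itself pulls the estimate back, which is why an outside reference is so valuable.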

As we’ve seen, whether using proprietary signals or public ones, the generic concept of the beacon provides yet another tool for establishing location. BeSpoon is one approach, but WiFi and Bluetooth are commonly leveraged. The question is, however, how do you maintain the map of all the base stations so that it’s a reliable reference?

CSR decided to “crowdsource” that information, starting with WiFi. So, for instance, let’s say you’re running an app on your phone that’s based on the CSR technology. You enter a mall – and no one else running this system has ever been in that mall before. That means there’s no WiFi map of this mall in the cloud yet, so the only thing you have going for you is dead reckoning (plus any GPS leakage). But as you navigate the mall, your phone will be busily uploading information: every 3 or 4 seconds, it will take a fix on your position (as it knows it) based on your phone sensors. It also scans for “basic service set IDs” (BSSIDs – where an SSID names a network, a BSSID identifies an individual access point, typically by its MAC address) and sends those up.

This forms the basis of a WiFi map. Once the map is reliable enough, after enough data points have come in from enough users, algorithms can call on that data in addition to the dead reckoning data in order to get a better fix on your location. Over time, CSR can start correlating traffic patterns and intuit, for example, where the entrance is based on the fact that pretty much every user is going through that point before fanning out in different directions.
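
As a rough illustration of how such a map could be assembled, the sketch below averages the uploaded fixes for each BSSID and then uses the resulting map to estimate a position. Everything here is an assumption made for illustration: the function names, the `min_sightings` cutoff, and the plain unweighted averaging. CSR’s actual algorithms aren’t public in this article and are surely more sophisticated (weighting by signal strength, for one).

```python
from collections import defaultdict


def build_wifi_map(observations, min_sightings=3):
    """Turn crowdsourced uploads into a BSSID -> (x, y) map.

    observations:  iterable of ((x, y), [bssid, ...]) pairs, one per upload.
    min_sightings: hypothetical cutoff before an access point is trusted.
    """
    sightings = defaultdict(list)
    for (x, y), bssids in observations:
        for bssid in bssids:
            sightings[bssid].append((x, y))
    wifi_map = {}
    for bssid, fixes in sightings.items():
        if len(fixes) >= min_sightings:
            n = len(fixes)
            # Place the access point at the centroid of where it was heard.
            wifi_map[bssid] = (sum(f[0] for f in fixes) / n,
                               sum(f[1] for f in fixes) / n)
    return wifi_map


def estimate_position(wifi_map, visible_bssids):
    """Average the mapped positions of the access points currently in view."""
    known = [wifi_map[b] for b in visible_bssids if b in wifi_map]
    if not known:
        return None  # nothing mapped yet: fall back to dead reckoning alone
    n = len(known)
    return (sum(p[0] for p in known) / n, sum(p[1] for p in known) / n)


# Example: four uploads along a corridor, two access points.
uploads = [((0.0, 0.0), ["aa"]), ((2.0, 0.0), ["aa", "bb"]),
           ((4.0, 0.0), ["aa", "bb"]), ((6.0, 0.0), ["bb"])]
corridor_map = build_wifi_map(uploads)
here = estimate_position(corridor_map, ["aa", "bb", "cc"])
```

In this toy corridor, the two access points land at (2, 0) and (4, 0), and a phone that hears both is placed midway between them. The unknown “cc” is simply ignored until enough users have seen it.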

“Whoa Nellie!” you may utter in caution. “This sounds a lot like what Google got ripped to shreds for doing with its gathering of WiFi access point locations during its Street View mapping.” I asked about that, and there’s a critical difference between what CSR is doing and what Google did. Google was intercepting actual WiFi traffic and capturing snippets (even if it did nothing with the contents of the snippets other than figure out where the source was).

CSR isn’t looking at actual traffic; it’s simply looking at the broadcast list of network resources. That is, after all, what this broadcast feature is intended to do: tell other equipment about the network. There’s no actual traffic or content being read, so this shouldn’t run afoul of folks concerned about the privacy of their WiFi communications.

While most of their maps will be crowdsourced in this automated way, a big retailer, for example, could send CSR a spreadsheet of specific WiFi base station locations. It can then be incorporated into the map all at once rather than waiting for contributions from multiple passersby.

Of course, while WiFi access points don’t move often, they do move. In fact, many access points – 5-10% of them – are in phones, and they’re constantly on the move. But as part of the BSSID data, CSR can figure out which access points are phones so that they can be rejected.

Moving a stationary WiFi access point, however, may or may not show up as an anomaly for remapping – it depends on how far it has moved. Move it 20’ or so and it probably won’t show up. Move it 60’ and it would. So that gives you a sense of how sensitive this system is. I’m going to go out on a limb here and assume that statistics help, so that even a moderate move eventually would show up as a re-clustering rather than as noise.
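
One simple way to picture that sensitivity is a distance threshold on the gap between where the map says an access point is and where recent sightings put it. The sketch below is purely illustrative: the 12-meter threshold is a number I picked to sit between the 20-foot and 60-foot cases above, not a CSR figure, and `has_moved` is a hypothetical name.

```python
import math

FEET_TO_M = 0.3048


def has_moved(mapped_xy, recent_fixes, threshold_m=12.0):
    """Flag an access point whose recent sightings cluster far from its mapped spot.

    mapped_xy:    the access point's current (x, y) in the map, in meters.
    recent_fixes: list of (x, y) user fixes at which it was recently heard.
    threshold_m:  hypothetical sensitivity; moves smaller than this look like noise.
    """
    if not recent_fixes:
        return False  # no new evidence, so keep the mapped position
    n = len(recent_fixes)
    centroid = (sum(f[0] for f in recent_fixes) / n,
                sum(f[1] for f in recent_fixes) / n)
    return math.dist(mapped_xy, centroid) > threshold_m


# A 20-foot move (about 6 m) stays under the threshold...
small_move = has_moved((0.0, 0.0), [(20 * FEET_TO_M, 0.0)] * 5)
# ...while a 60-foot move (about 18 m) trips it.
large_move = has_moved((0.0, 0.0), [(60 * FEET_TO_M, 0.0)] * 5)
```

Averaging many sightings before comparing is what lets statistics do the work: individual fixes can be off by several meters, but their centroid settles down as more users walk by.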

And, before you ask, yes: CSR is also working on doing the same thing with Bluetooth, which will give them yet more accuracy – down to 2 meters or so.

And all of these electronic ruminations are done in the cloud. There’s no issue of figuring out how to execute this stuff on the phone without running the battery down; it’s offloaded to the servers in the sky.

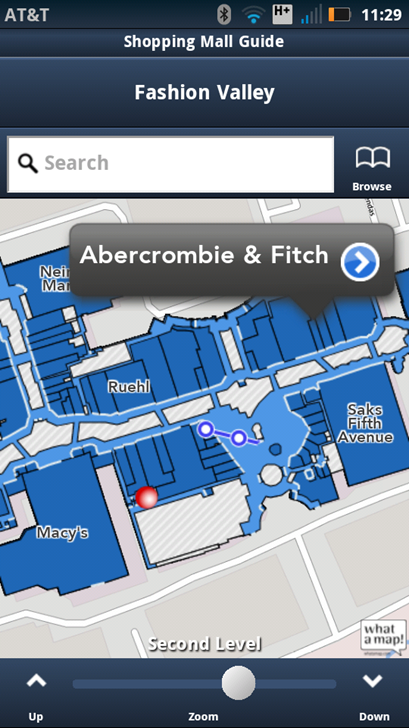

What users would experience would not be CSR technology directly, but an application from someone using CSR technology to establish location. For instance, a mall might hire out the development of a map app (“mapp?”) for the mall. That would mean integrating a physical map of the mall, but the WiFi map would be built and maintained in real time.

Figure 2. An app showing the location of a specific store. (Image courtesy CSR)

CSR just announced a development kit for Android; the kit unlocks this technology for folks developing applications on an Android phone – and, in particular, for folks that don’t want to have to develop a second career in location technology.

So here we’ve seen two completely different approaches. One from BeSpoon, which achieves tight accuracy but requires explicit involvement in developing a “master” and then mapping the tags; the other from CSR, which requires minimal conscious contribution by anyone (developer or user), intended for applications that can do with few-meter rather than few-centimeter accuracy.

OK, off you go. Don’t get lost now…

Are current RTLS technologies like these meeting your needs, or is more work required?