It might just be the end of another lurch.

Technology doesn’t evolve in a smooth, continuous fashion. Someone has an idea for something totally new and makes it happen. And someone else sees that idea and thinks, “OK, that’s pretty cool, but I have a better way to do it.” And someone else looks on, shakes her head at the pitiful, primitive attempts underway and puts forth yet another approach that does its tricks even more efficiently and elegantly.

And so, from that original brainstorm comes a flurry of innovation. Each modification benefits from hindsight, having in hand the results of those that came before. This goes on for a while until some asymptote is approached and the activity level mellows out. And then, sometime hence, yet another new brainstorm occurs, and the process repeats.

So rather than proceeding in a constant fashion from point A to point B, technology evolves in a series of lurches, each followed by variations on the lurch, until at some point people decide that enough variations have been explored, that no more value is being added, and that it’s time to move on.

The sensor hub has been the focus of this sort of attention of late. It seems like only a couple of years ago that this was a novel idea. By offloading sensor processing, much of which must happen continuously, the application processor (AP) in a phone can be put to sleep, saving critical battery power, to be woken only if something significant has been picked up by the sensor subsystem.

There have been numerous implementations of sensor hubs in hardware and software. It’s certainly not clear that all the space has been explored. But then again, this is a business. If the explored space provides good-enough solutions, then why explore more? Going with a known approach can save a lot of time as compared to having to invent something totally new.

So Sensor Platforms, a purveyor of sensor fusion solutions, in collaboration with ARM, has declared that this is the time to formalize an architecture for AP/sensor hub interaction. They’ve rolled out an Open Sensor Platform specification, to be followed up by supporting open-source code, to establish an interface between the AP and the sensor hub along with a supporting framework that newcomers can simply use rather than having to rethink the entire problem.

The goal is to make it easier to incorporate existing and new sensors in mobile platforms by leveraging a pre-established structure and pre-existing code. One can then focus on value added rather than re-inventing the sensor hub yet again.

A brief summary of OSP

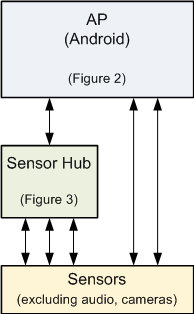

OSP sets up an API, assuming a specific arrangement between the AP and the sensor hub. The high-level configuration is shown in Figure 1. Given the value of the sensor hub, you might think that it would manage all sensors, but OSP provides for the situation where some connect directly to the AP.

Figure 1. High-level AP/sensor-hub configuration

Note that OSP isn’t currently intended to address the data-heavy audio and video spaces. Its backers see the possibility of taking on audio in the future; there are no present plans to include cameras.

The following two figures illustrate the framework assumptions and components for the AP and sensor hubs shown above.

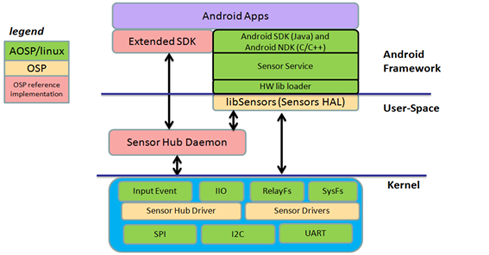

Figure 2. OSP framework – AP side (Courtesy Sensor Platforms)

Figure 2 shows the Android-side framework. Yes, there’s a decided Android tilt to this whole thing. There’s nothing about it that fundamentally ties the concept to Android, but that’s clearly where the initial attention is.

What OSP (not to be confused with the “AOSP” in the drawing, which is the “Android Open Source Project,” aka “Android”) specifies are the yellow bits above: the elements necessary for bringing in sensor data, either from a hub or directly from sensors, and pushing it up to make it available to applications per the requirements of the Android hardware abstraction layer (HAL).

It also includes reference implementations of other supporting elements, shown in pink. The main practical difference between the yellow and pink parts is that, if you extend the yellow portions, you need to check that code back in per the license. That’s not required for the pink portions.

So the critical bits are the drivers (either for the hub or for any directly connected sensors) and the libSensors library, which adapts all of this to the HAL. The sensor hub daemon is the “interceptor” of data as it comes in. It manages the registration of sensors and receives notifications and interrupts from the sensors or sensor hub, passing them on to the HAL. The Extended SDK adds OSP features to the overall Android SDK.

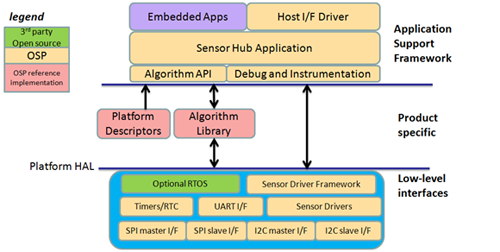

Figure 3 shows the sensor hub side of the relationship. Here the OSP specification covers numerous blocks, starting at the lowest level with the two bus interfaces most common with sensors: SPI and I2C. Above that are a basic clock (important for things like time-stamping data), a UART interface (serial communication with the AP), and drivers for the attached sensors, which feed a framework for the drivers.

Figure 3. OSP framework – sensor hub side (Courtesy Sensor Platforms)

At the top level, alongside any other applications running on that same processor, you find the general sensor hub application itself, along with a driver for talking to the host (at the application level, not at the low-level bus level) and APIs for various sensor fusion algorithms. Here also reside facilities for instrumentation and debug.

In the middle, there are reference implementations of sensor fusion algorithms as well as “platform descriptors,” which describe the system overall – how many and which sensors, for example – and adapt it for Windows or Android (or whatever).
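To make the idea of a platform descriptor a bit more concrete, here is a minimal C sketch of the kind of information one might hold: which sensors are attached, on which bus, and which host OS the hub is adapting to. The type names, fields, example bus addresses, and the `count_on_bus()` helper are all hypothetical; OSP defines its own descriptor formats, which this does not attempt to reproduce.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* HYPOTHETICAL illustration: none of these types or fields come from the
 * OSP specification. They only model "which sensors, how many, on which
 * bus, and for which host OS". */
typedef enum { HOST_ANDROID, HOST_WINDOWS } HostOs;

typedef struct {
    const char *name;     /* e.g. "accel" */
    const char *bus;      /* "I2C" or "SPI" */
    unsigned    address;  /* I2C address or SPI chip-select index */
} SensorEntry;

typedef struct {
    HostOs             host;
    size_t             sensor_count;
    const SensorEntry *sensors;
} PlatformDescriptor;

/* Example board (made-up values): three sensors behind the hub, Android host. */
static const SensorEntry kBoardSensors[] = {
    { "accel", "I2C", 0x19 },
    { "gyro",  "SPI", 0 },
    { "mag",   "I2C", 0x1E },
};

static const PlatformDescriptor kBoard = {
    HOST_ANDROID,
    sizeof kBoardSensors / sizeof kBoardSensors[0],
    kBoardSensors,
};

/* Count how many sensors on a platform sit on a given bus. */
static size_t count_on_bus(const PlatformDescriptor *p, const char *bus) {
    assert(p != NULL && bus != NULL);
    size_t n = 0;
    for (size_t i = 0; i < p->sensor_count; ++i)
        if (strcmp(p->sensors[i].bus, bus) == 0)
            ++n;
    return n;
}
```

Keeping the board description in a constant table like this means the same framework code can be reused across boards by swapping the table, which is the general appeal of a descriptor-driven design.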

These high-level pieces were announced a few weeks ago; much more recently, a new document showed up on the website that contains more specifics on the APIs and formats. It’s important to note that OSP doesn’t specify the details of sensors or their events; it’s a framework that’s extensible, so it will accommodate new hardware and new functions.

The key player in this is the daemon, which handles the registering of sensors and their events through the establishment of callbacks. It’s this functionality that OSP specifies, not the specific events being registered.
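The distinction between specifying the registration mechanism and specifying the events themselves can be illustrated with a small callback registry in C. The registry treats sensor and event identifiers as opaque numbers, so new hardware or new event types need no change to the framework code. None of these names (`Registry`, `registry_add()`, `registry_dispatch()`, `count_deliveries()`) come from OSP; this is a hypothetical sketch of the general pattern.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* HYPOTHETICAL sketch, not the OSP API: the registry knows nothing about
 * what an event means; it only maps (sensor id, event id) to callbacks. */
typedef void (*EventCallback)(uint32_t sensor_id, uint32_t event_id,
                              const void *payload, void *user);

typedef struct {
    uint32_t      sensor_id;
    uint32_t      event_id;
    EventCallback cb;
    void         *user;   /* caller-supplied context passed back to cb */
} Registration;

#define MAX_REGISTRATIONS 16

typedef struct {
    Registration entries[MAX_REGISTRATIONS];
    size_t       count;
} Registry;

/* Add a callback for one (sensor, event) pair. Returns 0, or -1 if full. */
static int registry_add(Registry *r, uint32_t sensor_id, uint32_t event_id,
                        EventCallback cb, void *user) {
    assert(r != NULL && cb != NULL);
    if (r->count == MAX_REGISTRATIONS)
        return -1;
    r->entries[r->count++] = (Registration){ sensor_id, event_id, cb, user };
    return 0;
}

/* Deliver an event to every matching callback; returns how many fired. */
static size_t registry_dispatch(const Registry *r, uint32_t sensor_id,
                                uint32_t event_id, const void *payload) {
    size_t fired = 0;
    for (size_t i = 0; i < r->count; ++i) {
        const Registration *e = &r->entries[i];
        if (e->sensor_id == sensor_id && e->event_id == event_id) {
            e->cb(sensor_id, event_id, payload, e->user);
            ++fired;
        }
    }
    return fired;
}

/* Example callback: increments the int passed as user context. */
static void count_deliveries(uint32_t sensor_id, uint32_t event_id,
                             const void *payload, void *user) {
    (void)sensor_id; (void)event_id; (void)payload;
    ++*(int *)user;
}
```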

Operation therefore falls into two phases: boot-up, when the system is configured, and normal operation, when sensor data is being managed (and individual sensors may even be disabled). It is assumed that calibration data is available, either directly in non-volatile memory or delivered by the host. Because calibration is repeated during normal operation, this store of calibration data not only provides the initial calibration values but is also updated on an ongoing basis.
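A minimal sketch of that calibration store’s lifecycle follows, assuming a simple per-axis offset record. At boot it prefers a valid record from non-volatile memory, falls back to one supplied by the host, and otherwise starts from zero; during operation, new estimates are blended in. The record layout, the fallback order, and the moving-average update rule are illustrative assumptions, not anything OSP specifies.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* HYPOTHETICAL calibration record for a 3-axis sensor. */
typedef struct {
    bool  valid;
    float offset[3];   /* per-axis bias */
} CalRecord;

/* Boot-time selection (assumed order): non-volatile copy first, then the
 * host-delivered copy, else zero offsets. */
static CalRecord cal_load(const CalRecord *nvm, const CalRecord *from_host) {
    if (nvm != NULL && nvm->valid)
        return *nvm;
    if (from_host != NULL && from_host->valid)
        return *from_host;
    CalRecord zero = { true, { 0.0f, 0.0f, 0.0f } };
    return zero;
}

/* Runtime refinement: move each stored offset toward a new estimate by a
 * fraction alpha in [0, 1] (an exponential moving average, chosen only as
 * an example rule). The caller would then persist the updated record. */
static void cal_update(CalRecord *cal, const float estimate[3], float alpha) {
    assert(cal != NULL && alpha >= 0.0f && alpha <= 1.0f);
    for (int i = 0; i < 3; ++i)
        cal->offset[i] += alpha * (estimate[i] - cal->offset[i]);
}
```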

At boot-up, that calibration data is accessed, followed by a call to OSP_Initialize(). Then, for each sensor, a descriptor SensorDescriptor_t is created, and the sensor is registered using OSP_RegisterInputSensor(). This establishes the appropriate callbacks for use when the sensor hub wants to nudge the AP.
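The boot-up order can be outlined in C as follows. The comments map each step to the OSP call named above, but the functions are deliberately named as stubs (`initialize_stub()`, `register_input_sensor_stub()`) and the descriptor type (`SensorDescriptorSketch`) is invented, because the actual OSP signatures aren’t given here. Treat it only as an illustration of the sequence.

```c
#include <assert.h>
#include <stddef.h>

/* HYPOTHETICAL stand-ins: types, fields, signatures and return codes are
 * invented for illustration and are not the published OSP API. */
typedef struct {
    const char *name;                          /* e.g. "accel" */
    void (*on_event)(const char *sensor_name); /* callback used to nudge the AP */
} SensorDescriptorSketch;                      /* plays the role of SensorDescriptor_t */

#define MAX_SENSORS 8
static const SensorDescriptorSketch *g_registered[MAX_SENSORS];
static size_t g_num_registered;
static int g_initialized;

/* Plays the role of OSP_Initialize(). */
static int initialize_stub(void) {
    g_num_registered = 0;
    g_initialized = 1;
    return 0;
}

/* Plays the role of OSP_RegisterInputSensor(): remembers the descriptor
 * so its callback can be invoked later. */
static int register_input_sensor_stub(const SensorDescriptorSketch *desc) {
    assert(desc != NULL);
    if (!g_initialized || g_num_registered == MAX_SENSORS)
        return -1;
    g_registered[g_num_registered++] = desc;
    return 0;
}

/* Placeholder callback; a real hub might signal the AP here. */
static void notify_ap(const char *sensor_name) { (void)sensor_name; }

static const SensorDescriptorSketch kAccel = { "accel", notify_ap };
static const SensorDescriptorSketch kGyro  = { "gyro",  notify_ap };

/* Boot order from the text: calibration data assumed already loaded, then
 * initialize, then register one descriptor per sensor.
 * Returns the number of sensors registered, or -1 on failure. */
static int hub_boot(void) {
    const SensorDescriptorSketch *sensors[] = { &kAccel, &kGyro };
    if (initialize_stub() != 0)
        return -1;
    for (size_t i = 0; i < sizeof sensors / sizeof sensors[0]; ++i)
        if (register_input_sensor_stub(sensors[i]) != 0)
            return -1;
    return (int)g_num_registered;
}
```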

Once running, you’ve got these sensors sampling their world along with two accompanying processes: fusion and calibration. Fusion is modeled as a “foreground” operation; calibration as a “background” operation. Foreground operations are intended to run at least twice as fast as you expect to be sending out data; background operations at least as fast as your data output frequency. Specifically:

- Sensor data is communicated to the AP via OSP_SetData();

- Fusion algorithms are invoked using OSP_DoForegroundProcessing();

- Calibration is invoked using OSP_DoBackgroundProcessing().
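The rate guideline works out simply: for an output data rate of f, fusion should run at 2f or faster and calibration at f or faster. The C sketch below assumes a single timer ticking at 2f, runs fusion on every tick, and runs calibration and output on every other tick, which meets both minimums exactly. The counters stand in for calls to OSP_DoForegroundProcessing(), OSP_DoBackgroundProcessing(), and OSP_SetData(), whose arguments aren’t described here; the single-timer structure is an assumption, not an OSP requirement.

```c
#include <assert.h>

/* Minimum rates implied by the guideline:
 *   foreground (fusion)      >= 2 x output data rate
 *   background (calibration) >= 1 x output data rate */
static unsigned min_foreground_hz(unsigned output_hz) { return 2u * output_hz; }
static unsigned min_background_hz(unsigned output_hz) { return output_hz; }

/* Counters standing in for the three OSP calls named in the text. */
typedef struct { unsigned fg, bg, out; } Calls;

/* Simulate one second with a (hypothetical) timer ticking at the minimum
 * foreground rate: fusion every tick, calibration and output every
 * second tick. */
static Calls simulate_one_second(unsigned output_hz) {
    assert(output_hz > 0);
    Calls c = { 0, 0, 0 };
    unsigned ticks = min_foreground_hz(output_hz);
    for (unsigned t = 0; t < ticks; ++t) {
        ++c.fg;             /* foreground: fusion */
        if (t % 2 == 1) {
            ++c.bg;         /* background: calibration */
            ++c.out;        /* send data to the host */
        }
    }
    return c;
}
```

With a 50 Hz output rate, one simulated second gives 100 fusion runs and 50 each of calibration and output. A real hub would more likely be driven by sensor interrupts or an RTOS scheduler; the fixed ratio is just the easiest way to show the 2:1:1 relationship.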

In addition, the host can control the hub, enabling, disabling, or changing the timing of sensor updates.

- OSP_SubscribeOutputSensor() is used with a SensorDescriptor_t structure to respond when the host wants to enable a sensor;

- OSP_UnsubscribeOutputSensor() is used when the host disables a sensor; and

- When the host wants to change the data rate it sees, the sensor is first disabled using OSP_UnsubscribeOutputSensor(), its OutputDataRate is changed, and it is then re-enabled with OSP_SubscribeOutputSensor().

What do the other guys think?

This isn’t necessarily the culmination event for this technology. It is one implementation that assumes a software approach. It’s not intended to declare other approaches invalid, and I don’t think it’s really being put forth as a candidate standard. Instead, it’s an option for folks who would rather take a shortcut to a working system than expend effort on what would feel to them like over-optimization.

But an obvious question is, what do Sensor Platforms’ colleagues/competitors think about this? The 3rd-party sensor fusion world is dominated by Hillcrest Labs, Movea, and Sensor Platforms. One of these guys – Sensor Platforms – has placed a bet on the table. How are the others responding?

Let’s start by simply providing their verbatim responses.

From Hillcrest Labs:

Having a common open-source framework for the sensor hub potentially allows for quicker time to market, higher reliability and easier systems integration. Those are all good things. The potential for rapid evaluation of different solutions could be appealing to certain microcontroller vendors and OEMs, acting as a framework to test (rather like Google’s use of CTS to evaluate algorithms). The easing of certain integration efforts might be particularly appealing to some of the ‘other’ hub location vendors (audio etc) who might be able to use the OSP to have more control over the porting process to their new chipsets. Conceptually from an OEM standpoint you could even see this allowing evaluation and integration of separate components from individual vendors (e.g. PDR from Company X, Context Detection from Company Y), which might be of value.

However, these advantages come at a price. The advantages are only realized if there is very little customization of the code for new platforms. However, there are 3 things that work against the sensor hub framework code being adopted wholesale:

Power management optimization: Power is perhaps the most important feature of a sensor hub. In fact, power savings is the primary motivation for its use. However, each chip’s hardware and power characteristics are different. Sometimes even the instruction set capabilities are different. To optimize the sensor hub power for a given chip, the sensor hub architecture and code often needs refactoring. That refactoring then leads to different architecture and code for individual chips. The alternative is higher than necessary power consumption and a potential competitive disadvantage for the open source code user over the version that was customized. It seems like it will be very difficult for an architecture to be flexible enough to meet the needs and approaches of a wide variety of users while still being optimized enough to meet the performance goals and system constraints of individual products.

Feature and API changes: The features and even API of the sensor hub are rapidly changing. This is driven both by core systems suppliers like Google, by individual phone OEMs, and by sensor hub vendors themselves. With each change, the architecture is potentially impacted and the code needs to be updated. One example is the batching feature that was introduced with the Android KitKat release. But none of the individual potential contributors of that sensor hub code and architecture update will want to share it until at least they release it themselves (for basic competitive reasons). Furthermore, it is unlikely that each of those contributors will adjust the architecture in the same way. The result is that when they eventually come together to harmonize those contributions, the process will not come easy. Then, after that, will all those contributors want to build on the harmonized code? Or will they prefer to build on the version that they had done themselves? So it seems inevitable that fragmentation would happen as each company adds their own secret sauce. Our prediction is that the stronger players will prefer to keep with their own version. That choice in turn will lead to even greater divergence from the open source version over time. Eventually, the divergence will be great enough that the stronger players stop contributing and simply monitor.

Device integration: The overall trend towards device integration yields a number of very different potential sensor hub providers out there. For example, there are micro-sensor hubs in sensor packages, early signs of audio and motion sensing hubs and other ideas as well. Each version has its own set of pros and cons but, importantly to this discussion, they also have different overall software architectural needs. While the generic sensor hub architecture will overlap with each of those versions, will the overlap be large enough to justify its adoption by the companies involved? Each company will have to make that decision for themselves but it’s far from a slam dunk that using the generic software architecture will be helpful in these integrated hub use cases.

In general, the reactions to the OSP offering will depend on where the players sit in the ecosystem. For the weaker and newer software players in the field, using this architecture might make sense. It allows them to focus their resources on developing features outside of the common functions (e.g. focus in on context, PDR etc). The stronger, experienced players will probably continue with their own architectures in order to maintain that differentiation. Mobile OEMs will still look for power, performance and cost and won’t care if the OSP is used or not. Larger chip vendors will want software on their devices that optimizes power more than they will want to see OSP used. Smaller chip vendors may well use OSP as a quick means to at least offer a basic solution to the market.

This is all a game of predictions but if, in the end, only the weaker players contribute to the OSP coding effort, the value of it will diminish over time. For OSP to succeed, the stronger players will have to see active support of its evolution to be in their self-interest. At the moment, I [CTO Chuck Gritton] personally don’t see that happening.

And from Movea:

By providing an open source framework for motion library to ARM, it appears that Sensor Platforms and ARM offer a normalization of the interface, much like a reference design to the industry. We believe this announcement is positive in the sense that all motion processing providers will benefit from standardization, leaving them to focus on the motion processing itself.

As we understand, the framework does not include the Sensor Platforms motion library so any motion library provider will be able to provide their motion library, including Movea. Once the SDK and API are released, our team will quickly develop a wrapper, so that any ARM customer will be able to get Movea’s sensor hub libraries easily.

We have been working with ARM, and still are, on the development of a back end interface with sensors and this announcement does not change our relationship with them.

Finally, this announcement does not affect our ability to sell our solutions to anyone, including ARM, whether with or without the framework we have developed.

My summary: “Real men roll their own” (Hillcrest) and “We can work with this” (Movea).

Sensor Platforms, meanwhile, has said they’re “dedicated to managing this project,” including code reviews and regular releases, in a transparent manner.

We will update as relevant.

What’s your take on the new Open Sensor Platform open-source project?