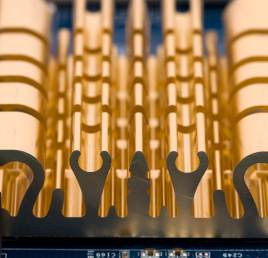

It’s not often you see memory chips with heat sinks. But these are no ordinary memory chips.

No, indeed. These are “bandwidth engines,” a new class of chips from MoSys. Don’t know MoSys? Then you’re probably not an SoC designer. The company has been around for more than 20 years, but it made its name in the IP-licensing business, not the chip business. MoSys created the single-transistor (1T) SRAM cell, which you could license for inclusion in your own chip. 1T SRAMs are a lot smaller than traditional six-transistor SRAM cells, so the MoSys technology was good for packing a lot of memory into a little space.

The bloom has come off the IP-licensing rose, however, so, a few years ago, MoSys started making a corporate about-face, converting itself into a chip company. And Exhibit A in that transformation is the bandwidth engine.

A bandwidth engine (BE for short) is basically a smart SRAM chip. That is, it’s a big memory with some onboard intelligence to make the memory go faster. I know: the term “smart memory” seems like an oxymoron. Or at the very least, an engineering solution in search of a problem. It’s also somewhat tainted. The path to smart memory chips is littered with the bones of failed projects. You’re right to be cautious.

But MoSys seems to have this figured out. For starters, the new BE is designed to solve a specific set of problems often encountered by network line cards and other Interweb appliances. The basic purpose of network plumbing is to get data packets from Point A to Point B as expeditiously as possible, while also massaging that data as intelligently as possible. Thus, line cards tend to be populated with high-end packet processors and/or FPGAs connected to a whole lot of memory. They shuttle data packets in and out of that memory as quickly as they can before moving on to the next packet. There’s a lot of data traffic and a lot of transfer back and forth between the memory and the packet processors.

In that kind of environment, a smart memory chip can help, and this is where MoSys stepped in. Each BE chip is basically a big (576 Mbit) SRAM with a limited RISC processor at the front end. The processor is there to intercept read/write requests from the packet processor and to look for ways to simplify, speed up, combine, or even avoid memory accesses. Any memory access saved is time saved, so the processor pays for itself in terms of faster access speed and lower bus usage.

You might think there are scant opportunities for a mere memory chip to second-guess a big million-gate packet processor, and you’d be right. We’re used to our processors being smart and our memories being dumb. All the thinking goes on in the processor; the memory is just there to do what it’s told. The pitfall that other smart memory designers fell into was in making their memories too smart. You had to program around them, or at least program with an awareness of them. The smart memories became, in a sense, another program thread that was far from transparent to software.

MoSys doesn’t do that. Its bandwidth engine is smart, yes, but also obedient. You treat it just like any other memory, but one that’s frequently faster than it ought to be. A good example is a read-modify-write operation, which network line cards spend a lot of time doing. In a typical processor/memory pair, the processor would read a word out of memory into a register (one bus transaction), modify it in some way (add, subtract, exclusive-OR, etc.), and write the result back to the same address (a second bus transaction). The bandwidth engine recognizes this type of transaction and performs the entire read-modify-write operation internally, saving time and reducing bus activity. The processor doesn’t know the difference, but the memory is much less battered. Do enough of these RMW cycles back-to-back and you start saving real energy. The chip can perform most simple logic and arithmetic operations on its own.
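To put rough numbers on that, here is a minimal Python sketch that counts bus transactions for the two approaches. It is a toy model, not MoSys’s design: the class and function names (`Bus`, `PlainMemory`, `SmartMemory`, `rmw_add`) are made up for illustration, and every command is assumed to cost exactly one bus transaction.

```python
class Bus:
    """Counts transactions crossing the processor/memory link."""
    def __init__(self):
        self.transactions = 0


class PlainMemory:
    """Conventional memory: every read and every write crosses the bus."""
    def __init__(self, bus):
        self.bus = bus
        self.cells = {}

    def read(self, addr):
        self.bus.transactions += 1
        return self.cells.get(addr, 0)

    def write(self, addr, value):
        self.bus.transactions += 1
        self.cells[addr] = value


class SmartMemory(PlainMemory):
    """Hypothetical memory that can do the add by itself."""
    def rmw_add(self, addr, operand):
        # Assumption: one command crosses the bus; the read, the add,
        # and the write-back all happen inside the memory chip.
        self.bus.transactions += 1
        self.cells[addr] = self.cells.get(addr, 0) + operand


def conventional_updates(mem, addr, count):
    """Bump a counter the usual way: read, modify in the CPU, write."""
    for _ in range(count):
        value = mem.read(addr)
        mem.write(addr, value + 1)


def in_memory_updates(mem, addr, count):
    """Bump the same counter with one command per update."""
    for _ in range(count):
        mem.rmw_add(addr, 1)
```

Run both loops for the same number of updates and the counter ends up with the same value either way, but the conventional path uses two bus transactions per update where the in-memory path uses one.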

The third component of the bandwidth engine is its bus interface. MoSys designed a special bus protocol specifically for bandwidth engines that has very little overhead compared to, say, Serial RapidIO. The interface is designed for short transfers of small packets: exactly the kind of traffic packet processors typically do. It’s not efficient for long table walks or other computer-esque transactions, but that’s not what it’s intended for. The low pin count and small packet overhead mean fewer, shorter PCB traces on the line card and a bit less EMI.

The downside? Bandwidth engines are not a drop-in replacement for normal memories. You’ve got to design them in from the outset. The new bus interface, of course, requires a memory controller (or a packet engine with its own memory controller) that speaks the new interface language. Right now there are exactly zero such chips, but Altera and Xilinx both support it in their high-end FPGAs. MoSys will happily license the interface IP to you for inclusion in your own chips, too.

Previous stabs at creating smart memory chips were usually too ambitious, and they generally failed the “transparency” test. Nobody wanted to design a computer system around its memory; that just seemed backward. But MoSys has taken on a well-bounded and tractable problem, yet one with a big enough market to be commercially successful. There are a lot of designers out there creating black boxes that speed the ’Net along, and at least a few of them are likely to give the bandwidth engine a shot.

Very interesting. But… feeling a bit dense here… with your RMW example, that means that you not only access with the address of what you want, but you also pass the operation to it so that it can do it internally? Actually, I guess you would do a single request for both operands and include the operation? Which is very different from a standard memory access. How does that happen? Does the compiler have to know about it? Is there something magic in the memory controller?

Right. To use the RMW example, the processor’s bus interface basically fires off a command that says, “add 5 to whatever you find at address 0x0123.” The BE takes it from there. The read from location 0x0123, the add, and the resulting writeback all happen inside the BE chip, not inside the processor. Obviously, this requires a very different kind of memory controller on both the processor chip and the memory chip. It’s not just the usual read/write/refresh transactions we’re used to.

Currently, the BE can do addition, subtraction, and logical operations all by itself.

It requires a lot more intelligence from the bus interface (on both sides) than “normal” processors or memories do. But high-end microprocessors (Core i7, Athlon, SPARC, et al.) have had similar features for about ten years. A big Intel chip, for example, will queue up bus transactions internally before sending them out to the memory bus. If it sees two (or more) requests destined for nearby addresses it will combine them into one larger transaction, even though the CPU never asked to do that. Similarly, if the bus interface sees a write to a certain address, followed by some other unrelated read/write cycles to other addresses, followed by a read back from the first address, it will skip that read and just return the data it was asked to write earlier. (This only works if you assume there is no second bus master sharing the memory that could change the data in the meantime.) These interfaces will also “hoist” read cycles in front of write cycles in the transaction queue, on the theory that the software stalls waiting for the results of a read, whereas writes are not performance-critical. Bus interfaces like this do all sorts of tricks to reorder, or even eliminate, memory transactions, and it’s all invisible to software.
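Two of those tricks, returning queued write data for a later read and letting reads go ahead of pending writes, fit in a few lines of Python. This is only a sketch under simplifying assumptions: the `WriteBuffer` class and its method names are hypothetical, there is a single bus master, and merging requests to nearby addresses into one larger transaction is left out.

```python
class WriteBuffer:
    """Toy bus interface that queues writes and forwards them to reads."""

    def __init__(self, memory):
        self.memory = memory    # dict standing in for the external memory
        self.pending = {}       # address -> newest value not yet written out
        self.bus_reads = 0
        self.bus_writes = 0

    def write(self, addr, value):
        # Queue the write; a later write to the same address simply
        # replaces the earlier queued value.
        self.pending[addr] = value

    def read(self, addr):
        if addr in self.pending:
            # Forwarding: the data is still sitting in the queue, so
            # return it without touching the bus.
            return self.pending[addr]
        # Hoisting: this read goes out ahead of any queued writes.
        self.bus_reads += 1
        return self.memory.get(addr, 0)

    def drain(self):
        # Push every queued write out to memory, one transaction each.
        for addr, value in self.pending.items():
            self.memory[addr] = value
            self.bus_writes += 1
        self.pending.clear()
```

Write 7 to address 0x10, read some other address, then read 0x10 back: the last read returns 7 and only one read ever reaches the bus. Software sees the same values it would have seen anyway, which is the whole point.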

But what’s different here is not just the rearrangement of accesses that, to the memory controller, may be completely unrelated to each other. This requires two more things:

– The notion that two operands aren’t just accesses that happen to be near each other (they may, in fact, not be near each other) but are part of the same operation – unless this only works by having one of the operands come from a register and get sent with the access

– The inclusion of an operation.

This basically mandates an entirely new bus protocol. Right now, you just have address and data for one access. Now you need address and data + operation + other operand (or other operand address). It’s almost like you need new machine code instructions to do that, since the CPU won’t have any way to communicate that directly to the memory. I still can’t figure out how this happens at a low level without some CPU and compiler magic. Perhaps the memory manager is itself memory mapped so you can do a “write” to it with the necessary data (other operand, operation) and then the store location. But the compiler would still need to create all of those writes instead of what would normally be a fetch, fetch, operate, store sequence.

And I can’t even start to think about how cache would interact with this. Ouch!

A “normal” memory interface has an address, a direction (read vs. write), and the data. The interface on the bandwidth engine is very different. It includes an address, an operation (such as add or exclusive-OR), and potentially an operand. That allows the BE chip itself to perform simple arithmetic and logical operations on its contents, which is much quicker than the traditional RMW method.
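As a rough illustration of that three-part command, here is a Python sketch that packs an operation, an address, and an operand into one packet and then executes it on the memory side. The byte layout, the opcode numbers, and the 64-bit word size are all assumptions invented for this example; none of them come from the actual MoSys protocol.

```python
import struct
from enum import IntEnum


class Op(IntEnum):
    """Hypothetical opcodes; the numbering is arbitrary."""
    READ = 0
    WRITE = 1
    ADD = 2
    SUB = 3
    XOR = 4


# Assumed layout: 1-byte opcode, 4-byte address, 8-byte operand, big-endian.
COMMAND = struct.Struct(">BIQ")
WORD_MASK = 0xFFFFFFFFFFFFFFFF  # assumed 64-bit memory word


def encode(op, addr, operand=0):
    """Host side: build the single packet that crosses the bus."""
    return COMMAND.pack(op, addr, operand)


def execute(cells, packet):
    """Memory side: decode the packet and do the work internally."""
    opcode, addr, operand = COMMAND.unpack(packet)
    op = Op(opcode)
    old = cells.get(addr, 0)
    if op is Op.READ:
        return old
    if op is Op.WRITE:
        new = operand
    elif op is Op.ADD:
        new = (old + operand) & WORD_MASK
    elif op is Op.SUB:
        new = (old - operand) & WORD_MASK
    else:  # Op.XOR
        new = old ^ operand
    cells[addr] = new
    return new
```

With this layout, “add 5 to whatever you find at address 0x0123” becomes `encode(Op.ADD, 0x0123, 5)`, a single 13-byte packet. The host never sees the old value unless it asks for it with a separate read.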

The bus controller on the host side can identify these operations opportunistically without explicit software commands from the CPU. It’s true that new low-level CPU instructions and/or compiler tricks could make this work even better, but then it wouldn’t be invisible. The BE can’t exploit every opportunity to collapse memory ops, but it can do enough to be worthwhile. The only cost is the new host-side bus controller itself.