[Editor’s note: this is the second in a series of articles on revitalizing the chip environment in Silicon Valley. You can find the first article here.]

One of the central tenets of the Lean Chip Startup (LCS) model is frequently executed rapid hypothesis testing to ensure that a minimum viable product is developed – a product that has all the necessary features and capabilities (and nothing superfluous) to meet the requirements of 80% of the mainstream customer base. Since chips are hardware, and it takes time to develop and manufacture hardware, it is vital that very early on in development some sort of representation of the hardware and its software be made available to the target customer base so that the frequent feedback loop can be established and product course corrections can occur early on in development. It is imperative that this representation also accurately reflect the features and capabilities of the product well enough to serve as a virtual version of it in lieu of the actual physical manifestation of the product – both for development and final testing purposes.

High-level chip modeling today

ESL has come a long way over its three-decade evolution. Chip designs contain so many hardware accelerators, CPUs and DSPs today that a Transaction-Level Modeling (TLM) approach lends itself quite readily to high-level modeling of today’s SoCs.
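To make the TLM idea concrete, here is a minimal sketch in Python rather than SystemC. It does not use the SystemC TLM-2.0 API; the names `Transaction`, `MemoryTarget`, `Bus` and `transport` are hypothetical and chosen only for illustration. It shows the core of the approach: a bus transfer is one object passed by function call, with latency annotated on the object instead of being simulated cycle by cycle.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    """One bus transfer described as a single object, not as pin activity."""
    command: str       # "read" or "write"
    address: int
    data: int = 0
    delay_ns: int = 0  # accumulated, loosely timed latency


class MemoryTarget:
    """Hypothetical memory model with a fixed per-access latency."""

    def __init__(self, size_words, latency_ns):
        self.words = [0] * size_words
        self.latency_ns = latency_ns

    def transport(self, txn):
        if txn.command == "write":
            self.words[txn.address] = txn.data
        elif txn.command == "read":
            txn.data = self.words[txn.address]
        else:
            raise ValueError(f"unknown command: {txn.command}")
        txn.delay_ns += self.latency_ns
        return txn


class Bus:
    """Routes a transaction to the target whose address window contains it."""

    def __init__(self, hop_latency_ns):
        self.hop_latency_ns = hop_latency_ns
        self.targets = []  # list of (base, size, target)

    def attach(self, base, size, target):
        self.targets.append((base, size, target))

    def transport(self, txn):
        for base, size, target in self.targets:
            if base <= txn.address < base + size:
                # Translate to a target-local address and add the bus hop delay.
                local = Transaction(txn.command, txn.address - base,
                                    txn.data, txn.delay_ns + self.hop_latency_ns)
                return target.transport(local)
        raise ValueError(f"no target at address {txn.address:#x}")


bus = Bus(hop_latency_ns=2)
bus.attach(base=0x1000, size=256, target=MemoryTarget(256, latency_ns=10))
bus.transport(Transaction("write", 0x1004, data=0xCAFE))
result = bus.transport(Transaction("read", 0x1004))
print(hex(result.data), result.delay_ns)
```

Because each accelerator, CPU or DSP only has to expose a `transport`-style entry point, blocks can be swapped in and out of the model without touching signal-level detail, which is what makes this style attractive for early architectural exploration.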

Furthermore, industry standards organizations such as Accellera and high-level abstraction languages such as SystemC and SystemVerilog, as well as standards such as UVM (Universal Verification Methodology), have helped to drive EDA support for verification towards much higher levels of quality and effectiveness.

But is it enough?

Are the current ESL and verification EDA environments a help or a hindrance to the LCS model? Can the existing set of technologies and solutions support the LCS method? Is there anything that can or should be done to make them work better and further improve chip startup ROI?

How It’s Done Now – The Good, Bad and Ugly

There is still a widespread preference for building ESL models in C or C++. This is the fastest in simulation and lends itself extremely well to software development, as well as being the most familiar descriptive language to software developers.

The ESL model is – ideally – intended to be the Golden Model for defining chip functionality and performance expectations and for the correctness of the verification model used in the SoC’s back-end design phase. As such, the testbenches in the ESL model are developed with eventual transferability to the SoC verification suite in mind.

100M-gate designs in 28nm are not considered unusual nowadays, even taking into account that typically half of an SoC die consists of SRAM. The generic IP content is amazingly high – empirically about 75-80% of the typical SoC. This, however, complicates both ESL and verification efforts, since the IP is somewhat ‘foreign’ to the design team. The Accellera methodology standards have helped, but there are still difficulties arising from differences in quality and feature support in generic IP offerings and compatibility across EDA tools.

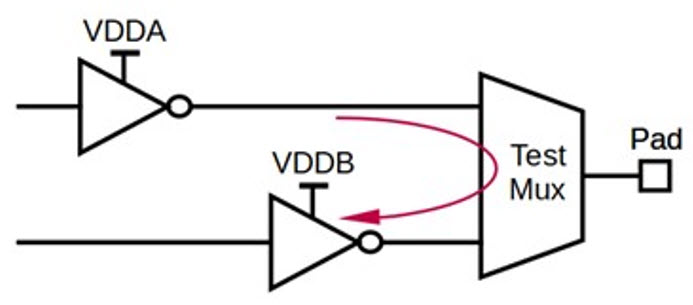

Some IP providers offer superlative verification support – MIPS and ARM are excellent examples. Others, however, offer only bus-functional models and leave the heavy lifting to the SoC developers. With 16 corners requiring characterization and timing closure in 28nm, the lack of sufficient verification support from a disturbingly high number of generic IP providers is a galling issue for many an SoC team. As a result, verification has become as complex as, or more complex than, the actual design work.

Rapid prototyping – first with an ESL model and then through FPGA emulation until first silicon is available – and accurate verification modeling are integral to the rapid hypothesis testing required for the LCS model to achieve its 90%+ product success rate. The rest of this article will focus on the individual strengths and weaknesses of ESL and verification technology today and suggest directions for improvement with the attendant benefits to cost, turn-around time (TAT), and ROI for the LCS method.

ESL – the Golden Model

Current ESL technology improves code density and simulation time by 10-100x over RTL and is a real gift to the LCS method. ESL tools are finally starting to take hold because they focus better on dataflow and have backed off attempts to replace RTL as control. Often built in C or C++, ESL models provide early architectural modeling as well as the necessary environment for hardware/software co-development. High-level synthesis (HLS) lets developers virtualize multi-CPU architectures with hardware accelerators, DSPs, hierarchical busses and software stacks and quickly prototype them for early performance estimates. Such hardware architectures favor a dataflow approach over a control approach and also lend themselves very effectively to FPGA emulation for system validation, realtime debug and functional evaluation. Thus, ESL is a natural part of the Lean Chip Startup engineering regimen.

However, despite the great strides made by EDA vendors in ESL over the last two decades, the implementation of this highly abstracted golden model is still somewhat of an art form. There are issues regarding the capture of various parts of the hardware and software design. There are also profound challenges to correlating the ESL abstraction with more concrete silicon-level backend issues: multi-corner timing closure, signal integrity, on-chip power management and packaging. In short, current ESL deficiencies include both interfacing and modeling inadequacies.

Generic IP issues – with wide variations in licensing terms and quality of vendor products being what they are – mean that microarchitecture and implementation details have to be minimized so that there is minimal target dependency on hardware and maximum retargetability. Thus, the modeling problems for ESL representations of SoCs with high generic IP content can be a headache.

The ability of ESL to properly handle memory – both on and off chip – is a particularly acute issue. On-chip memory is about half the total SoC area and half or more of total power dissipation. Automated memory partitioning for power and performance, memory merging and data reordering are all required areas of improvement for EDA vendors. Off-chip memory is a more severe problem area. Memory hierarchies and protocols for quick, efficient accesses are poorly modeled and cause a major C-to-gates disconnect. There are efforts underway to create virtualized caching and prefetching, but much more work on improving models is needed.

ESL members of SoC teams have compensated for these deficiencies by substituting pointers for properly modeled memory activity. However, the heavy use of pointers, the use of dynamic memory allocation and the very nature of the abstractions make the conversion from TLM to a synthesizable design that can be compiled into a gate-level netlist still quite laborious. Also, the HLS synthesizable subset is to this day non-standardized and thus vendor-specific.

Consequently, SoC teams often support two ESL models – one for simulation and software development, the other for implementation and hardware development. And the fun doesn’t stop there: the teams spend cycles keeping the two models functionally equivalent. ESL was supposed to be a labor-saving device, but current limitations in memory support swim against the current, so to speak. Thus, reconciliation between the dynamic nature of the simulation model and the static nature of the implementation model at the HLS level is a critical problem for EDA vendors. Even more helpful would be an industry standard with a vendor-common synthesizable subset consisting of templates and libraries for interfaces, bus hierarchies and models for memory functions, optimized for efficient memory footprint and performance.
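The chore of keeping two models functionally equivalent can be illustrated with a small sketch. It assumes a FIFO as the block under study and uses hypothetical class names: one model leans on a dynamically sized list, as a simulation model might, and the other uses fixed storage with explicit pointers, closer to what a synthesizable description requires. Random stimulus of this kind can expose divergence, but it is not a proof of equivalence.

```python
import random


class SimulationFifo:
    """Simulation-style model: dynamically sized list, allocation on demand."""

    def __init__(self, depth):
        self.depth = depth
        self.items = []

    def push(self, value):
        if len(self.items) == self.depth:
            return False  # full
        self.items.append(value)
        return True

    def pop(self):
        return self.items.pop(0) if self.items else None


class ImplementationFifo:
    """Implementation-style model: fixed storage plus read/write indices."""

    def __init__(self, depth):
        self.depth = depth
        self.storage = [0] * depth
        self.read_index = 0
        self.write_index = 0
        self.count = 0

    def push(self, value):
        if self.count == self.depth:
            return False  # full
        self.storage[self.write_index] = value
        self.write_index = (self.write_index + 1) % self.depth
        self.count += 1
        return True

    def pop(self):
        if self.count == 0:
            return None  # empty
        value = self.storage[self.read_index]
        self.read_index = (self.read_index + 1) % self.depth
        self.count -= 1
        return value


def first_mismatch(depth, steps, seed):
    """Drive both models with identical random stimulus; return the first
    step at which their visible results differ, or None if they never do."""
    rng = random.Random(seed)
    sim, impl = SimulationFifo(depth), ImplementationFifo(depth)
    for step in range(steps):
        if rng.random() < 0.5:
            value = rng.randrange(1 << 16)
            results = (sim.push(value), impl.push(value))
        else:
            results = (sim.pop(), impl.pop())
        if results[0] != results[1]:
            return step
    return None


mismatch = first_mismatch(depth=8, steps=10_000, seed=1)
print("models agree" if mismatch is None else f"diverged at step {mismatch}")
```

Every feature added to one model has to be mirrored in the other and rechecked this way, which is the recurring cost the paragraph above describes.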

The latest developments in ESL support are trying to permit estimation of reliability and yield over process/voltage/temperature (PVT) variations. However valiant these attempts are, though, there is still a lot of trail to clear. RTL decisions on bus sizes, hardware/software partitioning and pipelining play a huge role in accurate power estimation. However, ESL still needs to have a way to capture switching activity across logic and registers, clock tree effects, clock gating, voltage islands, power gating, and voltage/frequency scaling. In other words, the ESL has to reach through its abstraction down to the area and power you would see in actual silicon, including interconnect and its parasitics, with attendant performance and power implications.
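As a point of reference, most of the items in that list enter through the standard first-order expression for CMOS dynamic power. The symbols below are introduced here for illustration and do not appear in the original text.

```latex
P_{\mathrm{dyn}} = \alpha \, C_{\mathrm{eff}} \, V_{DD}^{2} \, f
```

Here $\alpha$ is the switching activity factor, $C_{\mathrm{eff}}$ the effective switched capacitance (including interconnect), $V_{DD}$ the supply voltage and $f$ the clock frequency. Clock gating lowers the effective activity, voltage islands and voltage/frequency scaling change $V_{DD}$ and $f$ per domain, and interconnect parasitics add to $C_{\mathrm{eff}}$. Leakage, which power gating targets, is a separate term not covered by this expression. An ESL model that cannot estimate $\alpha$ and $C_{\mathrm{eff}}$ has little basis for an accurate power number.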

Thus, to support design constraints stemming from targets for the three P’s (performance, power, price/cost/area), HLS in ESL will need vastly improved modeling of actual physical implementations of circuits, memories and interconnect, including third- and fourth-order effects. Also, deeper submicron is making deterministic physical variables much harder to find, and parameters are more and more being handled as probability distributions – which will pose a rather interesting challenge to ESL modeling, but a challenge that will have to be faced. In effect, we’re back to the physical modeling needs of RTL through synthesis that resulted in physical synthesis tools development, but now at the ESL level of abstraction.
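To illustrate what handling parameters as probability distributions means in practice, the following sketch estimates timing yield by Monte Carlo sampling. Everything in it is an assumption made for illustration: the stage delays, the 8% sigma, the normal distribution and the independence between stages. Real process variation is correlated and would need a more careful model.

```python
import random


def sample_path_delay_ns(rng, stage_means_ns, sigma_fraction):
    """Draw one path delay: each stage delay is sampled independently from a
    normal distribution centered on its nominal value (an assumption)."""
    return sum(rng.gauss(mean, mean * sigma_fraction) for mean in stage_means_ns)


def timing_yield(stage_means_ns, sigma_fraction, clock_period_ns, trials, seed):
    """Fraction of sampled dies whose path delay fits in the clock period."""
    rng = random.Random(seed)
    passing = sum(
        sample_path_delay_ns(rng, stage_means_ns, sigma_fraction) <= clock_period_ns
        for _ in range(trials)
    )
    return passing / trials


# Hypothetical five-stage critical path, nominal delays in nanoseconds.
stages = [0.12, 0.20, 0.15, 0.18, 0.10]
print(f"nominal path delay: {sum(stages):.2f} ns")
for period in (0.75, 0.80, 0.85):
    estimate = timing_yield(stages, 0.08, period, trials=20_000, seed=42)
    print(f"clock period {period:.2f} ns -> estimated yield {estimate:.3f}")
```

A clock period equal to the nominal delay passes only about half of the sampled dies, so a deterministic "meets timing" answer hides most of the story. That is the kind of information an ESL flow would need to surface.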

Verification – the ‘Reality Check’

SystemVerilog now seems to be winning the ‘hearts and minds’ battle over SystemC as a modeling language thanks to the maturity and familiarity of close-to-the-silicon Verilog. However, verification suffers some of the same implementation and support problems as ESL, and a few of its own besides.

Neither UVM nor OVM has libraries for standard interfaces. With standardized interfaces in place, modularization could be achieved across the generic IP space. Eliminating the need to port verification IP (VIP) to individual tools would also be to the benefit of both the EDA industry and its customers.
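The modularization argument can be sketched in a few lines. The example assumes a hypothetical common interface, `RegisterInterface`, and two made-up vendor models; none of these names come from UVM, OVM or any real IP catalog. The point is only that one test written against the shared interface runs unchanged on both models, whatever their internals look like.

```python
from abc import ABC, abstractmethod


class RegisterInterface(ABC):
    """Hypothetical common interface that every IP model would expose."""

    @abstractmethod
    def write(self, address, value):
        ...

    @abstractmethod
    def read(self, address):
        ...


class VendorATimer(RegisterInterface):
    """Made-up vendor model that stores registers in a dictionary."""

    def __init__(self):
        self.registers = {}

    def write(self, address, value):
        self.registers[address] = value & 0xFFFFFFFF

    def read(self, address):
        return self.registers.get(address, 0)


class VendorBUart(RegisterInterface):
    """Made-up vendor model that stores registers in a fixed word array."""

    def __init__(self):
        self.bank = [0] * 16

    def write(self, address, value):
        self.bank[address // 4] = value & 0xFFFFFFFF

    def read(self, address):
        return self.bank[address // 4]


def register_walk_test(dut, addresses):
    """Reusable check: write a walking-one pattern to each register and read
    it back. Returns a list of (address, bit) pairs that failed."""
    failures = []
    for address in addresses:
        for bit in range(32):
            pattern = 1 << bit
            dut.write(address, pattern)
            if dut.read(address) != pattern:
                failures.append((address, bit))
    return failures


for dut in (VendorATimer(), VendorBUart()):
    print(type(dut).__name__, "failures:", register_walk_test(dut, [0x0, 0x4, 0x8]))
```

Without the shared interface, the same check would have to be rewritten, or at least wrapped, for each vendor's model and each tool, which is the porting effort the paragraph above argues could be eliminated.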

The maturity of the verification model is a problem as well – it takes many cycles to make it truly robust. Coverage must go beyond simple protocol-specific stimuli: it must also include a valid range of likely implementations, and it should include metrics on both functional and compliance coverage, with granularity ranging from the individual IP block all the way to the full SoC implementation.
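One way to picture coverage metrics with block-to-SoC granularity is a tree that rolls up bin counts per coverage kind. The sketch below is an assumption-laden illustration: the `CoverageNode` class, the block names and the bin counts are all hypothetical, and weighting the roll-up by bin count is only one of several reasonable choices.

```python
from dataclasses import dataclass, field


@dataclass
class CoverageNode:
    """A block, subsystem or SoC with its own coverage bins and children."""
    name: str
    own_bins: dict = field(default_factory=dict)   # kind -> (hit, total)
    children: list = field(default_factory=list)

    def totals(self, kind):
        """Sum hit and total bins of one kind over this node and its subtree."""
        hit, total = self.own_bins.get(kind, (0, 0))
        for child in self.children:
            child_hit, child_total = child.totals(kind)
            hit += child_hit
            total += child_total
        return hit, total

    def percent(self, kind):
        hit, total = self.totals(kind)
        return 100.0 * hit / total if total else None

    def report(self, kinds, indent=0):
        """Return indented report lines for this node and its subtree."""
        parts = []
        for kind in kinds:
            value = self.percent(kind)
            parts.append(f"{kind}=n/a" if value is None else f"{kind}={value:.1f}%")
        lines = ["  " * indent + f"{self.name}: " + ", ".join(parts)]
        for child in self.children:
            lines.extend(child.report(kinds, indent + 1))
        return lines


# Hypothetical hierarchy and bin counts.
usb = CoverageNode("usb_block", {"functional": (45, 50), "compliance": (18, 20)})
ddr = CoverageNode("ddr_block", {"functional": (30, 40), "compliance": (10, 10)})
io_subsystem = CoverageNode("io_subsystem", children=[usb, ddr])
soc = CoverageNode("soc", {"functional": (5, 10)}, children=[io_subsystem])

print("\n".join(soc.report(["functional", "compliance"])))
```

A report like this lets a team see at a glance whether a weak full-chip number comes from one immature block model or from missing integration-level stimuli.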

The problem of deciding the completeness of verification coverage has been referred to as an NP-complete problem. Avoiding that kind of intractable complexity requires planning, specifying and setting boundary conditions.

And therein lies the rub. Deciding on the appropriate boundary conditions requires experience with the IP block and the standard(s) to which it purportedly complies. Developing that expertise can take months or even years. And what is the benefit to a chipmaker to have such expertise in-house?

Because of the aforementioned complexity of design in 28nm or deeper nodes, as well as the variability in verification modeling support from generic IP vendors, it is common for an SoC team to have an equal number of design and verification engineers. Yet this is, in every respect, a bad thing. Verification is a vital capability, yet is not the source of value-add. The unique technology created by the design engineering team is what gives the product traction in the market. Verification, vital as it is and requiring expertise at least equal in caliber to that of the design team, only adds to overhead costs. Furthermore, a full verification team cannot keep busy in a company that produces fewer than five or six chips per year – they will have idle times, and, as a result, their skillsets will tend to lag the industry at large. To add salt to the wound, today’s SoC development is dominated by the verification burden, which, on empirical evidence, constitutes 70%-80% of the entire design effort. Reducing this burden is a first-order strategic necessity for improving on the TAT and ROI of the LCS model.

The Way Forward

The most beneficial thing the EDA sector could do in the near term to support the LCS model would be to address the interface issues. The great commonality between ESL and verification needs is a common interface format that can be employed by generic IP developers, system integrators and EDA firms. This would streamline both efforts and facilitate front-end/back-end engineering cohesion at the hardware level, as well as simplifying testbench development and software stack work.

Based on our collective experience, reinforced by research that includes the items in the bibliography at the end, we contend that an OVM/UVM/VMM standard interface library could cut ESL and verification efforts from a collective 8-10 months to half of that. ARM’s widely adopted hierarchical, multi-tiered AMBA bus standard is the logical choice for that interface. EDA firms, generic IP vendors and system integrators are obvious candidates to contribute expansions of such a standard to ameliorate the bandwidth, performance or control issues that remain in any application-specific use of the AMBA bus architectures, if only ARM could be convinced to move AMBA towards an open-source model.

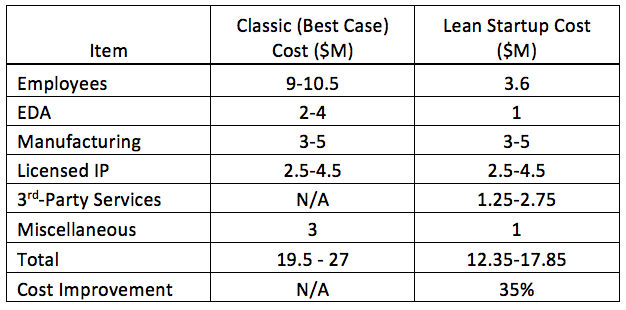

Thus, a standard interface library would cut costs by roughly 50% for ESL and verification support, which are both outsourced in LCS. There would be cascading positive effects on other front-end and back-end engineering services from the chip startup third-party ecosystem in terms of simplifying the workload and reducing contracted length of engagement. On average, a $1M-$1.5M savings in outsourced engineering costs and a 3-4 month reduction in development schedule should be immediately realizable, consequently achieving a 35%-40% reduction in cost and development time in the LCS model over the best-case classic model. There may be further efficiencies – even in the staffing levels of the disruptive technology inventor, since modularization of VIP and interface standardization for both ESL and verification will reduce development efforts broadly for both hardware and software.

As pointed out earlier, verification teams, staffed at 1:1 with design teams, are necessary but non-value-adding overhead and are particularly burdensome to midsize or small startup chipmakers. It’s clear at this point that verification IP is something specialized and complex enough that it should truly be treated as a separate and distinct IP class, and that it should be outsourced for licensing, implementation and support, thus sharing the development, support and maintenance costs of the VIP with multiple customers. One can expect that VIP vendors will compete vigorously to provide verification models supporting multiple tools, languages (SystemVerilog, SystemC, etc.) and methodologies (UVM, OVM, VMM, etc.). Furthermore, it will be the burden of these VIP vendors to provide models with sufficient maturity, including protocol coverage through assertions and regressions, application-specific block/subsystem/SoC stimuli, capturing a sufficient set of useful metrics, and supporting simulation, acceleration/emulation and formal verification.

Over a longer time frame, improved memory modeling and bringing ESL abstractions closer to actual silicon will ripple through every aspect of the LCS method. Costs and development timelines will be positively affected for every line item in the LCS model, with happy consequences for the number and diversity of disruptive inventions pursued in the semiconductor industry.

Concluding Thoughts

One can also observe that implementation and support of the LCS model has a hidden benefit: it is cyclically self-reinforcing. The very effort of refocusing chip startup efforts with the LCS method in order to bring disruptive inventions to market faster and with vastly improved ROI puts pressure on EDA, generic IP and system integrator firms to innovate their current product and/or service offerings, or develop disruptive inventions of their own, with beneficial effects to the LCS ecosystem and the entire chip sector. This, in turn, further improves the LCS model’s ROI potential, restarting the cycle as a positive feedback loop for new technology creation and having industry-wide impact.

Bibliography:

“Verification IP: Changing Landscape”, Gaurav Jalan

“Best Practices for Selecting and Using Verification IP”, Richard Goering

“The Next Major Shift in Verification is SoC Verification”, Michael Sanie

“The Truth About SoC Verification”, Adnan Hamid

“Chip Design Without Verification”, Lauro Rizzatti

“Verification – You Have To Start Smart”, Mike Gianfagna

“Field Manual for Verification Planning, Parts 1 and 2”, Suhas Belgal

Authors:

Peter Gasperini – VP of Business Development, Markonix LLC; previously President and GM of Iunika North America, with 22+ years of experience in Silicon Valley with ASIC, FPGA, embedded microprocessors and engineering services.

Qasim Shami – CEO and co-founder of Comira Solutions; previously VP of Software Development & Architecture at Ikanos.

Contributor:

Bob Caulk, VP of ASIC Design at TZYX, Chief Architect at Metta Technology

Image: Ilija Kovacevic

These authors have suggested that unified IP interfaces would be a huge productivity enhancer. (Along with other things.) Do you agree? And is it even possible to corral all these IP guys together?

Good article. Outsourcing the verification piece to the right partner can also significantly reduce overhead cost of sales, and it supports the LCS model.