At process nodes below 100 nanometers (nm), achieving yield ramp becomes both more critical and a greater challenge for semiconductor manufacturers. New manufacturing steps, materials and device types, coupled with escalating process variations and a host of other challenges, continually increase the difficulty in device scaling. At the same time, growing market pressures are further squeezing already tight development and delivery windows. In this environment, there’s no room for error in yield learning; devices that don’t yield the first time spell doom for chipmakers, who must get to yield ramp as quickly as possible.

Developing methodologies that provide reliable workflows has helped process and yield engineers break down this challenge into manageable steps – one of the most important being detection of systematic failure mechanisms, such as scratching of wafers during chemical-mechanical planarization (CMP). These macro-scale failures are spatially localized and thus both easy to capture and relatively easy to correct using wafer inspection and statistical process control techniques. However, process steps are expected not merely to build up defect-free devices but to deliver parametric quality so that the devices built on the wafer will function as expected.

Process steps typically show a variation in the rate at which device materials are modified across the surface of a wafer, but this variation is subject to control limits and is thus closely maintained. With the advent of sub-100-nm manufacturing nodes, the extent of this variation is dictated not only by process condition but also by the local topology of the design, which the process steps build into the device, layer by layer. Thus, to understand the true nature of the parametric variation [1], engineers need to determine the unique control limits for each device being processed, and then assess the electrical impact for each topological feature within the design. There are several methods by which this can be accomplished.

Analyzing the analysis methods

Techniques such as failed bitmap analysis, used on static random-access memories (SRAMs), became highly popular for their ability to assess electrical impact at the granular, per-device level and then localize the impact to a specific bit topology on the wafer [2]. For the logic portions of the chip, however, bitmap analysis is less viable due to the complex relationship between the location of a logic element in a design and the corresponding topological feature in the physical device.

This challenge was a key reason for the surge earlier this decade in the use of structural testing techniques for yield learning. Structural testing relies on a) inserting design-for-testability (DFT) circuitry in the device design, and b) using this circuitry to generate test vectors that target specific structural elements within the logic design using a failure hypothesis based on key fault models. Typically created through automatic test pattern generation (ATPG), these test vectors enable the engineer to analyze the collective test results in diagnosing the specific logic elements of the design that are demonstrating incorrect electrical switching during test application.

The ability to localize observed faults is one of the strongest advantages of structural testing over conventional testing approaches [3]. The diagnostics capability localizes faults based on a heuristic analysis of observed versus expected switching behavior, isolates the logic elements in the design where this difference could originate and propagate, and ultimately yields a set of defect candidates for all observed faults. To identify a systematic failure mechanism, this approach is applied to a large volume of failing devices, and the collective results show which design elements failed most often. In other words, the volume diagnostics approach can employ statistical analysis to localize and identify electrical defects pointing to the most dominant failure mechanisms [4].
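The idea of comparing observed with expected switching behavior can be illustrated with a toy example. The sketch below is not the algorithm of any commercial diagnostics tool; it assumes a hypothetical three-input circuit, y = (a AND b) OR c, a single stuck-at fault model, and an exhaustive pattern set, and it simply keeps every fault whose simulated response matches the observed one.

```python
from itertools import product

# Hypothetical toy circuit: y = (a AND b) OR c, with internal node n1 = a AND b.
NODES = ["a", "b", "c", "n1", "y"]

def simulate(a, b, c, fault=None):
    """Evaluate the circuit, optionally with one node stuck at 0 or 1.

    fault is None for a good device, or a (node_name, stuck_value) tuple.
    """
    def apply(node, value):
        # Override the node value if this node carries the injected fault.
        if fault is not None and fault[0] == node:
            return fault[1]
        return value

    a, b, c = apply("a", a), apply("b", b), apply("c", c)
    n1 = apply("n1", a & b)
    return apply("y", n1 | c)

def diagnose(observed):
    """Return the stuck-at faults whose simulated output matches every observed output."""
    faults = [(node, value) for node in NODES for value in (0, 1)]
    return [
        f for f in faults
        if all(simulate(*pattern, fault=f) == out for pattern, out in observed.items())
    ]

patterns = list(product((0, 1), repeat=3))
# Hypothetical tester log: responses of a device whose node n1 is stuck at 0.
observed = {p: simulate(*p, fault=("n1", 0)) for p in patterns}
candidates = diagnose(observed)
print(candidates)
```

In this toy case the diagnosis returns three candidates rather than one, because a stuck-at-0 on either AND input is indistinguishable at the output from a stuck-at-0 on n1. Real diagnosis results are candidate sets for the same reason.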

However, while volume diagnostics denote the specific logic elements that are candidates for an electrical defect, the defect may actually reside on the wiring that connects logic blocks. Diagnostics data alone cannot pinpoint where the defect may have come from, particularly when the wires in question can be hundreds of microns long. As the following examples show, physical design data combined with volume diagnostics analysis provides the best possible localization of the electrical defect [5,6], enabling the engineer to determine when yield problems occur and ascertain the root cause.

Finding the failures

Figure 1 illustrates the volume diagnostics flow and corresponding corrective action path. For each tested wafer, the desktop test equipment, a Verigy ZFP tester, generates one Standard Test Data Format (STDF) file and one ASCII datalog with the record of ATPG fails (i.e., both failing pins and failing cycles). The diagnostics utility uses the datalog to generate a table of defect candidates for all observed faults. The STDF files, defect candidate tables, parametric test (PT) results, layout description and inline inspection data all serve as input information for the volume diagnostics analysis performed with the yield management system, Synopsys’ Yield Explorer.

Fig. 1. Flow diagram of volume diagnostics and corrective action path

Locations of failing devices obtained from STDF files are plotted as wafer maps and analyzed for zonal signatures. The diagnostics results provide the logic cell instance and pin ID for each of the logic elements identified as a defect candidate for one or more particular faults. Performing statistical analysis on these elements reveals instances that fail repeatedly, indicating a strong systematic failure mechanism. All the nets that connect through these failing instances are also traced, using the physical design data, and analyzed for any topological signatures, such as the use of specific via configurations, or pronounced use of certain metal layers. Correlating defect candidate signatures to inline inspection data and removing from further analysis candidates that match up to inline defects avoids spending time on failure mechanisms already known during manufacturing.
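The statistical step described above, finding instances that fail repeatedly and setting aside candidates already explained by inline defects, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the yield management system's implementation: the die IDs, instance names, coordinates, and the `radius_um` matching tolerance are all hypothetical.

```python
import math
from collections import Counter

def rank_candidates(diagnosis_by_die):
    """Count in how many failing dice each (instance, pin) candidate appears."""
    counts = Counter()
    for candidates in diagnosis_by_die.values():
        # A set counts each candidate once per die, even if it is
        # reported for several faults on that die.
        counts.update(set(candidates))
    return counts.most_common()

def drop_inline_matches(candidates_xy, inline_defects_xy, radius_um=1.0):
    """Keep only candidates with no inline defect within radius_um of their location."""
    kept = {}
    for name, (cx, cy) in candidates_xy.items():
        near = any(math.hypot(cx - dx, cy - dy) <= radius_um
                   for dx, dy in inline_defects_xy)
        if not near:
            kept[name] = (cx, cy)
    return kept

# Hypothetical diagnosis output: die ID -> list of (cell instance, pin) candidates.
diagnosis_by_die = {
    "W01_X3Y4": [("u_core/ff_12", "Q"), ("u_core/nand_7", "A")],
    "W01_X5Y5": [("u_core/ff_12", "Q")],
    "W02_X4Y4": [("u_core/ff_12", "Q"), ("u_io/buf_3", "Z")],
}
ranking = rank_candidates(diagnosis_by_die)

# Hypothetical layout coordinates (um) and inline inspection defects on one die.
candidates_xy = {"u_core/ff_12": (120.0, 340.0), "u_io/buf_3": (15.0, 22.0)}
inline_defects_xy = [(15.3, 22.4)]
unexplained = drop_inline_matches(candidates_xy, inline_defects_xy)
print(ranking[0], sorted(unexplained))
```

Here the instance that appears in all three failing dice rises to the top of the ranking, while the candidate sitting next to a known inline defect is removed from further analysis.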

Next, all hypotheses regarding systematic failure mechanisms are validated in silicon, with selected failing devices being sent for physical failure analysis (PFA) accompanied by the layout X-Y coordinates of high-frequency defect candidates and net elements. Finally, feedback is given to process engineers for fixing the marginalities highlighted by the volume analysis, thereby closing the flow.

Looking at the results

In an example case, investigation of a low-yield trend on four lots showed that many of the failing devices failed a chain test. A chain test reveals failure of a sequential element within the design, which provides access to the combinatorial logic for targeted testing. Chain test diagnostics led to identification of the sequential elements as defect candidates, some of which were shown, through statistical analysis, to be much more frequently associated with chain test failures than others. Each defect candidate was also analyzed for its impact on the various chains to which it connected.

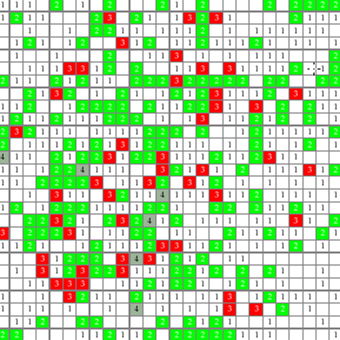

The analysis showed that most of the devices failing due to chain failures were concentrated in the central zone of the wafers. Figure 2 shows a stacked wafer map of devices with chain test failures. Further analysis indicated one particular candidate cell was highly correlated to multiple chains failing the test. With this candidate cell superimposed on the device layout (see Figure 3), a very densely packed topology of polysilicon lines can be seen.

Fig. 2. Devices failing chain test tended to be localized in the central zone on the wafers

Fig. 3. Candidate cell boundary superimposed on layout of failing device
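A stacked wafer map of the kind described above is simply the per-die sum of failures over many wafers, and a zonal signature can be checked by splitting those counts by distance from the wafer center. The sketch below assumes hypothetical die coordinates, a hypothetical wafer center at die (5, 5), and an arbitrary two-die radius for the "center" zone; none of these values come from the study.

```python
import math
from collections import Counter

def stack_wafer_maps(fail_maps):
    """Sum chain-test failures per die coordinate over all wafers."""
    stacked = Counter()
    for fails in fail_maps:
        stacked.update(fails)
    return stacked

def zone_fail_counts(stacked, center_xy, center_radius):
    """Split stacked fail counts into 'center' and 'edge' zones by die distance."""
    zones = {"center": 0, "edge": 0}
    for (x, y), n in stacked.items():
        r = math.hypot(x - center_xy[0], y - center_xy[1])
        zones["center" if r <= center_radius else "edge"] += n
    return zones

# Hypothetical data: one list of failing die (x, y) coordinates per wafer.
fail_maps = [
    [(5, 5), (5, 6), (1, 5)],
    [(5, 5), (4, 5)],
    [(5, 5), (6, 5), (9, 5)],
]
stacked = stack_wafer_maps(fail_maps)
zones = zone_fail_counts(stacked, center_xy=(5, 5), center_radius=2)
print(stacked[(5, 5)], zones)
```

Raw counts are used here for brevity. A fairer comparison would divide each zone's count by the number of dice tested in that zone, since the edge zone usually contains more dice than the center.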

Nanoprobing of this cell identified a particularly densely packed pair of transistors with a lower-than-expected drain current, which was related to the transistors’ source-drain and channel formation. Based on these data, a failing device was sent for PFA. A subsequent cross-section image of the transistors highlighted a lack of undercut of spacer oxide underneath the two transistors, leading to the determination that the thicker spacer oxide prevented the correct implant dose from reaching the silicon between the densely packed transistors, making the channel resistive. In addition, the wafer map indicated that the remaining oxide was not uniform across the wafer; more remaining oxide was seen at the center than at the edge. Similar analysis of lots processed at spacer oxide thickness close to lower specification conditions confirmed that thinner oxide deposits enabled the most uniform performance across the wafer.

Following these analyses, process-centering towards a thinner remaining spacer oxide was adopted, and the counts of devices failing the chain test dropped significantly. In Figure 4, which compares chain test failures, the five lots at left were made with the old spacer process, and the four lots at right with the new spacer process. The improvements in failure rate and uniformity are clear.

Fig. 4. Comparison of chain failures between old and new spacer processes
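One way to put a number on such an old-versus-new comparison is a two-proportion z-test on the chain-failure fractions. This is a generic statistical sketch, not the analysis the authors report, and the device counts below are invented purely for illustration.

```python
import math

def two_proportion_z(fail_a, total_a, fail_b, total_b):
    """Compare two failure fractions with a pooled two-proportion z-test.

    Returns (fraction_a, fraction_b, z, two_sided_p_value).
    """
    p_a = fail_a / total_a
    p_b = fail_b / total_b
    pooled = (fail_a + fail_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_a, p_b, z, p_value

# Hypothetical counts: chain-test fails / devices tested, old vs. new spacer process.
p_old, p_new, z, p_value = two_proportion_z(150, 5000, 40, 4000)
print(f"old={p_old:.3f} new={p_new:.3f} z={z:.2f} p={p_value:.2e}")
```

A large positive z with a small p-value would indicate that the drop in failure fraction is unlikely to be lot-to-lot noise, under the test's assumption that devices fail independently.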

Enabling design-specific analysis

In earlier generations of CMOS technology, the process baseline remained relatively constant for a given technology node and flavor, regardless of the design. However, for sub-100-nm technology nodes, tuning the process conditions to optimize yield and performance on each specific design is becoming more common. As a second example indicates, volume diagnostics analysis is a key component in process-centering for a new device, enabling seemingly esoteric fault determinations.

Several scan test failures were found on the first characterization lot. Failing dice were diagnosed and defect candidates from all the failing dice analyzed statistically. As indicated in Figure 1, the focus of this analysis was to identify any standard cell type that repeatedly failed across multiple dice, leading to yield loss on these dice, and then prioritize them for further scrutiny based on a range of factors: number of repeated fails, number of times the cell type was used across the entire design, area of each cell type, and so on. A specific cell type was repeatedly found to be associated with scan fails. The composite wafer maps and stacked die maps did not reveal any specific zonal signatures or lens field effects adversely impacting printability of this cell along one edge of the die.
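The prioritization factors listed above can be combined in many ways; the text does not give a formula. The sketch below shows one possible scoring, assumed here for illustration only: failing dice divided by the total placed area of the cell type, so that a cell type that fails often despite being rare and small ranks first. Cell names and numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class CellTypeStats:
    name: str
    failing_dice: int   # dice where this cell type was a defect candidate
    instances: int      # times the cell type is placed in the design
    area_um2: float     # area of one instance, in square microns

def prioritize(stats):
    """Sort cell types by failing dice per unit of total placed area, highest first."""
    def score(s):
        return s.failing_dice / (s.instances * s.area_um2)
    return sorted(stats, key=score, reverse=True)

# Hypothetical per-cell-type statistics gathered from volume diagnostics.
stats = [
    CellTypeStats("NAND2_X1", failing_dice=6, instances=120_000, area_um2=1.0),
    CellTypeStats("SDFF_X2", failing_dice=8, instances=4_000, area_um2=5.0),
    CellTypeStats("INV_X4", failing_dice=2, instances=60_000, area_um2=0.8),
]
ordered = [s.name for s in prioritize(stats)]
print(ordered)
```

Normalizing by usage and area matters because a heavily used cell type will collect random-defect hits simply by occupying more of the die.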

Given the lack of any other pointers toward any macro process signatures that affected the failure of this cell type, the next step was to investigate the exact nature of the cell type’s electrical failure and physical design attributes. The test patterns that captured a malfunction of this cell were predominantly testing for a ‘stuck-at’ hypothesis. Also, in six out of the eight failing dice, the failure had been localized to a specific instance of this cell type, but no in-line defects corresponded to these particular locations. Repeated failures of the same instance suggested that a systematic defect mechanism was operating at this unique cell instance.

When the layout of this cell type and the net associated with it were examined carefully, the instance did not divulge any known-marginal critical geometry. However, the associated net passed very close to another net on the Metal-3 layer, as the layout clip in Figure 5a shows. In the layout, the spacing between the two metal shapes, indicated by the blue arrows, was 140 nm, but when measured in-line (Figure 5b), the spacing between the two lines clearly deviated from expectations, narrowing to 70 nm at one point. Simulation of test pattern application with this new spacing showed capacitive coupling between the neighboring nets, leading to a bridging fault, which a ‘stuck-at’ test would indeed capture without the presence of any physical defect. Upon further investigation of the layout, per Figure 5c, a Via-2, placed exactly at the site of the protrusion, went through the Metal-3 shape and connected to Metal-2 below.

Fig. 5a. Layout clip showing Metal-3 shape for a suspect net

Fig. 5b. CD-SEM image of the suspect net at Metal-3

Fig. 5c. Layout clip showing Metal-3 shape with via filled in
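As a rough first-order check on why the narrowed spacing matters, the lateral coupling between two parallel metal lines can be approximated as a parallel-plate capacitor. This is a textbook simplification, not the simulation the authors ran: it ignores fringing fields, and the symbols ε (dielectric permittivity), t (metal thickness), L (length over which the lines run side by side) and s (spacing) are introduced here only for illustration.

```latex
C_c \approx \frac{\varepsilon \, t \, L}{s}
\qquad\Longrightarrow\qquad
\frac{C_c(s = 70\ \text{nm})}{C_c(s = 140\ \text{nm})} = \frac{140}{70} = 2
```

Under this approximation, the coupling capacitance per unit length doubles wherever the spacing halves. Because the narrowing to 70 nm was observed at one point rather than along the whole line, the increase for the net as a whole would be smaller than a factor of two.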

This observation led to the conclusion that the process needed to be centered where the via CD would print slightly smaller, and that the Via-2 overlay to Metal-3 needed tightening to avoid the via shape being printed and etched beyond the metal boundaries. The scan-chain failure percentage plot for the characterization lot and the subsequent lots with the centered process is shown in Figure 6.

Fig. 6. Comparison of initial and subsequent characterization lots

Conclusion

Volume diagnostics have proven effective and efficient in seeking out systematic failure mechanisms during early ramp of a new sub-100-nm technology, in both typical yield debug and more preventive process-centering situations. In both scenarios, volume diagnostics allow the combination of design, fab and test data to isolate the dominant but subtle failure mechanisms with relative ease. Statistical analysis ensures that mechanisms contributing to the largest failures are prioritized for investigation; cross-correlation between design, fab and test data provides a much clearer path to understanding and correcting the root causes behind the failures; and split-lot analysis enables quantitative comparison between process conditions and drives process-centering to minimize systematic failures. Deployed in conjunction with design attributes and in-line parameters, volume diagnostics show excellent potential for use in defining unique multivariate signatures for failures, making this approach a key step toward achieving true adaptive production yield monitoring.

References

1. Raman K. Nurani et al., “In-Line Yield Prediction Methodologies Using Patterned Wafer Inspection Information”, IEEE Transactions on Semiconductor Manufacturing, Vol. 11, No. 1, Feb 1998.

2. Nermine H. Ramadan, “Redundancy Yield Model for SRAMS”, Intel Technology Journal, Q4 1997.

3. B. Mathew et al., “Understanding yield losses in logic circuits”, IEEE Design & Test Magazine, May/June issue, 2004, pp 208-215.

4. M. Sharma, B. Benware, M. Keim, H. Tang, I.Y. Chang, A. Man, “Identifying Physical Root Causes for Yield Excursions from Test Fail Data”, IEEE European Test Symposium 2008, Verbania, Italy.

5. D. Appello et al., “Enabling Effective Yield Learning through Actual DFM-Closure at the SoC Level”, ASMC Conference Proceedings, ASMC 2007.

6. D. Appello et al., “Advances on Yield Learning through Concurrent Evaluation of Design and Process Data”, ASMC Conference Proceedings, ASMC 2009.

About the Authors:

Davide Appello holds a degree in Electronics Engineering from the Universita’ di Pavia in Italy. He has been with STMicroelectronics since 1994. He is the DfX technologies senior expert in the Automotive Product Group and he manages the silicon validation and test engineering activities. He has authored and co-authored more than 50 papers in the area of DFT, Diagnosis and DFM. He is part of the Program Committees of VTS, ETS, DATE, IOLTS, DRV, SDD, TTEP.

Vincenzo Tancorre received a BS in Electronic Engineering from Politecnico di Bari in 2000. He works as a Yield Enhancement Engineer at STMicroelectronics. His major responsibilities include parametric correlation and statistical data analysis to support yield enhancement of Automotive SoC devices during the ramp-up phase of new technologies.

Jacky Gomez received a Diplome Universitaire de Technologie in Electronics from the University of Grenoble in 1982. He has been a member of Product Engineering and Test Development teams since 1985. He has worked at EFCIS, Thomson Semiconductor and STMicroelectronics in various product divisions. Since 2007 he has concentrated on Failure Analysis Engineering efforts.

Daniele Li Rosi earned a degree in Physics in 1995 from Universita’ di Catania. He has been with STMicroelectronics since 1997, holding several roles in both Process and Device Engineering Groups. He has worked on Stand Alone Flash Memories and SoC devices with Embedded Flash at the 90 nm node. He focuses on statistical analysis and yield improvement during the development, ramp-up and full-production phases.

Christophe Suzor holds a degree in Chemical Engineering from the University of Melbourne, Australia, 1992. He has worked at Tokyo Electron, Philips Semiconductors, Electroglas, HPL, and Synopsys. He uses his strong manufacturing and test background to provide yield analysis expertise as a member of the Yield Management R&D team at Synopsys.

Sagar A. Kekare holds a Master’s degree in Materials Science and Engineering from the University of Texas at Arlington. He is currently Group Manager of Product Marketing at Synopsys. He is responsible for the Yield Management group of products. Prior to joining Synopsys, he was a Senior Yield Management Consultant for KLA-Tencor and a Process Integration Team Leader for Rockwell / Conexant Systems.
