It’s no secret that EDA tools that process billions of transistors have a lot of work to do and can take a really long time to do it. It’s also no secret that having multiple computers do much of that work in parallel should be an obvious way to speed things up.

What is surprising, then, is the number of different approaches that have been taken to making parallel algorithms work. That variety suggests that, as obvious as multi-processing looks, how to get there is not.

There are two classic ways of pulling apart – or “decomposing” – a program if you want to exploit opportunities for parallelism. The first is to pull apart the data and have multiple machines do the same thing to different clumps of data. The other is to separate out the tasks and have different machines do different tasks. In practice, some methodologies rely on a bit of both.
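To make the distinction concrete, here is a minimal sketch of both decompositions in Python. Everything in it is made up for illustration: `delay` and `slack` are hypothetical stand-ins for real analysis work, and the "cells" are just integers. Python threads also won't speed up CPU-bound work like this, so treat it as a picture of the structure rather than a benchmark.

```python
from concurrent.futures import ThreadPoolExecutor

def delay(cell):
    # Hypothetical per-cell computation.
    return cell * 2

def slack(cell):
    # A second, different hypothetical computation.
    return 10 - cell

def data_decomposition(cells, workers=4):
    # Same work on every worker; each worker gets its own clump of data.
    chunks = [cells[i::workers] for i in range(workers)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = pool.map(lambda chunk: sum(delay(c) for c in chunk), chunks)
        return sum(partial_sums)

def task_decomposition(cells):
    # Different work on each worker; every worker sees the same data.
    with ThreadPoolExecutor(max_workers=2) as pool:
        total_delay = pool.submit(lambda: sum(delay(c) for c in cells))
        worst_slack = pool.submit(lambda: min(slack(c) for c in cells))
        return total_delay.result(), worst_slack.result()

cells = list(range(8))
print(data_decomposition(cells))   # total delay, computed chunk by chunk
print(task_decomposition(cells))   # (total delay, worst slack), computed side by side
```

In the first function the number of workers scales with the amount of data; in the second it is capped by the number of distinct tasks you can identify, which is one reason real tools tend to mix the two.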

But lest we get sloppy with concepts here, let’s be a bit more precise. For example, what constitutes a “machine”? And what constitutes a “task”? Here we risk wading back into threading terminology hell, so let’s take it a bit easy.

First, let’s deal with what a “machine” is. We’ve talked before about the multi-processor/core terminology overloading problem. Whereas we’ve blown off the differences before, here we should be more careful, because it matters.

Within a single computing box, you may have one or more processors, each of which may have one or more cores. Assuming a simple standard server configuration, such a box will run a single SMP OS that governs all of those processors and cores.

But when you hook multiple computers together, even though it’s still a form of multi-processing, it’s different because each computer has its own OS. There is no OS that has visibility over the entire computing grid (other than a load-sharing program that may be assigning processes to boxes).

So on an individual box, you have the option of splitting the computing up into separate processes or into threads within one or more processes. But, from box to box, you must have different processes: you can’t have a single true über-process distributed over all the boxes because there isn’t an über-OS to manage the whole thing across a pan-computer agglomerated memory space.

So if you’re going to send part of the processing to another box, spawning a thread is out of the question. You have to create a new process in the other box. Threads are more convenient because they automatically inherit a lot of the context of their parent process, but, when you create a new process, you have to build everything from scratch. And you have to “marshal” the data from one box to the other. That takes time, and figuring out how much data to send over the network from box to box can make or break an algorithm.
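A small sketch shows the difference. The thread below simply reads its parent's data in place, while anything bound for another box first has to be flattened into bytes. The `netlist` dictionary and the `marshal_slice` helper are hypothetical, and `pickle` stands in for whatever wire format a real tool would use; no actual network is involved.

```python
import pickle
import threading

# Hypothetical design data living in the parent process.
netlist = {"cells": list(range(1000)), "clock_ns": 2.5}

# Thread: shares the parent's memory, so nothing has to be copied.
result = {}

def worker():
    result["count"] = len(netlist["cells"])

t = threading.Thread(target=worker)
t.start()
t.join()

# Process on another box: it sees none of this, so the data must be
# marshaled into bytes, shipped over, and rebuilt on the far side.
def marshal_slice(data, start, stop):
    payload = {"cells": data["cells"][start:stop], "clock_ns": data["clock_ns"]}
    return pickle.dumps(payload)

def unmarshal(blob):
    return pickle.loads(blob)

everything = pickle.dumps(netlist)
one_slice = marshal_slice(netlist, 0, 100)
print(len(everything), len(one_slice))  # bytes to ship: whole design vs. one slice
```

The byte counts are the point: sending each box only the slice it needs is far cheaper than sending everything, and choosing that slice well is where the algorithm earns its keep.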

So, getting back to the original question, when we talk about multiple “machines,” it really does matter whether it’s one box or multiple boxes. And, accordingly, the “task” may be a process or thread.

And I’m really hoping to go for several weeks or months here without having to think about threads and processes any more.

So if you’re going to be splitting up the data set, then you may be well served by using different boxes. You can essentially run the same process on each box, each having its own set of data to work on. Of course, if you’re doing something like place and route, or, more relevant to our topic today, timing and signal integrity analysis, then you have issues of boundary crossing where the data was sliced, and that’s where the really hard part is for this approach.
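As a toy illustration of the boundary problem, picture a single timing path as a chain of stage delays, cut in half so that two boxes can each take a piece. This is a deliberately simplified sketch with invented numbers, not how any particular tool slices a design; `arrival_times` is a hypothetical helper.

```python
def arrival_times(delays, start=0.0):
    # Cumulative arrival time after each stage of a simple chain.
    times = []
    t = start
    for d in delays:
        t += d
        times.append(t)
    return times

# Hypothetical stage delays (ns) along one path, sliced down the middle.
delays = [1.0, 2.0, 0.5, 1.5, 3.0, 0.5]
left, right = delays[:3], delays[3:]

left_times = arrival_times(left)

# Analyzing the right half on its own ignores whatever arrives at the cut.
naive_right = arrival_times(right)

# Getting it right means passing the boundary value from one slice to the other.
stitched_right = arrival_times(right, start=left_times[-1])

print(naive_right)
print(stitched_right)
```

The stitched version matches an unsliced analysis, but only because the right half waited for a number from the left half. Every such crossing is a dependency between boxes, and real designs have a great many of them.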

On the other hand, if you split up tasks, then a multi-threaded program can add value within a box. In theory, you can create as many threads as you want and let the OS take advantage of however many processing units there are. In practice, thread management adds overhead, so spawning fewer threads when fewer can actually run in parallel means less thread thrash.
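One simple way to avoid spawning more threads than can run is to cap the pool at the core count. The sketch below does that with Python's standard library; `pick_worker_count` and `run_tasks` are hypothetical helpers, and this is a generic illustration rather than what any of the tools discussed here actually do.

```python
import os
from concurrent.futures import ThreadPoolExecutor

def pick_worker_count(n_tasks, cores=None):
    # Never more threads than cores, never more threads than tasks,
    # and always at least one.
    cores = cores or os.cpu_count() or 1
    return max(1, min(n_tasks, cores))

def run_tasks(tasks, cores=None):
    # Run zero-argument callables on a pool sized to the machine.
    workers = pick_worker_count(len(tasks), cores)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda task: task(), tasks))

print(pick_worker_count(100, cores=4))  # many tasks on a four-core box
print(pick_worker_count(2, cores=4))    # fewer tasks than cores
```

Leaving `cores` unset lets the program ask the OS how many processing units it has, which is the “let the machine decide” end of the spectrum.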

You can actually deal with this a couple of ways. One is essentially to create your own “dispatcher” to create and schedule threads. We looked at Mentor’s interesting approach to this with their Olympus tool back in ’08. Another is to pre-compile your design to target a specific system configuration for the way you want to split things up; Synopsys did this last year with VCS.

Now Synopsys is announcing its parallelization results for PrimeTime. And their approach is yet again different. And multi-faceted. You can split a job up amongst cores in one box, or split the job across many boxes, or both. To clarify terminology here, they refer to the multi-box approach as “distributed” and the multi-thread approach as “threaded.”

They’ve actually supported the distributed approach in the past. The problem was that, regardless of the computing resources in each box, they could target only one core per box.

So now they support multi-threading within the box. Only it’s not quite so simple, since the threading approach has been tuned for the number of cores. By default they’re optimized for four cores. If you want to target boxes with more or fewer and if you want that operation to be optimized, then someone who knows what he or she is doing has to get in there and monkey with the system to tune it.

This is sort of unusual. When you run Excel, you don’t get in there and tweak how the threading is done based on how many cores are in your computer. (Not sure that it would matter anyway, but that’s a separate problem.) So why is this useful for PrimeTime?

Synopsys’s response is that these are massive “juggernaut” programs plowing through incredible volumes of data, so keeping the highways free of congestion makes a huge difference. Ken Rousseau, their VP of Engineering for PrimeTime, acknowledges that, even for users, it helps to be more multicore savvy to take best advantage of this. This isn’t your simple desktop tool, where you’re managing only the odd user mouse-click or keyboard-tap as they occur in what feels to the computer like geological time.

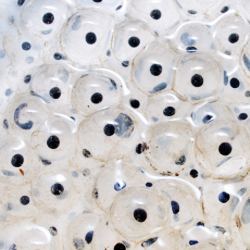

The real way you judge whether you’re getting the best performance is to look at the loading of the cores as they’re used. Ideally, they should all be busy all the time, and they should all end at the same time. When Synopsys monitored the cores for a four-core system, they saw relatively even loading of the cores. Presumably, if you don’t tweak the secret parameters, using a box with more cores might be faster, but not as efficient, with some cores seeing more idle time than others. It actually takes experimentation to figure out how best to tweak it for different core counts.
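If you want to put a number on “faster, but not as efficient,” one common yardstick is the fraction of available core-time that was spent doing work. The sketch below computes it from per-core busy times. The figures are invented purely to illustrate the arithmetic and are not Synopsys measurements; `parallel_efficiency` is a hypothetical helper.

```python
def parallel_efficiency(busy_seconds):
    # Fraction of core-time spent busy, where the run lasts as long as
    # the busiest core. 1.0 means every core was busy the whole time.
    if not busy_seconds or max(busy_seconds) <= 0:
        raise ValueError("need at least one core with positive busy time")
    wall = max(busy_seconds)
    return sum(busy_seconds) / (len(busy_seconds) * wall)

# Invented numbers: the same 40 seconds of work spread two ways.
four_core = [10.0, 10.0, 10.0, 10.0]                 # evenly loaded
eight_core = [8.0, 8.0, 6.0, 6.0, 4.0, 4.0, 2.0, 2.0]  # unevenly loaded

print(max(four_core), parallel_efficiency(four_core))
print(max(eight_core), parallel_efficiency(eight_core))
```

In this made-up case the eight-core run finishes sooner, yet a good share of its core-time sits idle, which is exactly the kind of gap that tuning for the core count is meant to close.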

So chalk it up to yet another in the panoply of approaches to parallelizing lengthy EDA processes. And to another situation where the solution isn’t as obvious or simple as you might hope.