Green Hills, the company known more for embedded systems and real-time OSes and such, is now a Certificate Authority (CA). Or, more accurately put, their Integrity Security Services subsidiary is the CA.

“What??” you say. Does this put them into competition with the likes of Verisign and Symantec? Actually… no. For this we need to dig deeper into the world of security and certificates. Because Integrity isn’t a CA for the types of certificates we’re used to; they’re going to serve the automotive market, which will work differently.

Browser certs

When computers want to interact with each other, there’s this problem: how does one computer know that the other computer is who it says it is? We’ve covered this in some detail in the past, but the short answer is that there’s a certificate chain that can ultimately be traced back to some well-known and well-trusted entity, which we refer to as a CA. The certificate typically includes a public key as well as a “they’re who they say they are” assurance so that a secure conversation can be started with a key exchange.

If you want to obtain this kind of certificate, you apply to one of the CAs, giving them information about your company and, critically, paying them some money. They do some due diligence (how much depends partly on how much you pay), and then they send you a certificate.

Of course, you don’t have to go through a CA; you can be your own CA. But your customers’ browsers don’t know about you in the same way that they already know and trust the traditional CAs, so if you go that route, then you have to make sure your customers add your certificate to their browsers – a less user-friendly approach.

So that’s the computer/browser/IoT world. The next big inter-communication thing is automotive, and, as we’ve seen, there are a couple of communications standards vying for primacy in that space. But even in the fast-moving world of cars starting a conversation as they approach and then ending it once they pass each other, we still need to make sure those communications are secure.

That includes having confidence that someone claiming to be the car next to you – you know, the one with the driver winking at you? – is truly who they say they are. Heck, for all you know, that attractive person could actually be someone sitting on their bed who weighs 400 pounds (the someone, not the bed). And it has been amply demonstrated that a hacked car is not fit for the road, so we can’t compromise on security just because we’ve got this highly dynamic environment.

And there’s yet one more problem: privacy. Let’s say that each car came with a browser-style certificate. We’ll assume, for simplicity, that the automotive OEM included the cost of that in the BOM just to bury that annoyance so the customer (who, in all likelihood, knows – and cares – nothing about the details of security) doesn’t have to worry about it.

In that scenario, you’re driving around in your car as it makes and breaks conversations with surrounding cars. All of those communications carry your certificate. Which means that anyone who’s paying attention can pretty much track your whereabouts at all times. You say you were at the gym from 6-8:30? That’s strange… there are no gyms anywhere near where your certificate was bouncing around during those hours… So the Feds have mandated untrackability.

There is also a mandate on how long car-to-car authentication can take. After all, at 65 mph, it’s too easy to imagine an electronic conversation starting and ending something like, “OK, yes, we can talk.” “Naw, never mind; I passed you long ago.”

So the automotive cert process is being defined as being rather different. And, as you might expect from a system that’s needed because a simpler system is inadequate, this one is somewhat more complicated.

Can you top off my certs please?

The idea is to make all vehicle certs short-lived. You use a cert for a while… and then you move on to a new cert. In this way, no one can identify you with a single certificate and watch where you go. If they can pin a cert to you, they’ll be able to watch where you go only while that cert is active. Once you switch, they’re back in the dark.

But there’s another challenge here. Let’s say you use your certificate and then it expires; now you need a new one. Do you have to go through some rigmarole each time this happens? After all, we’re talking, like, 1000 certs per year per vehicle. That’s, like, 3 new requests a day. Not something the traditional CAs are set up to do.

At a high level, the way this will work is that, when you make a request to your CA, you’re not going to get only one cert; you’ll get a stash of them. As they expire, you move on to the next one in the trove. As you start getting low on certs, you can start a new request. That request can be filled in a more reasonable time, since you still have certs that haven’t expired. No need to complete the transaction before that semi mows you over.
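To make the top-off idea concrete, here is a minimal sketch of a cert stash with a low-water mark. Everything in it is an assumption for illustration: the `Cert` fields, the `CertPool` class, the `make_batch` stand-in for a CA response, and the 8-hour lifetime (chosen only because it works out to roughly three certs a day). It is not drawn from any proposed spec.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Cert:
    serial: int
    not_after: float  # expiry time in seconds (hypothetical field name)


class CertPool:
    """Holds a stash of short-lived certs and signals when to top off."""

    def __init__(self, low_water_mark: int):
        self.low_water_mark = low_water_mark
        self.certs = deque()

    def add_batch(self, batch):
        # A filled request arrives: append the new certs to the trove.
        self.certs.extend(batch)

    def current(self, now: float):
        # Discard expired certs, then use the next one in the trove.
        while self.certs and self.certs[0].not_after <= now:
            self.certs.popleft()
        return self.certs[0] if self.certs else None

    def needs_top_off(self, now: float) -> bool:
        # Ask for more while there are still unexpired certs in hand.
        self.current(now)  # drop anything already expired
        return len(self.certs) <= self.low_water_mark


def make_batch(first_serial, count, start, lifetime):
    # Stand-in for a CA response: certs that expire one after another.
    return [Cert(first_serial + i, start + (i + 1) * lifetime)
            for i in range(count)]


pool = CertPool(low_water_mark=2)
pool.add_batch(make_batch(first_serial=0, count=5, start=0.0,
                          lifetime=8 * 3600.0))
```

The point of the low-water mark is that `needs_top_off` turns true while `current` still returns a valid cert, so the refill request never sits on the critical path of a car-to-car conversation.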

The proposed process involves so-called “butterfly keys.” Usually, the term “butterfly” tends to evoke the image of wings spreading open – like butterflied shrimp or the failed butterfly ballot. But in this case, there appears to be more of a link to the concept of metamorphosis: the journey from caterpillar to cocoon to butterfly.

There are several CA-like operators that get involved here. There’s a root CA at the top, an intermediate CA in… surprise… the middle, a “pseudonym” CA (PCA), and a registration authority (RA). The process is started within the car using what’s referred to as the on-board equipment (OBE). An outline of the process was laid out by the CAMP Vehicle Safety Communications 3 (VSC3) Consortium (where CAMP stands for Crash Avoidance Metrics Partnership) – an effort largely spearheaded by major auto names like Ford and GM.

The process includes provisions for enrolling cars and for detecting misbehavior, but we’ll focus on the simpler aspects of requesting a cert top-off. You’ll note that some of the complexity arises from a desire not to be able to track the generation of certs. In other words, given a particular cert, there’s no way to follow it back to the granter and, ultimately, the requestor. Those tracks are all covered.

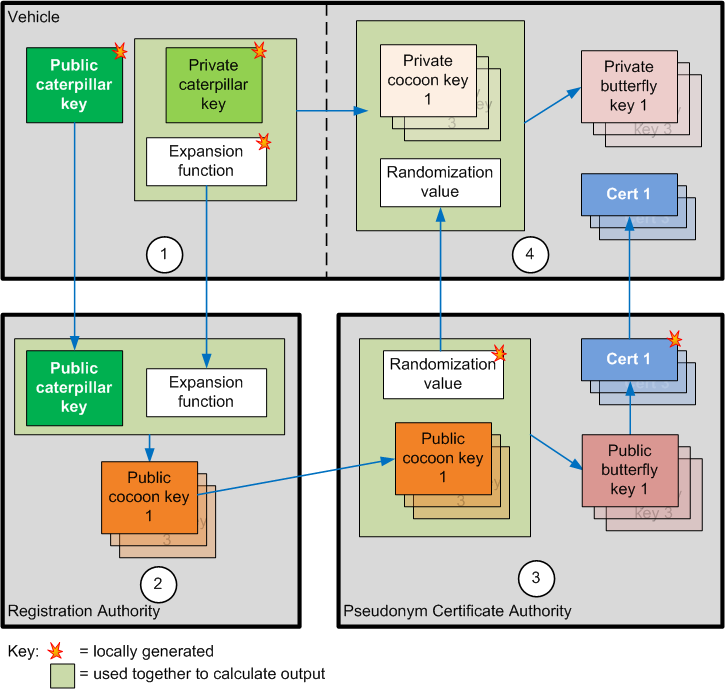

We start with the OBE generating a public/private pair of “caterpillar” keys, along with an expansion function. The public key and expansion function are sent to the RA.

The RA runs the expansion function on the public caterpillar key to generate any number of “cocoon keys.” Those are sent to the PCA, where they are embedded into certificates. Before embedding them, the PCA randomizes the cocoon keys into butterfly keys so that it’s not possible to correlate keys generated in the same request; they appear to be independent. Likewise, the RA won’t be able to correlate actual certs with requests it got.

So now you have a bunch of certs with public butterfly keys in them; these are returned by the PCA directly to the OBE. Meanwhile, the OBE has also run the original expansion function on its private caterpillar key, which didn’t get sent to the RA. So it’s got a bunch of private cocoon keys. Along with the certs, the PCA returns a randomization number (the one used to create the public butterfly keys) directly to the OBE, which the OBE uses to generate private butterfly keys from the private cocoon keys. Because both sides used the same random number, those private butterfly keys will correspond to the public butterfly keys embedded in the returned certificates.
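The caterpillar-to-cocoon-to-butterfly arithmetic can be sketched in a few lines. Fair warning about the assumptions: this uses a toy group (integers modulo a Mersenne prime) rather than whatever curve the real system specifies, a hash-based `expand` function that I made up as a stand-in for the expansion function, and hypothetical function names throughout. It shows only why the private and public keys line up at the end, not how the actual protocol is specified.

```python
import hashlib
import secrets

# Toy group: integers modulo the Mersenne prime 2**127 - 1. NOT secure; it
# only stands in for the group a real system would use.
P = 2**127 - 1
G = 3
ORDER = P - 1  # exponents can be reduced mod P - 1 (Fermat's little theorem)


def public_from_private(priv: int) -> int:
    return pow(G, priv, P)


def expand(seed: bytes, i: int) -> int:
    """Hypothetical expansion function: the i-th offset derived from a seed."""
    digest = hashlib.sha256(seed + i.to_bytes(4, "big")).digest()
    return int.from_bytes(digest, "big") % ORDER


# OBE: caterpillar key pair plus an expansion function (here, just its seed).
caterpillar_priv = secrets.randbelow(ORDER)
caterpillar_pub = public_from_private(caterpillar_priv)
seed = secrets.token_bytes(16)


def ra_cocoon_public(cat_pub: int, seed: bytes, i: int) -> int:
    # RA: works only from the public caterpillar key and expansion function.
    return (cat_pub * pow(G, expand(seed, i), P)) % P


def pca_butterfly_public(cocoon_pub: int):
    # PCA: randomizes the cocoon key; c travels back to the OBE with the cert.
    c = secrets.randbelow(ORDER)
    return (cocoon_pub * pow(G, c, P)) % P, c


def obe_butterfly_private(cat_priv: int, seed: bytes, i: int, c: int) -> int:
    # OBE: expands its private key the same way, then applies the same c.
    cocoon_priv = (cat_priv + expand(seed, i)) % ORDER
    return (cocoon_priv + c) % ORDER


def round_trip(i: int):
    cocoon_pub = ra_cocoon_public(caterpillar_pub, seed, i)
    butterfly_pub, c = pca_butterfly_public(cocoon_pub)
    butterfly_priv = obe_butterfly_private(caterpillar_priv, seed, i, c)
    return butterfly_pub, butterfly_priv
```

For any index `i`, `public_from_private(butterfly_priv)` equals `butterfly_pub`, even though the RA and PCA never saw a private key. Note also that the RA never learns `c`, which is what keeps it from matching finished certs to the cocoon keys it produced.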

In the figure, it looks like the finished goods are sent by the PCA to the OBE in the clear. But, in fact, the OBE includes a public key in its initial request that can be used to encrypt the return package. I was considering whether the public caterpillar key could serve that purpose. My concern there is whether broadly communicating what is essentially a seed for the anonymous certs could somehow allow the expansion to be reversed.

Presumably, the expansion functions are one-way functions that are very hard (impossible?) to reverse; if the seed key were included in the package with the expanded keys, this would thwart that one-way effort by providing the answer along with the cocoon keys. So my gut says that’s not a good idea; a separate public key should be used for encrypting the results. It would go from OBE to RA to the PCA, which would then encrypt the product before sending it back to the OBE.

I’m also going to assume that the frequently-conversing RA and PCA will have set up their own key pair so that the RA can encrypt the package that it sends to the PCA.

This is the process in which Integrity will be participating. They’ll act as Root CA as well as providing the capabilities needed for the other roles. To be clear, much of this appears to be still in the proposal stage, and business models haven’t really been sorted yet. Integrity sees early cars simply being provisioned up front with about 12,000 certs or so, with the top-off mechanism coming later. And, once it all settles out, the process details might have changed.

Integrity doesn’t see the traditional CAs wanting to participate in this business. While there might be some cash exchange going on in the process (details being part of the as-yet-to-be-determined biz model), it’s more a micro-transaction sort of thing, as compared to the more substantial transactions that happen today.

The high-volume nature of vehicular certs also means that the process has to be automated to a larger degree than the browser cert process is. So the existing infrastructure as used by browser cert folks may not give them any head start for the vehicular version.

There’s also an open question as to whether this model might play better with low-cost, high-volume IoT devices. It might reduce costs, it would relieve the computational burden on the device side, and it could make adding security easier. But, realistically, I don’t think there’s a lot of energy in this direction – at least not until they get vehicles sorted.

Integrity says that they’re the first vehicular CA. Which also demonstrates that this is early days. We’ll watch to see what happens as this settles out into a firm process.


What are your thoughts on how the automotive certificate system is shaping up?