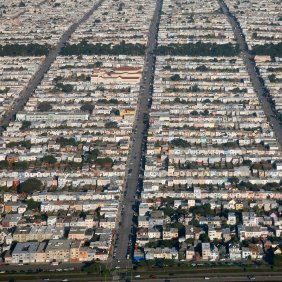

It doesn’t take an old-timer to notice that traffic in Santa Cruz sucks. The population has grown, but the town still relies on the same old road network. While once it might have taken a half hour to get from Westside to Aptos, it can now take at least that long just to get to the freeway at the wrong time of day. For the most part, it’s faster to use a bicycle.

Clearly, scaling has failed. Well, during rush hour anyway. Adapting to more users of the traffic resources can mean doing things very differently, a difficult thing in towns with a historical legacy and emotional appeal. You can’t just bulldoze the town and start over.

We do have that luxury to a much greater extent when pushing technology. As we continue to add processors for more computing power, you might expect the data traffic to get congested as well – and here we can rip things up and start again (with some possible attention to legacy code). The high end of multicore would not survive without it.

Any processor with more than one core used to qualify as “multicore.” But, as embedded stood on the sidelines, multicore was largely taken up by the desktop world. And so it has tended to mean dual- or quad-core. Nothing overwhelming, just a nominal amount of extra coreage to keep from having to clock at terahertz rates, thereby avoiding having to attach a nuclear power plant to each processor.

But multicore is hard. For systems with just a few cores running a general-purpose operating system, the OS wizards who understand scheduling can handle the multicore magic for us. Regular everyday software programmers can then write multi-threaded programs (enough of a challenge in its own right, if done well) and let the OS take care of what runs on which core.

It’s the simple, happy, symmetric multi-processing (SMP) model where all cores look the same and all reference the same memory. The latter point is important since, if a task gets moved from one core to another, it can still find all its data without having to move the data too.

But you can take SMP only so far. If every core is trying to get to the same memory space, then you’ve got a huge bottleneck waiting to throttle performance. Maybe if you’ve got only, say, four cores contending for the same memory, then you might do ok, if your programs aren’t too aggressive. But try to start scaling up the number of cores, and you’re going to be in trouble.

Things are worst, of course, if different tasks all want the same specific data. You may then need to burden the whole process with locks and other paraphernalia to make sure that one task doesn’t clobber another task’s atomic operation. This adds overhead as tasks wait in line to get to their data, patiently acquiring and releasing locks.
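To make the locking overhead concrete, here is a minimal sketch in Python (chosen purely for illustration; the function name `run_counter` is hypothetical). Several threads update one shared counter, and the lock forces them to take turns on the read-modify-write.

```python
import threading


def run_counter(num_threads: int, increments: int) -> int:
    """Increment one shared counter from several threads, guarded by a lock."""
    counter = 0
    lock = threading.Lock()

    def worker() -> None:
        nonlocal counter
        for _ in range(increments):
            # Only one thread at a time may do the read-modify-write,
            # so every other thread waits in line here.
            with lock:
                counter += 1

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter
```

The result is correct, but every increment pays for an acquire and a release, and the threads spend part of their time queued up behind each other rather than computing.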

But even without that, you’ve got a memory bus that everyone needs to use to get to the memory. Too many cores means it’s gonna get clogged up. Even if you tried to use multiple busses, the memory itself ultimately has only so many ports (if it’s even multi-ported in the first place), and that’s where all those busses are going to end up. So you’re just moving the bottleneck downstream.

Obviously caching helps, since, having gone through the work of retrieving some data from external memory, you can now work with it locally, with write-throughs back to memory mostly taken out of the critical execution path. How well that works will depend on how many cores need to see a clean up-to-date version of the same data, of course.

So a generally accepted expectation is that, with this kind of multicore, you can get up to, oh, eight or so cores before running out of steam.

And yet there are companies touting many, many more cores than this. Tilera is moving to 100. Adapteva is talking thousands – 4096, to be precise. These chips are so far from the plodding dual-core processor that they’ve been accorded a separate name – many-core. Multi no longer does justice. So what the heck gives here? How can all these cores be slammed together and still manage data in memory?

These aren’t the first chips to exceed eight or so cores. But processor chips of the ilk provided by Netlogic and Cavium have always been focused on the packet-processing world, and they have been specially honed for the painstakingly detailed programming models perfected there over many years. A general programmer may not feel particularly at home in this environment.

If a many-core processor is going to be used for more general-purpose programming, it somehow has to look more like a big version of something familiar without the problems of just trying to scale the basic multicore chips. So what have they done differently in their memory and bus systems?

Both Adapteva and Tilera use their own home-grown processor cores. I’m not going to focus on the cores, making the risky (and, to them, probably infuriating) simplifying assumption that they’re more or less commensurate. That’s simply because I’m more interested in the memory and interconnect.

And, indeed, the memory approaches are very different from what would be scaled up from an everyday PC processor. And they’re different from each other.

Adapteva solves the problem by putting lots of memory on chip. In theory, there are 4 GB available via a 32-bit address. That memory is split up 4096 ways for 4096 cores, giving each core a 1-MB allocation. In actual fact, today each core has 32 KB of memory, with the remainder reserved for future expansion. (Just think: so many more undefined addresses to write to in C other than address 0!)
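The arithmetic behind that split can be sketched as follows. This assumes the simplest possible layout, with the upper 12 bits of the address selecting the core and the lower 20 bits giving the offset in that core's 1-MB window. The actual bit assignment is not given here, so treat the field layout and the names below as hypothetical.

```python
ADDRESS_BITS = 32
NUM_CORES = 4096
CORE_BITS = NUM_CORES.bit_length() - 1    # 12 bits to name 4096 cores
OFFSET_BITS = ADDRESS_BITS - CORE_BITS    # 20 bits left over per core
WINDOW_BYTES = 1 << OFFSET_BITS           # 1 MB address window per core
POPULATED_BYTES = 32 * 1024               # 32 KB actually present today


def decode(address):
    """Split a flat address into (core, offset, backed_by_real_memory).

    Assumed layout: core index in the upper bits, offset in the lower bits.
    """
    if not 0 <= address < (1 << ADDRESS_BITS):
        raise ValueError("address does not fit in 32 bits")
    core = address >> OFFSET_BITS
    offset = address & (WINDOW_BYTES - 1)
    return core, offset, offset < POPULATED_BYTES
```

Under these assumptions, only the first 32 KB of each 1-MB window lands on real memory; the rest of the window is the room reserved for expansion.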

They refer to this as distributed shared memory because, in truth, the entire 4 GB map is available to any core. So it is, in fact, shared. The fact that it’s distributed means that it is literally many separate memories, eliminating the issue of one giant memory needing lots of ports.

Access to these memories is via an on-chip network, which is pretty much the only way a chip of this scale is going to work. Each core becomes part of a mesh node, accompanied by a DMA engine, its local memory, and an interface to a local network “eMesh router,” one of which is associated with each core or node. The router can take traffic five ways: the four cardinal directions and into/out of the node.

There are three networks overlaid on each other in the mesh. The first is the on-chip memory network, which can transfer 8 bytes per cycle as part of a single transaction “packet” that also contains the source and destination addresses, meaning that there’s no start-up transaction overhead. This is the means by which any part of the internal memory can be accessed by any core. Of course, if one core wants to read from a location associated with a far-away core, there will be a latency hit depending on how many links it takes to get there. But each link in the mesh can be traversed in one clock.
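As a rough feel for that latency hit, the sketch below counts links between two cores. It assumes the 4096 cores are laid out as a 64×64 grid numbered row by row and that traffic takes a shortest path. Both are assumptions for illustration, as are the function names.

```python
MESH_WIDTH = 64  # assumption: 4096 cores arranged as a 64 x 64 grid


def coords(core):
    """Return (row, column) for a core index, assuming row-by-row numbering."""
    return divmod(core, MESH_WIDTH)


def hop_count(src, dst):
    """Links crossed on a shortest path between two cores (Manhattan distance).

    With one clock per link, this is also the transit time in clocks,
    ignoring any contention along the way.
    """
    src_row, src_col = coords(src)
    dst_row, dst_col = coords(dst)
    return abs(src_row - dst_row) + abs(src_col - dst_col)
```

In this model a neighbor is one clock away, while a corner-to-corner access crosses 126 links, which is why keeping data close to the core that uses it matters so much.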

The five ways through the router per node, each of which can handle 8 B/cycle, give total throughput of 40 B/cycle. At a mesh frequency of 1 GHz, that’s 40 GB/s per node.
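The per-node figure is just a product of three numbers, which makes it easy to see how it would move with a different clock or link width. The helper name below is hypothetical.

```python
def node_bandwidth_gb_per_s(ports=5, bytes_per_cycle=8, mesh_ghz=1.0):
    """Peak router throughput per node in GB/s.

    ports * bytes per cycle gives bytes per cycle per node; multiplying
    by the clock in GHz (1e9 cycles/s) gives GB/s.
    """
    return ports * bytes_per_cycle * mesh_ghz
```

With the default values this returns 40.0, matching the 40 GB/s quoted above. It is a peak number: it assumes all five directions are busy on every cycle.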

The other two networks are an off-chip memory network and a request network, each of which can handle 1 byte per clock.

From an actual programming perspective, a programmer can deal with the entire memory space as one flat, unprotected memory. If synchronization safety is an issue, they have a task/channel programming model where library calls facilitate a simple memcopy to get the data across. Messaging in general is handled using an MCAPI library.
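The task/channel idea can be illustrated generically. The sketch below is not the Adapteva library and not the MCAPI API. It uses Python's standard `queue` and `threading` modules as stand-ins, and `run_pipeline` is a hypothetical name. The point is only that the producer copies data into a channel instead of letting the consumer reach into shared memory.

```python
import queue
import threading


def run_pipeline(blocks):
    """Send copies of data blocks from a producer task to a consumer task."""
    channel = queue.Queue()
    results = []

    def producer():
        for block in blocks:
            # Copy the block so the consumer owns its own data.
            channel.put(list(block))
        channel.put(None)  # sentinel: no more data

    def consumer():
        while True:
            block = channel.get()
            if block is None:
                break
            results.append(sum(block))

    tasks = [threading.Thread(target=producer),
             threading.Thread(target=consumer)]
    for t in tasks:
        t.start()
    for t in tasks:
        t.join()
    return results
```

Because each block is handed over whole, neither task needs a lock around the data itself; the channel is the only synchronization point.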

Tilera uses a very different model: they focus on external memory with an elaborate caching and coherency scheme. Each node has a 32-KB level-1 instruction cache and a 32-KB level-1 data cache, supported by a unified (instruction and data) level-2 cache of 256 KB. At current implementation sizes, the L1 data cache is the same size as the Adapteva local memory per node.

The hardware-built cache coherency mechanism is such that, simplistically put, each L2 cache entry anywhere is visible everywhere. So any data request from any core for data that happens to reside in some other core’s L2 cache will be serviced from that cache, not from a new external memory access. The effect is that a programmer can treat the device as having a single global shared memory, like Adapteva, although the enabling mechanism is different.
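A toy model shows the effect, though not the mechanism. The class below is hypothetical and deliberately naive: it searches every tile's L2 in software, which is not how the hardware finds a line, and it ignores writes, evictions, and the L1 caches entirely.

```python
class ToyChip:
    """Read-only toy model: any tile's L2 contents are visible to all tiles."""

    def __init__(self, num_tiles, external_memory):
        self.l2 = [dict() for _ in range(num_tiles)]  # one L2 per tile
        self.external = external_memory               # address -> value
        self.external_reads = 0

    def read(self, tile, address):
        # 1. Hit in the requesting tile's own L2.
        if address in self.l2[tile]:
            return self.l2[tile][address]
        # 2. Hit in some other tile's L2: served on chip, no external access.
        for other in self.l2:
            if address in other:
                return other[address]
        # 3. Miss everywhere: fetch from external memory and cache locally.
        self.external_reads += 1
        value = self.external[address]
        self.l2[tile][address] = value
        return value
```

For example, if tile 0 reads an address and tile 3 then reads the same address, `external_reads` stays at 1: the second request is satisfied from tile 0's cache.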

Tilera has five overlaid networks in a mesh configuration to move this data around. One is for memory; two are related to the cache, with one handling cache-to-cache traffic and the other transporting coherency traffic. There are two other “advanced programming” networks that programmers can use for message passing, with an MCAPI library again being available for abstracting the message-passing calls.

Tilera has done a lot of study to see how best to size the network switches. They claim 1 Tb/s of peak bandwidth in each switch, with the meshes typically running under 50% utilization.

Tilera also addresses the external memory bottleneck by supporting memory striping across several memories. This scatters a logically contiguous memory space across different memories so that you can actually write to more than one at a time – you do have multiple ports because you have multiple memories.
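Striping comes down to an address mapping. The sketch below assumes four memories and a 64-byte stripe, both made-up values for illustration, and a simple round-robin interleave. The real controller count, stripe size, and mapping may differ.

```python
NUM_MEMORIES = 4    # assumption for illustration
STRIPE_BYTES = 64   # assumption for illustration


def locate(address):
    """Map a logical address to (memory index, address within that memory)."""
    stripe = address // STRIPE_BYTES
    memory = stripe % NUM_MEMORIES  # consecutive stripes rotate across memories
    local = (stripe // NUM_MEMORIES) * STRIPE_BYTES + address % STRIPE_BYTES
    return memory, local
```

With this mapping, four consecutive 64-byte stripes land on four different memories, so a burst over a contiguous region can keep all four busy at once instead of queuing on one.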

The one really big difference between these two is scale: Adapteva is aiming for roughly 40 times as many cores as Tilera, which is moving up to 100 cores in its latest family. Whether the Tilera coherency scheme can survive an order of magnitude growth remains to be seen. On the other hand, the Adapteva scheme is best suited to the types of programs associated with DSP algorithms, where tight tasks focus on local data until done. The simplicity of their flat memory would break down given more general programs and data traffic, so it’s not a panacea for all programming.

So there’s no one-size-fits-all solution. These guys first have to demonstrate that there’s really a need for this level of scaling: while they both claim some success, there’s been no apparent rush. It’s then likely that players in this area will have to pick what they want to do best and tune the architecture for that.

While they figure that out, the rest of us will try to avoid driving during rush hour. I’m not expecting any new cab coherency scheme to appear that might make me available in more than one place at a time to ease my need to get to all those places on my own.

Actually, I think I’m ok with that.

More info: