“Somethin’ just ain’t right about that boy.”

She snuck a sideways glance at him sitting alone at the counter, nursing a cup of coffee, rumpled like he just woke up in those clothes, oblivious to the furtive comments of those sizing him up at the nearby table.

“Yep, I cain’t quite put my finger on it,” she continued, “but he ain’t from this county, that’s for sure. He don’t look folk in the eye, he pert near run me down on the sidewalk th’other day not watchin’ where he was a’goin’. He don’t seem to have a job or nuthin. Just ain’t right in the head, I’m thinkin’.”

“Oh, come on, Mae, you’re always gettin’ all suspicious and such about new folk. What’s he done to hurt you?”

“Well, I done had my eye on him ever since that car come into town and dropped him off at Ricky’s ol’ place where he’s stayin’. Don’t know who was drivin’ the car; ain’t seen it since. Like they just dumped him off and that was that. He don’t seem to bother nobody, and I been watchin’; mostly he just sticks to hisself and don’t pay nobody no never-mind. So it ain’t like he’s doin’ somethin’ wrong, it’s just that, well, I don’t know… He just ain’t right.”

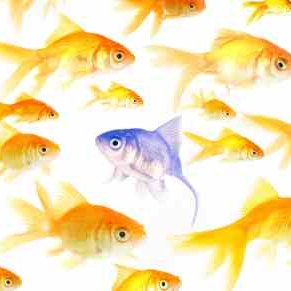

In a structured environment, things stick out when they look different. And if being different is a bad thing, it must be fixed. The nails that stick up must be hammered down.

Of course, sometimes we only think being different is bad. Sometimes it’s just… different. So the challenge is to tell the difference.

Now that synthesis tools have been stable for many years, patterns have presumably emerged that characterize the kinds of circuits synthesis typically creates. A scan of the circuits in an EDIF file will generally turn up familiar circuits and structures, suggesting that all is well.

Or at least that is what EDA startup ASIC Analytic is betting on. Another company likening itself to Google, it finds and ranks things it sees in the EDIF file that look odd as a way to bolster verification.

Whereas once upon a time the EDIF file was something that people used to describe circuits (or, at the very most, was the direct output of a schematic program or some other such input aid), nowadays it’s pretty much just an internal interchange format that links the output of synthesis to the input of the next backend steps.

Back in the early days of synthesis, equivalence checking provided a way to ensure that the content of the EDIF file correctly matched the intent of the RTL description. This wasn’t some high-level verification step; it was just a confidence-builder to convince the engineer that the synthesis tool wasn’t making a mistake.

Those days are long gone. We pretty much trust synthesis tools now, and they make so many optimizations and transformations that there would be no way to use simple equivalence checking anymore anyway. This is generally considered progress.

So, for the most part, there’s no longer any real reason to go poking around in the EDIF file. Just take it as output and feed it to the next tool.

But ASIC Analytic doesn’t see it this way. They see in the EDIF file an indication of the health of the circuit. They comb through the file looking for anomalous structures, listing and ranking what they find in order of what they believe to be the priority of the anomaly. It sounds more or less like a process of linting the EDIF file, except that not everything found will necessarily be a mistake.

What they search for and how they do the rankings are not public information (although I don’t know if they are as top-secret as Google’s algorithms). But, for example, if both floating inputs and floating outputs are found, the floating inputs will be ranked above the floating outputs since they appear more sinister. At that level of decision, you can imagine a complex set of ranking rules.
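Since the actual checks and ranking rules aren't public, the following is only a rough sketch of the general idea, not ASIC Analytic's method. It assumes a toy netlist model (the `Pin` class, the `SEVERITY` weights, and both function names are invented for illustration, and no real EDIF parsing is attempted). It flags input pins with no driver and output pins with no load, then sorts the list so that floating inputs land above floating outputs.

```python
from dataclasses import dataclass

# Hypothetical severity weights: the real ranking rules are not public.
# The only ordering taken from the article is inputs above outputs.
SEVERITY = {"floating_input": 2, "floating_output": 1}


@dataclass(frozen=True)
class Pin:
    instance: str
    name: str
    direction: str  # "in" or "out"


def find_anomalies(pins, nets):
    """Return (kind, pin) pairs for undriven inputs and unloaded outputs.

    pins: iterable of every Pin in the design
    nets: dict mapping a net name to the list of Pins on that net
    """
    driven, loaded = set(), set()
    for members in nets.values():
        has_driver = any(p.direction == "out" for p in members)
        has_load = any(p.direction == "in" for p in members)
        for p in members:
            if p.direction == "in" and has_driver:
                driven.add(p)
            if p.direction == "out" and has_load:
                loaded.add(p)

    anomalies = []
    for p in pins:
        if p.direction == "in" and p not in driven:
            anomalies.append(("floating_input", p))
        elif p.direction == "out" and p not in loaded:
            anomalies.append(("floating_output", p))
    return anomalies


def rank(anomalies):
    """Sort by descending severity, then by instance and pin name."""
    return sorted(
        anomalies,
        key=lambda a: (-SEVERITY[a[0]], a[1].instance, a[1].name),
    )


# Tiny made-up design: u1 drives u2, but u1's inputs and u2's output dangle.
u1_a = Pin("u1", "A", "in")
u1_b = Pin("u1", "B", "in")
u1_y = Pin("u1", "Y", "out")
u2_a = Pin("u2", "A", "in")
u2_y = Pin("u2", "Y", "out")

all_pins = [u2_y, u1_b, u1_y, u2_a, u1_a]
all_nets = {"n1": [u1_y, u2_a], "n2": [u1_a]}

report = rank(find_anomalies(all_pins, all_nets))
for kind, pin in report:
    print(f"{kind}: {pin.instance}.{pin.name}")
```

A real tool would presumably also have to treat top-level ports, tie-offs, and intentionally unused pins differently, which is where the rule set starts to get complicated.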

The things they find won’t all necessarily point to problems – and in fact right now they don’t filter; they just list everything. They are working on some post-filtering so that the amount of output that needs to be analyzed by the engineer is reduced. But the operating theory here is that significant issues may well lurk within that list, and they need to be addressed.

In general, there are three possible sources for any problem found: a mistake in the engineering, a mistake in the RTL, or a mistake in synthesis. The analysis tool explicitly operates on the basis of “assume nothing,” but, in fact, most of the problems originate with the first two. Mistakes caused by synthesis will typically arise from incorrect options, false paths, constraint problems, clock settings, and other such input problems. You’re typically not discovering new mistakes that the synthesis engines are making.

When design problems are found, some can be fixed at the EDIF level, some at the RTL level. It’s not clear to me how many designers actually go mucking about in the EDIF file, and making a change there breaks continuity between the original design file and the backend, so it would seem like that might be a less preferred option. But it would be naïve to assert that no one ever breaks the continuity chain when it comes to last-minute ECOs, so I guess this would fit in that same category.

The challenge for an approach like this is that it operates at a low level of abstraction. The better a job verification tools do earlier in the process, the less likely it is that errors will show up in the EDIF file. In fact, it would seem that one of the proofs of the effectiveness of higher-level verification tools is a gradual decline in the number of errors that propagate all the way through synthesis without being detected.

So, as a fundamental technology, this has to move forward with the confidence that, no matter how much engineers and other verification companies do to improve the quality of the input to synthesis, something’s inevitably going to get screwed up. That the strange things found in the EDIF file will actually be wrong, not just different. And that, without an approach like this, there will always be a few bugs that, left undetected, would escape to create a faulty mask set.

And that just wouldn’t be right.
