It was seven in the evening on the 5th of November, 1984, and we had almost finished copying the press pack. The launch was the next day, and I was leaving for London in half an hour, when Iann came into the room and said, “Did you know it has the power of at least a hundred of Sinclair’s home computers?”

We turned the photocopier back on, rewrote and copied the press release, and sure enough, the story that most media channels carried, including the BBC in news bulletins, was, “Inmos launches chip with the power of a hundred home computers.” While this was satisfying in its way, in the longer term it merely continued the problems with the way the product was perceived.

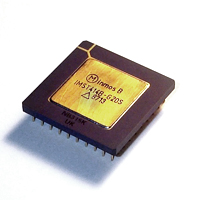

What we were launching was the transputer. Today it would be seen as a parallel processing tile from which to build large parallel processing systems. Then it was seen in many different ways, depending on the standpoint and knowledge of the person viewing it.

Where Inmos started from when creating the transputer was embodied in the name, derived from trans, meaning across, with the suffix ‘puter, from computer. The thinking was that applications were increasingly involving flows of data rather than requiring more structured activities on predefined sets of data, as are characteristic of a “normal” computer. This was the thinking that was creating the digital signal processor (DSP). But where a DSP takes data in from a source, processes it, and passes it on, the transputer had four channels of bi-directional communication, or links. That made it simple to build a two-dimensional array, each transputer linking to four neighbours.
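To make the four-link idea concrete, here is a minimal sketch of how such a two-dimensional array could be described in software. It is an illustration only, not Inmos tooling: the function name `grid_links` and the (row, column) addressing are assumptions made for this example, and nodes on the edge of the array simply leave their spare links unused.

```python
from typing import Dict, List, Tuple

Node = Tuple[int, int]  # hypothetical (row, column) address of one processor


def grid_links(rows: int, cols: int) -> Dict[Node, List[Node]]:
    """Map every node in a rows x cols array to the neighbours it links to.

    Each node has at most four links (up, down, left, right), mirroring
    the four bi-directional links described in the text.
    """
    links: Dict[Node, List[Node]] = {}
    for r in range(rows):
        for c in range(cols):
            candidates = [(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)]
            # Keep only neighbours that fall inside the array.
            links[(r, c)] = [
                (nr, nc)
                for nr, nc in candidates
                if 0 <= nr < rows and 0 <= nc < cols
            ]
    return links


if __name__ == "__main__":
    mesh = grid_links(3, 3)
    print("centre node links to:", mesh[(1, 1)])
    print("corner node links to:", mesh[(0, 0)])
```

An interior node ends up with exactly four neighbours, which is why the wiring of a regular array is straightforward; the hard part, as the article goes on to say, is programming it.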

As with today’s debates about multicore, while it was physically easy to build arrays, programming them was (and still is) much more difficult. At the time, there was a lot of debate about bloated instruction sets for processors, and RISC (Reduced Instruction Set Computer) architectures were fashionable. But RISC was also an ideology, and Inmos instead went for an instruction set that was sometimes called optimised and was designed for data flow and parallel processing. Inmos also built its own programming language, occam. This was inspired by Occam’s razor, “entia non sunt multiplicanda praeter necessitatem,” which you all know translates as “do not multiply entities unnecessarily,” or Keep It Simple, Stupid.

Alongside occam was the transputer development system (TDS) which had an editor, compiler, linker, and debugger.

The whole approach of the transputer, the instruction set, and the language was based firmly on Tony Hoare’s theory of “Communicating Sequential Processes” (CSP). Tony, now with Microsoft in Cambridge, England, was a consultant to Inmos while teaching at Oxford University, and his views were an important influence on the early decisions.
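For readers who have not met CSP, the flavour of the idea can be sketched as a handful of sequential processes that share no data and interact only by passing messages over channels. The example below is a rough illustration in Python, not occam and not anything Inmos shipped: the names `producer`, `doubler`, and `run_pipeline` are invented for this sketch, and a one-slot `queue.Queue` stands in for a channel, which only approximates a truly synchronous CSP channel.

```python
import queue
import threading

DONE = object()  # sentinel marking the end of the stream (an assumption of this sketch)


def producer(out: queue.Queue, values) -> None:
    """A sequential process that sends each value down its output channel."""
    for v in values:
        out.put(v)  # blocks while the one-slot channel is full
    out.put(DONE)


def doubler(inp: queue.Queue, out: queue.Queue) -> None:
    """A sequential process that reads a value, doubles it, and passes it on."""
    while True:
        v = inp.get()  # blocks until a value arrives
        if v is DONE:
            out.put(DONE)
            return
        out.put(2 * v)


def run_pipeline(values):
    """Wire the processes together with channels and collect the results."""
    a = queue.Queue(maxsize=1)  # channel: producer -> doubler
    b = queue.Queue(maxsize=1)  # channel: doubler -> collector
    workers = [
        threading.Thread(target=producer, args=(a, values)),
        threading.Thread(target=doubler, args=(a, b)),
    ]
    for w in workers:
        w.start()
    results = []
    while True:
        v = b.get()
        if v is DONE:
            break
        results.append(v)
    for w in workers:
        w.join()
    return results


if __name__ == "__main__":
    print(run_pipeline([1, 2, 3]))
```

Each process is ordinary sequential code; the parallelism comes entirely from how the processes are connected, which is the shift in thinking the article describes.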

A problem at launch was that people were comparing the transputer to Intel and Motorola processors. The terms embedded and microcontroller were not widely used then. In that comparison Inmos failed a whole load of inappropriate tests. Where was the operating system? What about drivers? Where was the supporting chip set? And why was there no support for high-level languages? The fact that the transputer was a building block was lost on many commentators, both friends and enemies.

There was a lot of novelty to take on board for someone in industry: a new architecture, a new language, and a new development environment. In fact, it demanded a whole new mode of thought: thinking in parallel is hard for someone whose whole working approach has been forced into a sequential mode.

However, the university sector loved it. It provided them with the tools to explore wide ranges of different areas, and today there are many senior managers whose eyes light up and who can tell you in great detail about their student projects of the late 1980s and early 1990s using the transputer.

The first transputers were 16-bit, followed by 32-bit versions. Although some were used in large supercomputers and others as microcontrollers (at one stage most laser printers used a transputer), the transputer was never a commercial success.

Why was this? A significant reason was Inmos itself. Inmos was owned by the British government. In 1978, a Labour administration, worried that Britain was falling behind in the use of silicon chips, invested in a business plan put together by two Americans, Dick Petritz and Paul Schroeder, and a Brit, Iann Barron, to create an nMOS silicon company. Soon after the investment was announced, a general election brought Margaret Thatcher to power. She was a great believer in the free market, and one of her advisors on industry in general and electronics in particular was Lord Weinstock, the head of GEC (the UK one, not the US one). His view was that transistors were like nuts and bolts – you buy them on the open market at the lowest possible price and concentrate on what we now call the system level. Weinstock did not understand the changes that were happening with the integrated circuit and the microprocessor.

The original plan was that an investment of £50 million, to be paid over time, was to create an Anglo-American company with the US end (in Colorado Springs) designing memory devices and creating the process, and the UK, initially in Bristol, developing the transputer and, from a site or sites to be determined, carrying out volume production.

When Thatcher was elected, there was a debate over whether the government should complete the investment. The debate was protracted and at times acrimonious. The biggest problem from the Inmos perspective wasn’t the enemies; it was the supporters who were making claims that went way beyond anything that the company was prepared to make.

The uncertainty meant that the management accepted that the US plant should be built, not as a development facility, but as a full-blown production facility that would begin making and selling 16k SRAMs. These became a successful product, particularly when Intel, who had been promising a competitive part, admitted that it wasn’t going to be delivered, leaving the market wide open. The US management, without the knowledge of the main board, were busy trying to find venture capital money to buy the memory business as a whole, wanting the plant to look as profitable as possible. The US management and sales force were heavily memory-oriented and didn’t really understand the transputer, so, with limited capacity for test chips and prototypes, the transputer was pushed well down in the queue, adding significant delays to the first family and limiting design exploration for the later products.

The British government sold Inmos to Thorn EMI (and made a significant return on its investment) just as Inmos was preparing to go public. A downturn in the industry soon after the sale meant there were lay-offs, and, at the same time, some of the transputer team left to form a supercomputer company using transputers. The changes meant that momentum behind the transputer was dissipated, and the last model, the T9000, was very late and underpowered. This was in part attributable to a second change in ownership, when Thorn EMI did a complex deal with STMicroelectronics, in effect paying ST to take Inmos. ST did use some of the transputer technology in a range of microcontroller areas but effectively left the rest to wither on the vine.

A second factor was that the transputer was ahead of its time. The problems it was trying to solve, except in supercomputing, were not urgent, and Moore’s Law allowed many of them to be swept under the carpet for a further twenty years. Other issues were resolved with multi-threading operating systems.

There were also process issues – the technology wasn’t sufficiently advanced to fit all the elements needed for an efficient processing unit, particularly memory, on to a single chip. Today, of course, the transputer’s gate-count would be lost in a corner of any production device.

But while it was a commercial failure, the legacy of the transputer is enormous. The Bristol area of Britain is a hotbed of companies in electronics, many of them directly or indirectly descended from Inmos. The technology world has many senior managers who were exposed to the ideas of the transputer in their youth. And now there is an urgent need to remove the processing bottleneck, and, as multicore becomes a hot topic, companies are turning back to the ideas that were embodied in the transputer. Microsoft has even hired Tony Hoare, so maybe we can look for help in solving the issues of programming multiple processors from an unexpected direction.
