It’s crunch time. The prototype for our board has been spun and is in transit back to our lab for testing. The project is already two weeks behind schedule thanks to late changes to the spec and problems discovered during signal integrity analysis of the layout. It’s been good for me because, quite frankly, I needed those two weeks to get my test bench to where I am satisfied that I have done due diligence to the simulation.

The project has been coded in VHDL, and I have taken a fairly disciplined approach – maintaining hierarchy throughout, using entity declarations for all black-boxes, primitives and macros (so the design is more portable and meets the IEEE standard as much as possible), and a mostly RTL-styled approach. Of course, some of my design is behavioral, or else I have ignored the major benefit of HDLs altogether – the ability to use behavioral abstraction.

So I have gone the extra mile, and now I can launch the simulation. I run it for a couple of milliseconds of simulation time. I like what I see so far – my reset logic is taking the exact number of clock cycles I predicted, the clock synthesizers are all functioning correctly, and I can measure the duty cycles and periods in the wave editor and get the results I want. And my I/O signals show me the ones, zeros and tri-states I was hoping for. It’s important to mention that, as far as I can remember, in my few thousand lines of code I have carefully avoided asynchronous processes, clock domain crossings and, most of all, resolved signals. I hear my mentor-in-the-craft’s voice echo in my head with a derogatory “Tristates are for PCB guys and polygon pushers.”

Feeling that early rush of confidence, I get adventurous and decide to synthesize the design. Fortunately, the tool I am using also lets me try several different synthesis engines with a couple of mouse clicks, so I start with one of the built-in ones. Due to the few complex behavioral state machines in my project, it takes a few minutes to optimize, but it completes with only a few minor warnings. So far, so good.

Feeling slightly more confident, I go ahead and click the Build button to continue on to the Map, Translate, Place and Route and Bit File Generation stages of the flow – these are all performed behind the scenes in the chip vendor’s command line interface. Map design runs for about a minute and a half, and stops, giving me an esoteric message about an IBUFT and an OBUFT. Great… I knew my bubble was going to burst, just knew it.

My usual next step is to shrug my shoulders and switch to the FPGA vendor’s synthesizer to see if its optimizer will produce a result that will place and route successfully. So, a couple more mouse-clicks, and I re-run Synthesis and Build. This time, I notice synthesis is somewhat faster than before. I become hopeful that this is because the vendor’s engine is doing less optimization and will result in a larger but more accurate implementation. Then during Map, biff – it stops in exactly the same place, with the same esoteric error message, followed by a warning:

ERROR:NgdBuild:924 - bidirect pad net 'DATA_IO<15>' is driving non-buffer primitives:

pin I1 on block U_dspboard_fpga/fb_epb_intf_inst/n12g with type AND2B1

WARNING:NgdBuild:465 - bidirect pad net 'DATA_IO<15>' has no legal load.

Obviously a few choice sibilants have slipped out the side of my mouth – the irises of the coworker in the next cube narrow and he peers sidewise at me in a Sam Eagle kind of way. Luckily, I am able to cross-probe from the error message in my messages panel to the line of code each error is coming from. A double-click of the first error message, regarding two buffers connected in series, takes me to the following code fragment:

DATA_IO <= DATA_IN when CNTL_IN(4) = '0' -- write to Ext. Device

else (others => 'Z');

DATA_OUT <= DATA_IO; -- data from core to CF (5000_0050)

My initial thought is “yeah, so I have a tri-state port and a mux, what’s the big deal?” Astute and experienced readers will probably pick up the issue, but this is also the kind of error that can stump a new FPGA designer for days, causing lack of sleep and hair loss. I gaze at these three lines of code for half a minute and realize I should sketch my original intent on a scrap of paper:

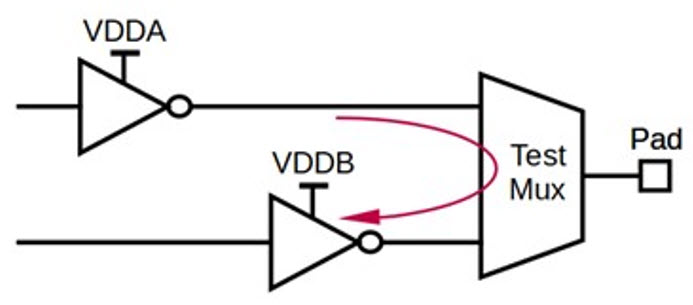

Now I realize that I previously assumed the synthesis engines would understand I don’t really mean to put high impedance signals inside the device. In fact, when I re-read the error and warning messages it comes to me that this is what synthesis has done:

If you are a good FPGA designer and actually read the data sheets and library guides, you’ll know right away that this is not possible. There are no routing resources in any FPGA I know of that would allow this kind of connection.
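To make the point concrete, here is a minimal sketch of how a shared internal signal is usually written so that no 'Z' ever has to exist inside the fabric: a plain multiplexer in place of tri-stated drivers. The entity name, the port names and the 16-bit width are hypothetical and not taken from my project; this only illustrates the idea.

```vhdl
library ieee;
use ieee.std_logic_1164.all;

-- Hypothetical example: two internal sources feed one internal data path.
-- All names and the 16-bit width are assumptions made for illustration.
entity internal_data_mux is
  port (
    SEL      : in  std_logic;                      -- '0' selects SRC_A, otherwise SRC_B
    SRC_A    : in  std_logic_vector(15 downto 0);
    SRC_B    : in  std_logic_vector(15 downto 0);
    DATA_SEL : out std_logic_vector(15 downto 0)   -- always driven, never 'Z'
  );
end entity internal_data_mux;

architecture rtl of internal_data_mux is
begin
  -- Instead of each source releasing a shared net with (others => 'Z'),
  -- exactly one source is selected at any time.
  DATA_SEL <= SRC_A when SEL = '0' else SRC_B;
end architecture rtl;
```

Written this way, high impedance is only ever described at the device pins, where a tri-state buffer really exists.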

The first observation that strikes me is that what I would have drawn if designing a schematic would be the simple IOBUF circuit shown below:

And since DATA_IO and DATA_OUT route to IO pads in a higher level document, the synthesizer would insert the appropriate OBUF for DATA_OUT, so I don’t need to draw it here. This is a great example of how a schematic and block diagram approach to design can actually reduce errors up front.

The second and more startling observation is that everything I wrote in my VHDL code simulated correctly, showing the signal transitions I actually intended. Of course, I always knew the difference between something that can be simulated and something that can be synthesized. This is a new twist: I can simulate and synthesize my design without errors, and it still fails at Map. I will assert (pun intended) that the question should now be “Can it be simulated, versus synthesized, versus mapped?”
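For readers who think in HDL rather than schematics, the IOBUF intent described above could be written by instantiating the buffer explicitly, one per data bit. This is only a sketch under stated assumptions: it assumes a Xilinx flow with the UNISIM library and its IOBUF primitive (ports I, O, T and IO), a 16-bit bus (inferred from the DATA_IO<15> in the error message), and a hypothetical entity name and OE_N port standing in for CNTL_IN(4). It is not the fix I applied in the real project, and whether it alone clears the NgdBuild error depends on where in the hierarchy the pad logic lives.

```vhdl
library ieee;
use ieee.std_logic_1164.all;

library unisim;                  -- Xilinx primitive library (assumed toolchain)
use unisim.vcomponents.all;

-- Hypothetical wrapper; entity name, OE_N and the 16-bit width are assumptions.
entity data_io_pads is
  port (
    DATA_IO  : inout std_logic_vector(15 downto 0); -- bidirectional device pins
    DATA_IN  : in    std_logic_vector(15 downto 0); -- from core, towards the pins
    OE_N     : in    std_logic;                     -- stands in for CNTL_IN(4): '0' drives the pins
    DATA_OUT : out   std_logic_vector(15 downto 0)  -- from the pins, towards the core
  );
end entity data_io_pads;

architecture structural of data_io_pads is
begin
  -- One IOBUF per bit: the only place where high impedance is described.
  gen_pads : for i in DATA_IO'range generate
    pad_i : IOBUF
      port map (
        I  => DATA_IN(i),   -- data to drive onto the pin
        T  => OE_N,         -- '1' puts the output driver in high impedance
        O  => DATA_OUT(i),  -- buffered copy of the pin for internal logic
        IO => DATA_IO(i)    -- the pad itself
      );
  end generate gen_pads;
end architecture structural;
```

The design choice here is that internal logic only ever sees the unidirectional DATA_IN and DATA_OUT; the pad net itself connects to nothing but the buffer.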

This scenario has been elaborated (again, pun intended), although it’s based on real events I have experienced. I have spoken at length with many FPGA designers who prefer VHDL and Verilog for their design flows, and I can agree with them that most of their designs are too complex for a schematic based approach. That is, if you are doing your design mostly at RTL. HDLs were invented to reduce the amount of effort in describing a logic function because the number of gates and flip-flops simply became too large and unwieldy. However, FPGAs (and ASICs) have continued to follow, ahem, Moore’s Law and designs have grown accordingly, to the point where even VHDL and Verilog will get you stuck in a quagmire where you no longer see the overall design intent. Problems like this one are proof of that.

What designers will have to do to stay on top of their game (and I honestly believe they will not have a choice in this much longer) is move to a higher level approach which gives them the time and freedom to focus on what’s most important in their product: what differentiates them in their market. The challenge the industry faces here is that experienced, skilled designers will have to swallow their pride and use IP that’s been offered to them royalty free with the tools, rather than rebuild everything themselves in HDL. I can understand the challenge too: being a true engineering geek, I do what I do because it’s a challenge and not many other people in this world can (or so I like to believe). But the reality is, if I want to make better products and do it faster, I will have to stand on the shoulders of others, say “thanks”, and piece together my system rapidly using a block diagram method. Then I can work on my own piece to integrate it into the overall system, making it reliable and razor sharp.
