About twenty years ago, there were two well-known approaches to custom IC implementation. The first, which we called “Full Custom,” was the high-end methodology. Polygons were painstakingly pushed across Calma screens by determined designers working to eke out every ounce of performance from fiery five-micron silicon technology. Full Custom design was difficult and expensive – not for the faint of heart or the financially challenged.

The second option at that time was Gate Array. Gate Array was for product teams with deadlines to meet and more important things to do than fighting with design rule violations and transistor-level layout problems. Gate Array offered lower risk, faster time-to-market, and reduced development cost. Economy of scale was on your side because only a relative handful of masks was required to complete the final personalization of a Gate Array, whereas Full Custom required a complete set, just for you.

Fast forward a decade, and a shift had occurred. Full Custom had almost dropped out of use except for mixed-signal or exotic-technology designs. Its place at the high end of the scale had been taken over by “Standard Cell” – a technology that brought some of the automated design techniques and module-level re-use of Gate Array to a more highly optimized environment. Standard Cell had inserted itself between Gate Array and Full Custom and pushed Full Custom up and off the map.

At the same time, Gate Array’s position on the low end of the scale was under attack. “FPGA” had emerged, growing out of the much simpler PLD and PAL technology, and threatened Gate Array as the fast time-to-market method for getting custom functionality into silicon. Gradually, Gate Array gave way in the market to FPGA and was relegated to a cost-reduction option for successful FPGA designs going to higher volume.

For the next ten years, FPGA and Standard Cell ruled the roost. Each had slipped in below the prevailing technology and driven upward on the technology curve, uprooting the incumbent and stealing market share from the base of the pyramid up. Standard Cell was for well-funded, high-volume projects that required maximum performance, minimal unit cost, or some combination of the two. FPGA was for lower-volume, fast-turnaround designs that didn’t care as much about register-to-register delay as they did about design-to-cash-register delay.

Today, a new interloper is on the scene. Up from the ashes of Gate Array, we have Structured ASIC. Much like Standard Cell a decade earlier, Structured ASIC brings a lot of the design simplicity, lower development cost, and faster turnaround of FPGA technology to the performance level, density, and lower unit cost of Standard Cell. Just like a decade before, the new kid is working his way up the tree, picking off the low-hanging fruit of less demanding Standard Cell projects, and threatening to starve the stream of revenue that supports the forward progress of the more mature Standard Cell methodology.

Interestingly, FPGA is under attack primarily from itself. With price points approaching 100X lower than those of previous-generation FPGAs, new super-low-cost FPGA technologies are pushing up the sophistication ramp against their higher-end siblings. As FPGA companies struggle to compete with one another on low-cost, high-volume FPGA lines, they are gradually migrating more of their flagship capabilities into the lower-margin product lines.

If this scenario plays out according to current trends, and no new, disruptive technologies appear on the scene to change the rules, we may be left with two classes of devices making up the bulk of custom digital logic in the market: low-cost, high-capability, zero-NRE FPGAs, and lower-cost, higher-capability, low-NRE structured ASICs. If you plotted a cost/capability curve for both options together, it would form a more or less continuous function, with the exception of a discontinuity where we switch from the zero-NRE FPGA to the low-NRE structured ASIC.
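
The discontinuity comes from the structured ASIC’s up-front NRE. A minimal sketch of the usual break-even arithmetic, using entirely hypothetical NRE and unit prices (not real vendor figures), shows where a lower unit cost starts to pay back that one-time charge:

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    nre: float        # one-time charge (masks, engineering); hypothetical
    unit_cost: float  # price per device; hypothetical

    def total_cost(self, volume: int) -> float:
        # Program cost = one-time NRE + per-unit price x volume.
        return self.nre + self.unit_cost * volume


def break_even_volume(fpga: Option, asic: Option) -> float:
    """Volume at which the low-NRE option's total cost matches the zero-NRE one."""
    saving_per_unit = fpga.unit_cost - asic.unit_cost
    if saving_per_unit <= 0:
        return float("inf")  # the ASIC never recovers its NRE
    return (asic.nre - fpga.nre) / saving_per_unit


fpga = Option("FPGA", nre=0.0, unit_cost=25.0)
sasic = Option("Structured ASIC", nre=150_000.0, unit_cost=10.0)
print(break_even_volume(fpga, sasic))
```

With these made-up numbers the crossover falls at 150,000 / (25 - 10) = 10,000 units: below that volume the zero-NRE FPGA is cheaper overall, above it the structured ASIC wins.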

Considering Reprogrammability

Behind the scenes, the single differentiator that most clearly separates these two approaches will be (and is today) programmability, or, more specifically, reprogrammability. Reprogrammability is what puts the “FP” in “FPGA”. It is a powerful property that merits closer review, as it fundamentally alters the very concept of digital design. The distinction between zero-NRE FPGA and low-NRE Structured ASIC is about more than a few dollars (or Yen, or Euros, or…). Cost differences of this scale are almost always lost in the economics of even a modest-scale product development project.

The real difference between reprogrammable and mask-programmed technology is the presence or absence of a wall between design and deployment. NRE (even a low one) is due when you pass through a turnstile between those two phases. Masks are made, wafers are processed, and virtual concrete is poured. The design team is always clearly and definitely on one side or the other of that barrier at any given time.

Reprogrammability, however, blurs that barrier – even dissolving it completely in many applications. For designers that come from the ASIC legacy, this may seem like a simple softening of a line that was previously hard. “Tapeout” is no longer a solid schedule barrier, but a flexible one instead. Last minute design changes can be incorporated without career-limiting implications, and overall project schedules can more easily adapt to slight delays and engineering iteration.

For designers that come from other backgrounds, however, reprogrammability represents a much more significant step. For these engineers, unencumbered by pre-existing mental models of an ASIC design methodology, the NRE wall isn’t merely softened or blurred. It never existed at all. High Performance Computing (HPC) experts designing reconfigurable computers, for example, view reprogrammable FPGAs as something akin to a new type of processor with built-in program memory. Their physical hardware is designed with no notion at all of a particular personalization of the FPGAs. The bitstreams that customize these devices will be created at what reconfigurable computer users would consider “compile time,” and will perhaps be dramatically different each time the machine is powered up.

For an ASIC designer, synthesis and place-and-route of the FPGA design are definitely part of the design cycle. The fuzziness of the design/deployment line does little to change that perspective. For the reconfigurable crowd, however, these steps are definitely not part of the design cycle. FPGA-based hardware is developed, built, sold and delivered to the customer before these steps are run. For the HPC crowd, synthesis and place-and-route are two steps performed by the user in the process of “compiling software” to run on the machine.

Design Schedule Concurrency

The ASIC-based and HPC-based perspectives represent two extremes in the spectrum of views on reconfigurability, but engineering teams can be found at almost every point along that curve. Starting at the ASIC-based end, we have design schedule concurrency. With reconfigurability, board design and layout can proceed in step with, and even ahead of, the finalization of the custom logic design. Changes to the FPGA design can be rolled in even after boards are built and being used for integration and development. Delaying the “commit” point for the FPGA design allows the custom logic to be modified right up to the end of the project. Overall system-design schedules can thus be compressed, improving time-to-market and reducing design risk.

Field Upgrades

Moving another step along the curve, we get to field upgrades. Design errors can be corrected, new features added, and evolutionary adjustments made to a design after it is in customer hands. As long as there is a provision for delivering and installing bitstream upgrades for the FPGA in the field, the life of a product can be significantly extended. Provided the board-level hardware doesn’t have to be modified, the custom logic can evolve with the times, the market, and the technology.
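
A field-upgrade provision usually needs some protection against a bad download. The sketch below assumes a hypothetical two-slot configuration store (a factory “golden” image that is never overwritten, plus an “update” slot) and checks each new image against a published SHA-256 digest before installing it. The class and function names are illustrative only; real devices and their boot loaders handle this in their own ways.

```python
import hashlib
from typing import Optional


class ConfigFlash:
    """Hypothetical two-slot configuration store."""

    def __init__(self, golden: bytes):
        # The factory "golden" image is never overwritten in the field.
        self.golden = golden
        self.update: Optional[bytes] = None


def install_upgrade(flash: ConfigFlash, image: bytes, expected_sha256: str) -> bool:
    # Refuse an image whose digest doesn't match the published one
    # (for example, a truncated or corrupted download).
    if hashlib.sha256(image).hexdigest() != expected_sha256:
        return False
    flash.update = image
    return True


def select_boot_image(flash: ConfigFlash) -> bytes:
    # Boot the upgrade if one was installed, otherwise fall back to golden.
    return flash.update if flash.update is not None else flash.golden
```

Keeping the golden image untouched means a failed or rejected upgrade still leaves the product bootable.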

Product Variants

Continuing our walk of reconfigurable wonders, we get to product variants. For many products, the market requires more than just one version. There may be regional standards that are different for different geographies, I/O interfaces that vary for different applications, or feature levels that require different levels of capability for different price points. Each of these can be accomplished using reprogrammability to establish product variants. Your design team can build a single board, module, or box that is common to all versions of the product, and the FPGA can be reconfigured based on the particular variant that is needed for each deployment.
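
Selecting a variant can be as simple as mapping a board-level ID (read, say, from straps or an ID EEPROM) to the bitstream loaded at power-up. The variant names, IDs, and file names below are hypothetical placeholders:

```python
from enum import Enum


class Variant(Enum):
    # Hypothetical variant IDs, one per product version.
    NORTH_AMERICA = 0  # regional-standard variant
    EUROPE = 1         # regional-standard variant
    PRO = 2            # higher feature level / price point


# Hypothetical mapping from variant to the bitstream loaded at power-up.
BITSTREAMS = {
    Variant.NORTH_AMERICA: "na_base.bit",
    Variant.EUROPE: "eu_base.bit",
    Variant.PRO: "pro_full.bit",
}


def bitstream_for(variant_id: int) -> str:
    # Same hardware for every version; only the personalization differs.
    # An unknown ID raises ValueError rather than loading a wrong image.
    return BITSTREAMS[Variant(variant_id)]
```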

Product Variants are again a cost optimization, allowing the design, manufacture, and inventory of a single version of the hardware to support many versions of the product. Combined with field upgrades, both the longevity and breadth of a single hardware design can be extended, giving a dramatic return in the form of lower design and manufacturing cost, as well as the reduction of required inventory to support varying demands of a variety of product versions.

The use of variants can also improve a product’s competitiveness, allowing the release of minor competitive tweaks (1.1, 1.2, 1.3, etc.) instead of just major releases (like 1.0, 2.0, 3.0). Improving a product’s ability to respond to competitive pressures and to quickly adapt to changing conditions can be a clear advantage over the more traditional cycle time of an ASIC-style methodology.

Density Optimization

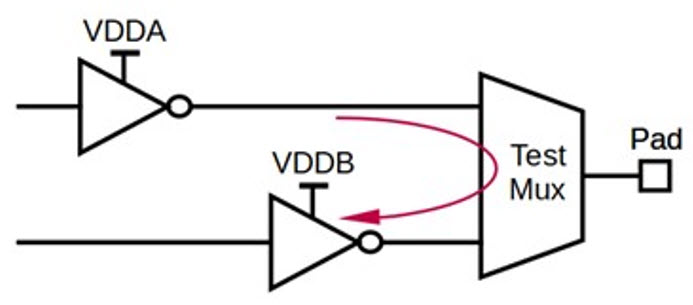

Moving still farther from the ASIC-based end, we reach “density optimization,” where reconfigurability allows a lower-density device to do the work of a higher-density one by swapping in different bitstreams at different times. This is typically useful in devices with modal functionality, where only a single mode is active at any given time and the device can be reconfigured for the current operating mode.

The benefit of density optimization is usually strictly economic. You’re getting several FPGAs for the price of one, or a larger effective FPGA for the price of a smaller one. The only requirement is that your design must have some modal nature that can tolerate a few milliseconds of reconfiguration time, and your I/Os need to be easily shared or multiplexed between modes.
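
One way to reason about the cost of density optimization is to count reconfigurations. This sketch models one device holding several mode-specific bitstreams, reloading only when the mode actually changes. The 5 ms reconfiguration time is an assumed figure, and the actual load is stubbed out:

```python
from __future__ import annotations


class ModalFpga:
    """One physical FPGA time-shared among mode-specific bitstreams."""

    RECONFIG_MS = 5.0  # assumed time per full reconfiguration (hypothetical)

    def __init__(self, bitstreams: dict[str, bytes]):
        self.bitstreams = bitstreams
        self.loaded: str | None = None
        self.downtime_ms = 0.0

    def enter_mode(self, mode: str) -> None:
        if mode == self.loaded:
            return  # already configured for this mode; no reload
        image = self.bitstreams[mode]  # KeyError for an unknown mode
        self._load(image)
        self.loaded = mode
        self.downtime_ms += self.RECONFIG_MS

    def _load(self, image: bytes) -> None:
        # Stub: a real system would stream `image` to the device's
        # configuration port and wait for it to report completion.
        pass
```

Stepping through the modes capture, capture, process, capture triggers three loads (the initial one plus two changes), or 15 ms of downtime under the assumed figure; a design that switched modes far more often would need that overhead budgeted explicitly.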

Reliability Enhancement

In the space of high-reliability design, FPGAs suffer from skepticism regarding their ability to hold their own in hostile environments (such as radiation-intense applications) where metal-programmed technologies offer higher resistance to radiation events such as single event transients (SETs) and single event upsets (SEUs). One of the best mitigation strategies for those issues, however, is reconfigurability. The FPGA can periodically check itself for errors and “scrub” its configuration memory by reloading or partially reloading its configuration bitstream.
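
Scrubbing can be sketched as a loop over configuration frames that compares each frame’s CRC against a stored reference and rewrites only the frames that differ, which corresponds to the partial-reload case. The frame layout, the use of CRC-32, and the `read_golden_frame` callback are assumptions for illustration; actual devices expose readback and scrubbing through vendor-specific mechanisms.

```python
from __future__ import annotations

import zlib
from typing import Callable


def scrub(config: list[bytearray], golden_crcs: list[int],
          read_golden_frame: Callable[[int], bytes]) -> list[int]:
    """Repair upset configuration frames; return the indices repaired.

    config            -- live configuration frames (hypothetical model)
    golden_crcs       -- reference CRC-32 per frame, computed at build time
    read_golden_frame -- fetches a known-good frame, e.g. from external flash
    """
    repaired = []
    for i, frame in enumerate(config):
        if zlib.crc32(frame) != golden_crcs[i]:
            # Upset detected: rewrite only this frame (partial reload).
            config[i][:] = read_golden_frame(i)
            repaired.append(i)
    return repaired
```

Run periodically, this keeps an upset from persisting longer than one scrub interval.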

Reconfigurability also offers a few additional benefits on the reliability front. Because they can be reprogrammed in-system, FPGAs can be set up to compensate for the failure of other devices in the system, redirecting I/O traffic through alternate paths and bypassing failed hardware. For applications such as satellites, where a service technician can’t be deployed, reprogrammability offers a remote-control patch capability where system failures can be repaired from the ground.

One of the key failure modes of any design is human error. Reconfigurability improves reliability in this aspect as well, allowing a corrected design to be uploaded in the event that a design mistake is discovered. For products that are widely deployed, this can save a bundle in recall expense, and it effectively improves the reliability of the deployed hardware by offering an ex post facto fix-up path.

Another protection afforded by in-field programmability is design security. Loading the proprietary design separately from manufacturing the hardware can dramatically reduce vulnerability to IP theft. If your hardware is useless without a properly obtained bitstream, a number of strategies can be deployed to eliminate the threat of overbuilding and other common theft modes.
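
The “useless without a properly obtained bitstream” idea can be sketched as a keyed authentication check at configuration time. Here HMAC-SHA256 from Python’s standard library stands in for whatever bitstream authentication or encryption a given device actually supports, and the scheme assumes each device’s key is provisioned by the design owner rather than by the board manufacturer, so surplus boards never receive one:

```python
import hashlib
import hmac


def sign_bitstream(bitstream: bytes, key: bytes) -> bytes:
    # Performed by the design owner, who holds the key.
    return hmac.new(key, bitstream, hashlib.sha256).digest()


def accept_bitstream(bitstream: bytes, tag: bytes, device_key: bytes) -> bool:
    # Performed at configuration time: an image not signed with this
    # device's key is refused, leaving the hardware inert.
    expected = hmac.new(device_key, bitstream, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)  # constant-time comparison
```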

Dynamic Operation and The Future

Arriving finally at the HPC end of our spectrum, we have the already-discussed reconfigurable computing applications of FPGA. Joining these, and ironically bringing our investigation somewhat full circle, is the use of FPGAs for ASIC prototyping. Emulators have used FPGAs for years to allow the dynamic loading and re-loading of an ASIC design under development for high-speed verification purposes. The emulator itself is built with no up-front knowledge of the various designs it will be validating. That information is to be supplied by the consumer.

These types of runtime reconfiguration represent the current extreme of the FPGA reconfigurability spectrum, but the front is always moving. For example, much like memory folks have been doing for years, it is possible to use reconfigurability for yield enhancement. An FPGA whose LUTs aren’t all firing correctly can be dynamically reconfigured to use alternates, slightly reducing density but allowing a much higher effective process yield, thus lowering device costs.
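
Defect-avoiding remapping can be illustrated with a deliberately simplified model in which a design’s logical LUTs are assigned, in order, to physical LUT sites that passed test. A real flow would also have to respect routing and timing, which this sketch ignores; the function name and the per-device defect list are hypothetical.

```python
from __future__ import annotations


def remap_luts(num_logical: int, num_physical: int,
               defective: set[int]) -> dict[int, int]:
    """Map logical LUT i to a working physical LUT site.

    Hypothetical, placement-agnostic model: routing and timing are ignored.
    """
    good_sites = [p for p in range(num_physical) if p not in defective]
    if num_logical > len(good_sites):
        raise ValueError("design needs more LUTs than this die has working")
    return {i: good_sites[i] for i in range(num_logical)}
```

In this model a 6-site device with two bad sites can still host a 4-LUT design; the two unusable sites are the “slightly reducing density” cost, while the die itself no longer counts as a yield loss.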

Now that FPGAs can also contain embedded processors and memory, the hardware, the software, and even the fundamental platform design can all be modified dynamically in the field. With soft-core processors, even the processor itself could be adjusted and optimized on the fly on a per-application basis. Once you make the jump to the mindset of truly dynamic operation, the possibilities expand enormously. As a new generation of designers emerges without some of the mental baggage that many of us call “experience,” we may see a completely new generation of design unlike anything we’ve tried before.
