I really wish I could have attended this year’s Chiplet Summit, which took place from February 17 to February 19, 2026. This auspicious event was held at the Santa Clara Convention Center in California. I know this facility well—it’s where I met my first telepresence robot—but that’s a story for another day.

As you can see from the 2026 Program at a Glance, there was an abundance of terrific topics, captivating keynotes, and scintillating special presentations. I would have been in my element, rubbing shoulders with my “peeps,” but it was not to be. Call me “old-fashioned,” if you will, but these days I only ever attend conferences if someone else is paying for my time, transport, and accommodation (this really is the best way to travel).

One of the keynotes I really wanted to see was Designing the Future Today: AI-Driven Multi-Die Design. This was delivered by Abhijeet Chakraborty, who is VP of Engineering at Synopsys. Abhijeet is responsible for the development of multi-die and 3D heterogeneous integration technologies and solutions—both topics that are close to my heart.

Abhijeet played to a packed audience at the 2026 Chiplet Summit (Source: Synopsys)

Happily, I got to chat with Abhijeet after the summit, and he was kind enough to bring me up to speed on his presentation, along with a bodacious bunch of other stuff. Abhijeet set the ball rolling by noting that two interdependent forces are currently at play. The first is the tremendous growth of AI applications and technologies, which are driving the need for chiplet-based multi-die systems. Paradoxically, the multi-die systems themselves are becoming so large and complex, and the pressures of ever-shrinking time-to-result and time-to-market windows so intense, that engineering (designing, testing, verifying…) these devices requires AI. In a crunchy nutshell (my words, not Abhijeet’s), AI needs multi-die systems, while engineering multi-die systems needs AI.

A survey conducted by Synopsys showed significant growth in the adoption of multi-die designs across the company’s customer base, both over the last three years and into the future. In 2025, for example, 43% of companies were creating chiplet-based designs, while 51% were planning to use 3D stacking technologies in the coming year (which would be this year at the time of this writing).

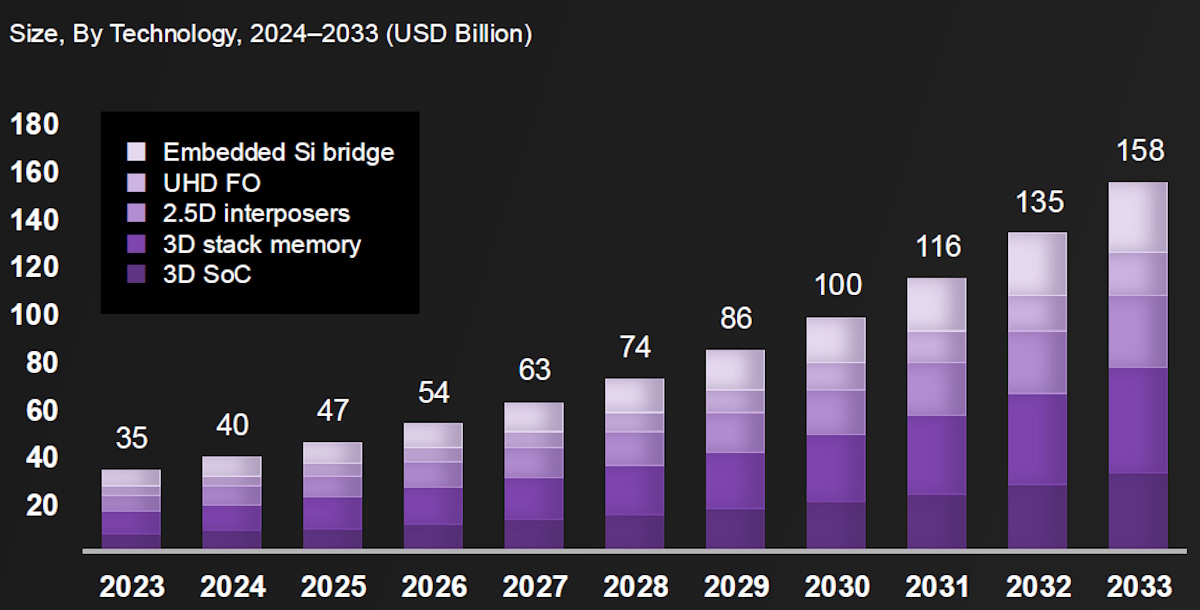

As Abhijeet noted, much of the growth in multi-die design is driven by advances in packaging technologies, including 2.5D interposers, 3D memory die stacking, and 3D SoC stacking (i.e., stacking SoC dies on top of one another). What we are talking about here is a worldwide packaging market that’s projected to reach $158B by 2033, up from “only” $35B in 2023, at a compound annual growth rate (CAGR) of 16.4%.

The rapid growth of advanced packaging technologies (Source: Synopsys)
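For anyone who likes to sanity-check growth figures, here is a minimal sketch of the usual compound annual growth rate calculation applied to the two market sizes quoted above. The function name `cagr` is my own, and the ten-year span is my assumption based on the 2023 and 2033 endpoints. The result lands at roughly 16%, in line with the quoted figure, with any small difference presumably down to rounding in the dollar values.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.16 means 16%)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market sizes quoted in the column, in billions of dollars.
# Assumption: the growth period is the ten years from 2023 to 2033.
rate = cagr(35.0, 158.0, 2033 - 2023)
print(f"Implied CAGR: {rate:.1%}")
```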

This provides another example of interdependency. On the one hand, advanced packaging is needed for multi-die systems; on the other hand, the growth of multi-die systems is fueling advances and investment in advanced packaging. As Abhijeet says, “One could argue that this is a golden era for advanced packaging.”

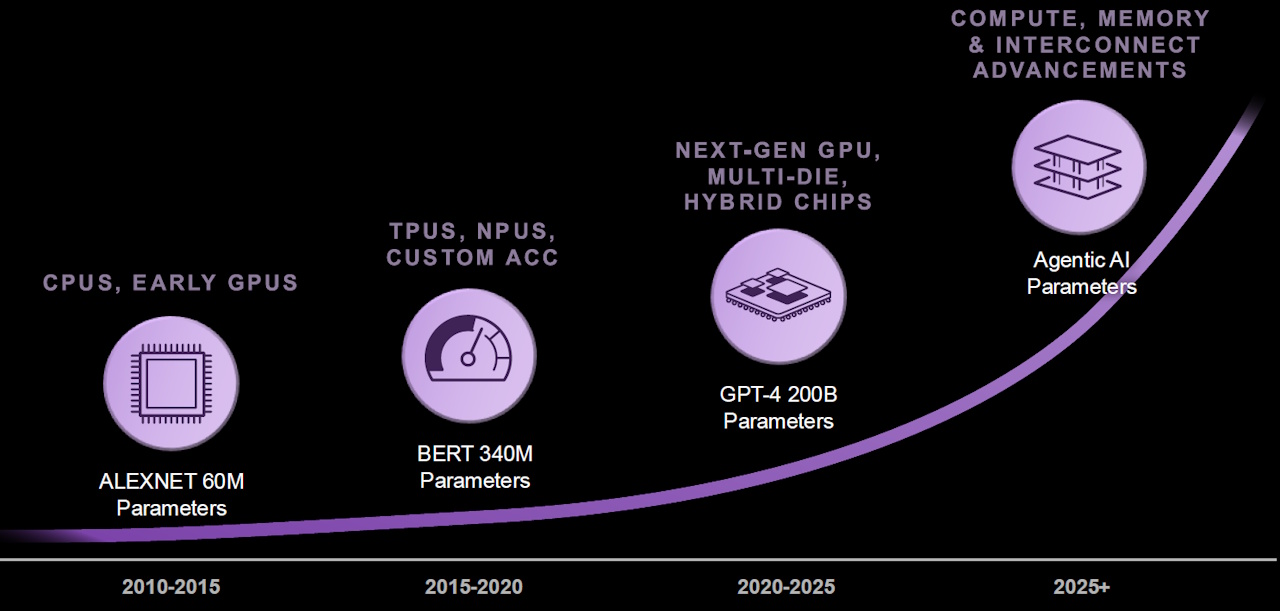

Returning to the evolution of AI, just to make sure we’re all tap dancing to the same skirl of the bagpipes, perceptive AI senses and interprets the real world using vision, audio, and other sensor inputs; generative AI creates new content—text, images, audio, video, and code—based on patterns learned from data; and agentic AI acts autonomously to achieve multi-step goals, combining reasoning, planning, and the use of tools. Bearing this in mind, the following image provides an easy-to-understand overview of the parameter problem (I like easy-to-understand).

If you thought generative AI was bad… (Source: Synopsys)

Let’s start with AlexNet, a perceptive AI in the form of a convolutional neural network that takes in visual data and recognizes what it’s looking at (e.g., “cat,” “dog,” “teapot”). That is, it perceives and interprets the world, but no generation is involved. This revolutionized the computer vision industry when it was introduced back in the mists of time we used to know as 2012, but it had “only” 60 million parameters.

Now consider BERT, which is primarily a perceptive AI, but with a toe or two in the generative AI world. Introduced in 2018, BERT is a transformer-based language model trained to understand context in text and perform tasks like classification, question answering, sentiment analysis, and entity recognition (i.e., understanding language). BERT is not generative in the modern sense; it uses masked language modeling, which looks generative, but its purpose is better comprehension, not open-ended generation. But the important point to note here is that BERT, which trailed AlexNet by only six years, boasted 340 million parameters.

Next, let’s turn our “attention” (there’s a pun here for those in the know) to generative AI in the form of GPT-4, which was introduced in 2023, just five years after BERT. This little scamp flaunts a reported 200 billion parameters (OpenAI has not published an official count). And now we are another three years further on, with agentic AI applications sprouting all around us like metaphorical mushrooms.

Would you care to guess how many parameters we’re talking about now? (I know I wouldn’t.)
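While we ponder that, the numbers quoted above can at least be turned into growth multiples. The following is a small sketch, not anything from the keynote. The helper name `growth_steps` is my own, and the parameter counts are simply the ones given in this column. It shows a jump of roughly 6x from AlexNet to BERT, followed by a jump of nearly 600x from BERT to GPT-4.

```python
# Parameter counts and introduction years as quoted in this column.
models = [
    ("AlexNet", 2012, 60e6),
    ("BERT", 2018, 340e6),
    ("GPT-4", 2023, 200e9),
]


def growth_steps(entries):
    """Return (from, to, overall multiple, multiple per year) for consecutive models."""
    steps = []
    for (name_a, year_a, params_a), (name_b, year_b, params_b) in zip(entries, entries[1:]):
        multiple = params_b / params_a
        per_year = multiple ** (1.0 / (year_b - year_a))
        steps.append((name_a, name_b, multiple, per_year))
    return steps


steps = growth_steps(models)
for name_a, name_b, multiple, per_year in steps:
    print(f"{name_a} -> {name_b}: {multiple:,.0f}x overall, about {per_year:.1f}x per year")
```

The per-year figure is just the overall multiple spread evenly across the intervening years, so treat it as a rough indication of pace rather than a measured trend.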

What really struck me as Abhijeet walked through his keynote presentation is that multi-die design isn’t just “big chip design with extra steps”—it’s a fundamentally different problem space. You’re no longer optimizing a single piece of silicon; you’re orchestrating an entire system of dies, each potentially built on different process nodes, stitched together with high-speed die-to-die links, and wrapped in increasingly exotic packaging technologies. The number of decision points explodes—partitioning, connectivity protocols, packaging choices, thermal strategies, power delivery, reliability, security—you name it. And the kicker is that many of these decisions need to be made early, when the cost of getting them wrong is at its highest, and when the sum of what you know is at its lowest.

This is where Synopsys is leaning hard into AI—not as a bolt-on, but as an integral part of the design flow. As Abhijeet explained, traditional iterative refinement (set constraints, run tools, analyze results, rinse and repeat) simply doesn’t scale when you’re navigating a vast, multidimensional design space. By contrast, Synopsys’ reinforcement learning–based technologies effectively learn the landscape, guiding designs toward near-optimal solutions with far fewer iterations. Whether it’s optimizing power, performance, and area (PPA), accelerating verification closure, or even tackling thorny problems like die-to-die signal integrity, the results are compelling—faster runtimes, better quality of results, and less reliance on a handful of “wizard-level” engineers to make the magic happen.

And then there’s multiphysics (Q: “Have you got the multiphysics?” A: “No, I always walk this way!”), which turns the dial up to eleven. In a multi-die world, you don’t get to think about electrical, thermal, and mechanical effects in isolation—they’re all intertwined. Running full-fidelity simulations across these domains is computationally brutal, which is why many teams do it sparingly, often late in the flow. Synopsys is using AI to change that equation, enabling faster solvers, smarter abstractions (such as reduced-order models and digital twins), and earlier insights into system behavior. The goal is clear: bring signoff-quality thinking upstream so you can make better decisions sooner and avoid expensive downstream rework.

All of which brings us neatly to Synopsys Converge, which took place just a couple of weeks ago as I pen these words. This is where the company pulled back the curtain on what comes next: agentic AI. If generative AI gave us copilots, agentic AI gives us teammates. Synopsys’ AgentEngineer technology introduces orchestrated, multi-agent workflows in which specialized AI “engineers” collaborate to tackle complex design tasks—generating RTL from specifications, building testbenches, running verification, and iteratively refining results toward defined goals.

This isn’t just about speeding up individual steps; it’s about rethinking the entire flow. Instead of a human manually shepherding each stage, you have a coordinated system that can plan, act, evaluate, and adapt, much like a team of experienced engineers working in concert. Early results are already impressive, with productivity improvements reported in the 2X-5X range for complex SoC design tasks. More importantly, these agentic workflows are being designed to integrate with existing tools, data, and customer environments, making them a natural extension of today’s design ecosystems rather than a disruptive replacement.

At the same time, Synopsys is advancing its vision of system-level engineering through initiatives such as its Electronics Digital Twin (eDT) platform, which integrates silicon design, software development, and full-system validation in a unified, cloud-enabled environment. By enabling teams to develop, test, and validate systems long before physical hardware exists, digital twins complement agentic AI by providing the virtual playground in which these intelligent workflows can operate.

And so we come full circle. At the beginning of this column, we discussed two interdependent forces: AI driving the need for multi-die systems, and the engineering of those systems demanding AI in turn. What Synopsys is doing—across chiplet design, multiphysics analysis, digital twins, and now agentic AI—is to bring all these forces into alignment.

Multi-die systems provide the raw computational fabric required for the next generation of AI, while AI—evolving from assistive to generative to fully agentic—provides the intelligence needed to design, optimize, and validate those systems. It’s a virtuous (and slightly dizzying) cycle in which each feeds the other, accelerating innovation on both sides.

To put it another way, we’re entering an era in which the tools we use to design our machines are becoming as sophisticated as the machines they are designing—and that’s when things start to get really interesting.