“When a dog bites a man, that is not news, because it happens so often. But if a man bites a dog, that is news.” – attributed to at least three journalists and publishers including UK publisher Alfred Harmsworth, John B. Bogart (editor, New York Sun), and Charles Anderson Dana.

National Highway Traffic Safety Administration (NHTSA) data shows that 37,461 people were killed in 34,436 motor vehicle crashes in the US in 2016. That’s an average of 102 deaths attributable to car crashes every 24 hours or about four per hour, every hour of every week of every month. Despite the carnage, most of us get into our cars every day, turn the key (or push the button) to start the car, and head out into the mean streets with not a second thought of our possible impending doom.
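Those per-day and per-hour averages are easy to check. Here is a minimal Python sketch using only the NHTSA total quoted above; the variable names are mine, and the 366-day divisor assumes the whole 2016 leap year is counted.

```python
# Sanity check of the averages quoted above.
deaths_2016 = 37_461   # NHTSA total for 2016, as quoted
days_in_2016 = 366     # assumption: 2016 was a leap year, so 366 days

deaths_per_day = deaths_2016 / days_in_2016
deaths_per_hour = deaths_per_day / 24

print(f"{deaths_per_day:.0f} deaths per day")    # roughly 102
print(f"{deaths_per_hour:.1f} deaths per hour")  # a little over four
```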

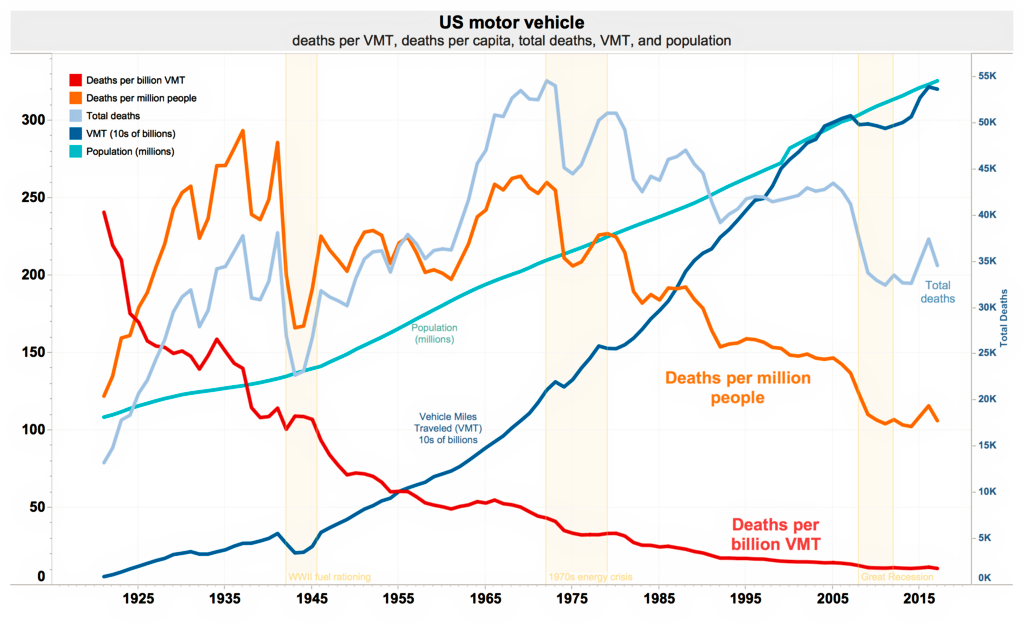

Perhaps that’s because the number of deaths is minuscule compared to the number of passenger hours in vehicles every year. NHTSA measures passenger hours in terms of vehicle miles traveled (VMT), and the number of deaths per VMT has steadily dropped since… well, since General Motors and Ford were founded. Even the total number of crash-related deaths in the US has dropped since 1975, despite the rapid growth in the number of vehicles on the road, until that trend reversed in 2015. (More on that later.)

Here’s NHTSA’s graph, telling all:

So people dying in car crashes is not news, because it happens every day. Unless those crashes are caused by self-driving cars.

A Series of Unfortunate Accidents

In 2018, at least two of those deaths will be connected to self-driving vehicles, thanks to two very unfortunate accidents. One such accident took place at night on March 18. It occurred on an uncrowded but dark stretch of divided highway in Tempe, Arizona. A special Uber-equipped Volvo XC90 collided with a woman walking her bicycle across the road. She was subsequently declared dead. The Uber vehicle was driving autonomously.

In a Tweet, the Tempe police department released video from the car’s dash cam recorded just prior to and during the accident. The dash cam simultaneously recorded the view of the road ahead and an interior view of the safety driver. (You’ll find all sorts of copies of this video from various news agencies posted to YouTube.) The dash cam footage shows that the pedestrian was in shadow as she crossed the street and that she becomes visible in the video only one second or so before the car hits her. The car does not appear to slow in the video until after the collision, and it appears that the impact caused the safety driver to finally look up at the road from what appears to be a cell phone or tablet held at lap level.

(You’ll have a hard time convincing me that the spike in collision-caused deaths since 2015 that appears on NHTSA’s graph is not caused by cell phone-induced driver distraction. I see way too much of it every day on the streets of Silicon Valley to believe otherwise.)

According to a March 29 article in the San Jose Mercury News, the attorney for the husband and daughter of the Tempe crash victim has said that the “matter has been resolved.”

On March 23, less than a week after the Arizona incident, a Tesla Model X SUV belonging to Walter Huang was negotiating the connector ramp between Highways 85 and 101 in Mountain View, California. These are both high-speed divided roads. Huang was behind the wheel when the car slammed into a concrete divider on the ramp. The force of the impact tore the entire front end off of the Tesla, all the way back to and including the dashboard. Huang was killed in the crash.

At first, it was not clear whether this accident was the result of human error or machine error. However, after analyzing data saved in the Model X SUV’s black-box recorder, Tesla released the following information in a March 30 blog post:

“In the moments before the collision, which occurred at 9:27 a.m. on Friday, March 23rd, Autopilot was engaged with the adaptive cruise control follow-distance set to minimum. The driver had received several visual and one audible hands-on warning earlier in the drive and the driver’s hands were not detected on the wheel for six seconds prior to the collision. The driver had about five seconds and 150 meters of unobstructed view of the concrete divider with the crushed crash attenuator, but the vehicle logs show that no action was taken.”

The same Tesla blog post also says:

“In the US, there is one automotive fatality every 86 million miles across all vehicles from all manufacturers. For Tesla, there is one fatality, including known pedestrian fatalities, every 320 million miles in vehicles equipped with Autopilot hardware. If you are driving a Tesla equipped with Autopilot hardware, you are 3.7 times less likely to be involved in a fatal accident.”

Fixes: Some will be Easier than Others

These unfortunate and fatal accidents indicate a number of engineering failures. Some will be easier to rectify. Some will be darn hard to fix.

Let’s start with the easy stuff. The Uber-modified Volvo XC90 automobile reportedly bristles with sensors including seven video cameras, ten radar sensors, and a lidar. The lidar appears to be a Velodyne HDL-64E sensor with 360-degree coverage and a 120-meter range. Based on photos of Uber’s roof-mounted sensor package, at least three of the video cameras face forward to monitor the situation in front of the vehicle. According to an Uber infographic, the radar units provide 360-degree surrounding coverage for obstacle detection.

With that sort of sensor firepower, the Uber-modified Volvo should have stopped short. Information from any of these sensors should have been able to prevent this accident long before the collision occurred. Lidar and radar are active sensors. They emit energy (light or microwaves) and detect any energy reflected back by potential obstacles. Radar and lidar are unimpaired by darkness. The dashcam video from the Volvo suggests that the vehicle’s visible-light video cameras might have been impaired by the darkness, but several good Samaritans who drove the same stretch of road in Tempe at night after the accident have posted videos shot with cellphone cameras that suggest that the road’s not as dark as the Volvo’s dashcam portrays.

Something appears to have gone very wrong between the collection of the sensor data and its use to stop the car. That’s a comparatively easy thing for engineers to find and fix.

To Be or Not to Be (Paying Attention)

At this point, it’s important to remember that Uber’s autonomous vehicles are still being tested and improved. They’re in beta. They need to be tested in real-world situations, and it’s unfortunate that these tests put people at some level of risk. However, people are also at some level of risk just by getting into their cars and driving themselves to work, as the NHTSA data above proves.

And that brings us to the much harder problem. In both the Uber and Tesla accidents, there was clearly some level of human failure. In the case of the Uber accident in Tempe, the safety driver appears not to have been paying attention. In the moments leading up to the collision, the dashcam video shows that the safety driver is not providing additional safety.

In the case of the Tesla crash, the black box recorder data from the doomed Model X SUV shows that the vehicle knew there was some sort of a problem because it reportedly tried to get the human driver to take control for several seconds before the accident occurred. The black box data indicates that the human driver’s hands were not on the wheel for at least six seconds before the fatal crash. This is one of the most common types of auto accidents in the United States.

A March 27 Tesla blog post states:

“Our data shows that Tesla owners have driven this same stretch of highway with Autopilot engaged roughly 85,000 times since Autopilot was first rolled out in 2015 and roughly 20,000 times since just the beginning of the year, and there has never been an accident that we know of. There are over 200 successful Autopilot trips per day on this exact stretch of road.”

In both cases, for the Uber and Tesla vehicles, the human driver apparently did nothing to prevent the crash. This should not be a surprise. Drivers are alert precisely because they are driving. The act of piloting a vehicle through traffic helps to keep a driver alert. When the autonomous driving controls take over, it’s inevitable that people behind the wheel will eventually be distracted. They might even doze off from boredom. It’s human nature.

For military jet fighter pilots, the answer is rigorous training. That’s not going to work for the billions of automobile drivers on the planet. A few days of driving around Silicon Valley firmly establishes that there are plenty of human drivers on the road today who have forgotten a lot of the meager driver training they may once have gotten. They don’t signal. They dive bomb across several lanes of traffic to catch an exit in time. They weave in traffic and drive 20 MPH below the speed limit while checking social media or email and texting. I don’t see driver training getting rigorous enough to fix the human condition in the short- or medium-term future.

One technological fix for this problem that will most certainly fail is for the autonomous car to measure driver alertness. Often, autonomous driving systems rely on an interior video camera aimed at the person behind the steering wheel. If the eyes of the person in the driver’s seat are pointed straight ahead at least most of the time, the inference is that it’s likely the person is paying attention to road conditions. However, this metric infers driver alertness. It by no means guarantees that the driver is sufficiently alert to prevent an accident.

Safe at any speed?

Autonomous cars need to be safe enough to drive themselves. Period.

Are they already?

It depends on your definition of “safe.” In the aftermath of the Tempe accident, Uber suspended its autonomous car program around the world; Nvidia halted public tests of its hardware in autonomous vehicles (including Uber’s); and Toyota also temporarily suspended its autonomous vehicle program. It appears that Uber’s, Nvidia’s, and Toyota’s answers to this question are all “No.” The cars are not yet safe enough to release to the public.

Tesla is in a different situation entirely. Tesla’s vehicles with the Autopilot feature are already being sold to the public and have been for years. Tesla first offered the Autopilot feature on October 9, 2014. Since then, some of Tesla’s vehicles have been involved in fatal crashes while in Autopilot mode but Tesla’s own data suggests that Tesla’s vehicles are safer than human drivers under certain conditions.

In that same March 30 blog, Tesla wrote:

“Over a year ago, our first iteration of Autopilot was found by the U.S. government to reduce crash rates by as much as 40%. Internal data confirms that recent updates to Autopilot have improved system reliability…

The consequences of the public not using Autopilot, because of an inaccurate belief that it is less safe, would be extremely severe. There are about 1.25 million automotive deaths worldwide. If the current safety level of a Tesla vehicle were to be applied, it would mean about 900,000 lives saved per year.”

According to Tesla’s March 30 blog post quoted above, the Tesla Autopilot is far less likely to kill you than you are, with one obvious caveat. There aren’t nearly enough Tesla vehicles on the road to make this generalized statement with statistical confidence.
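The arithmetic behind Tesla’s quoted figures can be reproduced in a few lines. This is only a sketch of how the numbers relate to each other, not a validation of them: the variable names are mine, and the step from a US per-mile ratio to worldwide lives saved assumes the same ratio would apply everywhere, which is Tesla’s implied assumption and not something the data establishes.

```python
# Figures as quoted from Tesla's March 30 blog post.
us_miles_per_fatality = 86e6      # all vehicles, all manufacturers (US)
tesla_miles_per_fatality = 320e6  # vehicles equipped with Autopilot hardware
worldwide_deaths_per_year = 1.25e6

# How many times farther a Tesla travels per fatality.
risk_ratio = tesla_miles_per_fatality / us_miles_per_fatality

# Assumption: every vehicle worldwide achieves the Tesla rate,
# so deaths fall by the same factor.
lives_saved = worldwide_deaths_per_year * (1 - 1 / risk_ratio)

print(f"risk ratio: {risk_ratio:.1f}x")
print(f"implied lives saved per year: {lives_saved:,.0f}")
```

The ratio works out to about 3.7 and the implied savings to a bit over 900,000 per year, which matches the figures in the post. It says nothing about whether the sample of Tesla miles is large enough to trust.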

Bottom line: There are still some mighty big engineering obstacles to overcome with autonomous vehicles before the general public will consider them “safe,” but the advent of widespread autonomous driving at some time in the future appears inevitable.

I, for one, welcome our new vehicular overlords (once they’re improved).

Unless they start using Facebook.

After attending the competitors’ conference and registering my DARPA Grand Challenge team in 2003, we went hard to work with the hope of solving a difficult sensor and navigation processing problem. The affordable sensors and processing power (even including a heavy FPGA front end) didn’t even come close to human perception … off by several orders of magnitude. In the end we chose not to submit the application paperwork. There were strong reasons for this: a course and mandated speeds that were tough even for humans. Look at the rules and route description: http://archive.darpa.mil/grandchallenge04/conference_la.htm

That was relatively safe compared to self-driving cars on streets and highways where people are.

The LIDAR Uber is using has a typical left/right angular resolution of about 0.1152 to 0.4608 degrees over a 360-degree field at 10 fps. The typical up/down angular resolution for the LIDAR, however, is about 0.4 degrees over a narrow 26.8-degree field. The LIDAR can double its left/right angular resolution by dropping to 5 fps, or decrease it by 50% at 15 fps. The human eye has a left/right/up/down angular resolution of about 0.02 degrees at something faster than 12 fps, up to a best-case perception of around 90 fps for certain trained activities. All said and done, the human eye is something like 100x better than this LIDAR for normal things, and as much as several orders of magnitude better for specialized events.

Why is this important? It comes down to how many pixels it takes to recognize an object. At 100 ft, a single pixel is about a 6″ square with a 0.28-degree angular resolution, and about a 0.5″ square at your eye’s 0.02-degree angular resolution. That is 144 times as many pixels over the same area.
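For anyone who wants to check the pixel sizes, here is a rough Python sketch of the geometry. The function name is made up for illustration, and it treats each pixel as a square whose side is the distance times the tangent of the angular resolution, which is a simplification.

```python
import math

def pixel_footprint_inches(distance_ft, resolution_deg):
    """Side length, in inches, of one square 'pixel' at a given distance."""
    return distance_ft * 12 * math.tan(math.radians(resolution_deg))

lidar_px = pixel_footprint_inches(100, 0.28)  # just under 6 inches
eye_px = pixel_footprint_inches(100, 0.02)    # a bit over 0.4 inches

# Pixels per unit area scale with the square of the linear ratio.
pixel_ratio = (lidar_px / eye_px) ** 2

print(f"LIDAR pixel: {lidar_px:.2f} in, eye pixel: {eye_px:.2f} in")
print(f"eye resolves about {pixel_ratio:.0f}x as many pixels per area")
```

With the rounded 6″ and 0.5″ figures the ratio is (6 / 0.5)² = 144. Without rounding, the same angles give a ratio closer to 196, so the rounded number is, if anything, conservative.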

Our brains process images AND rational decisions at a significantly higher rate than the feeble AI in these cars.

Unless someone is a psychopath, they will make critical decisions that include restrictions not to harm other people when multiple choices are presented to avoid dangerous situations. The computer in the car probably doesn’t consider such ethical issues when navigating to avoid a collision.

When people ask how safe are self driving cars, I generally say about as safe as a geriatric psychopath with cataracts and dementia, because when it’s all said and done, that is about what their skill level is.

No matter how you slice it, any sane engineer knows that 90% of basic functionality takes the first 10% of the project development cycle. Finishing the project to near 99% functionality will take another 90% of the development cycle. The last 1% will take another 3x of the 99% schedule.

So given that self-driving cars are maybe several years along the development path, and less than 90% complete, they will probably be fully functional (and reasonably safe as a human replacement) in something longer than another 10 years. The Mythical Man-Month describes hard limits on progressing faster.

How many more people will die? A lot more than either Uber or Tesla projects.

Why is this LIDAR sensor resolution important?

At 45 mph it takes about 100 feet to stop a car. At the LIDAR’s angular resolution, a small child facing it head-on will be less than a dozen pixels at 100 ft, and probably not recognizable unless perfectly aligned within a very tight pixel mask … 3″ to either side, and the limbs will blur into two pixels and present a blob likely to blend into the background image. If the child is sideways, there are not enough pixels.

Now is that safe?

And there is the Golden Handcuffs problem … these engineers are accepting high industry salaries for saying yes they can solve the problems … but are they ethical enough to walk away before killing more people and kids?

Uber and the police did not release the LIDAR images for the woman killed a few weeks ago.

But taking a guess, the bicycle will not even show up on the LIDAR because the cross sections of the tires, spokes, frame, etc. are all a tiny fraction of the pixel resolution and were probably blurred into the background return signal since their cross section is so minimal … effectively transparent at this resolution.

The woman was sideways to the LIDAR with minimal cross section, and only a few pixels wide/tall at the stopping distance of the car.

This is where the human eye’s pixel resolution makes all the difference between safe, and the poor prototype that self driving car developers want the public to believe is safe.

On the time for a driver to react to a car’s request to assume control … https://www.nytimes.com/2016/07/08/automobiles/wheels/makers-of-self-driving-cars-ask-what-to-do-with-human-nature.html

Quote from the NYTimes article above, on the time drivers take to assume control: “Experiments conducted last year by Virginia Tech researchers and supported by the national safety administration found that it took drivers of Level 3 cars an average of 17 seconds to respond to takeover requests. In that period, a vehicle going 65 m.p.h. would have traveled 1,621 feet — more than five football fields.”

This is what killed the Tesla driver last week, who died shortly after giving the Tesla control.
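The distance in that quote is straightforward to reproduce. A minimal Python sketch, with a hypothetical helper name and assuming a 300-foot football field (goal line to goal line, no end zones):

```python
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600
FOOTBALL_FIELD_FT = 300  # assumption: 100 yards, end zones excluded

def takeover_distance_ft(speed_mph, takeover_s):
    """Distance covered at constant speed while the driver takes over."""
    feet_per_second = speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR
    return feet_per_second * takeover_s

distance_ft = takeover_distance_ft(65, 17)
fields = distance_ft / FOOTBALL_FIELD_FT

print(f"{distance_ft:,.0f} ft, about {fields:.1f} football fields")
```

At 65 mph and 17 seconds this comes to roughly 1,621 feet, in line with the article’s figure.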