The propagation of unknown (X) states has become a more urgent issue with the move toward billion-gate SoC designs. The sheer complexity and the common use of complex power management schemes increase the likelihood of an unknown ‘X’ state in the design translating into a functional bug in the final silicon. This article describes a methodology that enables design and verification engineers to focus on the X states that represent a real risk, and to set aside those that are artifacts of the design process. The goal is to reduce project time, particularly time spent in simulation, and overcome the limitations at both the RTL and gate level.

X proliferation

Billion-gate designs have millions of flip-flops to initialize. Many of the IP blocks used in such designs also have their own initialization schemes.

It is neither practical nor desirable to wire a reset signal to every single flop. It makes more sense to route resets to an optimal minimum set of flops, and initialize the rest through the logic, but this is a significant RTL coding challenge.

The analysis of any system with such a reset and initialization scheme is bound to identify many X’s. The issue is how to know which ones matter, because dealing with unnecessary X’s wastes time and resources. Missing an X state that does matter, however, increases the likelihood of late-stage debug, insidious functional failures and, ultimately, respins.

Today’s power schemes further complicate the analysis of X issues. Blocks that are subject to power management have additional flops to retain the state across power-state transitions. Any analysis of their reset structures must be undertaken dynamically. Interaction between blocks in different power states must also be considered.

Two simulation phenomena need to be resolved.

- X-optimism is primarily associated with RTL simulation, and is caused by the limitations of HDL simulation semantics. It occurs when a simulator converts an X state into a 0 or a 1, creating the risk that an X causes a functional failure to be missed in RTL simulation.

- X-pessimism is primarily associated with gate-level simulation of netlists (although it also can occur in RTL simulation). As its name suggests, it happens when legitimate 0s or 1s are converted into an X state. This can lead to precious debug resources being directed toward unnecessary effort. Additionally, after synthesis has done its work, debug at the gate level is more challenging than in RTL.
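
The two effects can be illustrated with a small model of 4-state evaluation. The Python sketch below is only an illustration under stated assumptions: the function names are hypothetical, Z is left out, and the multiplexer is an assumed example that does not come from any particular design. It models an RTL `if`/`else` that treats an unknown condition as false, and an AND-OR gate implementation of the same multiplexer.

```python
X = "x"  # unknown value in a 4-state simulation (Z is omitted in this sketch)

def v_not(a):
    return X if a == X else 1 - a

def v_and(a, b):
    # A controlling 0 forces the output to 0 even when the other input is X.
    if a == 0 or b == 0:
        return 0
    return X if X in (a, b) else 1

def v_or(a, b):
    # A controlling 1 forces the output to 1 even when the other input is X.
    if a == 1 or b == 1:
        return 1
    return X if X in (a, b) else 0

def rtl_if_else_mux(sel, a, b):
    # Models `if (sel) y = a; else y = b;`. An X condition is treated as
    # false, so the else branch is taken (X-optimism).
    return a if sel == 1 else b

def gate_mux(sel, a, b):
    # Models the AND-OR netlist (sel & a) | (~sel & b).
    return v_or(v_and(sel, a), v_and(v_not(sel), b))

def silicon_outcomes(a, b):
    # In silicon the select is really 0 or 1, so collect both possible outputs.
    return {rtl_if_else_mux(sel, a, b) for sel in (0, 1)}

# X-optimism: simulation reports a clean 1, but silicon could produce 0 or 1.
print(rtl_if_else_mux(X, 0, 1), silicon_outcomes(0, 1))

# X-pessimism: both data inputs are 1, so silicon always produces 1,
# yet the gate-level model reports X.
print(gate_mux(X, 1, 1), silicon_outcomes(1, 1))
```

In the first case the simulator hides a real ambiguity. In the second it reports an unknown that cannot occur in hardware.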

Stuart Sutherland’s “I’m Still In Love With My X, But Do I Want My X To Be An Optimist, A Pessimist, Or Eliminated?” paper at DVCon was an excellent summary of the good, the bad and the ugly with X’s in a design.

Sutherland began with a quick review. X is a digital simulation-only value that can mean:

- Uninitialized

- Don’t Care

- Ambiguous

It has no reality in silicon. Sutherland identified 11 possible sources of X’s:

- Uninitialized 4-state variables

- Uninitialized registers and latches

- Low-power logic shutdown or power-up

- Unconnected module input ports

- Multi-driver conflicts (bus contention)

- Operations with an unknown result

- Out-of-range bit-selects and array indices

- Logic gates with unknown output values

- Set-up or hold timing violations

- User-assigned X values in hardware models

- Testbench X injection

In simulation, X-optimism occurs when uncertainty on an input to an expression or gate resolves to a known result instead of an X. This can hide bugs. If…Else and Case decision statements are X-optimistic and do not match silicon behavior. Sutherland cites nine different constructs and statement types that can be X-optimistic:

- If…else statements

- Case statements without a default-X assignment

- Casex, casez, case… inside statements

- Bitwise, unary reduction, and logical operators

- And, nand, or, nor primitives

- Array index with X or Z bits for write operations

- Net data types

- Posedge and negedge edge sensitivity

- User-defined primitives

X-pessimism exists when uncertainty on an input to an expression or gate always resolves to an unknown result (X). It does not hide design ambiguities but again does not match silicon. It can make debug difficult, propagate X’s unnecessarily and cause simulation lock-up. Sutherland cites nine different operators and assignments that have X-pessimistic behavior:

- If…else statements with X assignment

- Conditional operator

- Case statements with X assignments

- Edge-sensitive X pessimism

- Bitwise, unary reduction, and logical operators

- Equality, relational, and arithmetic operators

- Bit-select, part-select, array index on right-hand side of assignments

- Shift operations

- User-defined primitives

While it is possible to use a two-state simulator that models only 0 and 1, verification then cannot check for ambiguity, and that is an important capability to have. Sutherland says that the X is your friend and you should live with it.

Methodology Principles

Any methodology to handle X issues efficiently must focus on solving the problem in RTL, using tools and methodologies that can be applied to RTL simulation. Gate-level simulation is slow and tends toward X-pessimism. Any real bugs uncovered at the gate level are more difficult and time-consuming to fix than in RTL.

Assuming a focus on RTL, the next desirable feature of the methodology would be that it addresses the different skills and requirements of design engineers and verification engineers.

In discussing X issues with customers, Real Intent concluded that while both types of engineers share the same overall concerns – avoiding X-propagated functional bugs and catching them as early as possible – they have different perspectives.

Many design engineers now work with strict guidelines aimed at achieving X-accurate coding. This is a delicate task that requires further automation, but it can catch many X issues early. The designer’s priority is to know where the X-prone regions of the RTL may be.

In contrast, a verification engineer typically thinks about controlling the amounts of X-optimism and X-pessimism at each stage of the verification flow.

Finally, the methodology needs to accept that there is no single practical technology that will deliver the quickest and most accurate X-analysis in all cases. For example, formal analysis techniques such as model checking and symbolic simulation can be a big help, but they face capacity challenges (such as memory usage).

Therefore, a successful methodology to handle X propagation must combine several techniques balanced to deliver thorough results in the best available turnaround time.

Methodology

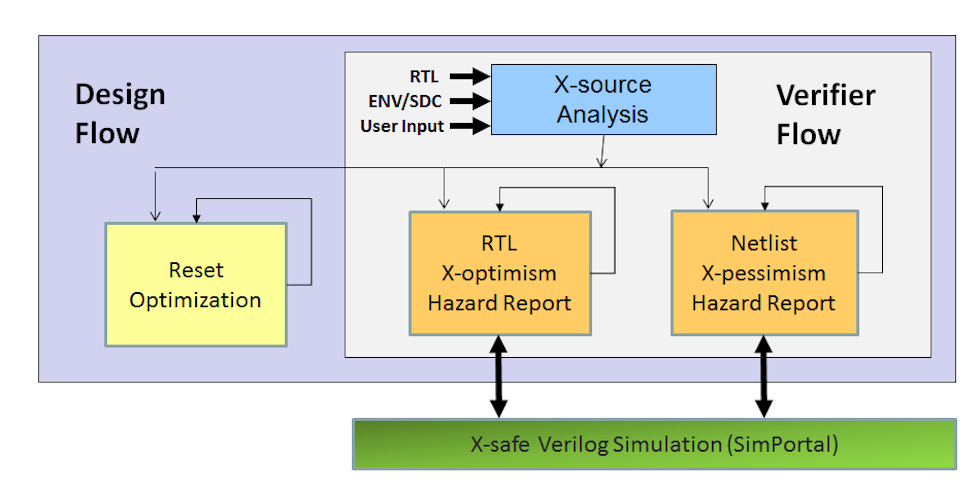

Figure 1: The methodology Real Intent developed based on its Ascent XV tool suite.

The methodology depicted tries to capture relevant X issues as early as possible in the design flow. It has separate design-centric and verification-centric phases.

Design-centric flow

The first thing a designer wants is to minimize the number of X-sources that exist. The methodology first identifies where X’s might originate. Appropriate structural analysis looks at the characteristics of the RTL to identify potential sources. Lint tools can flag hazards such as explicit X-assignments, signals within a block that are used but not driven, out of range assignments, and flops that don’t have a reset signal, to name a few.

However, structural analysis cannot determine whether a real X-issue exists, and does not include any sequential analysis. Our methodology uses sequential formal analysis to determine the baseline list of uninitialized flops, and then suggests additional flops that, if reset, would lead to complete initialization.
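
The idea of initializing flops through the logic can be sketched with a simple ternary simulation. The Python example below is a toy stand-in, not the sequential formal analysis used in the methodology: the `FLOPS` design, the function names, the greedy selection and the choice of 0 as the reset value are all assumptions made for illustration. Reset is held asserted, unknown flops start as X, and the design is clocked until nothing changes.

```python
X = "x"  # unknown value

def t_and(a, b):
    if a == 0 or b == 0:
        return 0
    return X if X in (a, b) else 1

def t_or(a, b):
    if a == 1 or b == 1:
        return 1
    return X if X in (a, b) else 0

# Hypothetical design: flop name -> (reset value or None, next-state function).
FLOPS = {
    "valid": (0, lambda s: s["valid"]),                        # has a reset
    "busy": (None, lambda s: t_and(s["valid"], s["busy"])),    # cleared via logic
    "data": (None, lambda s: s["data"]),                       # only holds itself
    "out": (None, lambda s: t_or(s["data"], s["busy"])),       # depends on data
}

def uninitialized_flops(flops, max_cycles=None):
    """Clock the design with reset asserted and return flops that stay X."""
    state = {n: (X if rv is None else rv) for n, (rv, _) in flops.items()}
    for _ in range(max_cycles or len(flops) + 1):
        nxt = {n: (rv if rv is not None else fn(state))
               for n, (rv, fn) in flops.items()}
        if nxt == state:  # fixpoint reached, nothing more will resolve
            break
        state = nxt
    return sorted(n for n, v in state.items() if v == X)

def suggest_resets(flops):
    """Greedily pick extra flops to reset until every flop is initialized."""
    flops = dict(flops)
    suggested = []
    while True:
        remaining = uninitialized_flops(flops)
        if not remaining:
            return suggested
        # Try resetting each candidate to 0 and keep the one that leaves
        # the fewest flops unknown.
        best = min(remaining, key=lambda n: len(
            uninitialized_flops({**flops, n: (0, flops[n][1])})))
        flops[best] = (0, flops[best][1])
        suggested.append(best)

print(uninitialized_flops(FLOPS))  # baseline list of uninitialized flops
print(suggest_resets(FLOPS))       # extra resets that complete initialization
```

In this toy design `busy` needs no reset because `valid` clears it through the logic, while resetting `data` alone is enough to initialize `out` as well.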

The creation of the hazard report is therefore augmented by using formal techniques to result in a more precise list. The designer can then manage and respond to this list as appropriate.

Designers also want to identify which X-sources can propagate to X-sensitive constructs. This portion of the flow uses automation to trace X-source propagation through X-sensitive constructs. It then presents the results in a hazard report that focuses on the relevant constructs, using a number of static techniques in the simulation context.

Verification-centric flow

Customer conversations revealed that verification engineers tend to focus on X-optimism and X-pessimism in their efforts to manage X-propagation.

X-accurate modeling is used to address both, and existing simulation checkers then help to detect functional issues. This is done in a way that does not touch the user’s code and is easily integrated. The performance overhead of the X-accurate simulation is strictly controlled.
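
One common way to describe X-accurate behavior for a decision statement is to evaluate both branches when the condition is unknown and keep the result only if they agree. The Python sketch below shows that rule for a single-bit value. It is an assumption about what such semantics can look like, with a hypothetical function name, and is not a description of how any particular tool implements X-accurate modeling.

```python
X = "x"  # unknown value

def x_accurate_if(cond, then_val, else_val):
    """Evaluate `if (cond) y = then_val; else y = else_val;` without
    X-optimism or X-pessimism for a single-bit result."""
    if cond == X:
        # Unknown condition: the result is known only if both branches agree.
        return then_val if then_val == else_val else X
    return then_val if cond == 1 else else_val

print(x_accurate_if(X, 0, 1))  # branches differ, so the X is reported
print(x_accurate_if(X, 1, 1))  # branches agree, so no spurious X
print(x_accurate_if(1, 0, 1))  # known condition behaves as usual
```

With this rule the ambiguity that plain RTL semantics would hide becomes visible to existing checkers, while cases where the unknown condition cannot affect the outcome stay clean.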

Once an issue is discovered, the verification engineer needs further information to isolate the cause. For this, it is useful to know which signals were sensitive to X-optimism and which control signals had X’s.

The methodology uses the simulator’s assertion counters to track statistics for these signals. Monitors can then print a message the first time a signal is flagged as sensitive to X-optimism, which is useful for determining its root cause.

An important constraint here, though, is that the monitor must not slow simulation to a crawl by printing a message every time a flag is raised. The aim is to provide a readout so that the verification engineer knows where to use waveform analysis and trace a signal back to its source.
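
The count-everything, print-once behavior described above can be sketched as follows. This is a minimal Python illustration with hypothetical class, method and signal names. In a real flow the counting would be done by the simulator’s assertion counters rather than by code like this.

```python
from collections import Counter

class XOptimismMonitor:
    """Counts every flag but logs only the first one for each signal."""

    def __init__(self, log=print):
        self.counts = Counter()
        self.log = log

    def flag(self, signal, time):
        self.counts[signal] += 1
        if self.counts[signal] == 1:
            # First occurrence only: later hits just update the statistics.
            self.log(f"{time}: {signal} first flagged as sensitive to X-optimism")

    def report(self):
        # Signals ordered by how often they were flagged.
        return self.counts.most_common()

messages = []
monitor = XOptimismMonitor(log=messages.append)
for t in (10, 20, 30):
    monitor.flag("u_core.state", t)  # hypothetical hierarchical signal name
monitor.flag("u_dma.req", 40)        # hypothetical hierarchical signal name

print(messages)          # two messages, one per signal
print(monitor.report())  # full statistics for the end-of-run readout
```

The first-hit message gives the time at which to open the waveform, and the counters show which signals deserve attention first.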

This X-accurate modeling can be used at both the RTL and netlist levels although, again, the emphasis is on undertaking appropriate simulation at as high a level as possible.

Conclusion

Pragmatism and balance are as important as the power of the technologies used in addressing X-propagation in today’s complex designs.

The methodology outlined here targets a specific but important problem. It aims to ensure that design and verification engineers can focus their resources on avoiding and fixing bugs, and minimize design turnaround time.

Further information

A more detailed discussion of the Real Intent methodology discussed here and the various technologies available to combat X-propagation can be found here.

About the Author

Lisa Piper is a Technical Marketing Manager at Real Intent. She has been involved with using assertions in simulation-based verification, acceleration and formal verification. This included methodology work, active participation in standardization work, and product definition work. Prior to that, Lisa worked at Lucent Microelectronics and AT&T Bell Labs. She has a BSEE from Purdue University and MSEE from Ohio State University.
