There’s a battle shaping up as yet another entrant into the assertion synthesis field makes some noise this week.

We’ve kept an eye on assertion synthesis over the last year or so. Tools and methodology vendors lament that assertion-based verification has been slow to catch on. Some say that’s because it’s too hard to generate and work with assertions. Hence tools to make that easier.

Last summer we reported on NextOp’s entry into the fray. We also noted that their approach was qualitatively different from that of another player, Zocalo, in that Zocalo enables assertion synthesis before the design is done; NextOp generates assertions from RTL already written.

This week, Jasper is tossing their hat into the ring as well. And, unlike Zocalo and NextOp, Jasper not only generates, but also processes assertions using their formal technology.

Jasper joins NextOp in their approach to synthesis: they do it after the RTL has been created. To be specific, Jasper refers to this as property synthesis, since they can create assertions (generated at block outputs), constraints (generated at block inputs), and cover properties.

Now, it would be really nice if you could just toss your design to a tool and say, “Wake me when you’re done.” Problem is, the tools can only guess at what is going on in the design. They may be able to make some good guesses, but they’re only inferences, and, as the repository of knowledge of what’s really going on, you have to help the tool out.

So Jasper describes a basic three-step process for getting properties going.

The first step involves gathering all known information about the design. Mostly this is the RTL, but you can bring the testbench and simulation waveforms into the picture as well, all of which help establish intent. From here you identify the RTL block signals and tell the tool which things you’d like to look at. Jasper refers to these as “points of interest” – POIs. These POIs are tracked throughout the tool as specific reference points.

Once the tool knows how the design is defined, you can then run ActiveProp to generate a set of candidate properties. You do this either by running the simulation in advance and then providing the results to ActiveProp, or you can actually use PLI to have ActiveProp “watch” the simulation as it happens.

What’s going on here is that ActiveProp looks at the design and then at the simulation results and decides, “OK, based on what I’m seeing, here’s what I think the overall design intent is.”

This is a critical step. The whole idea of formal verification is that you can prove things without having to exercise every possible scenario in a simulation. If you’re testing a counter, it’s easier to prove that n always leads to n+1 than it is to run through every possible value of n to show that it always works. So if, between the RTL and the behavior during simulation, the tool can see that there are enough cases of n leading to n+1, it can generalize the higher-level rule. From that rule you can prove the general case rather than simulating the missing specific cases.

A particular simulation run may also be misleading. For example, it may show a counter only increasing its count. The inference there could be either that it should be an up-counter or that you’ve forgotten to test down-counting. Jasper allows subsequent simulations to supplement the existing data set; you can use this to fill in holes and improve the quality of inference and extrapolation that the tool makes.

But, of course, the tool is making assumptions. Ordinarily, when you and I assume, we make the proverbial donkey out of you and me. But when you have a tool doing the assuming, then only you are at risk of looking ass-inine. So you have to confirm the tool’s decisions.

Which is the third step in the process. The properties are all listed, and you can explicitly disposition them as “certified,” “uncertain,” and “don’t care.”

All of this is well and good, but, given an arbitrary design, a bunch of stimulus results, and a non-human reasoning machine with no a priori knowledge of design intent, you can imagine that the tool might imagine a huge number of different design characteristics that may or may not be relevant or important. It would then be up to you to wade through this morass to make sense out of it and to decide which things to keep and which to jettison.

So the ability for a tool to do a better job than its competitor of generating good properties would be a critical differentiator. The gold standard would be a tool that could infer the necessary and sufficient set of properties that were neither under- nor over-scoped. That, of course, is a tall order.

And it seems to me that this is where the battle lines will be drawn. Zocalo is different in that they’re mostly making manual property creation much easier, so it doesn’t have this issue. But because NextOp and Jasper infer properties from the design automatically, this could be a key point of competition. Jasper might argue that they have an edge because of their integration with their formal engines, but it’s entirely likely that users would sacrifice that integration if necessary for the sake of better properties or better formal. So Jasper can count on that only if they’ve done their homework both on quality of properties inferred and on formal analysis.

Jasper claims to have a high “signal-to-noise” ratio, suggesting that the vast bulk of the properties they generate are relevant and properly extrapolated. They also classify, rank, and merge properties to give a better starting point as you wade in to examine what ActiveProp generated.

Jasper also claims that ActiveProp can generate complex multi-cycle properties, which is a bit of a fuzzy claim. It’s one thing to generate properties that span a couple cycles; it’s much harder if the property deals with a complex protocol that has several signals interacting over the course of many cycles.

Multi-cycle coverage, then, can form another aspect of the quality of properties generated. Which leads to a potential challenge for users: how do you measure the overall quality of a set of properties? How would you benchmark two tools? This whole domain is a relatively amorphous blob, and it’s not clear that benchmark standards exist.

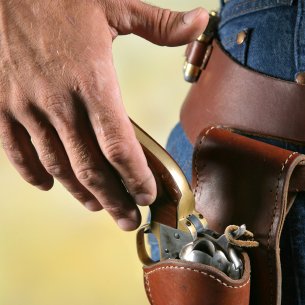

So, even as we watch the combatants’ hands hover by their holsters, loosening their figures and staring each other down, the rules of engagement are not at all clear. Perhaps they will materialize organically as the technology matures. If not, then, if history is any guide, these guys are likely to define their own self-serving ones, leaving it to users to figure out who the real winner is.